Google Percolator – global search jolt sans MapReduce comedown

The machine that brews the Caffeine

MapReduce reduced

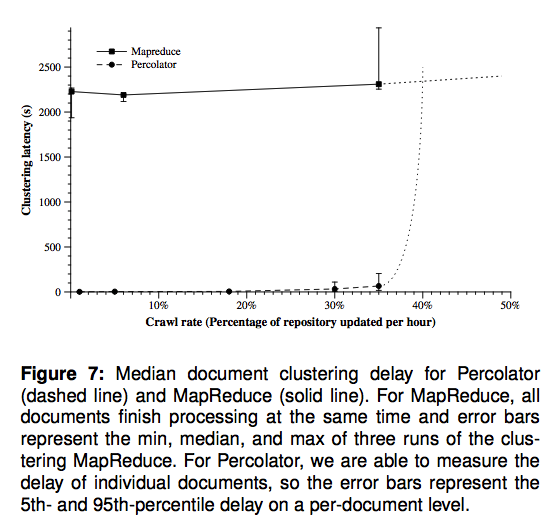

This worked well enough. But, the Google engineers say, it wasn't suited to rapidly updating the index. "Consider how to update that index after recrawling some small portion of the web. It’s not sufficient to run the MapReduces over just the new pages since, for example, there are links between the new pages and the rest of the web. The MapReduces must be run again over the entire repository, that is, over both the new pages and the old pages," they explain.

"Given enough computing resources, MapReduce’s scalability makes this approach feasible, and, in fact, Google’s web search index was produced in this way prior to the work described here. However, reprocessing the entire web discards the work done in earlier runs and makes latency proportional to the size of the repository, rather than the size of an update."

With the old system, the company crawled several billion documents each day and fed them, together with an epic collection of existing documents, through a sequence of roughly 100 MapReduces. Because the system was sequential, this meant that each document spent two to three days being indexed before it would actually turn up in Google's live search results.

Percolator slashes this delay by providing random access to the existing multi-petabyte index, letting Google make updates without reprocessing the entire repository. "Random access allows us to process documents individually, avoiding the global scans of the repository that MapReduce requires," the paper says. The system runs across a sea of machines, making vast numbers of changes in parallel with what the company calls ACID-compliant database transactions.
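To make the contrast concrete, here is a toy inbound-link index in Python. It is a sketch only, not Google's code: the `rebuild_index` and `update_index` functions, the in-memory dictionaries and the `.example` URLs are all assumptions standing in for the MapReduce pipeline and for Percolator's random-access store.

```python
from collections import defaultdict


def rebuild_index(repository):
    """Batch style: scan every document to recompute who links to whom."""
    inbound = defaultdict(set)
    docs_scanned = 0
    for url, links in repository.items():
        docs_scanned += 1
        for target in links:
            inbound[target].add(url)
    return inbound, docs_scanned


def update_index(inbound, repository, url, new_links):
    """Incremental style: touch only the entries affected by one recrawled page."""
    old_links = repository.get(url, set())
    for target in old_links - new_links:
        inbound[target].discard(url)   # link was dropped from the page
    for target in new_links - old_links:
        inbound[target].add(url)       # link was added to the page
    repository[url] = new_links
    return 1                           # documents processed


# Hypothetical three-page web.
repository = {
    "a.example": {"b.example"},
    "b.example": {"c.example"},
    "c.example": {"a.example"},
}

inbound, scanned = rebuild_index(repository)
processed = update_index(inbound, repository, "a.example", {"c.example"})
print(scanned, processed)  # work done: whole repository vs. one document
```

In the batch version the work grows with the size of the repository. In the incremental version it grows with the size of the update, which is the property the paper's authors say they were after.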

As Lipkovitz indicated, Percolator runs atop BigTable (Google's distributed database platform) and GFS (its distributed file system). Lipkovitz also explained that the system uses a new version of GFS known as "Colossus" or GFS2, but this is not explicitly discussed in the paper. (You can find more on GFS2 here).

Percolator uses the same basic interface as BigTable. Data is stored in BigTable rows and columns, with Percolator metadata stacked up in its own "special" columns. And it uses a modified version of the BigTable API, with BigTable operations wrapped in computations specific to Percolator.
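The idea of metadata living beside user data in the same row, with ordinary operations wrapped in extra bookkeeping, might look something like the following. This is a loose illustration under stated assumptions: the `WrappedTable` class, the `_meta:` prefix and the version counter are invented for the example and are not the columns or API the paper defines.

```python
META_PREFIX = "_meta:"  # hypothetical naming convention for the "special" columns


class WrappedTable:
    """Toy stand-in for a table API whose writes carry extra bookkeeping."""

    def __init__(self):
        self.rows = {}  # row key -> {column name -> value}

    def write(self, row_key, column, value):
        row = self.rows.setdefault(row_key, {})
        row[column] = value
        # The wrapped computation: bump a counter kept in a metadata
        # column of the same row (an invented example of such metadata).
        version_col = META_PREFIX + "version"
        row[version_col] = row.get(version_col, 0) + 1

    def read(self, row_key, column):
        return self.rows.get(row_key, {}).get(column)

    def user_columns(self, row_key):
        """Return only the application's columns, hiding the metadata."""
        return {
            col: val
            for col, val in self.rows.get(row_key, {}).items()
            if not col.startswith(META_PREFIX)
        }


table = WrappedTable()
table.write("a.example", "contents", "<html>v1</html>")
table.write("a.example", "contents", "<html>v2</html>")
print(table.user_columns("a.example"))
print(table.read("a.example", META_PREFIX + "version"))
```

The caller sees the same read-and-write shape as a plain table, while the wrapper quietly maintains its own columns in each row.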

Unlike BigTable, Percolator does multi-row transactions, and it offers a compute framework for executing code atop the database. This framework is built around what Google calls "observers". In essence, these provide a means of organizing the myriad transactions. "Programmers of an incremental system need to keep track of the state of the incremental computation," the paper says. "To assist them in this task, Percolator provides observers: pieces of code that are invoked by the system whenever a user-specified column changes."
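A rough sketch of the observer pattern described in that quote follows. It is a single-threaded toy, not Percolator's API: the `ObservedTable` class, its `observe` and `commit` methods and the two callbacks are hypothetical, and `commit` merely applies a batch of writes to several rows in one call without any of the locking or isolation a real transaction needs.

```python
from collections import defaultdict


class ObservedTable:
    """Toy table that runs registered callbacks when a watched column changes."""

    def __init__(self):
        self.rows = defaultdict(dict)       # row key -> {column -> value}
        self.observers = defaultdict(list)  # column -> [callback, ...]

    def observe(self, column, callback):
        self.observers[column].append(callback)

    def commit(self, writes):
        """Apply (row_key, column, value) writes across rows, then notify."""
        changed = []
        for row_key, column, value in writes:
            if self.rows[row_key].get(column) != value:
                self.rows[row_key][column] = value
                changed.append((row_key, column))
        # Only cells whose value actually changed wake their observers.
        for row_key, column in changed:
            for callback in self.observers[column]:
                callback(self, row_key)


def extract_links(table, row_key):
    """Observer on 'contents': derive a 'links' column from the page text."""
    words = table.rows[row_key]["contents"].split()
    links = sorted(w for w in words if w.endswith(".example"))
    table.commit([(row_key, "links", links)])


log = []


def note_links(table, row_key):
    """Observer on 'links': record the change, standing in for a later stage."""
    log.append((row_key, table.rows[row_key]["links"]))


table = ObservedTable()
table.observe("contents", extract_links)
table.observe("links", note_links)
table.commit([
    ("a.example", "contents", "see b.example and c.example"),
    ("d.example", "contents", "no links here"),
])
print(log)
```

One write to a page's contents sets off the next step, whose own write sets off the step after that, so the state of the incremental computation is carried by the table itself rather than by a scheduler that rescans everything.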