Fujitsu CTO: Flash is just a stopgap

Necessary, but not the final destination

Flash is a necessary waystation as we travel to a single in-memory storage architecture. That's the view from a Fujitsu chief technology officer's office.

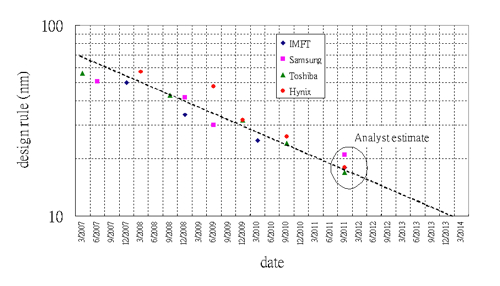

Dr Joseph Reger, CTO at Fujitsu Technology Solutions, is that office-holder, and – according to him – flash is beset with problems that will become unsolvable. He says we are seeing increases in flash density at the expense of our ability to read and write data. Each shrink in process geometry, from 3X to 2X and on to 1X, shortens flash's endurance, and each increase in cell bit level, from 2-bit to 3-bit multi-level cell (MLC), and on to 4-bit, brings its own problems of access speed and endurance.

Dr Joseph Reger, Fujitsu's Chief Technology Officer

He says: "You can get smart in the controller [and] SandForce is very practical, but it doesn't solve the issue; it just pushes out the limit. Flash isn't the target; it's an intermediate stage."

We asked: "What is the target?" and Reger replied: "If I knew I'd be rich."

Reger said, "I think that, over time, flash is going to be history. We are going to need another couple of technologies to get to a clean storage hierarchy in tiers of 10."

His ideal storage hierarchy would involve order-of-magnitude jumps in access speed as you cross from one type of memory to another and on to storage. He reckons Phase Change Memory (PCM) is closer to becoming a usable technology than other post-flash contenders such as HP's Memristor.

One aspect of this is – supposing flash were eventually eclipsed by Phase Change Memory or another solid state technology – that flash-focused technology startups such as SandForce and Anobit would be in trouble. Their technology solves problems intrinsic to flash, and without flash there would be no need for it.

Reger also points out that all-flash arrays represent point optimisation. They could, and possibly will, flourish for a while, but the big problem today is massive sharing. If we envisage massive-scale SAN and NAS repositories, flash is not optimal for those applications.

Memory versus storage

Reger has a concern about new in-memory architectures that are coming up for databases and similar applications: "The challenge becomes how do we treat data access. There is no need to use disk access–based technology ideas like paging. In-memory databases could be smarter about this."

"In the far future can we simply trust the amount of memory we put into a stock server, [because] everything could be in memory? [Imagine] having 2TB of memory. What does that mean in terms of memory management, storage management?"

He asks if everything will be rewritten and re-orchestrated to work with data memory management. Is there effectively only going to be one tier, memory in one form or another?

SandForce SSD processor.

"Currently, having data in storage means it's not in memory. Is it going to stay like that?" After all, storage was invented to deal with memory-size limitations. If those limitations go away then who needs storage?

Reger said: "I truly believe we are going to have a data orientation rather than memory and storage orientations." But this is really far out in the future.

He doesn't think flash will totally overtake data-centre storage in the next couple of years, but it will become a substantial percentage, and important in certain use cases, such as those needing a lot of IOPS and/or the best energy efficiency. He reckons flash is three orders of magnitude better than hard drives in energy efficiency, measured as IOPS/watt, and two orders of magnitude better in bandwidth terms. PCM could provide another order of magnitude improvement.
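To put those ratios in plainer terms, here is a minimal sketch that turns orders of magnitude into multipliers. The helper name `orders_to_factor` is our own, and the final figure rests on an assumption the interview does not confirm: that PCM's extra order of magnitude applies on top of flash's IOPS/watt advantage.

```python
def orders_to_factor(orders: int) -> int:
    """Convert a number of decimal orders of magnitude into a plain multiplier."""
    return 10 ** orders


# Ratios attributed to Reger in the text above.
flash_vs_hdd_iops_per_watt = orders_to_factor(3)
flash_vs_hdd_bandwidth = orders_to_factor(2)

# Assumption (not stated in the interview): PCM's additional order of
# magnitude stacks on top of flash's IOPS/watt advantage over hard drives.
pcm_vs_flash = orders_to_factor(1)
pcm_vs_hdd_iops_per_watt = flash_vs_hdd_iops_per_watt * pcm_vs_flash

print(f"flash vs HDD, IOPS/watt: {flash_vs_hdd_iops_per_watt}x")
print(f"flash vs HDD, bandwidth: {flash_vs_hdd_bandwidth}x")
print(f"PCM vs HDD, IOPS/watt (assumed stacking): {pcm_vs_hdd_iops_per_watt}x")
```

These are ratios only; no absolute IOPS, wattage, or bandwidth figures are implied.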

Even so: "Disks are going to be around at the end of the decade."

All-flash arrays

All-flash arrays like the Nimbus, Violin and Huawei-Symantec products are fine for their purpose, serving data to heavily disk I/O–bound applications, but their controller software will be optimised for flash. Naturally, HDD storage-array controller software will be optimised for disk. But, says Reger, "We have two code stacks", which leads to a problem in managing all-flash arrays and HDD arrays.

There might need to be some sort of abstraction layer sitting above flash arrays and disk arrays, which hides the specifics of each type of storage from upper layers of the stack.
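As a rough sketch of what such an abstraction layer might look like, the Python below defines one common interface with two back ends behind it. Every name here (`BlockArray`, `FlashArray`, `DiskArray`, `copy_block`) is hypothetical and for illustration only; none comes from Fujitsu or any array vendor, and the per-medium bookkeeping is a stand-in for real controller logic.

```python
from abc import ABC, abstractmethod


class BlockArray(ABC):
    """Hypothetical common interface that upper layers program against."""

    @abstractmethod
    def read(self, lba: int) -> bytes:
        """Return the data stored at a logical block address."""

    @abstractmethod
    def write(self, lba: int, data: bytes) -> None:
        """Store data at a logical block address."""


class FlashArray(BlockArray):
    """Stand-in for a flash-optimised code stack."""

    def __init__(self) -> None:
        self._blocks: dict[int, bytes] = {}
        self.write_count = 0  # placeholder for flash-specific wear tracking

    def read(self, lba: int) -> bytes:
        return self._blocks.get(lba, b"")

    def write(self, lba: int, data: bytes) -> None:
        self.write_count += 1
        self._blocks[lba] = data


class DiskArray(BlockArray):
    """Stand-in for a disk-optimised code stack."""

    def __init__(self) -> None:
        self._blocks: dict[int, bytes] = {}
        self.last_lba = 0  # placeholder for disk-specific state such as head position

    def read(self, lba: int) -> bytes:
        self.last_lba = lba
        return self._blocks.get(lba, b"")

    def write(self, lba: int, data: bytes) -> None:
        self.last_lba = lba
        self._blocks[lba] = data


def copy_block(src: BlockArray, dst: BlockArray, lba: int) -> None:
    """Upper-layer code: works without knowing which medium sits underneath."""
    dst.write(lba, src.read(lba))


flash, disk = FlashArray(), DiskArray()
flash.write(7, b"hello")
copy_block(flash, disk, 7)
print(disk.read(7))
```

The point of the sketch is that `copy_block` never asks whether it is talking to flash or disk; the two code stacks Reger describes stay hidden behind the shared interface.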

Will there be an all-flash Eternus array? Marcus Schneider, head of Fujitsu's European Storage Development, said there could be, but now is not the time for Fujitsu to be talking about it.

Log scale of NAND process geometry scaling and time.

Very large solid state data stores

The Fujitsu view of the future of flash is that exploiting it with all-flash arrays will create tension between flash-array operations and management and disk-array operations and management. Each reduction in process geometry and each increase in cell bit level will bring fresh problems with flash endurance, speed, and error handling, but will help open the way to massive flash arrays, large enough to hold entire applications and their data in memory.

This will bring in a fresh point of tension, as there is no requirement to write data into, and read data from, such arrays as if they had an underlying hard disk drive structure. Also, as they are non-volatile, will there be a need to copy their contents to disk? Probably yes, because flash or a solid state follow-on will be the online store, disk the nearline store, and tape the off-line repository, as it has a cost-profile that disk cannot match.

Talking to Reger, we get the idea that large flash arrays open the door to in-memory architectures, and that these will persist with whatever technology eventually replaces flash, and with whatever technology replaces that.

Nothing lasts forever. Flash is a doorway to solid state and non-volatile storage futures that could, and most probably will, cause radical changes in how we address, manage, and protect large volumes of data – and even what we mean when we say "storage"... ®