Give me 10 gig Ethernet now!

This back up is so backed up

Data storage demands within the enterprise grow every year. Managing this data is a challenge for organisations of all sizes, writes Trevor Pott.

The data we move around is now practically measured in terabytes. Depending on your data usage and backup requirements, traditional gigabit Ethernet is simply too slow.

Consider for a moment a fairly typical backup requirement of 10 terabytes. At gigabit Ethernet speeds, allowing for 10 per cent network overhead and assuming zero media changes, it would take a little over a day to back that up.
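The "little over a day" figure is easy to reproduce. The sketch below assumes decimal terabytes (10^12 bytes) and treats the 10 per cent overhead as a flat deduction from line rate. The function name `backup_hours` is purely illustrative. For comparison, it also runs the same numbers at 10 Gbit/s.

```python
# Back-of-envelope backup window. Assumptions: decimal terabytes (10^12 bytes)
# and a flat 10 per cent protocol overhead, as in the example above.

def backup_hours(terabytes: float, link_gbps: float, overhead: float = 0.10) -> float:
    """Hours needed to move `terabytes` of data over a link of `link_gbps`."""
    bits = terabytes * 1e12 * 8                      # data volume in bits
    usable_bps = link_gbps * 1e9 * (1 - overhead)    # payload rate after overhead
    return bits / usable_bps / 3600                  # seconds -> hours


if __name__ == "__main__":
    for speed in (1, 10):
        print(f"10 TB at {speed} Gbit/s: {backup_hours(10, speed):.1f} hours")
    # prints "10 TB at 1 Gbit/s: 24.7 hours" then "10 TB at 10 Gbit/s: 2.5 hours"
```

With these assumptions, 10 TB takes about 24.7 hours over gigabit and about 2.5 hours over 10 gigabit. Counting in binary terabytes (2^40 bytes) pushes the gigabit figure to roughly 27 hours.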

If this is your nightly backup, you have a problem: you've entered a realm where your nightly backups take longer than an entire day! You can try to ruthlessly control data proliferation, but as the history of IT tells us, you will lose this battle in the end. More bandwidth will be required.

For individuals, data management can mean feeding a seemingly infinite number of optical discs into a drive for backups. Large enterprises will employ teams of dedicated storage specialists and specialised hardware to address the same problem. In the fuzzy grey middle is a large segment of IT increasingly served by storage appliances.

For primary storage, Network Attached Storage (NAS) filers are now the norm. They bring with them their own management hurdles, issues that separate them from their server-based predecessors.

Stretched to breaking

Unlike traditional PC filers, appliance filers are rarely their own backup servers. That task is increasingly falling to yet another dedicated device. The traditional filer NAS finds itself competing for resources with network-attached backup appliances, malware protection appliances, virtualisation appliances and still more.

Dedicated boxes doing dedicated tasks are big money, and their proliferation has driven businesses of all sizes to build multi-tiered networks with client traffic separated from storage flow. The network is the thread that binds all aspects of modern computing together, and it is stretched to breaking.

Multiple Ethernet links can be bonded together using 802.3ad link aggregation to make things more viable in the short term, but this requires that both your switch and your storage appliances support the protocol. It also brings the burden of additional cabling and eats up valuable switch ports.
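Bonding has a further catch for backups. To keep frames in order, 802.3ad assigns each conversation to a single member link, so one backup stream between two hosts never runs faster than one link, however many are in the bundle. The toy model below illustrates this. The CRC32 hash and the function names are hypothetical stand-ins for whatever hash policy a given switch or bonding driver actually uses.

```python
# Simplified model of how a link-aggregation group spreads traffic.
# Real switches and bonding drivers use their own hash policies; the
# CRC32-over-addresses hash here is an illustrative stand-in only.
import zlib


def pick_member_link(src: str, dst: str, n_links: int) -> int:
    """Map a conversation (src, dst pair) deterministically to one member link."""
    key = f"{src}->{dst}".encode()
    return zlib.crc32(key) % n_links


def flow_ceiling_gbps(flows, n_links: int, link_gbps: float = 1.0) -> float:
    """Best-case aggregate throughput when each flow is pinned to one link.

    Each member link that carries at least one flow contributes at most
    link_gbps; flows that hash to the same link have to share it.
    """
    used_links = {pick_member_link(s, d, n_links) for s, d in flows}
    return len(used_links) * link_gbps


if __name__ == "__main__":
    # One backup server talking to one NAS is a single conversation.
    single = [("10.0.0.5", "10.0.0.20")]
    print(flow_ceiling_gbps(single, n_links=4))  # 1.0: one flow, one link
```

Only many parallel conversations, such as lots of clients hitting the same filer, can spread across the whole bundle.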

With server density increasing, and virtualisation driving skyrocketing bandwidth demands per server, every port on a switch is precious. Take a 48U rack. If we take a generous 10U out for power distribution and another 2U for storage and client-facing network switches, we are still left with 36U.

Assuming standard 1U pizza-box servers and 48-port switches, there isn't much room left on those switches for any sort of port aggregation beyond what is needed to provide adequate bandwidth between the top-of-rack switch and the core.
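The rack arithmetic can be pushed one step further. Under the figures above, 36 servers fill 36 of a switch's 48 ports. The sketch below makes two assumptions that are not spelled out in the text: every port is gigabit, and every leftover port becomes an uplink. It shows what remains for the core and why giving each server two bonded ports does not fit. `port_budget` is a hypothetical helper.

```python
# Port budget for one top-of-rack switch, using the figures above:
# 48U rack, 10U for power, 2U for two switches, 1U servers, 48-port switches.
# Assumptions: all ports are gigabit, each server gets the same number of
# ports on this switch, and every remaining port is used as an uplink.

RACK_U = 48
POWER_U = 10
SWITCH_U = 2
SWITCH_PORTS = 48


def port_budget(ports_per_server: int):
    servers = RACK_U - POWER_U - SWITCH_U          # 1U servers -> 36
    server_ports = servers * ports_per_server
    uplinks = SWITCH_PORTS - server_ports          # <= 0 means no room at all
    return servers, server_ports, uplinks


if __name__ == "__main__":
    for per_server in (1, 2):
        servers, used, uplinks = port_budget(per_server)
        if uplinks <= 0:
            print(f"{per_server} port(s)/server: need {used} ports, "
                  f"switch has {SWITCH_PORTS}")
        else:
            ratio = used / uplinks                 # same-speed ports assumed
            print(f"{per_server} port(s)/server: {uplinks} uplinks, "
                  f"{ratio:.0f}:1 oversubscribed")
```

With one port per server, 12 uplinks carry 36 servers' worth of traffic (3:1 oversubscription). Bonding two ports per server would need 72 ports on a 48-port switch.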

Link aggregation may be a reasonable-sounding interim solution to the bandwidth problem, but it is in reality little more than a stopgap.

When do we want it? Now!

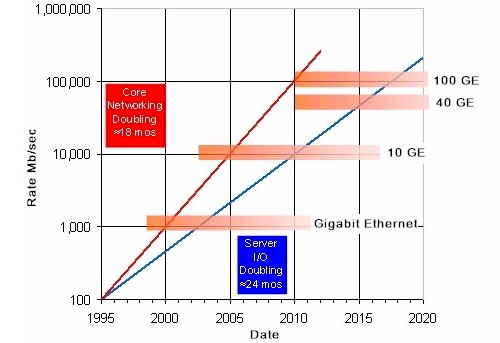

What's needed is widespread deployment of 10 gigabit Ethernet. Thankfully, motherboard vendors are at last starting to build 10 gigabit ports into servers, and switch port prices are starting to drop. Dedicated network card vendors already have mature and reliable offerings.

It's time to stop lashing together gigabit Ethernet links in the desperate hope that we can eke out enough bandwidth to migrate our VMs at usable speeds or get our nightly backups done on time. It's time for 10 gigabit Ethernet to become the new standard interface, and for 10/100 to finally, mercifully, die.

It's probably too late for 2011 to really be the year of 10 gigabit Ethernet, but 2012 shows a lot of promise. It has to; we can't put this transition off any longer. ®

Trevor Pott is a sysadmin, based in Edmonton, Canada.