
New patent will give iPhone screen interactive 3D

Apple's three-dimensional UI will make cash objects sink into the abyss

iPhones of the future could have virtual 3D interfaces that detect and respond to the movements of your eyes, according to an Apple patent granted today.

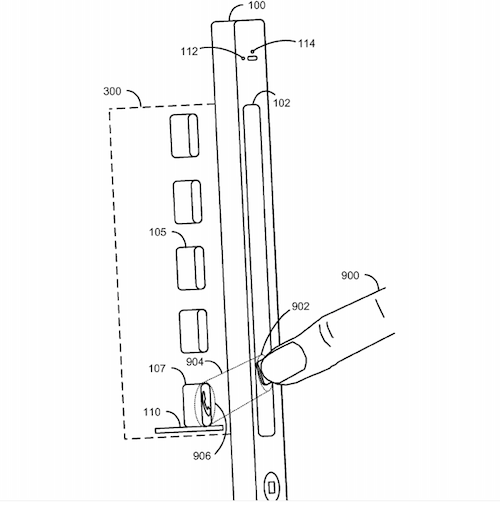

The newly patented technology would also allow for a world-behind-the-screen experience, in which the user's fingers appear to reach into a space behind the glass.

By mashing up Kinect-style 3D motion sensing with facial recognition and an awareness of ambient light, the technology would let the screen react to where your eyes move and expand icons as your gaze passes over them.
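The patent coverage doesn't say how that expansion would be calculated. As a purely illustrative sketch, assuming the eye tracker reports a gaze point in screen pixels, a Dock-style magnification could grow each icon according to its distance from that point. The function name `icon_scale` and its `radius` and `max_scale` parameters are hypothetical, not taken from the patent.

```python
import math

def icon_scale(icon_xy, gaze_xy, radius=80.0, max_scale=1.5):
    """Hypothetical scale factor for an icon, given where the user is looking.

    Icons within `radius` pixels of the gaze point grow smoothly, peaking
    at `max_scale` directly under the gaze; icons farther away keep their
    normal size (1.0). This is an illustration, not Apple's method.
    """
    dist = math.dist(icon_xy, gaze_xy)
    if dist >= radius:
        return 1.0
    # Cosine falloff: weight is 1.0 at the gaze point and 0.0 at the radius edge.
    weight = 0.5 * (1.0 + math.cos(math.pi * dist / radius))
    return 1.0 + (max_scale - 1.0) * weight

# Example: an icon 40 px from the gaze point is drawn at roughly 1.25x.
print(icon_scale((100, 100), (140, 100)))
```

A smooth falloff, rather than a hard on/off switch, stops icons from popping in and out of their enlarged state as the gaze estimate jitters.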

Objects such as icons would also cast shadows according to how ambient light falls on the screen, adding a hyper-realistic third dimension to the gadget's interface.
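How the light direction would be measured isn't spelled out here. Assuming, for illustration only, that the phone has an estimate of where the dominant light is coming from, the shadow of an icon "floating" above a flat background can be placed with simple ray geometry. The `shadow_offset` function and its coordinate convention are hypothetical.

```python
def shadow_offset(height, light_dir):
    """Hypothetical (dx, dy) screen offset of the shadow cast by an icon
    floating `height` units above a flat background plane.

    `light_dir` points from the scene towards the light source:
    x to the right, y up the screen, z out of the screen.
    """
    lx, ly, lz = light_dir
    if lz <= 0:
        raise ValueError("light must be in front of the screen (lz > 0)")
    # Follow the ray from the icon directly away from the light until it
    # reaches the background plane at z = 0.
    return (-height * lx / lz, -height * ly / lz)

# Light from the upper right: the shadow falls down and to the left.
print(shadow_offset(10, (1, 1, 2)))
```

Tilting the phone changes `light_dir` relative to the screen, so the shadows would shift as the device moves, which is what sells the illusion of depth.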

Apple's 3D interface would render using input from your eyes

Apart from reducing fanbois to a gurgling iPhone fingering trance, uses of the new 3D eye-tracking technology would include immersive gaming experiences and a new paradigm in user interfaces.

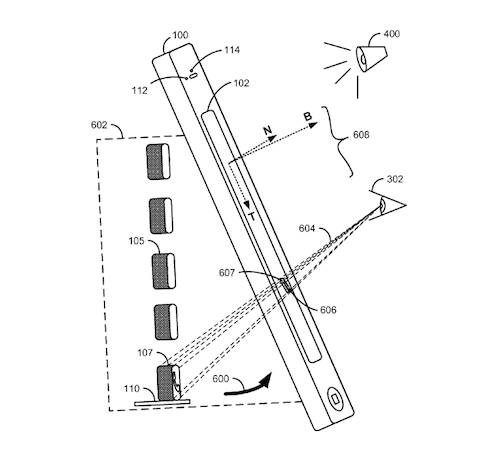

The 3D user interface would be controlled in real time by the position and direction of the user's gaze, as measured by sensors. Tightly integrated Kinect-style sensors would use information about the iPhone's spatial position to create another space behind the screen: locations in a three-dimensional user interface that the patent calls a "virtual 3D operating system environment".
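One well-known way to make a space appear to sit behind the glass is head-coupled (off-axis) perspective: each virtual point is drawn where the line from the viewer's eye to that point crosses the screen. The sketch below assumes the eye position comes from front-camera face tracking; it illustrates the general technique rather than anything the patent is confirmed to use, and `project_to_screen` is a hypothetical name.

```python
def project_to_screen(point, eye):
    """Hypothetical projection of a virtual point behind the screen onto the glass.

    All coordinates share one unit (e.g. millimetres) with the origin on the
    screen surface: x right, y up, z out of the screen towards the viewer.
    `point` has z <= 0 (behind the glass); `eye` has z > 0 (in front of it).
    Returns the (x, y) position on the glass where the point should be drawn.
    """
    px, py, pz = point
    ex, ey, ez = eye
    if ez <= 0 or pz > 0:
        raise ValueError("eye must be in front of the screen and point behind it")
    # Parametrise the line from eye to point; t is where it crosses z = 0.
    t = ez / (ez - pz)
    return (ex + t * (px - ex), ey + t * (py - ey))

# Eye 300 mm away, straight ahead, then shifted 10 mm right at 30 mm distance.
print(project_to_screen((2, 2, -40), (2, 2, 300)))
print(project_to_screen((0, 0, -30), (10, 0, 30)))
```

Points deeper behind the glass shift further as the head moves, while points on the glass itself stay put; that differential motion (parallax) is what makes the recessed "bento box" read as a real cavity.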

Apple describes one possible user interface as a three-dimensional "bento box" that would let you rotate the device and peer inside each compartment:

It is possible to render the virtual 3D operating system environment as having a recessed "bento box" form factor inside the display. [...] As the user rotates the device, he or she could look into each "cubby hole" of the bento box independently. It would also then be possible, via the use of a front-facing camera, to have visual "spotlight" effects follow the user's gaze, i.e., by having the spotlight effect "shine" on the place in the display that the user is currently looking into.

The patent describes how users could even see "behind" the virtual objects in the operating system environment.

The objects wouldn't just appear to be 3D; they would behave as if they were 3D too. The patent describes the finger-through-the-screen concept:

[T]he techniques would make it possible to simulate collision effects and other physical manifestations of reality within the virtual 3D operating system environment

Apple's behind-the-screen touch tech

The position sensors would include a compass, an accelerometer, a GPS module, and a gyrometer, while the optical sensors used for facial recognition would include an image sensor and a video camera.
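The article lists the sensors but not how their readings would be combined. A common, simple approach (swapped in here for illustration, not taken from the patent) is a complementary filter: the gyroscope gives smooth short-term rotation, while the accelerometer's view of gravity corrects long-term drift. The function name, the `alpha` weighting and the choice of axes are all assumptions.

```python
import math

def complementary_filter(angle, gyro_rate, accel_y, accel_z, dt, alpha=0.98):
    """One update of a complementary filter estimating device tilt in radians.

    Assumes rotation about the device's x-axis, so gravity shows up in the
    y and z accelerometer readings. `gyro_rate` is in rad/s, `dt` in seconds.
    `alpha` sets how strongly the integrated gyro estimate is trusted over
    the accelerometer's noisy but drift-free tilt reading.
    """
    gyro_angle = angle + gyro_rate * dt         # short term: integrate rotation rate
    accel_angle = math.atan2(accel_y, accel_z)  # long term: tilt from gravity vector
    return alpha * gyro_angle + (1 - alpha) * accel_angle

# With no rotation reported by the gyro, the estimate drifts towards the
# accelerometer's reading (45 degrees here) over successive updates.
angle = 0.0
for _ in range(1000):
    angle = complementary_filter(angle, 0.0, 1.0, 1.0, 0.01)
print(math.degrees(angle))
```

The fused tilt estimate is the kind of input a renderer would need to decide which "cubby hole" of the bento box the user is looking into.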

The patent says this three-dimensional user interface could be applied to phones, tablets, portable music devices, televisions, gaming devices, laptops or desktops. ®

Filed in April 2010, the patent, 'Three Dimensional User Interface Effects on a Display by Using Properties of Motion' (No. 20120036433), was granted today by the US Patent and Trademark Office.