
SUSE Linux moves to 3.0 kernel with SP2

Bread-and-butter file system goes mainstream

SUSE Linux, the commercial Linux distributor owned by software conglomerate Attachmate, is moving up to the Linux 3.0 kernel and also taking the btrfs file system mainstream alongside Linux container virtual private servers.

These and other changes to SUSE Linux Enterprise Server 11 are contained in Service Pack 2, available today for download. That means the clock begins ticking on SLES 11 SP1, announced in May 2010 and based on the Linux 2.6.32 kernel, which now has a six-month migration window before its standard support is yanked. If you want to use SLES 11 SP1 after August 31, you will have to pay for extended support.

You can scan the release notes for all of the nitty-gritty details on SLES 11 SP2, but Gerald Pfeifer, director of product management at SUSE Linux's development labs in Nuremberg, Germany, gave El Reg a walk through the high points of the release.

One big change, of course, is the shift to the Linux 3.0.10 kernel and a revved-up toolchain, including the GNU gcc 4.3.4 compilers and glibc 2.11.1 libraries, Perl 5.10, PHP 5.2.6, Python 2.6.0, and Ruby 1.8.7. The Linux 3.0 kernel has scheduler and memory optimizations to boost system performance, updates to the networking stack, and various patches and updates to improve the stability of the operating system.

The new Linux kernel is enabled to run all kinds of new hardware, which is what matters for motorheads. In the prior SP1 release, SUSE Linux was supporting new Power7 and System z mainframe iron from IBM as well as then-future x86 processors from Intel and Advanced Micro Devices, but now, Pfeifer tells El Reg, SLES 11 SP2 can fully exploit the features on these machines, including processor features as well as new peripherals (particularly network interface cards and disk controllers) that come with these systems.

SLES 11 SP2 still supports Intel's Itanium processor – which Red Hat Enterprise Linux 6.X does not – but the release notes do not say that the "Poulson" Itanium processor expected this year is in tech preview. You would think that it would be in there now. The AMD "Interlagos" Opteron 6200 and "Valencia" Opteron 4200 processors, which shipped last fall, were not mentioned by name in the release notes, so it is unclear how fully these are exploited; they are certainly supported by SP2.

SLES 11 SP2 also supports the future "Ivy Bridge" third generation Core processors from Intel for desktop and laptop computers, due later this year; the "Sandy Bridge" Xeon E5 processors that are expected before the end of the first quarter; and future Xeon server variants of the "Ivy Bridge" processors. And Kerry Kim, director of solution marketing at SUSE Linux, was quick to point out that SLES 11 SP2 will most definitely run on the Hewlett-Packard ProLiant Gen8 and Dell PowerEdge 12G servers, which are previewing ahead of Intel's official Xeon E5 launch.

Hot-add CPU and memory was in tech preview with SLES 11 SP1 on selected IBM Power, mainframe, and System x machines and is now available on other Xeon 7500 and E7 machines as well as Itanium servers from various vendors.

Cores, threads, and gigabytes, virtual and real

The basic feeds and speeds of SLES 11 SP2 have not changed that much compared to SP1. On x86 and Itanium processors, the kernel can address up to 4,096 logical processors (threads if the processor has them, cores if it doesn't) and up to 64TB of memory. Systems have only been verified supporting 16TB to date, and that is a limit of the hardware supplied by server-makers.

Power iron can support up to 1,024 threads, the top end defined by a 256-core Power 795 machine, and address up to 1PB of main memory in theory with only 512GB verified. (The Power 795 tops out at 8TB of physical memory, and clearly SLES customers are not pushing the limits here yet.) On System z mainframes, SP2 tops out at 64 threads in a single system image, which is an interesting limit considering that the z196 has 96 cores (80 of them available to the OS). The z196 tops out at 3TB of main memory, and SUSE Linux has tested up to 256GB with SP2 against a theoretical limit of 4TB with the S/390 variant of the kernel.

In terms of hypervisors embedded in SUSE Linux, SP2 supports 64 virtual CPUs per guest now, up from 16 with SP1, and the same 512GB of virtual memory per guest. The 64-bit version of the Xen hypervisor is the same, with 255 CPUs (cores or threads, depending) and 2TB of physical memory addressable by the hypervisor, and with 32 virtual CPUs and from 128MB to 256GB of main memory per guest virtual machine. The difference with SP2 is that SUSE Linux has given the axe to the 32-bit implementation of Xen.

btrfs and Linux containers go mainstream

Another big change coming with SLES 11 SP2 is that SUSE Linux is mainstreaming the btrfs file system and Linux containers.

"I think that there is no reason to use ext3 anymore," says Pfeifer. "We think btrfs is ready and the best choice."

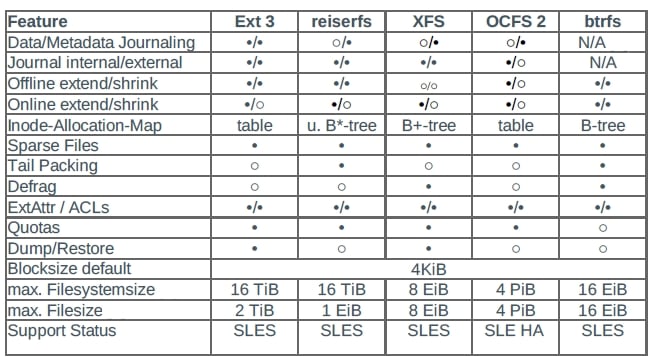

The btrfs (pronounced "ButterFS") file system has been in development since June 2007 and is a POSIX-compliant file system designed for Linux that is being spearheaded by software giant Oracle. It was designed to have object-level mirroring and striping, checksums on data and metadata, online file system check, incremental backup and file system mirroring, subvolumes with their own file system roots, writable snapshots, and index and file packing to conserve space, as well as a slew of other advanced features. (This was long before Oracle bought Sun Microsystems and inherited the Zettabyte File System, which btrfs sort of competes against.) Here's how the different file systems stack up inside of SUSE Linux Enterprise Server 11 SP2:

As you can see, ext3 tops out at 16TB with a maximum file size of 2TB, while btrfs can host 16EB (that's exabytes) of stuff and have a single file that weighs in at 16EB. One of the neat things about btrfs is that it is a copy-on-write logging file system, which means you don't journal changes before writing them to the file system, but rather write them to new locations and then link to them. By the way, you can still use the XFS file system if you want, which has been in the SLES distro since version 8.0, and you can read ext4 file systems and migrate them to btrfs even though ext4 is not itself supported in SLES 11. OCFS2, the Oracle clustered file system, is now also supported for high availability server clusters based on SUSE Linux.
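For readers who want to see the copy-on-write idea in miniature, here is a toy sketch in Python. It is not how btrfs is implemented; the `CowStore` class and its fields are made up for illustration. It only shows the pattern described above: new data goes to a fresh location, and the reference is switched afterwards, so the old data is never overwritten in place.

```python
class CowStore:
    """Toy copy-on-write store (hypothetical, for illustration only)."""

    def __init__(self):
        self.blocks = {}   # block id -> bytes; existing entries are never modified
        self.root = {}     # file name -> block id for the current view
        self.next_id = 0

    def write(self, name, data):
        # Step 1: write the new data to a new location.
        block_id = self.next_id
        self.next_id += 1
        self.blocks[block_id] = data
        # Step 2: build a new root that links to it, then swap roots.
        new_root = dict(self.root)
        new_root[name] = block_id
        self.root = new_root
        return block_id

    def read(self, name):
        return self.blocks[self.root[name]]


store = CowStore()
store.write("motd", b"hello")
old_root = store.root              # keep a reference to the earlier view
store.write("motd", b"goodbye")

current = store.read("motd")               # data seen through the new root
previous = store.blocks[old_root["motd"]]  # data still reachable via the old root
```

Because the old root and its block survive the second write, holding on to an old root is, in this toy model, all it takes to keep an earlier view of the data around.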

But despite all of this variety, Pfeifer says that the snapshotting features of btrfs as well as the scalability are why SUSE Linux is recommending it as the root file system for SLES 11 SP2. The company has encapsulated some of these btrfs features into a tool called Snapper, which integrates with the SLES update and YaST management tool and allows system admins to take a system snapshot before they make important changes to the system and then instantly roll them back if something goes snafu.
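The snapshot-then-roll-back workflow can be sketched as a toy model in Python. This is not Snapper's interface and uses none of its commands; the `ToySystem` class, its methods, and the package table are hypothetical. It also copies state outright, whereas a btrfs snapshot would share unchanged data with the live file system.

```python
import copy


class ToySystem:
    """Hypothetical stand-in for a system whose state can be snapshotted."""

    def __init__(self, packages):
        self.packages = dict(packages)
        self.snapshots = []   # list of (description, saved state)

    def snapshot(self, description):
        # Record the current state before a risky change.
        self.snapshots.append((description, copy.deepcopy(self.packages)))
        return len(self.snapshots) - 1

    def rollback(self, snapshot_id):
        # Restore the state recorded in the chosen snapshot.
        _, saved = self.snapshots[snapshot_id]
        self.packages = copy.deepcopy(saved)


system = ToySystem({"kernel": "3.0.10", "php": "5.2.6"})
before = system.snapshot("before update")

system.packages["php"] = "broken-build"   # an update that goes wrong
system.rollback(before)                   # back to the pre-update state
```

The point of taking the snapshot first is that the admin does not need to know in advance which change will cause trouble; whatever happens after the snapshot can be undone in one step.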

SUSE Linux is also claiming to be the first commercial Linux distie to put Linux container, often called LXC, virtualization into production. With LXC, you create virtual private servers based on a shared Linux kernel and file system across those containers. The containers think they are separate physical machines, but they are not. It is far easier to maintain containers than fully virtualized machines, which have a full operating system and file system running in each virtual machine. But the virtual machines are isolated from each other, and that is sometimes important. In either case, LXC has the host OS as a single point of failure and VMs have the hypervisor as a single point of failure.

In recent weeks, Red Hat and Oracle have been making a lot of noise about supporting their Linux distributions for a decade, but Novell (and now SUSE Linux) has been doing that for a long time now, with seven years of standard support and three years of extended support. SLES 10, which was announced in 2006 and which Pfeifer said was only surpassed by SLES 11 in the installed base last year, will have standard support through the middle of 2013 and then extended support for three years after that.

Generally speaking, the cadence for SLES service packs will be every 18 to 24 months and for new versions every four to five years, Pfeifer added. ®