Amazon turns A9 search engine into a cloud service

CloudSearch added to other heavenly wares

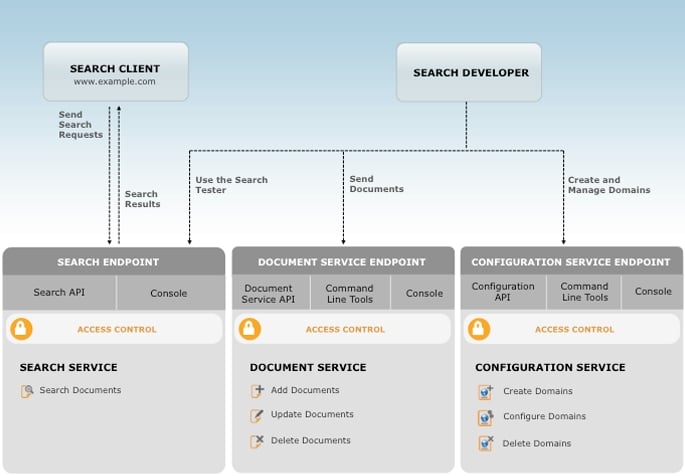

Retailing giant and cloud computing juggernaut Amazon has taken its in-house A9 search engine and converted it into a search service that you can buy through its Amazon Web Services unit to comb through your own documents and files.

The cloudy version of the A9 search engine is called CloudSearch, appropriately enough, and goes into beta testing today. So now, instead of setting up your own servers and grabbing an open source search engine – or buying a Google appliance – you can upload your documents to the CloudSearch service. Amazon says it will automatically index them and scale up the processing capacity to meet the search requirements of the end users to whom you give access to the CloudSearch app.

The CloudSearch service offers both text search and faceted search, along with customizable relevance rankings and search field and text processing options that developers can monkey around with to fine-tune searches on the data sets uploaded to the service.

Amazon says that it can offer "near real-time" indexing on content uploaded into the service. Indexes for the searches are stored in main memory by the A9 search engine to boost the performance on searches, and the service is completely managed by Amazon. That includes hardware provisioning, setup, configuration, patching and data partitioning to boost search results. As your data sets grow or more people come to do queries – or both – CloudSearch will scale up the CPU, memory, I/O, and storage capacity to keep the search service humming along.

Amazon uses SSL encryption between you and the CloudSearch service to encrypt data in flight, but you cannot upload data that has already been encrypted and have CloudSearch work on it. Amazon does not support user-generated encryption keys on any of the AWS services.

The search service is currently only hosted from Amazon's US East Region in Virginia. It is organized around search instances, each of which has a set amount of memory and CPU capacity to run the A9 indexing and search engine against a defined slice of data, which is called a search partition. A search domain is a set of data you want walled off for a particular set of users or applications, and a search domain can be made up of multiple search instances. Amazon adds capacity as the search requirements go up, which can be because of a larger amount of data or a higher number of end users doing queries.

Amazon is not letting customers peek under the covers to see how much capacity is allocated to their search, but you eventually find out when you get the bill. There are three CloudSearch instance sizes – small, large, and extra large – which cost 12, 48, and 68 cents per hour, respectively.

Based on an average document size of 1KB, the small search instance can hold 1 million documents, the large search instance can hold 4 million documents, and the extra large search instance can hold 8 million documents. If your particular instance hits 80 per cent of CPU capacity doing searches, Amazon automatically adds another instance and if it drops below 30 per cent of CPU capacity, it removes an instance.
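The sizing and scaling rules above can be sketched in a few lines of Python. This is only an illustration of the thresholds quoted in the article, not Amazon's actual scaling logic: the function names are made up, the one-instance floor is an assumption, and the 50-instance ceiling is the beta cap Amazon has announced.

```python
# Documents each instance size can hold, per the article (average 1KB documents).
DOC_CAPACITY = {
    "small": 1_000_000,
    "large": 4_000_000,
    "extra large": 8_000_000,
}

SCALE_UP_CPU = 80.0    # add an instance when CPU hits this percentage
SCALE_DOWN_CPU = 30.0  # remove an instance when CPU drops below this percentage
MAX_INSTANCES = 50     # beta cap quoted by Amazon
MIN_INSTANCES = 1      # assumption: a domain never drops to zero instances


def smallest_instance_for(doc_count):
    """Return the smallest instance size that holds doc_count documents, or None."""
    # The dict is declared smallest-first, and dicts keep insertion order.
    for size, capacity in DOC_CAPACITY.items():
        if doc_count <= capacity:
            return size
    return None  # needs more than one partition


def next_instance_count(current, cpu_percent):
    """Apply the 80/30 per cent CPU rule to the current instance count."""
    if cpu_percent >= SCALE_UP_CPU and current < MAX_INSTANCES:
        return current + 1
    if cpu_percent < SCALE_DOWN_CPU and current > MIN_INSTANCES:
        return current - 1
    return current


print(smallest_instance_for(2_500_000))  # large
print(next_instance_count(3, 85.0))      # 4
```

A return value of `None` from `smallest_instance_for` simply means the data set is too big for a single instance and would have to be spread across several search partitions.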

At the moment, Amazon is supporting a maximum of 10 search partitions for the beta service; if you need more, you have to ask for special treatment. Amazon is capping CloudSearch at 50 search instances for now, but in a blog post, Jeff Barr, evangelist at the AWS unit, says that the company will boost this over time and that if you really need more than that now, Amazon is willing to entertain the possibility of giving you more capacity.

CloudSearch can be integrated with Amazon's S3 object storage or its SimpleDB or RDS database services, and you can mix and match data from multiple sources in a single search domain and index the heck out of it. Importantly, all of the tricks of the trade that Amazon has come up with are built into the CloudSearch service: when you search for "dogs" you can also get "golden retrievers" if you want – or not, if you don't want that data – and faceting lets you bracket information by categories (such as price bands).
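For readers who have not met faceting before, here is a toy sketch of the idea in plain Python. It does not use the CloudSearch API at all; the documents, the price bands, and the function names are all invented for illustration.

```python
from collections import Counter

# Hypothetical catalog entries; a real search domain would hold uploaded documents.
DOCS = [
    {"title": "Dog leash", "price": 12.50},
    {"title": "Dog bed", "price": 60.00},
    {"title": "Dog house", "price": 180.00},
    {"title": "Cat tree", "price": 75.00},
    {"title": "Dog bowl", "price": 9.99},
]


def price_band(price):
    """Map a price to one of three made-up bands."""
    if price < 25:
        return "under $25"
    if price < 100:
        return "$25 to $99.99"
    return "$100 and up"


def facet_counts(docs, query):
    """Count matching documents per price band (naive substring match on title)."""
    matches = [d for d in docs if query.lower() in d["title"].lower()]
    return Counter(price_band(d["price"]) for d in matches)


for band, count in facet_counts(DOCS, "dog").items():
    print(f"{band}: {count}")
```

The point of a facet is the summary it returns alongside the hits: a shopper searching for "dog" sees how many results fall into each price band and can narrow the search with one click.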

In addition to the search instance fees, Amazon is charging bandwidth fees for the amount of data pumped out of the CloudSearch service. Each month, you pay 12 cents per GB for the first 10 TB of outbound data, 9 cents per GB for the next 40 TB (10 TB through 50 TB), and 7 cents per GB for any data above 50 TB. There is no fee for pumping data into the CloudSearch service. There are also supplemental fees for batch data uploads and re-indexing of documents.
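The tiered bandwidth rates work like income tax brackets: each rate applies only to the gigabytes that fall inside its band. A rough sketch follows, working in whole cents to avoid rounding trouble. The function name is made up, and treating 1 TB as 1,024 GB is an assumption, since the article does not say how Amazon counts.

```python
GB_PER_TB = 1024  # assumption: binary terabytes

# (band size in GB, price in cents per GB); None means "everything above".
TIERS = [
    (10 * GB_PER_TB, 12),  # first 10 TB
    (40 * GB_PER_TB, 9),   # 10 TB through 50 TB
    (None, 7),             # above 50 TB
]


def outbound_cost_cents(gb_out):
    """Monthly outbound transfer cost in cents for gb_out whole gigabytes."""
    if gb_out < 0:
        raise ValueError("gb_out must not be negative")
    remaining = gb_out
    total = 0
    for size, cents_per_gb in TIERS:
        in_band = remaining if size is None else min(remaining, size)
        total += in_band * cents_per_gb
        remaining -= in_band
        if remaining == 0:
            break
    return total


print(outbound_cost_cents(100) / 100)        # 12.0 dollars for 100 GB
print(outbound_cost_cents(11 * 1024) / 100)  # 1320.96 dollars for 11 TB
```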

In the example provided by Amazon, say you have 100MB of data in your search domain, and you want to handle 100,000 simple keyword searches per day, do 50 batch uploads per day where each batch has 1,000 1KB documents, and do four index redos per month. That will run you $86.94 on a small search instance. ®
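A quick back-of-the-envelope check shows where most of that figure comes from. The sketch below assumes a 30-day month with the small instance running around the clock; the article does not give the per-request fees for batch uploads and re-indexing, so they appear here only as the remainder.

```python
from decimal import Decimal

SMALL_HOURLY = Decimal("0.12")   # dollars per hour, from the article
QUOTED_TOTAL = Decimal("86.94")  # Amazon's example total, from the article
HOURS_PER_MONTH = 24 * 30        # assumption: 30-day month, instance always on

instance_cost = SMALL_HOURLY * HOURS_PER_MONTH
other_fees = QUOTED_TOTAL - instance_cost  # uploads, re-indexing, transfer

print(f"instance hours:  ${instance_cost:.2f}")  # $86.40
print(f"everything else: ${other_fees:.2f}")     # $0.54
```

In other words, on these assumptions the instance itself accounts for all but about 54 cents of the monthly bill.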