TryStack pits ARM against Xeon in the cloud

Take OpenStack out for a test drive

If you are dying to see how software running on a bona fide ARM server stacks up against a Xeon server, then TryStack.org has some time slices running the OpenStack cloudy fabric that have your name written all over them.

TryStack was launched in February, when the companies behind the open source OpenStack project – which includes compute and storage controllers – wanted to let developers take their "Diablo" release out for a spin.

The idea is not so much to put workloads into production, but to give developers and system administrators a sandbox to play in. TryStack allows developers to get familiar with the OpenStack APIs, test apps atop multiple releases of OpenStack, and send bug reports and feature requests to OpenStack project developers.

The initial TryStack iron included five of Dell's PowerEdge C6105 cloudy boxes, which cram four half-width, two-socket servers into a single 2U chassis. These servers use two six-core Opteron 4100 processors and have 96GB of memory per node and 5TB of disk capacity shared across each chassis. Dell, which loves OpenStack, also kicked in an Ethernet switch that is used as a public gateway for the TryStack.org site.

Cisco Systems donated two Catalyst 4948 top-of-rack switches, which have 48 Gigabit Ethernet ports and two 10 Gigabit Ethernet uplinks. One is used for the public IP address network and one is for the private management network linking the server nodes.

The OpenStack setup has one management node running Chef, Nagios, Munin, Jenkins, and Dnsmasq; three pairs of clustered nodes running the OpenStack fabric (Nova for compute, Nova-scheduler, Keystone, Dashboard, Glance, RabbitMQ, and MySQL to store all the configuration data for these); and thirteen nodes for running Nova compute and Nova network.
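As a sanity check on those numbers, here is a minimal Python sketch that tallies the donated x86 hardware against the role layout. It assumes the role layout describes the same five-chassis Dell setup, and the names (`CHASSIS`, `ROLES`, `tally`) are made up for illustration, not anything TryStack publishes.

```python
# Hardware figures as quoted in the article.
CHASSIS = 5
NODES_PER_CHASSIS = 4
MEMORY_GB_PER_NODE = 96
DISK_TB_PER_CHASSIS = 5

# Role layout as quoted in the article (the key names are illustrative).
ROLES = {
    "management": 1,
    "openstack_fabric": 3 * 2,       # three pairs of clustered nodes
    "nova_compute_and_network": 13,
}


def tally():
    """Return total nodes, nodes assigned to roles, and aggregate capacity."""
    total_nodes = CHASSIS * NODES_PER_CHASSIS
    return {
        "nodes": total_nodes,
        "nodes_assigned": sum(ROLES.values()),
        "memory_gb": total_nodes * MEMORY_GB_PER_NODE,
        "disk_tb": CHASSIS * DISK_TB_PER_CHASSIS,
    }


if __name__ == "__main__":
    for name, value in tally().items():
        print(f"{name}: {value}")
```

If the assumption holds, the roles account for every node in the five chassis, with none left over.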

Thousands of people have given TryStack a whirl on this setup. TryStack makes use of the integrated billing system in OpenStack and gives you around $1,000 worth of compute time to play with. The only rule is that you can't hog all the nodes, and TryStack reserves the right to wipe the virtual machine instances out after they have been running for 24 hours, so others can play.

"We wanted a fast, easy way for developers to test code against a real OpenStack environment, without having to stand up hardware themselves," the site's main page says. "It probably goes without saying that this is not the place for production code – you should host only test code and test servers here. In fact, your account on TryStack will be periodically wiped to help make sure no one account tries to rule tyrannically over our democracy. Play nice in the sandbox!"

At first, TryStack was configured with the OpenStack Diablo release and used Canonical's Ubuntu Server 11.04 Linux variant as the base OS image. The Swift storage controller was not set up on this sandbox.

Somewhere along the way, TryStack got some more x86 iron to run the "Essex" release of OpenStack (we don't know what the configuration is, but earlier this year, servers were being donated by HP).

The new "ARM zone" on TryStack is hosted by Core NAP, a service provider based in Austin, Texas that was no doubt chosen precisely because it is not Rackspace Hosting, one of the dominant contributors to the OpenStack project. Austin is also home to ARM server chip upstart Calxeda, which worked with HP to launch the "Redstone" hyperscale ARM servers last November and should begin shipping product to early customers in August.

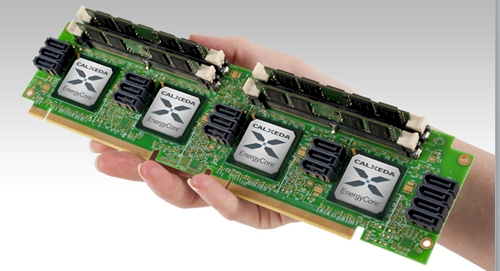

The ARM servers are based on Calxeda's ECX-1000 processors, which pack four 32-bit ARM cores running at up to 1.4GHz into a chip as well as a distributed Layer 2 switch and PCI, SATA, and memory controllers. All you need to make a server is a memory stick, a disk, and a LAN port. The EnergyCore Fabric Switch supports Gigabit and 10 Gigabit ports.

Calxeda's four-socket ECX-1000 server node

Karl Freund, Calxeda's vice president of marketing, tells El Reg that HP is contributing Redstone servers, Calxeda is kicking in the server node cards, and Canonical is providing the Ubuntu Server 12.04 Linux that together make up the ARM zone on TryStack.org. The hardware includes 24 server nodes with 24 disks, and there is another 24 nodes and 24 disks of capacity sitting on standby if and when TryStack.org needs it.

Freund says that right now TryStack.org is allocating a whole socket per test drive, but as more people want to come in and kick the tires, it will use LXC Linux containers to partition a server node and let customers have 1 or 2 ARM cores to play with for their Ubuntu virtual machine. Again, this is not about running performance benchmarks, but about showing people how OpenStack works.
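To put rough numbers on that, the sketch below counts how many simultaneous test drives the active ARM zone could host at each slice size. It assumes each of the 24 server nodes is a single four-core ECX-1000 socket, which the article implies but does not spell out, and `slots` is a hypothetical helper, not part of OpenStack or LXC.

```python
NODES = 24           # active server nodes quoted in the article
CORES_PER_NODE = 4   # assumption: one four-core ECX-1000 socket per node


def slots(cores_per_instance, nodes=NODES, cores_per_node=CORES_PER_NODE):
    """Count instances that fit if each one gets a fixed number of cores."""
    if not 1 <= cores_per_instance <= cores_per_node:
        raise ValueError("an instance needs between 1 core and a whole node")
    # Instances are not split across nodes, so use integer division per node.
    return nodes * (cores_per_node // cores_per_instance)


if __name__ == "__main__":
    for cores in (4, 2, 1):
        print(f"{cores} core(s) per instance: {slots(cores)} instances")
```

Under those assumptions, moving from whole sockets to single-core containers would quadruple the number of people who can kick the tires at once, before the standby hardware is even switched on.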

But you know that everyone is going to run their own benchmarks on the two processor architectures, right? ®