Intel: Xeon breaks Calxeda's ARM in Apache benchmark

Power lines, damn power lines and statistics

Intel has hit back at upstart server rival Calxeda, which claimed its ARM-powered servers could outdo Chipzilla's machines.

Calxeda pitted its ECX-1000 processors against Intel's Xeons on the Apache Bench benchmark test and boasted that its ARM chips had a sizeable advantage. Not so, says Intel, and it has the benchmark tests to prove it.

Calxeda launched its quad-core EnergyCore ECX-1000 server processors in November, firing off the first real salvo in an escalating server front between the ARM and Intel X86 architectures. Both processor families are at war on a number of fronts in the IT racket right now, including smartphones and tablets and maybe someday servers and PCs, too.

The ECX-1000 chips are based on the 32-bit Cortex-A9 processor designs from UK-based ARM Holdings. Four cores running at 1.1GHz or 1.4GHz are packed onto a die along with 4MB of L2 cache, four PCI-Express 2.0 peripheral controllers, a DDR3 memory controller, a SATA 2.0 disk controller, and a very clever distributed Layer 2 fabric switch and related Ethernet ports.

Four of these chips and four memory slots are put onto a single card. The fabric switch links them together through three ports and the remaining five ports can be used to link together as many as 1,024 of these quad-socket processor cards into a baby cluster. The ECX-1000 processors and server cards are being sold by Hewlett-Packard in its Redstone systems and Boston Computing in its Viridis machines. Calxeda is very excited about pushing the ARM architecture into the data centre and against Intel's X86 offerings.

Maybe a little too eager.

As El Reg previously reported, Calxeda was touting to analysts and journalists back in late June and early July that servers based on its ECX-1000 processors smoked Intel Xeon E3 processors on the Apache Bench web serving test. The comparison, says Dave Hill, who heads up server marketing at Intel, was unfortunately an apples-to-oranges one, and Chipzilla has run some tests to set the record straight, or rather a little straighter.

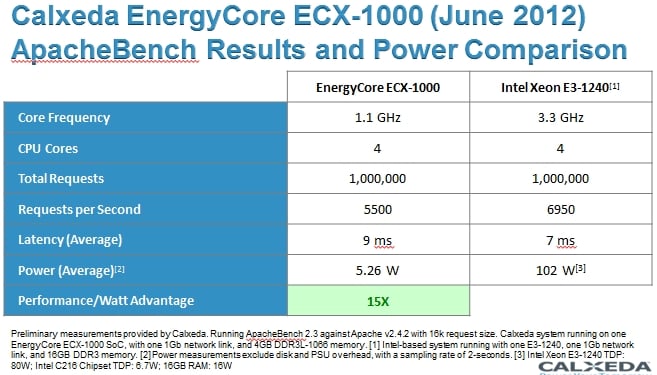

When Calxeda ran the Apache Bench test on its server card, it used a static 16KB file size and did the same thing on the Xeon E3 server. Here was the comparison that the company floated out:

Calxeda's server card has four processors, so it normalised the results for a single socket, which was fine since it wanted to show a socket-for-socket comparison with ECX-1000 and Xeon E3 processors with four cores each.

The ECX-1000 socket was equipped with 4GB of main memory and used a Gigabit Ethernet port to link to the outside world that was hammering the Apache web server, which ran on top of Linux on both machines. The ARM server handled 5,500 requests per second with an average latency of 9 milliseconds; the node, including its 4GB low-voltage memory and a Gigabit Ethernet link, consumed 5.26 watts measured at the wall. Power measurements excluded disks and power supply overhead – that was the first problem.

To gin up a comparison to Intel, Calxeda chose a single-socket server based on Chipzilla's Sandy Bridge Xeon E3-1240 processor. These Sandy Bridge E3-1200 processors were what Intel had available at the time that Calxeda did these tests earlier this year, but they were replaced in May with the Ivy Bridge Xeon E3-1200 v2 singlers.

That was problem number two with the Apache Bench comparisons, and problem three is that Calxeda chose a standard 80 watt part instead of a lower-voltage part that was optimised for performance per watt. El Reg pointed this out at the time, and also noted in the fine print that Calxeda did not measure Xeon power at the wall, but rather used thermal design point (TDP) maximum power draw ratings for processors, disks, and memory.

Also, the Calxeda figure did not include the power drawn by power supplies and fans (and neither did the Intel figure), and it did not include the power drawn by a hard drive, either.

Finally, the Intel Xeon setup was not CPU constrained, but rather I/O constrained, and could have done more work if it was configured with a faster 10 Gigabit Ethernet adapter. Even Calxeda admitted that the Xeon E3 it tested was only running at 14 per cent of CPU capacity. While adding a fat 10GE card is not as elegant as the ECX-1000 setup, as El Reg suggested back in July, adding that 10GE adapter to the server does indeed boost performance significantly on the Apache Bench test.

Dearth of benchmarks in new world of data centre-class ARM

That was a lot of stuff to pick on, and Calxeda is not happy about this. It just goes to show you that audited, fair, industry-standard benchmarks serve the industry, and every effort should be made to use them as ARM machines make their way into the data centre. The problem we have now is that there are so few machines and no one has run the basic benchmark tests on them yet. Apache Bench is less than ideal, but it is what we have to work with here in the outside world at the moment.

Hill says that rather than picking the $250 Sandy Bridge E3-1240 part, which runs at 3.3GHz and has an 80 watt TDP, it would make far more sense to compare the ECX-1000 to a new Ivy Bridge E3-1220L v2 processor, which runs at 2.3GHz and which has a 17 watt TDP. This chip runs a lot cooler and only costs $189, too. So Intel built a microserver using this chip and ran the Apache Bench test on it, first with a single Gigabit Ethernet adapter and then with a 10GE adapter. Instead of putting 16GB of memory on the server node, Intel thought 8GB was sufficient, and it added one 160GB disk spinning at 7200 RPM to the server as well.

With the Gigabit Ethernet link, this Intel microserver was able to process 7,212 Apache Bench requests per second and the wall power was measured at 35.2 watts during the test, which works out to 205 requests per second, per watt.
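A quick back-of-the-envelope check suggests why the Gigabit link is the bottleneck here. The sketch below multiplies the request rate by the 16KB file size to estimate payload bandwidth. It assumes 16KB means 16 × 1,024 bytes and ignores HTTP headers and TCP/IP framing, neither of which is spelled out in the test descriptions, so real wire traffic would be somewhat higher. The function names are illustrative only.

```python
# Rough payload bandwidth implied by an Apache Bench result.
# Assumptions (not from the vendors): 16KB = 16 * 1024 bytes, and protocol
# overhead (HTTP headers, TCP/IP framing) is ignored.

FILE_BYTES = 16 * 1024          # static file size used in the tests
GIGABIT_BPS = 1_000_000_000     # nominal Gigabit Ethernet line rate


def payload_mbps(requests_per_sec, file_bytes=FILE_BYTES):
    """Return payload throughput in megabits per second."""
    return requests_per_sec * file_bytes * 8 / 1_000_000


def link_utilisation(requests_per_sec, link_bps=GIGABIT_BPS):
    """Return the fraction of the link consumed by payload alone."""
    return payload_mbps(requests_per_sec) * 1_000_000 / link_bps


for name, rps in [("Xeon E3-1220L v2, GigE", 7212), ("ECX-1000, GigE", 5500)]:
    print(f"{name}: {payload_mbps(rps):.0f} Mbit/s payload, "
          f"{link_utilisation(rps):.0%} of line rate")
```

Under those assumptions the Xeon's 7,212 requests per second already account for roughly 945 Mbit/s of payload, which leaves very little headroom on a Gigabit port, while the ECX-1000's 5,500 requests per second come to roughly 721 Mbit/s.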

And as we all expected, putting a 10GE card in the Xeon E3 server did indeed raise the heat, but it raised the performance a lot more – by nearly a factor of five, in fact. With the faster Ethernet NIC, the Ivy Bridge Xeon E3 microserver was able to process 35,624 Apache Bench requests per second, and it burned 56.9 watts as the test was running. When you do the math, that is 626 Apache Bench requests per second, per watt, more than three times better bang for the watt than a Xeon E3 that is network-constrained.
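For anyone who wants to check Intel's sums, the performance-per-watt figures are simply requests per second divided by watts at the wall. A minimal sketch using only the numbers quoted above (the helper name `requests_per_watt` is made up for illustration):

```python
# Performance per watt for the two Intel microserver configurations,
# using the throughput and wall-power figures Intel reported.

def requests_per_watt(requests_per_sec, watts):
    """Return Apache Bench requests per second, per watt."""
    return requests_per_sec / watts


gige = requests_per_watt(7212, 35.2)      # Gigabit Ethernet configuration
ten_ge = requests_per_watt(35624, 56.9)   # 10GE configuration

throughput_gain = 35624 / 7212            # how much more work the 10GE box does
power_gain = 56.9 / 35.2                  # how much more power it burns
efficiency_gain = ten_ge / gige           # net change in requests/sec/watt

print(f"GigE:  {gige:.0f} requests/sec/watt")
print(f"10GE:  {ten_ge:.0f} requests/sec/watt")
print(f"Throughput x{throughput_gain:.2f}, power x{power_gain:.2f}, "
      f"efficiency x{efficiency_gain:.2f}")
```

Throughput rises by a factor of about 4.9 while power rises by a factor of about 1.6, which is where the roughly threefold efficiency gain comes from.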

Without the overhead of the power supply (which only runs at 90 per cent efficiency or so), the fans in the chassis to keep the node cool, and the disk drive, the ECX-1000 microserver did 5,500 requests per second at 5.26 watts measured at the wall, which works out to 1,046 requests per second, per watt. That's about 67 per cent better performance per watt than the Xeon E3 v2 with a 10GE port and about five times better than the Xeon E3 v2 with only a Gigabit Ethernet port. But this comparison is still not exactly fair, says Hill.

If you add 6 watts for the hard drive in the Calxeda server, then you are up to 11.26 watts, and with overhead from voltage regulators, power supplies, and fans added in, the power at the wall for an ECX-1000 could be a few watts higher. Intel is not going to venture a guess there, since it has not seen the Calxeda iron itself. But, says Hill, even if you assume 11.26 watts for the ECX-1000 socket, then the Calxeda ARM server is doing around 489 Apache Bench requests per second, per watt, and the low-voltage part plus the faster networking option gives Intel's Xeon E3 v2 a 28 per cent performance per watt advantage over the ARM server.
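Since nobody outside Calxeda knows the real wall power of an ECX-1000 node with a disk, fans, and power supply losses, it is worth asking where the break-even point sits. The sketch below is a rough sensitivity check under Hill's assumptions, not a measurement: it holds the ARM node at 5,500 requests per second and works out how many watts it could draw before it falls behind the 10GE Xeon on requests per second, per watt. The function names are hypothetical.

```python
# Sensitivity of the ECX-1000 performance-per-watt figure to added power.
# Assumption: throughput stays at 5,500 requests/sec regardless of the
# extra components; only the wattage in the denominator changes.

CALXEDA_RPS = 5500
CALXEDA_BASE_WATTS = 5.26
INTEL_10GE_RPS_PER_WATT = 35624 / 56.9


def rps_per_watt(requests_per_sec, watts):
    """Return requests per second, per watt."""
    return requests_per_sec / watts


def break_even_watts(requests_per_sec, target_rps_per_watt):
    """Return the power draw at which a node just matches the target efficiency."""
    return requests_per_sec / target_rps_per_watt


adjusted_watts = CALXEDA_BASE_WATTS + 6.0          # Hill's 6 watts for a disk
adjusted = rps_per_watt(CALXEDA_RPS, adjusted_watts)
intel_edge = INTEL_10GE_RPS_PER_WATT / adjusted - 1
limit = break_even_watts(CALXEDA_RPS, INTEL_10GE_RPS_PER_WATT)

print(f"ECX-1000 at {adjusted_watts:.2f} W: {adjusted:.1f} requests/sec/watt")
print(f"Intel 10GE advantage: {intel_edge:.0%}")
print(f"Break-even power for the ARM node: {limit:.1f} W")
```

On these numbers the adjusted ARM figure comes out at about 488 requests per second, per watt, within rounding of the figure Hill quotes, and the break-even point lands at about 8.8 watts. Put another way, the ECX-1000 node keeps its lead only if disk, fans, and power supply losses together add less than about 3.5 watts.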

Of course, Calxeda could pump up to its 1.4GHz chip and also move to a 10GE interface, which is supported with that distributed Layer 2 fabric, and also boost the performance of its socket – provided it is not CPU constrained, of course.

What Calxeda and any other server chip upstart (like Applied Micro or Tilera) really need to do is run some industry standard SPEC tests on their machines. SPEC CPU2006, SPECjEnterprise2010, and SPECpower_ssj2008 seem like good places to start. Then they need to do some real web serving and Hadoop benchmarks, which is where ARM and Tilera chips will get traction first in the server racket. And perhaps most importantly, the tests have to include the full system cost of the machine running the test and, for horizontal workloads, the cost of a whole cluster of machines, switching included. There is no question that Calxeda is going to have a big, big advantage here because of its integrated switching. This is why Intel has bought up Ethernet, InfiniBand, and supercomputer interconnects, after all.

This is just the warming up before the first round of the server fight, and soon Intel will bring its Centerton Atoms to bear as well. ®