
Inphi: Don't skimp on memory for those virty servers

LRDIMM versus RDIMM benchmark smackdown

The new Xeon E5 processors from Intel pack considerable oomph, but if you want to squeeze the most performance out of them, particularly in virtualized server environments doing real transaction processing and web front-end workloads, you have to remember the oldest bit of advice for the systems racket: don't skimp on the main memory.

And Inphi, which has a vested interest in promoting load-reduced main memory (LRDIMM), has put a fat x86 box through its paces, running a virtualized server stack with an online transaction processing workload that simulates an online store, to show just how much extra work you can get out of a box if you move to endomorph LRDIMM sticks instead of mesomorph RDIMM sticks.

LRDIMM memory replaces the register on a DDR3 memory module with a buffer chip that sits between the memory chips and the memory controller, cutting the electrical load on the memory channel. That lets densely populated channels run at a higher clock speed, which boosts performance. The buffer chip also allows more memory chips to be put on each channel – twice as many, in fact – thereby boosting capacity.

To support LRDIMM memory, however, the on-chip memory controllers on a server chip have to be tweaked – which is why you can't just add it to any old server. Inphi makes the buffer chips used by Samsung, Elpida, Hynix, and Micron to craft LRDIMM sticks, so it wants to show off a bit.

Intel's Xeon E5 processors support LRDIMM, and so do AMD's Opteron 6200 processors – so this is not an Intel-only thing – and it is likely that future Power7+, Sparc T5, Itanium 9500, and Sparc64-X processors will support LRDIMM fat sticks as well. One reason is that LRDIMMs double the memory per socket; the other is that they burn less juice.

Back in January, when AMD was touting its LRDIMM support with the Opteron 6200s, Inphi VP of marketing Paul Washkewicz told El Reg that a 1.35 volt LRDIMM with 32GB of capacity will burn 20 per cent less juice than a 1.5 volt RDIMM with 16GB of capacity.
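Washkewicz's comparison is per stick, and the LRDIMM holds twice as much memory. A back-of-the-envelope Python sketch that normalizes the claim to power per gigabyte, assuming (our assumption, not Inphi's) that the 20 per cent figure covers whole-module power under comparable load:

```python
# Power per gigabyte, normalised so that one 16GB 1.5V RDIMM draws 1.0 units.
# Assumption: the quoted 20 per cent saving applies to whole-module power.
RDIMM_GB, LRDIMM_GB = 16, 32
RDIMM_POWER = 1.0
LRDIMM_POWER = 0.8 * RDIMM_POWER  # "20 per cent less juice"

def power_per_gb(power: float, capacity_gb: int) -> float:
    """Module power divided by module capacity."""
    return power / capacity_gb

ratio = power_per_gb(LRDIMM_POWER, LRDIMM_GB) / power_per_gb(RDIMM_POWER, RDIMM_GB)
print(f"LRDIMM power per GB: {ratio:.0%} of RDIMM")
```

On those assumptions, a gigabyte of LRDIMM draws about 40 per cent of the power of a gigabyte of RDIMM, roughly a 60 per cent saving per gigabyte.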

To see just how much of a performance and performance/watt advantage fat LRDIMMs offered over regular RDIMMs, Inphi commissioned the server performance geeks at Principled Technologies to run the DVD Store version 2.1 (DS2) benchmark on virtualized instances on a four-socket server.

The DS2 2.1 test suite was announced in December 2011 and simulates an online DVD store with a web front-end and a database back-end. It can run on Microsoft SQL Server, Oracle, MySQL, and PostgreSQL databases, and the front end has PHP web pages and C# drivers. DS2 is part of the VMmark 2.0 workload stack that VMware uses to test the mettle of its hypervisor.

In this particular case, Inphi and Principled Technologies loaded VMware's ESXi 5.0 hypervisor on the server and then ran multiple instances of the DS2 test atop Windows Server 2008 R2 SP1 Enterprise Edition and Microsoft SQL Server 2012. Each instance of the DS2 test had a 50GB database.

The DS2 virty benchmark was run on an IBM System x3750 M4 server sporting four E5-4650 processors running at 2.7GHz, each with 20MB of L3 cache on the die. The server had four disks in a RAID 1 array to host the ESXi 5.0 hypervisor. It was connected through an internal controller to two external SAS disk arrays, each holding two dozen 146GB, 10K RPM disks, for a total raw capacity of 7TB.
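The 7TB figure is raw capacity. A quick Python check; the article does not say how the external arrays were configured, so any RAID overhead is ignored here:

```python
# Raw capacity of the two external SAS arrays, before any RAID overhead.
ARRAYS = 2
DISKS_PER_ARRAY = 24  # "two dozen"
DISK_SIZE_GB = 146

raw_gb = ARRAYS * DISKS_PER_ARRAY * DISK_SIZE_GB
# Decimal terabytes, the way drive makers count them.
print(f"{raw_gb:,}GB = about {raw_gb / 1000:.1f}TB")
```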

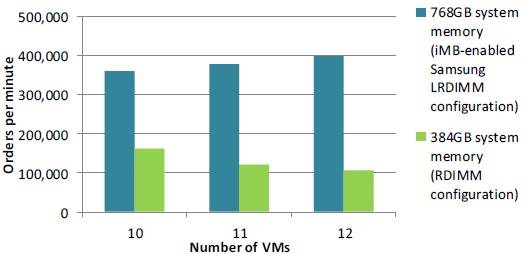

The test was run with 10, 11, or 12 virtual machines, first on a configuration with 384GB of main memory built from 16GB RDIMMs running at 1.33GHz, using 24 memory slots, or half of the total slots in the system. The same tests were then run with those 24 slots filled with 32GB LRDIMM sticks running at the same 1.33GHz speed, for a total of 768GB. Samsung was the memory supplier in both cases.
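The capacity arithmetic, and what it means per VM, as a short Python sketch. It assumes installed memory is split evenly across the VMs and ignores ESXi's own overhead; the article does not say how the VMs were actually sized. On that basis each RDIMM VM gets 32GB to 38.4GB, less than its 50GB DS2 database, while each LRDIMM VM gets 64GB to 76.8GB:

```python
SLOTS_USED = 24  # half of the system's 48 slots

def memory_per_vm(stick_gb: int, vms: int) -> float:
    """Even share of installed memory per VM, ignoring hypervisor overhead."""
    return SLOTS_USED * stick_gb / vms

for name, stick_gb in (("RDIMM", 16), ("LRDIMM", 32)):
    total_gb = SLOTS_USED * stick_gb  # 384GB or 768GB
    for vms in (10, 11, 12):
        share = memory_per_vm(stick_gb, vms)
        print(f"{name} ({total_gb}GB), {vms} VMs: {share:.1f}GB per VM")
```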

Here's how the performance stacked up:

More memory means more work gets done

As you would expect, a machine with twice as much memory gets more work done for any given number of virtual machines. The interesting bit, however, is that as more virtual machines are added, and therefore more load is put onto the four-socket box, the System x3750 M4 setup with the fatter LRDIMM memory keeps boosting the number of operations per second (OPS) on the DS2 test, while the system with the lower-capacity RDIMM sticks does less and less work as you load it up with VMs.

For the DS2 benchmark at least, clearly having more memory per VM is a big boost in performance. And in this case, it might make sense to add another 384GB of RDIMM memory, given the huge price disparity between LRDIMMs and RDIMMs. But that's not what Inphi tested.

In any event, with 10 virtual machines the machine with the fatter memory did more than double the work. With 11 virtual machines, it was more than triple, and with 12 virtual machines it was nearly quadruple. So hooray for more performance. (Again, El Reg wonders what the performance of the System x box would be with 768GB of RDIMM memory with all 48 of its slots using 16GB sticks.)

So the fatter configuration does more work, which is to be expected. But is it worth the money? If you load up an IBM System x3750 M4 with four E5-4650 processors, 384GB of main memory, and a SAS disk controller, you will quickly see why your boss won't let you have one: it costs $42,796.

If you isolate just the processor, memory, and disk controller costs, then with 10 VMs running the DS2 benchmark the RDIMM machine processed 160,035 operations per second, which works out to 26.7 cents per OPS. With double the memory using LRDIMMs, you can drive 361,433 OPS through those VMs, but the box will cost you $82,156, which comes to 22.7 cents per OPS.

That's 15 per cent better bang for the buck, which is great. And as you add more VMs to these two systems, the spread widens: the overhead of running ESXi is much worse on the machine with the skinnier memory, so less work gets done per VM. On the 12 VM portion of the test, the LRDIMM configuration costs 20.7 cents per OPS, while the RDIMM configuration costs 40.4 cents per OPS. That is roughly half the cost per transaction.
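The cost-per-OPS figures can be reproduced from the prices and results quoted above. A Python sketch; for 12 VMs only the rounded cents-per-OPS figures are given, so the throughput backed out of them is approximate:

```python
def cents_per_ops(system_cost_usd: float, ops: float) -> float:
    """Hardware cost divided by throughput, in US cents per OPS."""
    return system_cost_usd / ops * 100

RDIMM_COST = 42_796   # four E5-4650s, 384GB RDIMM, SAS controller
LRDIMM_COST = 82_156  # same box with 768GB of LRDIMM

# 10 VMs: measured throughput is quoted directly.
rdimm_10 = cents_per_ops(RDIMM_COST, 160_035)
lrdimm_10 = cents_per_ops(LRDIMM_COST, 361_433)
saving_10 = 1 - lrdimm_10 / rdimm_10
speedup_10 = 361_433 / 160_035

# 12 VMs: only cents per OPS is quoted, so back out the implied throughput.
# The inputs are rounded, so these OPS figures are approximations.
rdimm_ops_12 = RDIMM_COST / 0.404
lrdimm_ops_12 = LRDIMM_COST / 0.207
speedup_12 = lrdimm_ops_12 / rdimm_ops_12

print(f"10 VMs: {rdimm_10:.1f} vs {lrdimm_10:.1f} cents/OPS "
      f"({saving_10:.0%} saving, {speedup_10:.2f}x the work)")
print(f"12 VMs: ~{rdimm_ops_12:,.0f} vs ~{lrdimm_ops_12:,.0f} OPS ({speedup_12:.2f}x the work)")
```

That puts the LRDIMM box at about 2.26 times the RDIMM box's throughput with 10 VMs and roughly 3.7 times with 12, in line with the "more than double" and "nearly quadruple" figures above.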

What this shows more than anything is that you have to be very careful about processor, memory, and virtual machine configuration with your workloads.

Obviously, you could fill the remaining 24 slots with a second bank of 384GB of RDIMM memory, which would cost $11,016 at IBM list prices and boost the cost of the machine to $53,812. Such a machine may or may not be comparable in performance to the setup using LRDIMMs – El Reg guesses it would be similar, in fact. But then you could double the memory to 1.5TB by putting LRDIMMs in all the slots and get the same spread.
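The list prices in this article are internally consistent, and the break-even arithmetic is easy to reproduce. A Python sketch using only the prices quoted here, including the $2,099 LRDIMM stick price given in the next paragraph; the per-stick RDIMM price is derived by dividing the $11,016 bank cost by 24, our assumption rather than an IBM-quoted figure:

```python
BASE_COST = 42_796        # four E5-4650s, 384GB (24 x 16GB RDIMM), SAS controller
RDIMM_BANK_COST = 11_016  # another 24 x 16GB RDIMMs at IBM list
LRDIMM_768_COST = 82_156  # the tested 24 x 32GB LRDIMM configuration
LRDIMM_STICK = 2_099      # IBM list price of one 32GB LRDIMM
SLOTS = 48

# Option 1: fill all 48 slots with 16GB RDIMMs (768GB).
rdimm_768_cost = BASE_COST + RDIMM_BANK_COST
rdimm_stick = RDIMM_BANK_COST / 24  # implied price, not quoted by IBM

# Throughput multiple the 768GB LRDIMM box needs to match option 1 on cost/OPS.
lrdimm_768_breakeven = LRDIMM_768_COST / rdimm_768_cost

# Option 2: fill all 48 slots with 32GB LRDIMMs (1.5TB).
# Assumption: the base price includes 24 RDIMMs at the implied stick price.
base_no_memory = BASE_COST - RDIMM_BANK_COST
lrdimm_1536_cost = base_no_memory + SLOTS * LRDIMM_STICK
lrdimm_1536_breakeven = lrdimm_1536_cost / rdimm_768_cost

# Price per gigabyte of LRDIMM relative to RDIMM.
per_gb_ratio = (LRDIMM_STICK / 32) / (rdimm_stick / 16)

print(f"768GB RDIMM box: ${rdimm_768_cost:,} (~${rdimm_stick:.0f} per 16GB stick)")
print(f"768GB LRDIMM box must do {lrdimm_768_breakeven:.2f}x the work to break even")
print(f"1.5TB LRDIMM box: ${lrdimm_1536_cost:,}, needs {lrdimm_1536_breakeven:.2f}x")
print(f"LRDIMM costs {per_gb_ratio:.2f}x as much per GB")
```

Swapping 24 RDIMMs at $459 for 24 LRDIMMs at $2,099 reproduces the tested $82,156 configuration exactly, so the quoted prices hang together. The 1.5TB machine comes out at about 2.46 times the cost of the 768GB RDIMM machine, which the text below rounds to 2.5 times.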

With 32GB LRDIMM memory costing $2,099 a stick (more than twice as much per gigabyte as RDIMMs), this is not a cheap option, and it would drive the cost of the base server without storage to $132,532. Such a machine has to do roughly 2.5 times the work of the 768GB RDIMM machine to justify the extra cost of the LRDIMM memory. It also means you need to get steeper discounts on LRDIMM memory. ®