
IBM plunks Power7+ processors into Flex System servers

Adds integrated Storwize storage, upgrades Flex control freak

Big Blue has trotted out the Power7+ processors in its p260 server nodes in the Flex System modular server lineup.

Back in October, when IBM debuted the first servers to use the Power7+ processors, the company put the new 32-nanometer chips in high-end Power 770+ and Power 780+ servers, then said that it would be next year before we saw any more Power7+ systems rolled out. Not so, it turns out.

It looks like customers were not willing to wait until the new year to get Power7+ processors in their Flexy systems – and maybe IBM's fourth quarter revenues were not able to wait, either.

The Flex System p260 node using the Power7+ processor looks physically the same as the two-socket node using Power7 chips that was announced back in April.

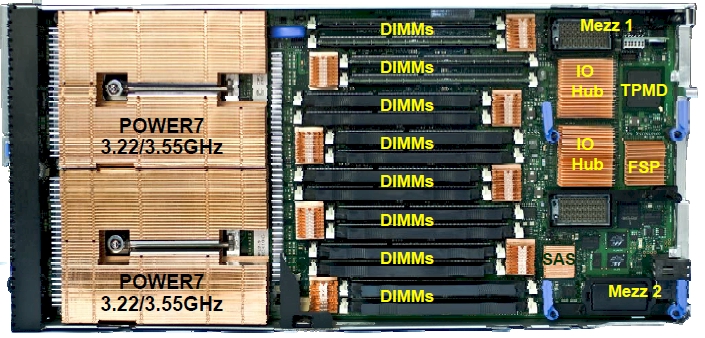

The p260 node has two Power processor sockets in the front with wonking heat sinks, plus eight DDR3 memory slots per socket. Using 16GB memory sticks, the machine tops out at 256GB of main memory – which is not a lot for a two-socket server sporting sixteen cores, but it is probably enough for most workloads.

The lid of the server node has room for two 2.5-inch drives, and if you use them for local operating system or other storage on the node, you have to buy very-low-profile (VLP) main memory that's only available in 4GB or 8GB capacities. Those disks come in 300GB, 600GB, and 900GB capacities. You can slot in two 1.8-inch SSDs with 177GB of capacity instead, and then use regular low-profile DDR3 memory sticks up to the full 16GB capacity that Big Blue supports.

The original p260 node from April (technically known as 7895-22X in the IBM catalog) supported a four-core Power7 chip running at 3.3GHz or an eight-core variant running at either 3.2GHz or 3.55GHz. Processors are installed in the machine in pairs.

With the updated p260 node announced on Tuesday, which is 7895-23X in the catalog, the server node can have two four-core Power7+ chips running at 4GHz or an eight-core variant that runs at either 3.6GHz or 4.1GHz. Relative performance metrics are not yet available for this machine, so we don't know how much extra work those extra clocks and other accelerator features of the Power7+ chip enable the servers to do compared to prior Power7 boxes.

In conjunction with the launch of the Power7+ processor support in the p260 node, IBM is also doubling up the memory capacity in the p260 node (either flavor) or the four-socket p460 (which was also announced back in April and is essentially two p260s side-by-side and linked by an IBM NUMA chipset) by supporting low-profile DDR3 memory sticks in 32GB capacities. The VLP memory capacity has not been increased, so if you go with disks, no extra memory for you.
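As a back-of-the-envelope check on those memory ceilings, here is a minimal Python sketch. The function and constant names are ours, purely for illustration, and it assumes every slot holds a stick of the same size. The p460 figure further assumes that node keeps eight slots per socket, which follows from it being "essentially two p260s" but is not spelled out above.

```python
SOCKETS_PER_P260 = 2   # two processor sockets per p260 node
SLOTS_PER_SOCKET = 8   # eight DDR3 memory slots per socket


def max_memory_gb(stick_gb, sockets=SOCKETS_PER_P260,
                  slots_per_socket=SLOTS_PER_SOCKET):
    """Peak memory in GB if every slot holds a stick of stick_gb gigabytes."""
    return sockets * slots_per_socket * stick_gb


if __name__ == "__main__":
    # 8GB is the largest VLP stick (needed if you use the 2.5-inch disks),
    # 16GB was the old low-profile maximum, 32GB is the new one.
    for stick in (8, 16, 32):
        print(f"{stick}GB sticks: {max_memory_gb(stick)}GB per p260")
    # Assumption: the four-socket p460 also has eight slots per socket.
    print(f"p460 with 32GB sticks: {max_memory_gb(32, sockets=4)}GB")
```

On those assumptions, a disk-equipped p260 stuck with 8GB VLP sticks gets only a quarter of the memory of one using SSDs and the new 32GB sticks.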

The p260 is a single-wide server node and slides into the Flex System chassis; the p460 is a double-wide node. Interestingly, IBM did not upgrade the p460 node with the new Power7+ chips, but that server can use the new 32GB memory.

The p24L node for so-called PowerLinux servers, which have their firmware altered so they can run only Red Hat Enterprise Linux or SUSE Linux Enterprise Server – they cannot boot IBM's own AIX or IBM i operating systems – did not get the new Power7+ processors either. Both will eventually get a processor upgrade.

Given that it is SC12 and IBM is hot to trot to compete with x86-based servers in the infrastructure, big data, and high-performance computing markets with the PowerLinux machines, it would have been a good guess that IBM would start the Power7+ rollout here.

The PowerLinux machines have slightly lower base-system prices, much lower disk-drive prices (35 to 70 per cent lower per GB), and crazy lower memory prices (on the order of five to seven times lower cost per GB) for the exact same features in the plain-vanilla Power Systems machines that are enabled to run AIX and IBM i. That's how badly IBM wants to compete and how much of a threat it sees the x86 server racket to its Power Systems biz.

It would not be at all surprising to see IBM offer the double-stuffed Power7+ sockets it talked about back at the Hot Chips 24 conference in late summer in the PowerLinux machines first – and maybe only in the PowerLinux machines, if the workloads and competitive pressures map out that way.

The p260 node with Power7+ processors will be available on December 3. With 32GB of main memory and two of the four-core Power7+ chips running at 4GHz, the p260 node costs around $12,000.

Drilling into the pricing a bit, IBM wants $8,603 for the pair of four-core 4GHz processors. If you want to go with two eight-core 3.6GHz Power7+ engines, that will run you $15,409 just for the two chips, and two eight-core chips running at 4.1GHz will cost $17,524. It costs $100 to activate each core on the system, which is a lower fee than normal Power Systems machines have. That 1.8-inch SSD with 177GB of capacity costs $4,400 at list price, and a 32GB DDR3 LP memory stick costs $3,200 on this machine.
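To see what those processor options work out to per core, here is a rough Python sketch using only the list prices quoted above. It assumes, and this is our reading rather than anything IBM has confirmed, that the $100 activation fee is charged on top of the chip price and that every core in both sockets is activated. The names are hypothetical.

```python
ACTIVATION_PER_CORE = 100  # dollars per core, from the list prices above

# (cores per chip, clock in GHz) -> list price in dollars for the PAIR of chips
PAIR_PRICES = {
    (4, 4.0): 8_603,
    (8, 3.6): 15_409,
    (8, 4.1): 17_524,
}


def activated_pair_cost(cores_per_chip, ghz):
    """Price of two chips plus activation of all their cores (our assumption)."""
    total_cores = 2 * cores_per_chip
    return PAIR_PRICES[(cores_per_chip, ghz)] + total_cores * ACTIVATION_PER_CORE


def cost_per_core(cores_per_chip, ghz):
    """Activated cost divided by the number of cores across both sockets."""
    return activated_pair_cost(cores_per_chip, ghz) / (2 * cores_per_chip)


if __name__ == "__main__":
    for cores, ghz in PAIR_PRICES:
        total = activated_pair_cost(cores, ghz)
        print(f"2 x {cores}-core @ {ghz}GHz: ${total:,} "
              f"(${cost_per_core(cores, ghz):,.2f} per core)")
```

If the activation fee works that way, the eight-core 3.6GHz option comes out cheapest per core, with the two 4GHz-class parts carrying a premium for clock speed.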

In a related announcement, IBM will be allowing the p260 to be an option in PureFlex infrastructure rack configurations (which include servers, storage, switching, and management software) starting on December 10. Up until now, only the two-socket x240 server node, which is based on Intel's Xeon E5-2600 processors, was available in this preconfigured infrastructure-cloud-in-a-box.

The PureFlex setups can now also be configured with the x220 and x440 server nodes that IBM announced and shipped in August using the low-end Xeon E5-2400 and the high-end Xeon E5-4600, respectively. IBM's announcement letters make it look like these machines are being announced today, but they are not. Go figure.

Slipping the Storwize in

IBM has also made good on its promise of creating a variant of the Storwize V7000 block storage array that can slide into the Flex System chassis and plug into its power supplies, management controller, and integrated networking.

The Flex System chassis is a rack-mounted enclosure that's 10U high and can sport up to six 2,500-watt power supplies. It has room for seven double-wide or fourteen single-wide devices. The internal V7000 takes up the same space as two double-wide server nodes, and has room for two dozen 2.5-inch SAS drives.

The V7000 internal array has two "node canisters," which are subcomponents with controllers, cache memory, and disks hanging off them. (There are also "expansion canisters", which just have disks and hang off the nodes.) Each node canister in the internal V7000 has 8GB of cache, and has 8Gb Fibre Channel or 10 Gigabit Ethernet ports that can be used to link the array to the server nodes in a Flex System through the midplane in the chassis.

IBM is supporting a bunch of different SAS drives in the internal V7000. You can choose from 200GB and 400GB SSDs; 146GB and 300GB disks spinning at 15K RPM; 300GB, 600GB, and 900GB disks spinning at 10K RPM; or 500GB and 1TB disks spinning at 7.2K RPM.

A Flex System V7000 enclosure with the node controllers costs $14,500, and an expansion chassis in which to hang more drives off this controller costs $3,500. Disk drives range in price from $459 to $1,109, depending on capacity and speed. Those SSDs are crazy expensive, at $4,869 for the 200GB unit and $9,369 for the 400GB unit.

Those prices do not include Storwize software. It costs $11,000 for the base Storwize software, with remote mirroring costing an additional $3,000, external virtualization costing $5,500, and real-time compression costing $5,500.
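For a feel of how the hardware and software add up, here is a small Python sketch built from the list prices quoted above. It leaves out the drives and expansion chassis and assumes one software license per enclosure, which is our simplification and not something IBM's pricing confirms. The dictionary keys and function names are made up for the example.

```python
ENCLOSURE = 14_500       # Flex System V7000 enclosure with node controllers
BASE_SOFTWARE = 11_000   # base Storwize software

# Optional software features and their list prices in dollars
OPTIONS = {
    "remote_mirroring": 3_000,
    "external_virtualization": 5_500,
    "realtime_compression": 5_500,
}


def v7000_list_price(options=()):
    """Enclosure plus base software plus the chosen options, before drives."""
    return ENCLOSURE + BASE_SOFTWARE + sum(OPTIONS[name] for name in options)


def dollars_per_gb(price, capacity_gb):
    """Simple cost-per-gigabyte figure for a single drive."""
    return price / capacity_gb


if __name__ == "__main__":
    print(f"Base array, no drives: ${v7000_list_price():,}")
    print(f"With every option:     ${v7000_list_price(OPTIONS):,}")
    print(f"200GB SSD: ${dollars_per_gb(4_869, 200):.2f} per GB")
    print(f"400GB SSD: ${dollars_per_gb(9_369, 400):.2f} per GB")
```

On those assumptions, a fully optioned array carries more software than hardware on the bill before a single drive goes in.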

IBM says that the value of storage is shifting from hardware to software. Another way to interpret it is that hardware prices have fallen over the years and IBM and other storage gear makers are charging for functions that in the past would have been part of – and bundled into, in terms of pricing – much more expensive disk arrays. The upshot is that storage prices have not fallen as far as we might be led to believe, just like server costs have not come down once you add in the now-necessary virtualization layer.

New adapters, switches, and Flex System Manager

The p260, p460, and p24L nodes in the PureFlex system now have a new 10 Gigabit converged network adapter called the CN4058. It has eight ports and costs $3,000. The EN4132 is a two-port 10 Gigabit Ethernet adapter that supports the Remote Direct Memory Access over Converged Ethernet protocol – RoCE in networking lingo. This adapter costs $2,100.

The CN4058 adapter is designed to go through the chassis midplane out to the EN4093 10GE switch in the back of the Flex System chassis, which is also announced today and which comes in two flavors.

The EN4093 switch has fourteen internal and ten external 10GE ports in a base configuration, and can be upgraded with a mix of 10GE and 40GE ports. Specifically, one upgrade gives you fourteen more internal 10GE ports and two external 40GE ports that can be split down to four 10GE ports.

No, it's not sixteen 10GE external ports as you would expect – El Reg will explain in a second.

The other upgrade option adds on another fourteen internal ports with four external 10GE uplinks. The EN4093 has three sets of fourteen internal ports, plus two 40GE uplinks and a dozen of what IBM calls Omni ports, which support either 10GE, or 4Gb/sec or 8Gb/sec Fibre Channel uplinks. This switch costs $20,899, with the port upgrades above costing $10,999 each.

The other new switch is called the EN4093R, which is called a "virtual fabric scalable switch" by IBM. This is basically the same switch, but without the Omni ports that can switch-hit between Ethernet and Fibre Channel. It starts out at fourteen internal and ten external 10GE ports and scales up to 42 internal ports with 22 external ports. The base EN4093R switch costs $13,199, with the upgrade costing $10,999 per jump as above.
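The port math is easier to follow when tallied up. The Python sketch below assumes the EN4093R takes the same two upgrades described earlier, and that each 40GE uplink counts as four 10GE ports when split. That is our reading of the figures, and the class and variable names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PortBlock:
    """One purchasable chunk of switch capacity: the base unit or an upgrade."""
    internal_10ge: int
    external_10ge: int
    external_40ge: int = 0
    price: int = 0

    @property
    def external_10ge_equiv(self):
        # Assumption: each 40GE uplink splits into four 10GE ports.
        return self.external_10ge + 4 * self.external_40ge


BASE = PortBlock(internal_10ge=14, external_10ge=10, price=13_199)
UPGRADE_1 = PortBlock(internal_10ge=14, external_10ge=0, external_40ge=2, price=10_999)
UPGRADE_2 = PortBlock(internal_10ge=14, external_10ge=4, price=10_999)


def totals(blocks):
    """Return (internal ports, external 10GE-equivalent ports, list price)."""
    return (
        sum(b.internal_10ge for b in blocks),
        sum(b.external_10ge_equiv for b in blocks),
        sum(b.price for b in blocks),
    )


if __name__ == "__main__":
    internal, external, price = totals([BASE, UPGRADE_1, UPGRADE_2])
    print(f"{internal} internal, {external} external, ${price:,}")
```

Counted that way, the base unit plus both upgrades lands on the 42 internal and 22 external ports quoted above, at a list price of $35,197 for the fully expanded switch.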

These switch features will be available on November 16.

Finally, IBM has also updated the Flex System Manager control freak that runs on a server node and can span up to four racks (that's sixteen chassis) of Flex System iron, bossing it around and telling it to work more efficiently. Flex System Manager V1.2 will be able to support the new p260 nodes and V7000 internal array as well as bare-metal deployment of Red Hat Enterprise Linux and the KVM and ESXi hypervisors from Red Hat and VMware, respectively. FSM V1.2 supports end-to-end Fibre Channel over Ethernet configuration and supports IBM's own DVS 5000V virtual switch (which El Reg needs to find out more about). The updated FSM V1.2 will be available on December 3. ®