
Why 'slow light' might just save the Internet

Photonics lights a path to a high-speed, low-energy Internet

If we want to keep expanding the performance and the reach of the Internet, we need an inflection point: otherwise, its electricity consumption will become catastrophic.

This isn’t some hippie greenie Luddite concern: even if we ignore the climate question, we need to be able to supply our telecommunications networks with electricity. We also need that electricity to be cheap enough that its cost doesn’t become the constraint on the Internet’s growth.

This Register hack recently had the good fortune to slip into the Australian Institute of Physics’s “Physics in Industry” conference. This meant I was listening to science that wasn’t filtered by political anti-science. In this feature, I’m outlining the research fields discussed by Professor Ben Eggleton of CUDOS.

To date, we’ve been fortunate: the Internet (along with the physical-layer networks that transport the packets around and the data centres that store it and serve it up) is so distributed that the electricity cost is similarly distributed. (Note: for the rest of this article, I am going to use “the Internet” as an omnibus term for everything, from the disk arrays through to the international submarine cable networks to end users with iPhones).

It’s already a fairly standard assumption that the Internet plus the IT industry consumes between three and five percent of the world’s energy budget. More Internet users, more backbone bandwidth, more content, more data centres, more applications – all of these will drive up its power demand. Without a technological inflection point, the very least the industry would have to fear is that it will drive up the cost of the electricity it consumes.

You only need to vary demand by a couple of percentage points to have a noticeable impact on prices. In Australia right now, rooftop solar PV is affecting the wholesale market (as discussed at The Conversation) by suppressing peak prices – and its contribution to national supply is less than the Internet’s contribution to national demand.

If the Internet doubled its electricity appetite, it would have a significant impact on costs throughout the supply chain.

Which is why research laboratories like CUDOS are so keen on identifying the technologies that will let us create faster networks that consume far less power – orders of magnitude less power – than they need today.

The all-optical domain

One of the best ways to cut down networks’ power consumption is to get rid of the power-hungry electronics that does most of the heavy lifting.

We’re only at the beginning of this movement: go inside any data centre, and you’ll still recognise the Ethernet switch, and it (along, of course, with disk arrays and servers) will be working hard to heat up the joint. The more electronics you get rid of, the less power you need (and the less heat you generate).

The very least you need is an input buffer, an all-optical switch, and an output buffer.

At the heart of this is the world of non-linear optics: the constant search for exotic materials that can do strange things with light.

1. Non-linear optics

This all rests on a discovery made shortly after the laser was invented: that light could be made to behave in non-linear ways.

In a normal medium – the vacuum, or air – light is so relentlessly linear that you can “cross the beams” without anything happening. Try it: shine a laser pointer through another laser pointer, and you won’t see any difference in the resulting points once the beams hit something solid.

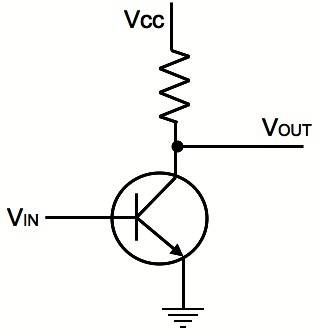

Photons don’t interact with each other. That is the big challenge if you want to build an all-optical amplifier. Let’s look first at a simple transistor amplifier: the low voltage present at the base controls how much of Vcc is presented to Vout.

The simple transistor: we need to reproduce this in light

All of this depends on the interaction of electrons.

In non-linear optics, the aim is to find a medium that allows photons to control other photons in the same way that the semiconductor allows the input voltage to control the output voltage in the transistor.

The right material provides the medium: the incident light interacts with electrons, and those electrons can be used to control the second light beam.

To do this in a way that you could replace an Ethernet switch – with hundreds of ports – you need the device to be small, cheap, and easy to make. As Eggleton told the AIP audience, one of the holy grails of research is to create structures that can be fabricated using standard CMOS lithography.

2. Slow light

It’s easy to slow light down a little: just pass it into a piece of glass at an angle. Refraction comes from light having a lower speed in the glass medium than in free air.

As we know from various experiments and papers, light can be slowed down a lot. If you can create a very exotic state of matter called a Bose-Einstein Condensate, you can bring it pretty much to a dead stop. A Bose-Einstein Condensate is, however, impractical for an Ethernet switch, since it exists only close to absolute zero.

Slow light is useful for a couple of reasons, so a second aim of optics researchers is to find non-linear materials, and configurations of those materials, that slow light down significantly. Eggleton’s CUDOS group is quite pleased with its results – it's getting light down to 70 metres per second.

The obvious application of slow light is in optical memory – buffering the photons by slowing them down.
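To put that figure in perspective, here is a rough back-of-the-envelope sketch in Python. The 70 metres per second comes from the CUDOS result quoted above; the refractive index of 1.5 for ordinary glass and the 1 mm device length are illustrative assumptions, not numbers from Eggleton's talk, and the function names are invented for this example.

```python
C_VACUUM = 299_792_458.0  # speed of light in vacuum, m/s (exact by SI definition)


def slowdown_factor(speed_m_per_s: float) -> float:
    """Return c / v: how many times slower than in vacuum the light travels."""
    if speed_m_per_s <= 0:
        raise ValueError("speed must be positive")
    return C_VACUUM / speed_m_per_s


def transit_time_s(length_m: float, speed_m_per_s: float) -> float:
    """Time light spends crossing a medium of the given length."""
    if length_m < 0:
        raise ValueError("length must not be negative")
    return length_m / speed_m_per_s


# Assumption: ordinary glass with a refractive index of about 1.5.
GLASS_SPEED = C_VACUUM / 1.5
# From the article: the slow-light result of 70 m/s.
SLOW_LIGHT_SPEED = 70.0
# Assumption: a hypothetical 1 mm long buffer structure.
BUFFER_LENGTH_M = 1e-3

if __name__ == "__main__":
    print(f"glass slowdown:      {slowdown_factor(GLASS_SPEED):.2f}x")
    print(f"slow-light slowdown: {slowdown_factor(SLOW_LIGHT_SPEED):,.0f}x")
    print(f"1 mm in vacuum:      {transit_time_s(BUFFER_LENGTH_M, C_VACUUM):.3e} s")
    print(f"1 mm at 70 m/s:      {transit_time_s(BUFFER_LENGTH_M, SLOW_LIGHT_SPEED):.3e} s")
```

Glass slows light by a factor of 1.5; 70 metres per second is a slowdown of roughly 4.3 million. Under these assumptions, a millimetre of slow-light structure holds a pulse for about 14 microseconds, against a few picoseconds in vacuum, which is why it looks attractive as a buffer.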

But there are other benefits that feed back into the previous discussion.

If the photons are moving across a nano-surface slowly, there’s a much greater probability that the photon will interact with the surface. That means you need less of the surface to get the effect you want – which makes it easier to make the features smaller.

And there’s always the chance you’ll get a surprise. While conducting a slow-light experiment in 2009, a researcher saw a flash of light at an unexpected angle – and here, I don’t mean “observed with an instrument”, I mean saw with the naked eye.

Initially, that depressed him because it probably meant he’d damaged the apparatus he was supposed to be testing – but no: what he’d seen was the unexpected generation of visible light at the third harmonic of the slow light (which was in the infrared) in the experimental waveguide. At the third harmonic, the light was green.

What really got the researchers excited was that the emission of light at the third harmonic (that is, the visible light) was demonstrated not in some highly exotic material, but in ordinary silicon.
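The arithmetic behind "infrared in, green out" is simple enough to sketch. Tripling the frequency divides the wavelength by three. The article only says the slow light was in the infrared, so the 1550 nm pump below is an assumption (it is a typical telecom wavelength), and the colour boundaries are approximate conventions rather than anything from the experiment.

```python
def harmonic_wavelength_nm(pump_nm: float, order: int = 3) -> float:
    """Wavelength of the n-th harmonic: frequency times n, so wavelength over n."""
    if pump_nm <= 0:
        raise ValueError("pump wavelength must be positive")
    if order < 1:
        raise ValueError("harmonic order must be at least 1")
    return pump_nm / order


def is_visible(wavelength_nm: float) -> bool:
    """Approximate visible range, taken here as 380 nm to 750 nm."""
    return 380.0 <= wavelength_nm <= 750.0


def is_green(wavelength_nm: float) -> bool:
    """Approximate green band, taken here as 495 nm to 570 nm."""
    return 495.0 <= wavelength_nm <= 570.0


# Assumption: an infrared pump at 1550 nm (not stated in the article).
ASSUMED_PUMP_NM = 1550.0

if __name__ == "__main__":
    third = harmonic_wavelength_nm(ASSUMED_PUMP_NM, order=3)
    print(f"third harmonic: {third:.1f} nm, green: {is_green(third)}")
```

With the assumed 1550 nm pump, the third harmonic lands at about 517 nm, comfortably inside the green part of the visible spectrum.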

3. Broader bandwidth

Which, to demonstrate my command of ironic self-reference, provides a handy segue into a third field of photonics research that has world-changing potential: expanding the capacity of photonic communications by using more wavelengths.

Yes, we already do this – it’s called wavelength division multiplexing – but even so, we’re only using a vanishingly small amount of the available optical wavelengths.

When researchers talk about “broadband photonics” they’re not talking about “can I please haz 100 megabits per second internetz?” to upload cat pictures. Rather, they’re talking about greatly expanding the number of wavelengths we use in the optical spectrum.

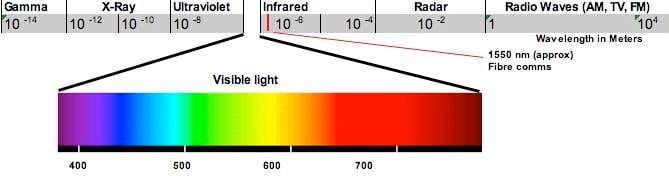

Here’s the whole of the electromagnetic spectrum:

Optical communications is based on the 1530 nm wavelength band – the entire ITU frequency grid for DWDM systems (G.694.1) fits between 1530 nm and 1625 nm – a tiny amount of the near infrared (the vertical red line on the graph above).
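To get a feel for how few channels that window holds, here is a minimal sketch that counts fixed-grid slots between 1530 nm and 1625 nm. It assumes the G.694.1 fixed grid, which is defined in frequency around a 193.1 THz anchor with spacings such as 50 GHz and 100 GHz; the window edges are the figures quoted above, and the function names are made up for this example. Real systems do not necessarily light every slot.

```python
import math

C_M_PER_S = 299_792_458    # speed of light in vacuum, m/s
ANCHOR_GHZ = 193_100       # grid anchor frequency, 193.1 THz


def wavelength_nm_to_ghz(wavelength_nm: float) -> float:
    """Convert a vacuum wavelength in nm to a frequency in GHz.

    c [m/s] divided by a wavelength in nm gives units of 1e9 Hz, i.e. GHz.
    """
    if wavelength_nm <= 0:
        raise ValueError("wavelength must be positive")
    return C_M_PER_S / wavelength_nm


def grid_channels_ghz(short_nm: float, long_nm: float, spacing_ghz: float) -> list[float]:
    """List grid frequencies (GHz) that fall inside the wavelength window."""
    f_high = wavelength_nm_to_ghz(short_nm)   # shorter wavelength, higher frequency
    f_low = wavelength_nm_to_ghz(long_nm)
    n_min = math.ceil((f_low - ANCHOR_GHZ) / spacing_ghz)
    n_max = math.floor((f_high - ANCHOR_GHZ) / spacing_ghz)
    return [ANCHOR_GHZ + n * spacing_ghz for n in range(n_min, n_max + 1)]


if __name__ == "__main__":
    for spacing in (100, 50):
        channels = grid_channels_ghz(1530, 1625, spacing)
        print(f"{spacing} GHz spacing: {len(channels)} channels")
```

Under these assumptions the window holds 115 slots at 100 GHz spacing and 229 at 50 GHz, spread over roughly 11.5 THz of spectrum.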

We don’t use visible wavelengths, because the ubiquitous Erbium-doped optical amplifier, cheap and everywhere, works at the 1530 nm band.

At the moment, that doesn’t matter all that much. After all, experimental technologies are well into the petabit-per-second range.

But imagine a world in which gigabit links are the default customer access connection (in 1982 it was proven to me that users would never need more than 19.6 Kbps modems). In that world, the holy grail will be lots more backbone bandwidth for not much more money or electricity.

More immediately, the simplest approach is to apply techniques learned in the world of radio to the optical domain. Optical waves are, after all, just another form of electromagnetic radiation. If you can use (say) OFDM to expand the capacity of a radio network, it will work just as effectively on an optical network. If you can modulate the angular momentum of a radio signal to expand the channel capacity, you can do exactly the same thing with light. If you can increase the basic symbol rate of a radio wave by using 60 GHz instead of 1 GHz – the same trick will work with light.

All of that holds if, in the case of light, we find the right materials or (as in the case of the slow-light emission discovery) “trick” common stuff like silicon into giving us visible frequencies.

All of this is a look at a future that's quite some way away. Today, processing photons still needs more power than processing electrons. Next, I'll take a look at the kinds of research that have more near-term applications to reducing the Internet's power consumption. ®