Facebook friends bash servers, storage, and racks into bits

Realizing the dream of independently upgradeable subsystems

Open Compute 2013 When you are a company the size of Facebook, you get to dictate the terms of your server acquisitions to your vendors, and a year and a half ago, the social network did a remarkable and unselfish thing and opened up the hardware specs for its first data center and the servers that were built to run in it as the Open Compute Project.

Having scared the living daylights out of tier one server makers, Facebook and Open Compute are now setting forth to take the monolithic server designs that have ruled the data center since they were called systems five decades ago and bash them into bits. Shiny, independently upgradeable bits, if the plan comes to fruition.

The Open Compute Project is hosting its fourth biannual summit in Santa Clara today, and some 1,900 server and data center geeks showed up to see what Facebook and its system friends would do. Facebook is contributing two new server designs as well as discussing a new storage architecture that is the backend of its Facebook Photos application, and other members of the Open Compute community talked about the server and rack designs they have either adopted or modified and contributed back to the community. There's a flurry of activity on that front, as you might expect.

But the big news – albeit news that is a bit esoteric – is what Frank Frankovsky, who is vice president of hardware design and supply chain at Facebook as well as chairman of the Open Compute Foundation, called breaking up the monolith, or disaggregating system design. Basically, it means breaking up the components of a server, a rack, and a data center in such a way that subsystems in the server, rack, or data center can be changed when it is suitable for some specific software need rather than when it is suitable for some chip maker's roadmap.

"One of the biggest challenges today in hardware design is trying to predict where the software is going to be," explained Frankovsky. "Software can change very, very quickly, and in the physical world unfortunately we can't change hardware with a few keystrokes. We actually have to plan materials, do designs, bring up tooling, and bring up manufacturing and supply chain operations."

"So there is an impedance mismatch between the speed at which software moves and the speed at which the configuration of hardware can move. Traditionally, we have been designing servers that have been pretty monolithic. Everything is bound to a PCB, wrapped in a set of sheet metal, that doesn't really allow us good flexibility in the way you match the software to the set of hardware that is going to be applied to it."

Facebook hardware VP and Open Compute chairman Frank Frankovsky

Trying to predict where software will end up in the data center "will drive you crazy," and Facebook does not believe that virtualization is necessarily the best way to drive up the utilization on hardware. In some cases, being able to change elements of the hardware on the fly is a better answer based on the requirements of the workload – and for you motorheads out there, this will always sound like a better answer than paying someone for software.

What Facebook and at least many of its end user customer friends in the Open Compute Project want is what Frankovsky calls smarter technology refreshes. You shouldn't have to change the whole system just to do a processor, memory, or I/O upgrade.

This, of course, cuts against the massive amounts of integration that Intel, Advanced Micro Devices, IBM, and others have done with their processors over the past couple of decades. Processors now include cores for computing, floating point and decimal math units, many levels of cache memory, and now with the Xeon E5 chips from Intel, on-chip PCI-Express controllers. Soon, network interfaces will be moved onto the processors and in many cases in the ARM server processor world, whole switches are being added.

This is not what Facebook wants. In fact, it is the exact opposite, and it will be interesting to see if Open Compute can come up with a truly disaggregated system like I was talking about over four years ago when I first joined El Reg. This is not a new idea, any more than is talk of standardization in the systems racket.

What a modular approach to system components does is make it more expensive to make systems on the one hand – there's no question about that – but what Facebook seems to be arguing is that other elements of a properly designed system may end up with a much longer lifespan – therefore mitigating those costs. In the end, the amount of chippery and bent metal that ends up in the scrap heap could be reduced, and this really matters to the Open Compute Project.

Perhaps as important, explained Frankovsky, is that a modular rather than a monolithic design for servers and racks will allow companies to increase the speed of hardware innovation and do a better job of tuning the hardware to meet the software needs.

First, you don't have to wait until the processor, memory, and I/O technologies all line up to get a new server. And second, if you make a mistake by not adding enough capacity within the system, you can fix it. Or if you add too much, you can swap the excess out to another set of hardware whose software requires it.

You can already do this with memory sticks and disk drives and CPUs to a certain extent, but the capabilities are all intertwined. And that is precisely the way chip makers and system makers like it because a need for any one technology will compel you to upgrade all of them at the same time. In other words, they make more money.

Frankovsky said during his keynote today that the Open Rack design launched at last May's OCP summit was the first step in this disaggregation process. That rack broke free of the 19-inch wide rack standard that has been around since the early days of radio and electronics in the military and went with a new 21-inch rack that is better suited to a world where fat and cheap disks are 3.5 inches wide.

This way, you can get five of them across the front of a server as well as three half-width server nodes. The Open Rack also busted the power supplies out of the servers and put them on three power shelves in the rack, which were then shared by the various compute, storage, and network nodes in the rack.

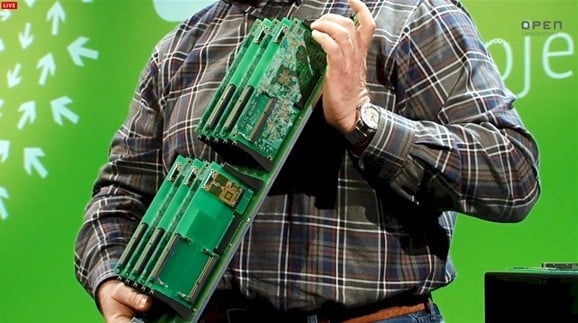

The next step is something called the Group Hug board, and it is a new standard backplane and interconnect for microservers that is based on PCI-Express x8 slots with some tweaked pin outs. "If we had left this to the industry, they would have probably found the most esoteric and expensive interconnect on the planet," said Frankovsky.

Well, maybe not. These Group Hug slots will look familiar to those of you who have followed SeaMicro x86 and Calxeda ARM microserver processor cards, which have a similar architecture. The difference is that the Facebook backplane design has one PCI pin-out per server and the Calxeda and SeaMicro ones have two pin-outs per server.

The Group Hug microserver backplane from Open Compute

Having watched blade servers not be standardized, Facebook is determined that microserver form factors and backplane interconnects will be. In fact, the image above shows five microservers based on the X-Gene 64-bit ARM server processor in one line and five microservers based on Intel's forthcoming "Avoton" Atom S Series chip in the other slots, all sharing the same power, electrical, and mechanical interconnects and all able to be slid into the same microserver chassis.

Like many of us, Frankovsky has been annoyed by the lack of socket compatibility in server motherboards, and the Group Hug backplane – technically known as the Open Compute Common Slot specification – is the best that Facebook can do now to compel some sort of compatibility across microservers that don't just have different vendors, but different processor architectures. But Frankovsky vented his frustration just the same.

"It has always driven me crazy, over many, many years of doing server product design, that we couldn't have a common socket," said Frankovsky. "Why do companies have to design two completely different product lines, one for an Intel socket and the other for an AMD socket? Why is there all of this redundant investment? All of the surrounding bits are the same – you still have DDR3 memory, you still have network controllers and drive controllers."

The silicon photonics interconnect contributed by Intel to Open Compute in action in a rack

The other big news coming out of the Open Compute Summit today was that Intel was contributing silicon photonics cable design and connector specifications to the Open Compute Project in the hopes that these light pipe interconnects will be used to link compute, storage, and networking elements within the rack and across racks to each other.

Intel and a number of other chip makers have been working on silicon photonics – the marrying of lasers and optical cables with electronic chips with the hope of manufacturing both using the same CMOS processes used to make chips – for more than a decade, and Intel CTO Justin Rattner said during his keynote that Chipzilla has cracked the technical problems in manufacturing silicon photonics and will be able to start adding this to systems.

Like Frankovsky, Rattner said that Intel believed in streamlining the rack, getting as much metal out of the boxes as possible and consolidating power supplies. As an aside, Rattner said that back in 1985 he was responsible for building what would have been called a blade server out of 80286/80287 processor cards with eight Ethernet controllers all linked in an n-dimensional hypercube network with 128 blades; this machine went to Yale University for it to monkey around with.

The phase of disaggregation we are undergoing now is to distribute I/O and to separate switching, storage, and other I/O from the systems. (Ironically, with the ARM server chips, these are being brought onto the silicon, not busted up.) The next phase, said Rattner, would be to separate compute and memory from each other, but to link them with very fast and low-latency optical interconnects.

We are not there yet, of course. But Intel is working on it. El Reg will drill down into the silicon photonics and get back to you on precisely what Intel has cooked up. ®