
How to build a BONKERS 7.5TB, 10GbE test lab for under £60,000

Sysadmin Trevor runs the sums, builds a dream rig

Part Two In part one of The Register's Build A Bonkers Test Lab feature, I showed you how to build a test lab on the cheap; great for a home or SMB setup, but what if we need to test 10GbE? Part two is a wander through my current test lab to see how I've managed to pull together enough testing capability to give enterprise-class equipment a workout on the cheap.

A proper enterprise test lab would be based on the same equipment as is used in production. This allows sysadmins to test esoteric configuration issues that can – and do – arise. Virtualisation has made this less of a pressing concern for fully (or mostly) virtualised shops, but it is still generally good practice. That said, we don't all get that luxury; budgets can stand in the way, as can complications arising from corporate mergers. Let's take a look at what I've pulled together.

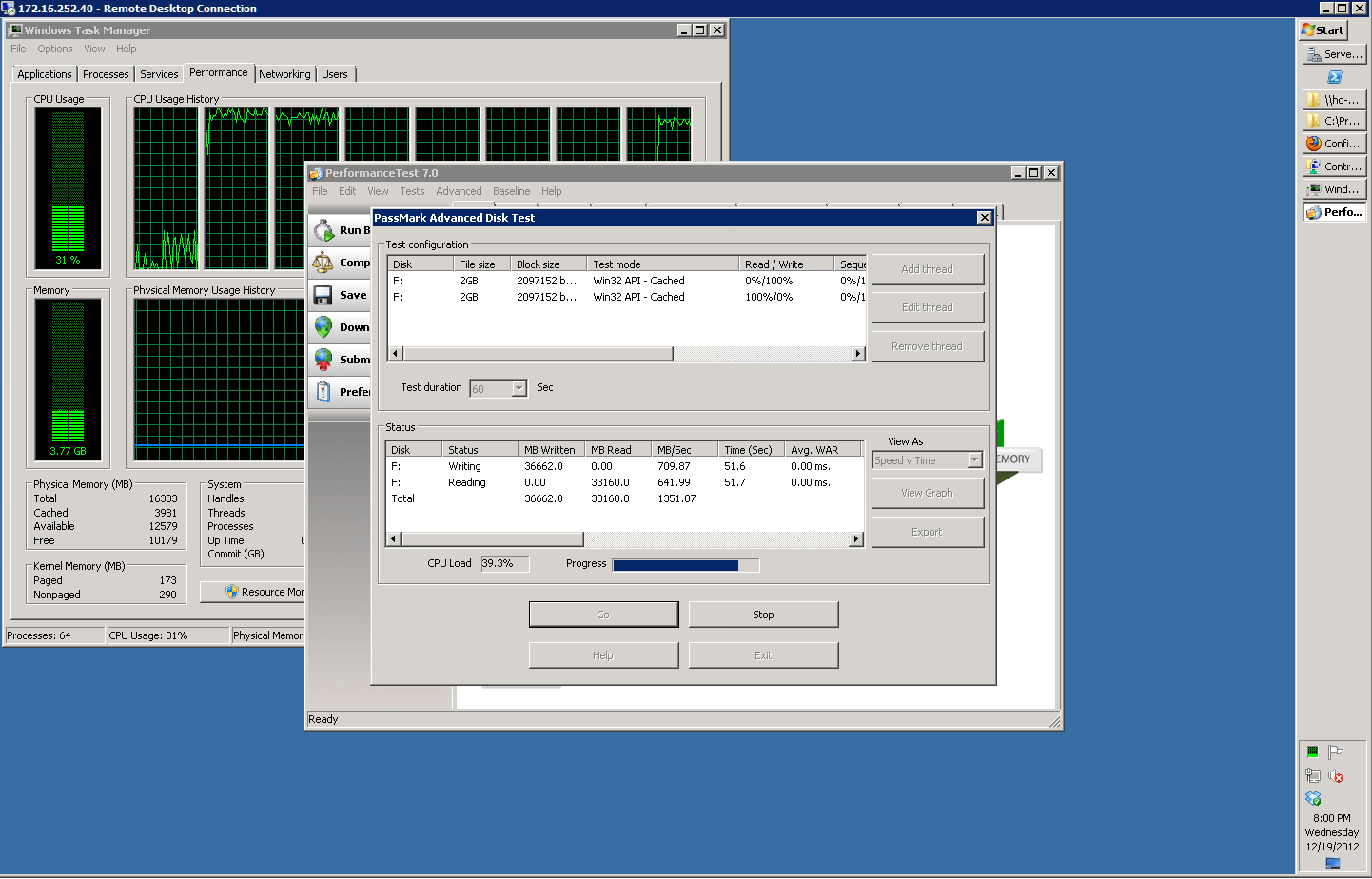

Storage

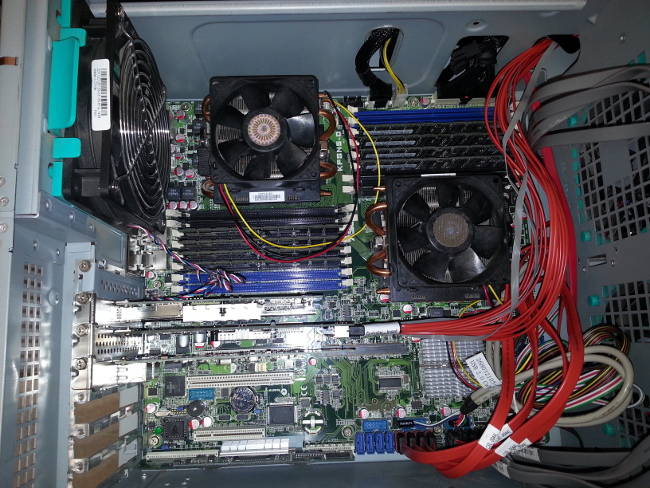

As with most real-world test labs, mine contains equipment that spans various generations. Several of the deployed virtualisation servers populating my client sites are white box systems known internally as "Persephone 3" class units. These servers sport ASUS KFSN5-D motherboards with 2x AMD Opteron 2678 processors each.

Inside a Persephone 3 file server

One of these Persephone 3 servers – sadly, with only 16GB of RAM – serves dual duty as both file server and edge Hyper-V server. It contains an ASUS PIKE card allowing for up to four RAID 1 arrays. The file server also has a Supermicro AOC-SAS2LP-MV8 8-port SATA HBA connected to eight Kingston Hyper-X 3K 240GB SSDs.

The operating system on the file server is Windows Server 2008 R2, though only because I do not have licensing that would allow me to use the vastly superior Server 2012 storage technology. Networking is a pair of Intel-provided NICs: one an Intel 1GbE ET Dual Port, the other an Intel 10GbE X520-2 Dual Port. This gives me the ability to test file and block storage on 1GbE or 10GbE links, configured as either teamed or unteamed so that I can also test the failover and MPIO capabilities of hypervisors, VMs and applications. The onboard NICs serve as management interfaces.

The combination of disk array types gives me some options as well. A Windows RAID 0 of the SSDs will provide enough I/O to saturate a 10 gigabit NIC at full duplex. A Windows RAID 5 of the same SSDs can get to about 900MB/sec in one direction or about 750MB/sec full duplex, and appears to be bounded entirely by the CPU.
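To put those throughput figures in context, here is a rough sketch of how they compare with the nominal 10GbE line rate. It assumes decimal megabytes, ignores protocol overhead, and reads the 750MB/sec full duplex figure as a per-direction number; that reading is an assumption, not something measured here.

```python
def line_rate_mb_per_s(gigabits_per_s: float) -> float:
    """Nominal link speed in decimal MB/s, before any protocol overhead."""
    return gigabits_per_s * 1000 / 8


# One direction of a 10GbE link.
TEN_GBE_MB_PER_S = line_rate_mb_per_s(10)


def utilisation(measured_mb_per_s: float,
                link_mb_per_s: float = TEN_GBE_MB_PER_S) -> float:
    """Fraction of one direction of the link filled by a measured throughput."""
    return measured_mb_per_s / link_mb_per_s


# Throughput figures quoted for the Windows RAID 5 of the SSDs.
raid5_one_way = utilisation(900)
raid5_duplex = utilisation(750)  # assumed to be per direction

# Per-drive rate needed for an eight-SSD RAID 0 to fill one direction of the link.
per_ssd_needed = TEN_GBE_MB_PER_S / 8

print(f"10GbE nominal line rate: {TEN_GBE_MB_PER_S:.0f} MB/s per direction")
print(f"RAID 5 one-way: {raid5_one_way:.0%} of line rate")
print(f"RAID 5 full duplex: {raid5_duplex:.0%} of line rate per direction")
print(f"RAID 0 needs about {per_ssd_needed:.0f} MB/s per SSD per direction")
```

On these assumptions the RAID 5 array leaves a fair amount of the link unused, which is consistent with the CPU rather than the network being the limit.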

The PIKE card's RAID 1 is good for mounting lots of VMs that don't need heavy I/O on big spinning rust arrays. The file server only runs minor VMs locally, but serves up iSCSI LUNs for the rest of the test lab via Microsoft's free iSCSI Target.

My server is housed in a Chenbro SR-107; this gives me eight 3.5" hotswaps for the RAID 1 arrays. I am planning to install the SSDs in two Icy Dock 4-in-1s. Unfortunately, due to lack of stock at the local computer store, at press time I was still reliant on my ghetto "cardboard and duct tape" 8-in-2 mounting solution. The SR-107 allows for an extended-ATX motherboard, which is important for my homebrew file server: without a motherboard that large I would run out of PCI-E slots rather quickly.

Because this is a recycled server, exact costs for my build aren't possible. Assuming your 3.5" hotswaps only contain a pair of 1TB disks for the OS, a new version of this storage node based on a Supermicro X9DR7-TF+, two Intel Xeon E5-2603 processors and 16GB of RAM will cost roughly $2,000 without the SSD array.

The SSD array cost me $1,400 and the network cards can be had for about $550 if you hunt. Windows Server is $750. This puts the total cost of a fast+slow 10GbE homebrew storage server capable of running a fairly large and diverse test lab at roughly $4,700. I can fill up the rest of the hotswap trays with 2TB drives and still keep costs under $5,500.
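The sums above are easy to check. The sketch below totals the quoted prices and works out how much budget is left per drive for the remaining hotswap bays. The count of six free bays is an assumption: it takes the eight hotswaps and subtracts the two 1TB OS disks.

```python
# Component prices in US dollars, as quoted above.
costs_usd = {
    "base server (board, 2x Xeon, 16GB RAM, 2x 1TB OS disks)": 2000,
    "SSD array (8x 240GB)": 1400,
    "network cards (1GbE + 10GbE)": 550,
    "Windows Server licence": 750,
}
total_usd = sum(costs_usd.values())

BUDGET_CEILING_USD = 5500
TOTAL_BAYS = 8
BAYS_USED_BY_OS = 2  # assumption: the 1TB OS pair occupies two hotswap bays

free_bays = TOTAL_BAYS - BAYS_USED_BY_OS
headroom_usd = BUDGET_CEILING_USD - total_usd
max_price_per_drive = headroom_usd / free_bays

print(f"Total: ${total_usd:,}")
print(f"Headroom under ${BUDGET_CEILING_USD:,}: ${headroom_usd:,}")
print(f"Budget per 2TB drive for {free_bays} bays: ${max_price_per_drive:.2f}")
```

In other words, the $5,500 ceiling holds as long as each 2TB drive comes in at roughly $133 or less.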

The net result of this configuration is about 6TB of usable slow storage and about 1.5TB of usable fast storage. I also get two 1GbE management/routing NICs, as well as two 1GbE and two 10GbE test lab-facing NICs, all on a system perfectly capable of hosting a few VMs in its own right.
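One way to arrive at capacity figures like these is sketched below. The layouts are assumptions for illustration only: three mirrored pairs of 2TB drives for slow data storage (leaving the 1TB OS pair out of the count), and the eight 240GB SSDs in a single RAID 5 reported in binary terabytes.

```python
def raid0_usable(drives: int, size: float) -> float:
    """Striping: every drive contributes its full capacity."""
    return drives * size


def raid1_usable(pairs: int, size: float) -> float:
    """Mirroring: each pair yields the capacity of one drive."""
    return pairs * size


def raid5_usable(drives: int, size: float) -> float:
    """Single parity: one drive's worth of capacity goes to parity."""
    return (drives - 1) * size


def gb_to_tib(gigabytes: float) -> float:
    """Convert decimal gigabytes to binary terabytes (TiB)."""
    return gigabytes * 1e9 / 2**40


# Assumed slow tier: three RAID 1 pairs of 2TB drives (OS pair excluded).
slow_tb = raid1_usable(pairs=3, size=2)

# Fast tier: eight 240GB SSDs.
fast_raid0_gb = raid0_usable(drives=8, size=240)
fast_raid5_gb = raid5_usable(drives=8, size=240)

print(f"Slow tier: {slow_tb} TB usable")
print(f"Fast tier, RAID 0: {fast_raid0_gb} GB")
print(f"Fast tier, RAID 5: {fast_raid5_gb} GB ({gb_to_tib(fast_raid5_gb):.2f} TiB)")
```

Under these assumptions the two tiers add up to roughly the 7.5TB in the headline.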

Virtual Hosts

One of my virtual hosts is a recycled Persephone 3 server with 64GB of RAM and a pair of SATA hard drives in RAID 1. If your production fleet is older, such legacy systems can be useful for testing VMs on the same speed of system as you have commonly deployed. Unless you have a spare handy, however, it may not be worth your time. The cost of the RAM is brutal – which is why my file server limps along with 16GB – and the $/flop/watt of operation makes keeping the old girl online increasingly questionable. Even for test labs, OpEx is a real consideration.

Switches and virtualisation nodes

I also have a pair of the "Eris 3" hosts discussed in part one of this series. While I certainly wouldn't call homebrew vPro-based systems "enterprise class" by any standard, the Intel Core i5-3470 processors and 32GB of RAM are more than fast enough for all but the most demanding VMs. They've proven to be solid testbed nodes even for intense database work, which makes them great systems to dedicate to a specific high-IOPS VM, typically a database server.

These Eris 3 nodes are basic units costing about $1,000 apiece and sport a 2-port Intel 1GbE NIC. There remains an open PCI-E slot to add 10GbE NICs as required. While wonderfully cheap, their downside is the space they take up: when my test lab is supposed to fit under my desk, they're great; when I need enough compute to require a rack's worth of power and cooling, they are a terrible plan. Another major downside is that they cannot use ECC registered RAM, preventing me from testing the RAM configurations that are in real-world use in my server farms.

Enter the Supermicro Fat Twin: eight compute nodes in a 4U box. If you only need to front a handful of enterprise-class VMs, you can probably get by on some Eris 3-like nodes. If your required test environment has dozens – or even hundreds – of different VMs the Supermicro's widgetry makes far more sense. The Fat Twin has far better compute density, is rack mountable and is notably more power efficient.

My, my, what a beautiful big Supermicro rack you have

Configuring each node with two Intel Xeon E5-2609 processors (quad-core, 2.4GHz) and 16 8GB DIMMs (128GB total) costs roughly $21,000 for the full eight-node chassis, or about $2,625 per node. This configuration would give the Fat Twin about one and a half times the CPU power and four times the RAM for a little over two and a half times the price per node of my Eris 3 white box specials.
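The per-node comparison can be checked with a few lines of arithmetic. This sketch uses only the figures quoted in this article ($21,000 for eight Fat Twin nodes with 128GB each, and $1,000 for an Eris 3 node with 32GB); the cost-per-GB line is an extra derived figure, not something quoted above.

```python
# Figures quoted in the article.
FAT_TWIN_CHASSIS_USD = 21000
FAT_TWIN_NODES = 8
FAT_TWIN_RAM_GB = 128

ERIS3_NODE_USD = 1000
ERIS3_RAM_GB = 32

fat_twin_node_usd = FAT_TWIN_CHASSIS_USD / FAT_TWIN_NODES
price_ratio = fat_twin_node_usd / ERIS3_NODE_USD
ram_ratio = FAT_TWIN_RAM_GB / ERIS3_RAM_GB

# Derived for illustration: node price divided by installed RAM.
fat_twin_usd_per_gb = fat_twin_node_usd / FAT_TWIN_RAM_GB
eris3_usd_per_gb = ERIS3_NODE_USD / ERIS3_RAM_GB

print(f"Fat Twin per node: ${fat_twin_node_usd:,.0f}")
print(f"Price ratio vs Eris 3: {price_ratio:.3f}x")
print(f"RAM ratio vs Eris 3: {ram_ratio:.0f}x")
print(f"Node cost per GB of RAM: Fat Twin ${fat_twin_usd_per_gb:.2f}, "
      f"Eris 3 ${eris3_usd_per_gb:.2f}")
```

The Fat Twin node costs more in absolute terms, but on these numbers it works out cheaper per gigabyte of installed RAM.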

I will get the opportunity to provide more detail on the Fat Twin option soon. Supermicro is shipping me a Fat Twin with a range of different node configurations; expect a thorough review, including comparisons to both the Persephone 3 and Eris 3 nodes for a variety of computing workloads.