
Experts agree: Your next car will be smarter than you

Google's dream car? Nope. Head-up displays, parking-spot search, 'platoons', and more

Feature

Forget Google's self-driving car, for a few years at least. Today's real action in the computer-meets-car arena is in the development of advanced driver assistance systems (ADAS), as was made abundantly clear at last week's GPU Technology Conference.

"We're not going to find ourselves driving in an autonomous car tomorrow," said Ian Riches of the research and consulting firm Strategy Analytics. Instead, as self-driving capabilities begin to appear, they'll first be used for "repetitive and dull and boring" things such as parking and driving in congested traffic.

"It's the sort of thing you never see in car adverts," Riches said. "Generally it's the handsome guy driving on a mountainous, twisty road, the handsome guy phoning his gorgeous girlfriend, the stuff you see in the marketing videos. That's not what real life is like."

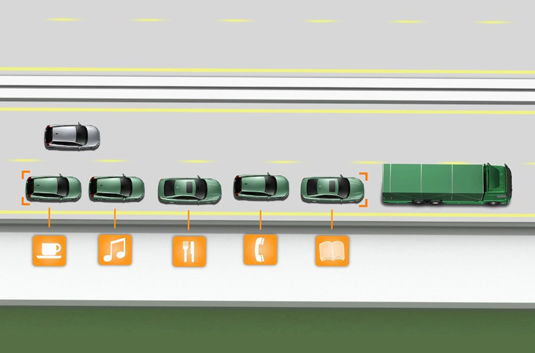

One such repetitive, dull, and boring real-life scenario now under investigation, he said, is the European Commission's "Safe Road Trains for the Environment" (SARTRE) project, in which cars would form a platoon behind a vehicle piloted by a professional driver, such as an 18-wheeler. The following cars would semi-autonomously bunch up behind the lead vehicle in a tight convoy, allowing their drivers to engage in otherwise illegal activities such as texting or chatting away on their mobile phones.

Riches said that one clear advantage of SARTRE is that it would save money. "The guys behind will be saving some fuel," he said, "so that helps. But also, what do you think is cheaper? Doing this or doubling your road capacity?"

Thanks to SARTRE, as C.W. McCall would say, "Mercy sakes alive, looks like we've got us a convoy!"

But before we even get to semi-autonomous cars on our highways, ADAS-enabled vehicles will provide us – and our cars – with road and traffic information, help us park, assist in lane changes, and snap us back to focus should our attention wander from the task at hand: safe driving.

The need for ADAS is especially acute in urban situations, said Audi research engineer Mario Tippelhofer. "We spend a lot of time thinking about how we can improve safety and how can we avoid accidents in urban areas," Tippelhofer said of his team at the Volkswagen Group of America Electronics Research Laboratory in Belmont, California.

"Our approach was to help the driver to be less stressed, more focused, going into those urban areas in a more relaxed manner," he said. "We're trying to paint a vision of what urban mobility can look like for our Audi customers in the near future.

The areas of study that his research group is investigating include prediction of road conditions and congestion, intuitive interfaces for the presentation of information, and other advanced assistance systems.

There's also a need for ADAS to be personalized for each individual driver. "Right now," he said, "your car is mostly generic, for a generic driver. But if this car would be really tailored to your needs, it would know about your needs, it could assist you in a much better way."

Part of what's needed in automotive interfaces, Tippelhofer said, is the ability to provide positive suggestions, not merely negative notifications that something has gone or is about to go wrong. "Right now you have a lot of blinking lights, a lot of warnings in your instrument cluster and infotainment system. But what we really need instead of warning us is helping us to make the right decisions and to stay safe."

Audi's ADAS takes a lot of information from the cloud: traffic status, real-time parking data from sensor-equipped smart meters and parking lots, and weather information, for example. "But we're not only looking at what's happening around us," he said, "we're also looking inside the vehicle."

This personalization covers not only what the driver is doing and focusing on in real time, but also his or her driving patterns and history. Combining the cloud-based information with this driver-specific information, he said, will enable Audi to develop applications to help a driver navigate in what Tippelhofer calls "the urban megacity of the future."

One of those applications, he said, will combine both real-time and predictive parking advice for on-street and off-street parking that will direct a driver to open spots and obviate the need for the all-too-familiar urban "let's go around the block one more time" parking-spot search.

Parking-spot sensors have the obvious advantage of telling an urban parking agency when a meter has timed out, so that it can send a meter maid to that spot to write a ticket, Tippelhofer said. "But as a positive effect they also make that information available to companies like us so that we can see and direct a driver where there is an open parking spot."

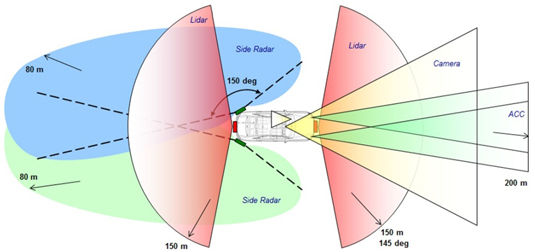

Audi's sensors include radar, lidar, camera, and adaptive cruise-control (source: Audi)

Predictive algorithms will also advise as to whether that parking spot will still be available when the driver reaches it, and drop it from the list of available spaces if it's likely to have been filled. "For example," he said, "I would leave from Palo Alto, going to San Francisco" – a 35-mile drive – "and as you can imagine, the parking-spot situation is going to change a lot by the time I actually get to the city."
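Audi didn't describe how its availability predictions work. Purely as an illustration, here is a minimal Python sketch that assumes each spot has a hypothetical, independently estimated "fill rate" (cars per minute that would take it if it were open) and that the time until someone else grabs a spot is exponentially distributed. Spots whose estimated chance of still being open on arrival falls below a threshold are dropped. The `Spot` type, the rates, and the 0.5 threshold are all assumptions, not Audi's design.

```python
import math
from dataclasses import dataclass


@dataclass
class Spot:
    spot_id: str
    # Hypothetical per-spot estimate: cars per minute that would take this
    # spot if it were open (derived, say, from historical sensor data).
    fill_rate_per_min: float


def prob_still_free(spot: Spot, travel_min: float) -> float:
    """Chance the spot is still open when the driver arrives, assuming the time
    until another car takes it is exponentially distributed."""
    return math.exp(-spot.fill_rate_per_min * travel_min)


def likely_open_spots(spots, travel_min, threshold=0.5):
    """Return IDs of spots likely to still be open on arrival, most likely first."""
    scored = [(prob_still_free(s, travel_min), s.spot_id) for s in spots]
    kept = [(p, sid) for p, sid in scored if p >= threshold]
    kept.sort(reverse=True)
    return [sid for _, sid in kept]


spots = [Spot("A", 0.005), Spot("B", 0.05), Spot("C", 0.02)]
print(likely_open_spots(spots, travel_min=45))  # ['A']
print(likely_open_spots(spots, travel_min=10))  # ['A', 'C', 'B']
```

The longer the drive, the more aggressively the list is pruned: on a 45-minute trip, spot "B" has only about a 10 percent chance (e^-2.25 ≈ 0.105) of still being free, so it disappears from the suggestions, whereas for a 10-minute hop all three survive.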

In addition, the more Audi's ADAS learns about the car's driver, the more it can narrow its suggested parking spots based on the driver's history of choosing spots close to or farther from his or her final destination, while also factoring in the price of parking and the driver's preference for on-street or off-street parking.
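One plausible way to turn that history into a ranking is a simple cost function. The minimal Python sketch below assumes a hypothetical profile learned from past choices (the median walking distance the driver accepted and whether they more often chose on-street parking), then penalizes extra walking, hourly price, and the less-preferred parking type. The types, weights, and penalty are illustrative assumptions, not anything Audi has disclosed.

```python
from collections import Counter
from dataclasses import dataclass
from statistics import median


@dataclass
class Candidate:
    spot_id: str
    walk_m: float         # walking distance from the spot to the final destination
    price_per_hr: float
    on_street: bool


@dataclass
class PastChoice:
    walk_m: float
    on_street: bool


def learn_profile(history):
    """Summarize past choices: typical walking distance and on/off-street preference."""
    typical_walk = median(c.walk_m for c in history)
    votes = Counter(c.on_street for c in history)
    prefers_on_street = votes[True] >= votes[False]
    return typical_walk, prefers_on_street


def rank_spots(candidates, history, walk_weight=0.01, price_weight=1.0, type_penalty=2.0):
    """Order candidate spots by a cost reflecting this driver's habits.

    Walking farther than the driver usually does costs walk_weight per metre,
    each unit of hourly price costs price_weight, and the driver's less-preferred
    parking type adds a flat type_penalty. All weights are illustrative.
    """
    typical_walk, prefers_on_street = learn_profile(history)

    def cost(c):
        extra_walk = max(0.0, c.walk_m - typical_walk)
        type_cost = 0.0 if c.on_street == prefers_on_street else type_penalty
        return walk_weight * extra_walk + price_weight * c.price_per_hr + type_cost

    return [c.spot_id for c in sorted(candidates, key=cost)]


history = [PastChoice(200, True), PastChoice(300, True), PastChoice(250, False)]
candidates = [
    Candidate("garage", 100, 4.0, False),
    Candidate("street_near", 200, 3.0, True),
    Candidate("street_far", 650, 1.0, True),
]
print(rank_spots(candidates, history))  # ['street_near', 'street_far', 'garage']
```

Only walking beyond the driver's usual distance is penalized, so a spot closer than the driver normally parks is never treated as a drawback.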

Predictive modeling will also be used to learn a driver's customary routes to a frequent destination, predict traffic congestion on that route at a specific time or when a traffic-causing event is about to take place, and – without the driver firing up his or her navigation system – reroute the driver when the congestion is bad enough that avoiding it would be more efficient than driving through it, even if the distance traveled might be longer.

"For example, Tippelhofer said, "if you're going to San Francisco and there's a ball game or a 49ers game, there's going to be a big traffic jam around that particular destination. That is known. So we can look into schedules of social events that might affect the traffic flow, and based on our simulations make predictions as to what is most likely the best route for your specific destination."

Navigation cues will also be refined so that they aren't limited to street names and distances. Instead, he said, Audi's ADAS will give landmark-based directions such as "turn left at the Starbucks" or "your destination is two blocks past the red church on the right."

In addition, multiple in-car cameras will keep an eye on the driver, tracking what he or she is focused on and how long he or she looks away from the road (at, say, the car's infotainment system), so that the system can direct the driver's attention back to the road when necessary.

"This needs to be done in a positive human-machine interface," Tippelhofer said, "because we don't want to distract the driver even more if we detect that he's not paying attention."

Not only would the system attempt to gently and non-intrusively suggest to the driver that it might be a good idea to get their eyes back on the road, but it could also kick in an adaptive cruise-control (ACC) system, which would not allow the driver to inadvertently accelerate due to lack of attention, and would keep a safe distance from the car in front.
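The "keep a safe distance" part is commonly expressed as a time gap to the car ahead. The Python sketch below is deliberately simplified: it only computes a speed ceiling from a hypothetical 2-second time gap plus a 5-metre standstill margin, and clamps the driver's requested speed to it while the driver is judged inattentive. Real ACC systems regulate acceleration with a feedback controller; the function names and parameters here are assumptions.

```python
from typing import Optional


def acc_speed_cap(set_speed_mps: float, gap_m: Optional[float],
                  time_gap_s: float = 2.0, standstill_m: float = 5.0) -> float:
    """Highest speed (m/s) that keeps roughly time_gap_s seconds, plus a fixed
    standstill margin, behind the car ahead; never above the set speed."""
    if gap_m is None:  # no vehicle detected ahead
        return set_speed_mps
    gap_limited = max(0.0, (gap_m - standstill_m) / time_gap_s)
    return min(set_speed_mps, gap_limited)


def commanded_speed(driver_request_mps: float, set_speed_mps: float,
                    gap_m: Optional[float], driver_attentive: bool) -> float:
    """While the driver is judged inattentive, requests above the cap are ignored,
    so an unintended press of the accelerator cannot close the gap."""
    if driver_attentive:
        return driver_request_mps
    return min(driver_request_mps, acc_speed_cap(set_speed_mps, gap_m))


# 45 m behind the car ahead with a 30 m/s set speed: the cap is (45 - 5) / 2 = 20 m/s.
print(commanded_speed(35.0, 30.0, 45.0, driver_attentive=False))
```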

Another aspect of driver assistance that the Audi group is investigating is how to help drivers merge into traffic. "Merging onto urban freeways is a very stressful situation," Tippelhofer said, "because there's a very short amount of time to make decisions, and they have to be the right ones."

To ease this stressful process, Audi has equipped its test vehicles with multiple radar and lidar (light detection and ranging) sensors, along with cameras and ACC sensors. "We fuse all this information into a recommendation to the driver, what is the best possible way for you to merge into that spot that is opening up," he said.

This merge-recommendation system can also be personalized, since some drivers are willing and able to merge into a tighter spot than others. Audi's ADAS will learn your preferences and adapt accordingly.
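Personalizing the merge advice could be as simple as adapting a minimum acceptable gap to the gaps a driver actually takes. The minimal Python sketch below assumes the fused radar, lidar, and camera data has already been reduced to a list of upcoming gaps (time until each reaches the merge point, and its length as a time headway). The starting threshold, learning rate, and safety floor are hypothetical values, and the exponential-moving-average update is one simple choice among many, not Audi's method.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Gap:
    opens_in_s: float  # seconds until the gap reaches the merge point
    length_s: float    # time headway between the two cars bounding the gap


class MergeAdvisor:
    """Recommends merge gaps and adapts to how tight a gap this driver accepts."""

    def __init__(self, min_gap_s: float = 3.0, learning_rate: float = 0.2,
                 floor_s: float = 1.5):
        self.min_gap_s = min_gap_s          # starting comfort threshold (hypothetical)
        self.learning_rate = learning_rate  # how quickly the threshold adapts
        self.floor_s = floor_s              # never recommend anything tighter than this

    def record_merge(self, accepted_gap_s: float) -> None:
        """Move the threshold part of the way toward a gap the driver actually took."""
        target = max(self.floor_s, accepted_gap_s)
        self.min_gap_s += self.learning_rate * (target - self.min_gap_s)

    def recommend(self, gaps: List[Gap]) -> Optional[Gap]:
        """Earliest upcoming gap at least as long as this driver's threshold."""
        acceptable = [g for g in gaps if g.length_s >= self.min_gap_s]
        return min(acceptable, key=lambda g: g.opens_in_s, default=None)


advisor = MergeAdvisor()
gaps = [Gap(opens_in_s=1.0, length_s=2.5), Gap(opens_in_s=4.0, length_s=3.5)]
print(advisor.recommend(gaps))  # only the roomier, later gap qualifies at first
```

After a driver repeatedly accepts tighter gaps, the threshold drifts down and the earlier, tighter gap becomes a recommendation, while the floor keeps the system from ever learning an unsafe habit.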