
PowerShell daddy on Windows Server 2012 R2: Cloudy cloud cloud

Control the whole stack and cloud's just an 'OS problem'

TechEd “We don’t spend much time on our virtualisation competitors any more. We’ve moved beyond that,” said Jeffrey Snover, Windows Server and System Center Lead Architect, at a press preview of Windows Server 2012 R2.

“How many of you ever paid for a sorting library? Memory managers? TCP stacks? Now you just get it in the operating system. That is the inevitable progression of our industry. So it will be with virtualisation. Virtualisation was always a stepping stone to something, and that was the cloud,” continued Snover, who co-designed PowerShell, mixing metaphors with abandon.

Despite these remarks, Microsoft’s Hyper-V virtualisation platform is considerably improved in the Server 2012 R2 release, just announced at TechEd in New Orleans. The most intriguing new feature is called a Generation 2 VM (Virtual Machine), offered alongside standard VMs.

Why bother emulating legacy devices, when you can optimise the operating system to run on a VM? “Windows as an operating system now has deep knowledge of what it means to be on a VM. A Generation 2 VM does away with all the pretence of trying to look like a physical computer,” said program manager Ben Armstrong.

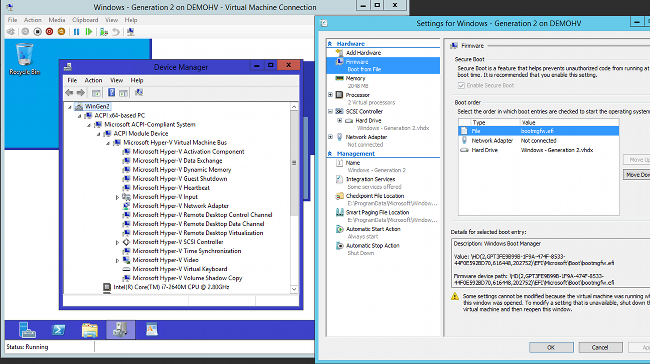

A Generation 2 VM boots with UEFI (Unified Extensible Firmware Interface) off network adapters or virtual SCSI drives, and has no emulated devices. Currently only 64-bit Windows Server 2012 or Windows 8 is supported. Other new features in Hyper-V include a doubling in the speed of live migrations thanks to data compression, support for live migration over SMB, and online resizing of virtual hard drives in VHDX format. You can also do live VM cloning, the idea being that you can export a snapshot of a running VM to troubleshoot a problem offline.

Another neat feature is Remote Live Monitoring, which lets you capture network traffic to a specific VM for analysis from outside the VM. Hyper-V Replica, for continuous availability of VMs, now allows you to choose the replication interval, from 30 seconds up to 15 minutes.

A Generation 2 VM. Note the absence of legacy items like COM ports or PCI to ISA bridge.

In Hyper-V networking, Microsoft is combining two existing features. RSS (Receive-Side Scaling) spreads the processing of incoming packets across multiple CPU cores, and VMQ (Virtual Machine Queue) transfers data directly from the adapter to the VM. Now vRSS (virtual RSS) combines the two.

There are also enhancements to NIC teaming, which combines multiple network adapters into one high-bandwidth and resilient unit, for improved dynamic load balancing. Data deduplication, a feature introduced in Server 2012, is now supported for live VHDs (Virtual Hard Drives). In the context of a VDI (Virtual Desktop Infrastructure), there is substantial potential saving in storage, up to 89 per cent according to Microsoft, though there is a trade-off in increased memory and CPU usage.
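To see why VDI storage deduplicates so well, consider a minimal Python sketch in which several virtual disks share the same base image and differ only in a small per-user portion. It uses tiny fixed-size chunks and a SHA-256 index purely for illustration; the function name, chunk size, and sample data are hypothetical, and the chunking and storage format of the real Windows Server feature are not modelled here.

```python
import hashlib

CHUNK_SIZE = 4  # unrealistically small, chosen only to keep the demo readable


def deduplicate(disks, chunk_size=CHUNK_SIZE):
    """Return (logical_bytes, physical_bytes) after storing each unique chunk once."""
    store = {}    # chunk hash -> chunk bytes, kept once however often it occurs
    logical = 0   # bytes the disks occupy without deduplication
    for data in disks:
        for offset in range(0, len(data), chunk_size):
            chunk = data[offset:offset + chunk_size]
            logical += len(chunk)
            store.setdefault(hashlib.sha256(chunk).hexdigest(), chunk)
    physical = sum(len(chunk) for chunk in store.values())
    return logical, physical


# Three hypothetical desktops built from one base image plus per-user data.
base_image = b"AAAABBBBCCCCDDDD"
disks = [base_image + b"USR1", base_image + b"USR2", base_image + b"USR3"]

logical, physical = deduplicate(disks)
saving = 1 - physical / logical
print(f"{logical} bytes logical, {physical} bytes stored, saving {saving:.0%}")
```

The more desktops share a common image, the larger the saving, at the cost of the hashing and lookup work that accounts for the extra CPU and memory use.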

Linux is getting enhanced support too, with live migration, live backup, dynamic memory support, and dynamic resize of virtual hard drives, provided of course you have the Hyper-V Linux Integration Services in the kernel.

Hyper-V is the plumbing, but Microsoft’s goal is a cloud trinity: three clouds but one cloud. The three are the Azure public cloud, the System Center private cloud, and the hosted cloud from service providers. They count as one cloud because all will use the same technology and management tools.

Windows Azure Pack brings Azure to data centres

Jeffrey Snover, now lead architect for both Windows Server and System Center

That may be the goal, but System Center’s App Controller and Virtual Machine Manager are very different from the Windows Azure cloud management tools. How will Microsoft unify the two?

The answer is that Microsoft is bringing Azure’s technology to enterprise data centres, initially via an add-on called the Windows Azure Pack. This was first released on the quiet in January, as Windows Azure Services, and was only available to hosting companies. The upgraded add-on brings the Azure REST API and web management portal to private clouds with Hyper-V and System Center.

Users will be able to create web sites that scale from multi-tenant to multiple load-balanced VMs, select from a gallery of images to create VMs of various specifications, create SQL Server databases, use a Service Bus for reliable application messaging, and integrate with Active Directory for identity management.

The existence of two different models of cloud, in Azure and in System Center, has long been a puzzle to Microsoft watchers. Convergence is long overdue, so the move is significant.

One of the enablers is that Snover is now lead architect across both Windows Server and System Center, which should ensure a coherent strategy. Snover says that Microsoft treats cloud as an “operating system problem”, emphasising the ability to control the whole stack, in contrast to competitors like VMware or Amazon.

The downside is that System Center admins now have the prospect of yet another new management interface which overlaps with existing functionality. A glance at the installation procedure for the existing Windows Azure Services shows another problem: deploying this stuff is not trivial, and it dumps the complexity of cloud management onto on-premises administrators. Get it all working though, and the end-user experience will be good, as will the flexibility of scaling out to a public cloud when needed.

Puppet-like configuration comes to PowerShell

Automation is key to operational efficiency, and Microsoft has also announced a new feature of PowerShell, its scripting and automation platform, which gives the ability to define and then implement the configuration of Windows Server.

Called Desired State Configuration (DSC), this uses a declarative syntax to express the state of operating system features. Once defined, you can then apply that configuration to target machines. It is idempotent, meaning that you can apply it repeatedly to fix configuration drift.
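The idea of declarative, idempotent configuration can be illustrated with a minimal Python sketch. This is not DSC syntax or any Microsoft API: the function, the dictionaries, and the feature names are hypothetical stand-ins. The point is that the desired state is plain data, and applying it changes only what differs from it.

```python
def apply_configuration(desired, current):
    """Bring `current` in line with `desired` and return the changes made.

    Both arguments map a feature name to True (installed) or False (absent).
    A feature missing from `current` is treated as absent.
    """
    changes = []
    for feature, want_installed in desired.items():
        if current.get(feature, False) != want_installed:
            current[feature] = want_installed
            changes.append((feature, "install" if want_installed else "remove"))
    return changes


# Declarative description of the target state (illustrative feature names).
desired_state = {"Web-Server": True, "Telnet-Client": False}

# A hypothetical server that starts out with the wrong configuration.
server = {"Telnet-Client": True}

first_run = apply_configuration(desired_state, server)   # fixes both features
second_run = apply_configuration(desired_state, server)  # nothing left to do

# Simulate configuration drift: someone removes the web server by hand.
server["Web-Server"] = False
third_run = apply_configuration(desired_state, server)   # repairs only the drift

print(first_run, second_run, third_run)
```

Running the same configuration a second time makes no changes, and running it after drift repairs only the drifted item, which is the property the article describes.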

PowerShell DSC has the potential to bring to Windows Server the kind of configuration management already enjoyed on Linux via systems such as Puppet and Chef. The snag is that it is early days. PowerShell DSC depends on providers being built for each feature, and currently only a dozen or so exist.

Snover (one of the original authors of PowerShell) explained that Windows is API based, whereas Linux and Unix are file based; that difference makes Windows configuration harder to automate and makes this approach necessary. In future, could developers in Visual Studio specify the configuration of target servers as part of their application solution, and automatically deploy it to a private or public cloud with VMs created and configured as needed? “We like that idea, but nothing to announce,” said a Microsoft spokesman.

Microsoft’s cloud focus

The common theme of Windows Server 2012 R2, along with what is coming to System Center 2012 R2, is the focus on virtualisation and cloud. There are what look like solid improvements in Hyper-V, much needed convergence between Azure and System Center, and the beginnings of improved automation with the potential to integrate development and operations on Microsoft’s platform. Microsoft’s direction is clear.

The aim is to unify public and private clouds, enabling common management and seamless interoperability. Questions remain though. If the goal is to use common cloud tools, why not use the open source OpenStack rather than Windows Server?

And are Windows platform administrators ready for major changes to System Center so soon after the 2012 release? The number of new features in Server 2012 R2, just one year later, is impressive, but businesses may be reluctant to go at Microsoft’s speed. ®