Haswell Xeons bring brawn to microservers, media servers, more

Integrated graphics engine dual-purposed for media processing

Computex There are a lot of different ways that Intel could have deployed its 22-nanometer wafer-baking process to cook up the "Haswell" variants of the Xeon E3-1200 v3 processors, but the tactic they chose was to bring the low-power benefits inherent in the Haswell design to bear for entry servers, workstations, and the emerging media-processing system market.

Intel could have ramped up the core count and goosed the throughput of the chip, but as they announced on Tuesday at the Computex shindig in Taipei, Taiwan, they've kept the core count constant and memory addressing the same, focusing on those key markets in which the Xeon E3-1200 v3, as the server variant of the single-socket Haswell chip is called, will be deployed.

"We do not see a lot of requests for a big change in this segment," Dylan Larson, Xeon platform marketing director, told El Reg when asked about busting out of the four-core and two memory stick limit that the Xeon E3s have been at for the past three generations.

"We are watching it and we will respond to requests," Larson said, "but for now, it is rightsized for the power envelope and its targeted workstation, microserver, SMB, and media workloads."

At the moment, Xeon E3 chips, with their relatively brawny cores, are not getting huge uptake among the hyperscale data center operators such as Google, Facebook, and Yahoo. They are similarly not getting adopted by the HPC community, even though the supercomputer builders are notorious cheapskates, as are the hyperscale data center operators.

The issue is not cheap computing and coprocessing, which the Xeon E3-1200 v3 chips certainly offer. It's that their codes have been tuned to run on two-socket x86 processors, and despite the potential density and cost savings they might enjoy – and that AMD has, ironically, demonstrated quite well with its Xeon E3-based SeaMicro microservers – the grief of retuning those apps and building out new infrastructure is larger than the possible benefits.

On new workloads, however, there is a potentially different story unfolding, one that is aptly demonstrated by the vastly better computational power in the Iris integrated graphics processing units and how companies that are doing media transcoding are looking for a better solution than racks of two-socket Xeon E5 servers tricked out with Nvidia or AMD discrete GPU cards.

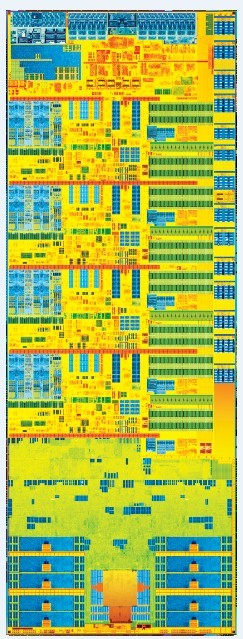

The Haswell Xeon E3-1200 v3 chip is tall and skinny, like your Reg reporter

Media companies in Asia – in China in particular – are keen on building transcoding engines using the Haswell Xeon E3s, says Larson. It is far easier and takes less storage capacity to transcode to a zillion different formats and devices on the fly than to make all possible options and store them. In addition, for live feeds such as breaking news and sports events, you have to transcode on the fly anyway. So companies are looking for cheaper options.

"For media transcoding, we think we have some unique benefits with the Xeon E3-1200 v3 because of the way we built the GPU and done the transcoding," Larson says. "There is a benefit from having the graphics close by the CPU. The media players and software providers in the media industry don't just want to do some transcode that a digital signal processor might do. They want to do ad inserts in streams and disassemble things, and they can do it all close to the CPU and have stunning transcoding performance."

El Reg will do a more in-depth analysis of the performance of the Xeon E3-1200 v3 compared to its predecessor, the "Ivy Bridge" Xeon E3-1200 v2 that came out last March. But generally speaking, the performance bump on integer work for the top-bin Xeon E3 v3 without the integrated HD P4700 graphics is 9.8 per cent, the improvement for floating point and Java work is about 3.6 per cent, and for the low-voltage parts the performance-per-watt increase is 18.6 per cent.

But the performance of the integrated graphics in the server version of the four-core Haswell part is 38 per cent higher than on the Ivy Bridge part it replaces. The new 25-watt Haswell part has 52 per cent better performance per watt than the 45-watt Ivy Bridge part it replaces, and there is a new 13-watt Haswell Xeon E3 that is going to see lots of action in microservers against x86 chips from AMD (specifically the "Kyoto" Opteron X chips announced last week) and the slew of ARM chips that are coming to market early next year.
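For readers who want to unpack that performance-per-watt claim, here is a back-of-the-envelope sketch in Python. It assumes that performance per watt is measured against each part's rated wattage, which is our assumption and not something Intel has stated. The function name `relative_performance` is ours, for illustration only.

```python
def relative_performance(perf_per_watt_gain, new_watts, old_watts):
    """Throughput of the new part relative to the old one (1.0 = equal).

    Assumes performance per watt is computed against each chip's rated
    wattage, which is an illustrative assumption, not an Intel figure.
    """
    return (1.0 + perf_per_watt_gain) * new_watts / old_watts


# Figures from the article: 52 per cent better performance per watt,
# 25-watt Haswell part versus 45-watt Ivy Bridge part.
ratio = relative_performance(0.52, new_watts=25, old_watts=45)
power_fraction = 25 / 45

print(f"Relative throughput: {ratio:.2f}x")
print(f"Relative power:      {power_fraction:.2f}x")
```

Under that assumption, the 25-watt part would deliver roughly 84 per cent of the older chip's throughput while drawing about 56 per cent of the power.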

The Xeon E3-1200 v3 is also expected to get some traction in online gaming and virtual-desktop infrastructure, where a mix of low-cost chippery and reasonably high graphics performance is required. Ditto for video conferencing and various cloud-based media services.

Single socket to me – and AMD

El Reg has already dived deeply into the guts of the Haswell architecture, which Chipzilla first talked about in detail at Intel Developer Forum last fall. You can review that and a follow-up we published on Monday for the nitty-gritty detail on how the chip has new low-power states that allow it to burn a lot less juice than the Ivy Bridge chips did.

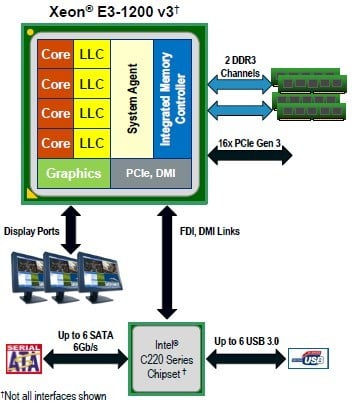

Block diagram of the 'Haswell' Xeon E3-1200 v3 processor

Suffice it to say that the entry server and workstation Xeon E3 chips benefit from Intel's absolute need to use its process technology lead to best advantage, balancing performance and power efficiency to keep its x86 competitors and many impending ARM usurpers at bay.

The Xeon E3-1200 v3 processor has 1.4 billion transistors and has a die that is 177mm² in area. The die is "hot", which means it doesn't slide into a socket like other Xeon chips, but rather is welded directly onto circuit boards like those used in PCs and tablets. We were not able to get the transistor count for the core, the graphics unit, and the uncore regions as we went to press, but we will update this article as that data becomes available.

Each Haswell core has 32KB of L1 instruction cache and 32KB of L1 data cache, as well as an L2 cache memory that weighs in at 256KB. The four cores share an 8MB L3 cache. The Haswell cores sport Advanced Vector Extensions 2 (AVX2) vector math units that have twice the floating point oomph of the Ivy Bridge AVX units. The chip has a fourteen-stage pipeline.
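To put those cache figures in one place, the short Python sketch below adds up the on-die cache for the four-core part. The helper name `total_cache_kb` is hypothetical, and the tally simply sums the capacities quoted above without saying anything about how the caches behave.

```python
KB_PER_MB = 1024


def total_cache_kb(cores, l3_mb, l1i_kb=32, l1d_kb=32, l2_kb=256):
    """Sum the per-core L1/L2 caches and the shared L3, in kilobytes."""
    per_core_kb = l1i_kb + l1d_kb + l2_kb
    return cores * per_core_kb + l3_mb * KB_PER_MB


# Four cores, each with 32KB L1I + 32KB L1D + 256KB L2, sharing 8MB of L3.
full_kb = total_cache_kb(cores=4, l3_mb=8)

print(f"Total on-die cache: {full_kb}KB ({full_kb / KB_PER_MB:.2f}MB)")
```

That works out to 9,472KB, or 9.25MB, of cache across the chip, of which the shared L3 accounts for the lion's share.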

The Haswell Xeon E3 chip has a single memory controller that can drive two DDR3 memory channels, and the memory is tapped out at 16GB per stick. The chip has support for a single PCI-Express 3.0 x16 slot on the die as well, and hooks into the "Lynx Point" C220 chipset through the Direct Media Interface (DMI) link and with the additional Flexible Display Interface (FDI) in those models of the chip that have an integrated GPU fired up. The Haswell Xeon E3 can drive up to three displays, and the C220 chipset can have up to six SATA ports running at 6Gb/sec, and up to six USB 3.0 ports hanging off of it.
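As a rough guide to what those I/O figures mean in bytes, here is a hedged Python sketch that converts the quoted line rates into theoretical payload ceilings. The encoding overheads (8b/10b for SATA, 128b/130b and 8GT/sec per lane for PCI-Express 3.0) come from the public interface specifications, not from Intel's briefing, and the function names are ours. Real-world throughput will be lower once protocol overhead and the shared link between chipset and processor are taken into account.

```python
def sata_payload_mb_per_sec(line_rate_gbps=6.0):
    """Peak payload of one SATA port in MB/sec.

    SATA uses 8b/10b encoding, so 10 bits travel on the wire for
    every 8 bits of data.
    """
    payload_bits = line_rate_gbps * 1e9 * 8 / 10
    return payload_bits / 8 / 1e6


def pcie3_payload_mb_per_sec(lanes):
    """Peak one-direction payload of a PCI-Express 3.0 link in MB/sec.

    Each lane signals at 8GT/sec with 128b/130b encoding.
    """
    payload_bits_per_lane = 8e9 * 128 / 130
    return lanes * payload_bits_per_lane / 8 / 1e6


per_port = sata_payload_mb_per_sec()
six_ports = 6 * per_port
x16_slot = pcie3_payload_mb_per_sec(16)

print(f"One SATA port:   {per_port:.0f}MB/sec")
print(f"Six SATA ports:  {six_ports:.0f}MB/sec (sum of port ceilings)")
print(f"PCIe 3.0 x16:    {x16_slot:.0f}MB/sec per direction")
```

On those assumptions, each SATA port tops out at 600MB/sec and the x16 slot at a little under 16GB/sec in each direction.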

That extra bandwidth on the SATA ports and the integrated PCI-Express 3.0 slot is going to be particularly useful for certain microserver workloads, and those USB ports are necessary for workstation users who can't seem to plug enough peripherals into their machines. (My own workstation could use about five more USB ports.)

The integrated graphics chip on the Haswell Xeon E3 is up to ten times faster when encoding video using the H.264 codec at 1080p and 30 frames per second than the GPU in the Ivy Bridge Xeon E3 chip was. The GPU in the Haswell Xeon E3 can do full hardware encoding for the MVC format used by Blu-ray 3D, as well as the MPEG-2 format. The GPU also supports JPEG and MJPEG hardware decoding.

By the way, the Haswell Xeon E3 chip does not use the high-end "Iris" GT3 GPU that some 4th Generation Core i7 chips are getting, but rather the GT2 GPU that is branded the HD P4600 and P4700.

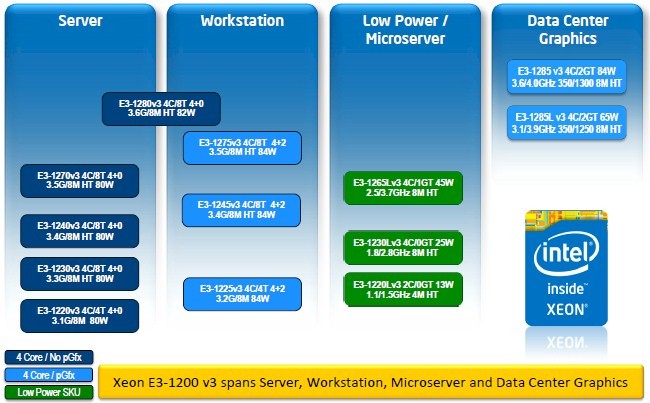

The Haswell Xeon E3 chips are aimed at a lot of different targets

To help software developers make use of these encoding and decoding functions in the Haswell Xeon E3 chips, Intel has cooked up a media software development kit that provides a hardware abstraction layer for the GPU to unify coding for CPU and GPU units.

This SDK supports applications coded for both Windows and Linux, and Intel is promising that if you use this SDK your resulting ceepie-geepie applications will be "future proven" because they will be forward compatible with future Xeon processors with integrated graphics. Larson says that 130 software development companies are using the SDK already, and half of them are building applications specifically for the Haswell Xeon E3 chips.

This is not aimed at HPC – yet

This SDK is not, by the way, designed to turn that graphics unit into a generic floating-point coprocessor. But that could happen. "We are definitely not positioning it that way," says Larson, "but computationally it is a big vector engine to paint pixels."

So, make your mischief where you will, techies.

There's a Xeon E3-1200 v3 for just about any single-socket box you can imagine

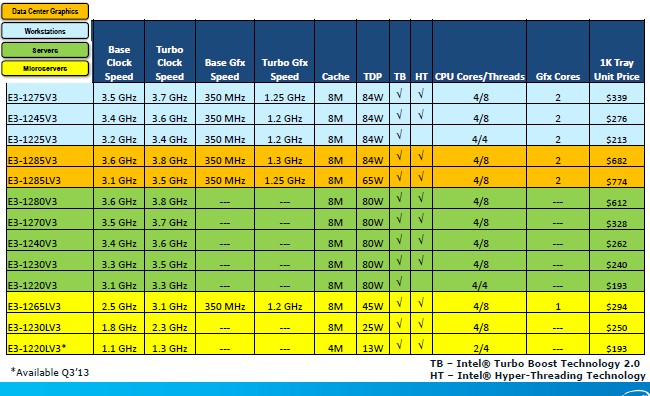

There are thirteen Xeon E3-1200 v3 processors, and generally speaking, the standard SKUs are priced about the same as their Ivy Bridge predecessors. As usual, Intel is charging a premium for top-bin and low-voltage parts in each of the four slices of the product line it is addressing. Some of the chips have HyperThreading, which is Intel's implementation of simultaneous multithreading, on the cores and some do not.

The E3-1265L is a low-volt part with one graphics core, while the remaining parts aimed at data-center graphics (VDI, media transcoding, and so forth) and workstations have two cores. All of the chips have Turbo Boost enabled on the Xeon cores, which lets them jump up their clock speeds if there is thermal headroom to do so.

Twelve of the chips are available now, but the 13-watt E3-1220L that will be particularly interesting for microservers is not going to be available until the third quarter. That chip only has two cores and four threads enabled, and has half of the 8MB of L3 cache memory disabled as well, which is how it is getting its wattage down.

Anyone ready for a four-core 15-watt Xeon E3-1200 v4 implemented in 14-nanometer tech? ®