IBM forges NextScale servers for HPC and hyperscale cheapskates

As vanity free as Big Blue can get with server clusters

IDF13 Concurrent with Intel's launch of the "Ivy Bridge-EP" Xeon E5-2600 v2 processors in San Francisco today, Big Blue trotted out its most vanity-free machines to date, the NextScale line.

The new systems are designed specifically to compete with low-cost, stripped-down alternatives from Hewlett-Packard, Dell, Silicon Graphics, and Super Micro, as well as with vendors pushing boxes based on specs from the Open Compute Project, which Facebook started more than two years ago.

The NextScale machines bear some resemblance to other dense, minimalist server designs out there on the market based on half-width server nodes, and they will eventually take the place of the iDataPlex machines that Big Blue rolled out in May 2008.

The iDataPlex systems were created to chase supercomputing and service provider customers that were looking to pack more computing into a smaller space in the data center.

While they have been successful, particularly among some large supercomputing centers, the iDataPlex racks are non-standard size, based on server nodes that are 15 inches wide instead of 19 inches; the rack also has two columns of machines side by side by default, for a maximum of 84 machines, in a rack that is not only skinnier but also shallower than the standard rack.

A more compact rack is, in theory, a good thing, but racks are a standard size for a reason and anything non-standard always has a hard time. And so IBM is going with standard 19-inch server racks with the NextScales and is boosting the density by going with half-width, full-depth server nodes, as nearly everyone else building hyperscale servers is doing these days.

The iDataPlex is going to be around for quite a while yet, just as IBM is still selling BladeCenter blade servers even though its Flex System modular machines have been out for a year and a half. Gaurav Chaudhry, marketing manager for the System x hyperscale computing division, tells El Reg that IBM will probably keep selling the iDataPlex machines for another 18 months or so.

One of the reasons is to support existing accounts with iDataPlex machines. Another is that IBM has not yet integrated water cooling into the NextScale systems, as has been available for the iDataPlex machines for quite some time.

But there is a plan to bring water cooling blocks and pipes to the NextScale line for customers who want to use the fastest and hottest processors: quite possibly some Power chips from IBM, and probably also fat GPU coprocessors from Nvidia. Chaudhry was mum on any specifics.

Unlike the BladeCenter and Flex System machines, but like the iDataPlex before it, the NextScale machines do not have a midplane that server nodes snap their I/O ports into or a backplane that meshes together servers, storage, and network switches and passthrough modules, all under a single management framework on a per-chassis (or multi-chassis) domain.

The kinds of parallel applications run by HPC centers, public cloud operators, and enterprises setting up private clouds do not always need all of the reliability and management features of these full-on, enterprise-class machines.

The idea behind modern apps is that they scale across multiple nodes and have their RAS features inherent in the software layer, and thus there is no technical reason to have so many management controllers, power supplies, fans, and other components in the box. The machine is stripped down to the bare essentials to be a node in a cluster with nothing fancy schmancy that adds cost.

IBM designed the NextScale chassis and its power and cooling systems at its development lab in North Carolina, but the boxes are built in its Shenzhen, China, factory to get the lowest cost of production possible inside of Big Blue. The server nodes for the machine will also be made in China, and the first server node available for the box was engineered at its Systems and Technology Group design lab in Taiwan.

"We tried to stay away from building a luxury car," says Chaudhry. "This is a high performance race car."

The machines were designed explicitly to take on HP's SL6500 Scalable Systems enclosures with the ProLiant SL230s as well as a number of bespoke machines put together by Dell's Data Center Solutions unit, which has the dominant market share of hyperscale server sales these days.

"We are one-to-one with HP on price," brags Chaudhry. But Dell, Super Micro, and the OCP box forgers are probably the ones that are the more dangerous profit-killing competitors in the hyperscale server sector these days.

That said, if IBM is saying it can meet HP on price, and is offering a more standard computing platform that meets density requirements, this is a big improvement over the past couple of years.

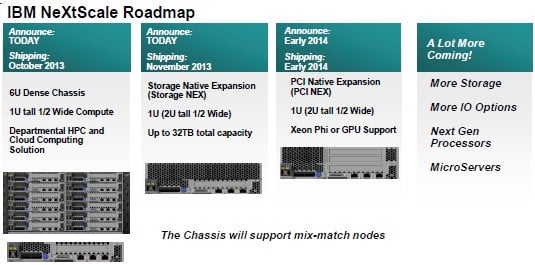

IBM has plans to expand the NextScale line over the next year

That IBM is saying in its presentations to business partners and at Intel Developer Forum that it will be able to put microservers in this NextScale chassis also shows that at least some people inside Big Blue want to try to make some money in this low-end part of the server racket - should it firm up and actually generate a sizable chunk of revenue. IBM has been quiet as the grass growing about microservers for the past three years.

The NextScale starts out with a bare-bones chassis, called the nx1200, and as the name suggests, it has a dozen half-width server nodes in a 6U chassis. Each server node has two sockets, so you can get 24 sockets of Xeon E5 computing into that 6U of space. That works out to four sockets per vertical unit of capacity for the NextScale machines.

IBM's BladeCenter H blade server enclosure could do 14 blades in a 9U enclosure. Because the blades were so skinny, there were some limits on the wattages of the processors that could be put onto the blades. Anyway, that is still only 3.1 sockets per vertical unit of rack space, and you can use anything from 40 to 130 watt Xeon E5-2600 v2 parts in the NextScale box.

The iDataPlex had 84 nodes in two half-depth racks, side by side, which worked out to 168 sockets in roughly the same space as a 42U rack, which works out to four sockets per vertical rack unit.

The Flex System chassis has 14 half-width server nodes in a 10U rack space, with those nodes also being two-socket servers. The math comes to 2.8 sockets per vertical rack unit for the Flex System.

But hold on a second. Remember that IBM announced the double-dense Flex x222 server node for the Flex System a month ago. These are based on the less-peppy Xeon E5-2400 v1 processors, but still, that doubles up the compute density to 5.6 sockets per unit of vertical rack space.

IBM needs to add water cooling to the NextScale to be able to double up the compute density on these machines to 8 sockets per vertical unit, but has made no promises to do so.
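For anyone who wants to check the density math above, here is a minimal sketch that reproduces the sockets-per-rack-unit figures. The class and field names are made up for illustration. Two inputs are assumptions rather than stated specs: the BladeCenter H is treated as holding two-socket blades (which is what the 3.1 figure implies), and the double-dense Flex x222 is modeled as 28 two-socket servers in the same 10U chassis.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Enclosure:
    """Hypothetical record for one chassis or rack configuration."""
    name: str
    nodes: int             # server nodes (or blades) per enclosure
    sockets_per_node: int  # processor sockets on each node
    rack_units: int        # vertical rack space consumed, in U

    @property
    def sockets(self) -> int:
        return self.nodes * self.sockets_per_node

    @property
    def sockets_per_u(self) -> float:
        return self.sockets / self.rack_units


# Node counts and rack heights are the ones quoted in the article.
ENCLOSURES = [
    Enclosure("NextScale nx1200", nodes=12, sockets_per_node=2, rack_units=6),
    # Assumption: two-socket blades, as implied by the 3.1 figure.
    Enclosure("BladeCenter H", nodes=14, sockets_per_node=2, rack_units=9),
    # 84 nodes in roughly the space of a 42U rack.
    Enclosure("iDataPlex", nodes=84, sockets_per_node=2, rack_units=42),
    Enclosure("Flex System", nodes=14, sockets_per_node=2, rack_units=10),
    # Assumption: double-dense x222 modeled as 28 two-socket servers.
    Enclosure("Flex System with x222", nodes=28, sockets_per_node=2, rack_units=10),
]


def density_report(enclosures):
    """Return one formatted line per enclosure, densest first."""
    ranked = sorted(enclosures, key=lambda e: e.sockets_per_u, reverse=True)
    return [
        f"{e.name}: {e.sockets} sockets in {e.rack_units}U "
        f"= {e.sockets_per_u:.1f} sockets per U"
        for e in ranked
    ]


if __name__ == "__main__":
    for line in density_report(ENCLOSURES):
        print(line)
```

Sorting by density puts the x222-equipped Flex System on top, with the NextScale and the iDataPlex tied behind it, which matches the comparison in the text.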

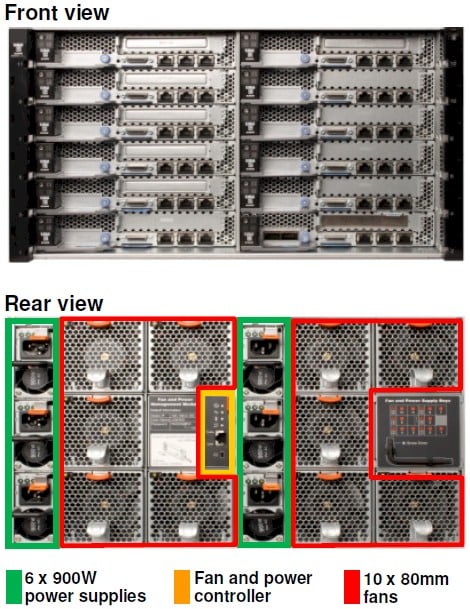

The front and rear views of the NextScale nx1200 chassis

The nx1200 chassis has up to six 600 watt power supplies, which can be set up with full redundancy or failover sparing, and can have up to ten hot swap fans to help keep the components cool. The chassis has a fan and power controller smack dab in the middle of the back.

The server nodes link up to top-of-rack switches, and there are no networking or chassis management controllers in the box. IBM says customers will probably use Platform LSF, Platform HPC, or xCAT to manage the nodes, just like HPC shops do. The chassis will allow customers to eventually mix and match different generations and types of processing nodes with storage and coprocessor nodes.

The Storage Native Expansion, or Storage Nex, module will have 32TB of capacity (eight 3.5-inch SATA drives with 4TB of capacity each) and will include a RAID disk controller, a SAS cable back to a compute node, and hard disk drives in a unit that is 1U high, just like the compute node.

The PCI Native Expansion chassis is also 1U high and it looks like it has room for two Nvidia Tesla GPU or Intel Xeon Phi coprocessors, which snap into riser cards that in turn plug into the nx360 M4 server node.

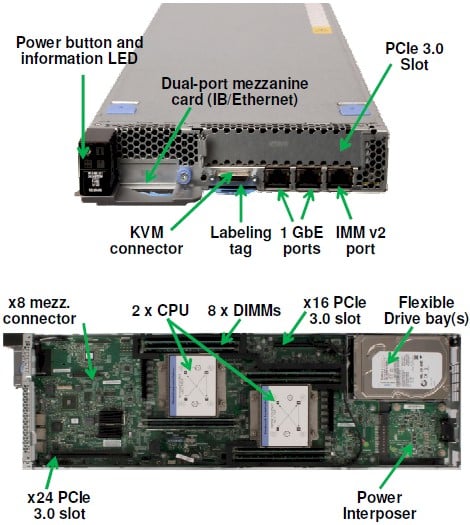

Speaking of which, here's what that nx360 M4 server node looks like:

The NextScale nx360 M4 server node

The nx360 M4 server node has four memory slots per processor socket and currently taps out at 128GB of capacity against those shiny new Xeon E5-2600 v2 processors from Intel. That's enough memory for a lot of workloads, but probably not all of them.

The server node has a single PCI-Express 3.0 slot with sixteen lanes (x16), and interestingly according to the specs, as you can see above, it has another slot rated at 24 lanes (x24). There is also an x8 mezzanine I/O connector for network interfaces, and in this case IBM is offering dual-port InfiniBand (running at 56Gb/sec) or Ethernet (10Gb/sec) options.

The node also has two 1Gb/sec Ethernet ports welded to the board, plus a KVM connector (that is for the keyboard-video-mouse, not Red Hat's server virtualization hypervisor). There is room for one 3.5-inch or two 2.5-inch drives, which can be either SAS or SATA disks. IBM is also sliding in four of its 1.8-inch solid state drives if you want to get flashy.

The nx1200 chassis and nx360 M4 server node will ship on October 28. The Storage Nex expansion module will ship on November 29. The PCI expansion module will ship next year, and IBM is promising more storage, more I/O options, more processors, and microservers.

Chaudhry says that IBM is considering adopting ARM and Power servers for the NextScale line, but has made no promises. A hybrid Power8-Tesla node could get IBM a better seat at the bargaining table for HPC deals where Power compatibility is a big issue and the BlueGene/Q machine is not appropriate.

A loaded-up nx360 M4 server node with two ten-core Xeon E5-2680 v2 processors (running at 2.8GHz and sporting 25MB of L3 cache), 64GB of main memory, two 2.5-inch disks (capacity unknown), and the InfiniBand mezz card costs $7,709.

A base node has two six-core E5-2620 v2 processors, which have 15MB of L3 cache and which run at 2.1GHz, plus 32GB of memory and one 3.5-inch disk with no networking mezz card; it costs $4,049. Red Hat Linux 6, SUSE Linux Enterprise Server 11 SP3, and Microsoft Windows Server 2012 are all supported on the node; so is VMware's ESXi 5.1 hypervisor. ®