Think Amazon is CHEAP? Just take a look at these cloudy graphs...

Reg data analysis uncovers the surprising pricing strategies of clouds

Updated 'Big Data' analysis: Amazon has slurped cloud cash for years, but a recent analysis by El Reg shows that the mega bit-seller charges a surprising premium for some of its services, and is facing stiff competition on price from rivals.

Vulture West's floating cloud bureau was recently roused from a beer-soaked sleep by a phone call from the denizens of the United States of Enterprise IT. "Oh please El Reg," they said, "let us evaluate clouds without the marketing crap, and with a decent amount of data."

After breakfasting on some deep-fried Xeons served on a bed of crunchy analyst reports, we were happy to oblige. We then spent the ensuing weeks slurping data from provider pages to try and figure out where clouds are competitive, where they are weak, and who has the greatest chance of dethroning cloud kingpin Amazon Web Services.

The results hold surprisingly good news for current wannabe cloud-slinger Google, and paint a dismal picture of midsize hoster-turned-cloud-operator Rackspace.

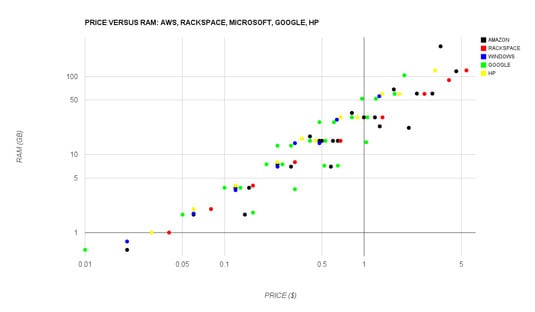

We analysed the compute instances on offer from Amazon, Microsoft, Google, HP, and Rackspace, and compared them both on a price-per-RAM and price-per-vCPU basis. Other comparisons, however, were difficult due to the varying levels of information disclosed by cloud providers.
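For readers who want to run the same kind of comparison themselves, here is a minimal sketch of the per-RAM and per-vCPU normalisation described above. It is not our actual model: the `Instance` class, the provider names, and every number below are hypothetical placeholders, not real prices from any provider.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Instance:
    """One compute instance type as listed on a provider's pricing page."""
    provider: str
    name: str
    vcpus: int
    ram_gb: float
    usd_per_hour: float

    @property
    def usd_per_gb_hour(self) -> float:
        # Hourly price divided by RAM: the price-per-RAM basis.
        return self.usd_per_hour / self.ram_gb

    @property
    def usd_per_vcpu_hour(self) -> float:
        # Hourly price divided by virtual CPU count: the price-per-vCPU basis.
        return self.usd_per_hour / self.vcpus


# Placeholder figures for illustration only. These are NOT real prices.
instances = [
    Instance("ProviderA", "small", vcpus=1, ram_gb=2.0, usd_per_hour=0.08),
    Instance("ProviderA", "large", vcpus=4, ram_gb=16.0, usd_per_hour=0.48),
    Instance("ProviderB", "standard", vcpus=2, ram_gb=8.0, usd_per_hour=0.20),
]

# Cheapest instance on each basis.
best_ram = min(instances, key=lambda i: i.usd_per_gb_hour)
best_cpu = min(instances, key=lambda i: i.usd_per_vcpu_hour)

for inst in instances:
    print(
        f"{inst.provider:10} {inst.name:9} "
        f"${inst.usd_per_gb_hour:.4f}/GB-hr  ${inst.usd_per_vcpu_hour:.4f}/vCPU-hr"
    )
print("Cheapest per GB of RAM:", best_ram.provider, best_ram.name)
print("Cheapest per vCPU:", best_cpu.provider, best_cpu.name)
```

Note that the two rankings need not agree: an instance that is cheap per gigabyte can still be pricey per vCPU, which is why the two bases are compared separately.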

We found that when it comes to RAM pricing, we're seeing the emergence of a fair market with a clear trend line of pricing across providers.

As expected, Amazon has the broadest range of RAM allocations on offer, but it faces stiff competition from Google, which serves up a range of decent instances with varying capabilities. Enterprise-specialist HP is surprisingly competitive as well, though the provider's recent cloud update came with a bevy of severe problems that likely put its cloud out of the running for many serious projects.

Rackspace, meanwhile, offers the lowest amount of RAM available on average, even when factoring in the company's just-launched cloud servers. This may reflect the provider's small scale (around 100,000 servers under management, relative to millions for Amazon, Google, and Microsoft), or perhaps an unwillingness to erode its own margins by competing.

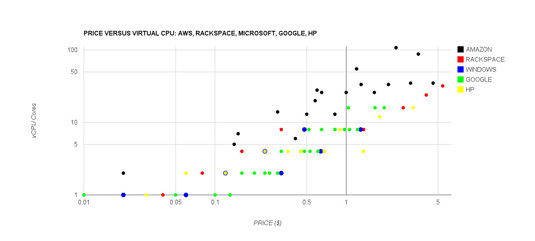

Though RAM is competitive, the market for virtual CPUs is still very much dominated by Amazon, which fields many more instances with far greater capabilities than its competition.

Amazon instances, though, have variable performance properties, and it's an open secret in the cloud industry that after spinning up a swath of instances you may find yourself with a few duff servers that need to be spun down.

As far as current data indicates, Microsoft has not stepped into competition with Amazon here, but Google has. This may reflect a long-term bet by both Amazon and Google that high-performance computing workloads will continue to shift into clouds.

Though Google doesn't field nearly as many instances as AWS, its Compute Engine has by many accounts more sophisticated networking options than does AWS, so this better I/O layer can compensate for the lack of many vCPUs per server.

Rackspace fares better here, though it does charge a premium for vCPUs relative to Amazon. HP and Microsoft closely track each other, with some instances overlapping exactly – perhaps a reflection of the traditional enterprise heritage of both of these providers.

Bootnote

Readers, if you found this data useful, let us know how we can expose it to you in the future – would you like a link to the (Google) spreadsheet we keep this data in, or a live graph, or perhaps regular bi-yearly updates? Let us know. ®

Update

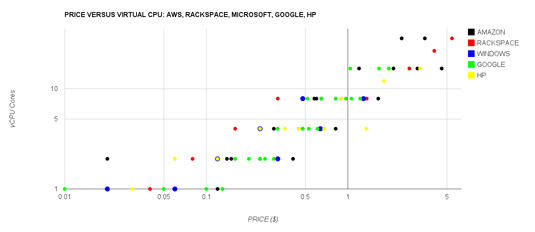

The earlier version of the vCPU graph, above, used Amazon's optimistic "ECU" classifier rather than raw vCPUs. This has been updated, below. The new graph reflects Amazon's continued lead in this area via its 32-core instances, and shows tighter competition between Amazon and Google at the high end, and between Amazon and Microsoft, HP, and Rackspace at the low end.

Though Google just added 16-core instances, Amazon is still ahead with its 32-core flock

As for those of you who have been asking for the data: we're going to update our model, clean it up, and figure out a way to safely expose it to you all.