Google-sponsored research helps ad-slingers find fanbois on social networks

'We noticed you were holding an iPhone at a friend's wedding, need any accessories?'

Next time you post an image of your new El Reg prosthetic beak on the internet, be aware that marketers will soon have a tool to let them spot it, isolate it, and figure out what it's pictured alongside.

In a paper to be delivered in Sydney today, academics from Carnegie Mellon University demonstrate a new approach to identifying brands in images and spotting their associations.

The "Inferring Brand Associations from Web Community Photos" paper goes beyond traditional text-based analysis tools and wades into the nascent field of image recognition and classification.

"With experiments on about five million of images of 48 brands crawled from five popular online photo sharing sites, we demonstrate that our approach can discover complementary views on the brand associations that are hardly mined by traditional text-based methods," they write.

The approach "can detect the regions of brand more successfully than other candidate methods," they say.

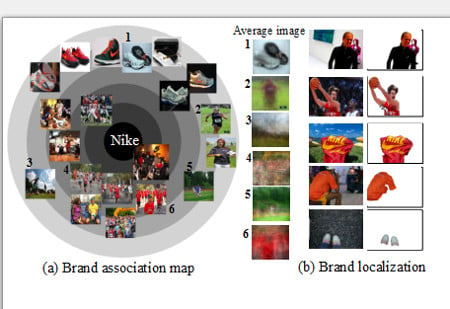

The CMU tech can identify the context in which brands appear, and isolate them

The tech has two key elements. One isolates brands from their surroundings, such as picking out the golden arches of McDonald's lurking in the background of a family photo. The other groups these isolated brands by association.

To gather the data, the scientists trawled Flickr, Photobucket, DeviantArt, Pinterest, and Twitpic for images that had been labelled with one of 48 brands in either metadata or tags.

They then ran these images through an algorithm that took as input a selection of images of each of the 48 brands, which helped them identify various "exemplars": frequently depicted things containing the brand, such as famous football players.

"Given millions of images retrieved by the query Nike, we cluster the images into L number of groups using visual similarity between images. (L is given by a user, ex. L=1000)," CMU researcher and one of the paper's authors Gunhee Kim told El Reg by email. "The exemplar is the cluster center (i.e., most representative image of the cluster). If a cluster is larger (i.e., include more images), it and its exemplar will be ranked higher. (For example, if the Nike set contains 10,000 jogging images and 5,000 soccer images, jogging image will be higher ranked)."
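Kim's description can be sketched in a few lines of Python. This is a rough illustration only, not the paper's method: it swaps in plain k-means for whatever clustering the researchers actually use, treats each image as a made-up 2-D feature vector, and picks as exemplar the member closest to its cluster center. The names `kmeans`, `ranked_exemplars`, and `toy_features` are hypothetical.

```python
from collections import Counter
from math import dist


def kmeans(points, k, iters=20):
    """Plain Lloyd's k-means over tuples; returns (labels, centers)."""
    # Deterministic start: use the first k points as initial centers.
    centers = [tuple(p) for p in points[:k]]
    labels = [0] * len(points)
    for _ in range(iters):
        # Assign each point to its nearest center.
        labels = [min(range(k), key=lambda c: dist(p, centers[c])) for p in points]
        # Move each center to the mean of its members.
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:
                centers[c] = tuple(sum(col) / len(members) for col in zip(*members))
    return labels, centers


def ranked_exemplars(points, k):
    """Return (exemplar_index, cluster_size) pairs, largest cluster first."""
    labels, centers = kmeans(points, k)
    ranked = []
    for c, size in Counter(labels).most_common():
        members = [i for i, lab in enumerate(labels) if lab == c]
        # The exemplar is the member closest to the cluster center.
        exemplar = min(members, key=lambda i: dist(points[i], centers[c]))
        ranked.append((exemplar, size))
    return ranked


# Made-up features: four "jogging" images near the origin,
# three "soccer" images near (10, 11).
toy_features = [
    (1.0, 1.0), (11.0, 11.0), (0.0, 0.0), (0.0, 2.0),
    (2.0, 0.0), (10.0, 10.0), (10.0, 12.0),
]
ranking = ranked_exemplars(toy_features, 2)
print(ranking)  # [(0, 4), (1, 3)]
```

The larger "jogging" cluster, represented by image 0, ranks ahead of the smaller "soccer" cluster, matching Kim's example. In practice L would be far larger and the features would come from an image descriptor rather than hand-typed coordinates.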

With the exemplars, they can then create clusters of the most-depicted associations of a brand, and can also refine their algorithms for separating the branded elements out from a picture.

"Exemplars are used to visualize the brand association map as a concise but comprehensive set of key visual themes associated with brands," the researchers write. "Second, for brand localization, the exemplars are used as references to detect the most brand-like regions in clustered images."

Given enough data, the approach can give marketers a new way to figure out where their brands are appearing most frequently – valuable information for use in future advertising campaigns.

Other potential applications include "photo-based competitor mining," and "contextual advertising for images," according to documents received by El Reg from CMU researchers.

"As output of our work, we can discover the dominant image clusters of each brand and image regions that are most associated with the brand, from the pictures posted by millions of users. Therefore, given a novel image, we can identify which brands are most relevant to the whole image (or regions of the image). For example, for the group of luxury brands, if the new image includes a watch and a bag, we can first generate bounding boxes for the two regions, and retrieve the Rolex and Louis Vuitton as the most relevant advertisement, respectively," they write.
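The retrieval step the researchers describe might look something like the sketch below. It assumes, purely for illustration, that each region and each brand exemplar has already been reduced to a small feature vector, and that "most relevant" means "closest exemplar". The feature values, `brand_exemplars`, and `most_relevant_brand` are invented here and do not come from the paper.

```python
from math import dist

# Hypothetical exemplar features per brand (invented 2-D vectors).
brand_exemplars = {
    "Rolex": [(0.9, 0.1), (0.8, 0.2)],
    "Louis Vuitton": [(0.1, 0.9), (0.2, 0.8)],
}


def most_relevant_brand(region_feature, exemplars_by_brand):
    """Return the brand whose closest exemplar is nearest to the region."""
    return min(
        exemplars_by_brand,
        key=lambda brand: min(
            dist(region_feature, e) for e in exemplars_by_brand[brand]
        ),
    )


# Hypothetical features for two bounding boxes found in a new image.
regions = {"watch": (0.85, 0.15), "bag": (0.15, 0.85)}
ads = {name: most_relevant_brand(feat, brand_exemplars)
       for name, feat in regions.items()}
print(ads)  # {'watch': 'Rolex', 'bag': 'Louis Vuitton'}
```

A real system would need a region detector to produce the bounding boxes in the first place, which this sketch takes as given.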

Carnegie Mellon University is one of the premier institutions in America for developing computer-aided analysis of web content, and is frequently favored with grants from Google and the National Science Foundation for its oh-so-ad-friendly research.

Other projects that CMU faculty are working on include the "Never Ending Image Learner" (NEIL) tech, which scans the web and learns the associations of various images, and its predecessor the "Never-Ending Language Learner" (NELL).

Though these projects are all developing new techniques for figuring out the associations and patterns within and between large collections of images, they still depend on pre-determined data such as the tags of images. This is an area in which Google may be able to help, given that its own machine learning platform recently figured out how to identify paper shredders all by itself. ®