ARM lays down law to end Wild West of chip design: New standard for server SoCs touted

According to Hoyle, your DRAM is at 0x80000000

Brit processor core designer ARM has forged a specification to smash through a significant barrier to the widespread adoption of its highly customizable chip architecture in data centers.

That barrier-smasher? A specification that aims to standardize how ARM system-on-chips (SoCs) interoperate with low-level software, and in doing so spur the creation of a broader selection of applications to run on the 64-bit ARMv8 architecture.

ARM-compatible processors represent an alternative to the traditional x86 chips sold by AMD and Intel into major data centers. The thrifty power consumption and flexible building-block nature of the chips have attracted interest from a broad swath of the IT industry.

The Server Base System Architecture (SBSA) specification was announced at the Open Compute Project's Summit in San Jose, California, today, alongside numerous other announcements that look set to change the face of the data center infrastructure biz.

If adopted, SBSA means that operating system makers will be able to build one image of their software for all SBSA-compatible ARM-powered servers, making the overall market behave more like the traditional, standardized world of x86.

At the moment, subtle differences between ARM system-on-chips impose an extra development cost on software makers, as they need to tweak their low-level code on a per-processor-package basis. It's a situation that has enraged Linux kernel chief Linus Torvalds.

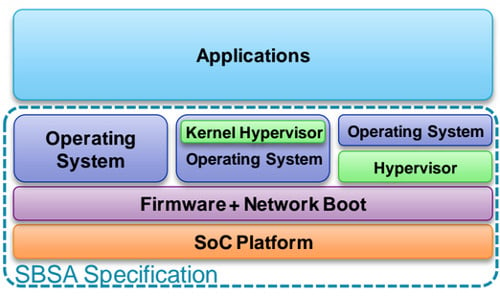

The SBSA features multiple levels of standardization, ranging from defining the minimum mandatory hardware, to making sure that any optional hardware used during boot conforms to appropriate industry standards, to ensuring that the platform's hardware and memory map is describable and discoverable so that the operating system can automatically support whatever hardware is present.

With the SBSA, ARM hopes to take the headache out of developing for its chips
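To make the "describable and discoverable" idea a little more concrete, here is a toy Python sketch contrasting the two approaches. It is purely illustrative: the SoC names, register addresses and the list-of-dicts "firmware description" are invented for this example and are not taken from the SBSA, which El Reg has not seen in full. The point is simply that an OS relying on per-chip tables has to be patched for every new SoC, while one that reads a description handed over by firmware can support a board it has never heard of.

```python
# Hypothetical illustration only: SoC names, addresses and the description
# format are invented for this sketch and are NOT defined by the SBSA.
from dataclasses import dataclass


@dataclass(frozen=True)
class Device:
    name: str
    base: int  # physical base address of the device's registers


# Without a standard: the OS ships a hand-written table for each SoC it knows.
PER_SOC_TABLES = {
    "vendor_a_soc": [Device("uart", 0x1C09_0000), Device("timer", 0x1C11_0000)],
    "vendor_b_soc": [Device("uart", 0xE000_1000), Device("timer", 0xE000_2000)],
}


def probe_hardcoded(soc_name):
    """Look up a baked-in table; an unknown chip means patching the OS."""
    try:
        return PER_SOC_TABLES[soc_name]
    except KeyError:
        raise RuntimeError(f"no support for {soc_name}; OS must be patched") from None


def probe_discovered(firmware_description):
    """Build the device list from whatever the firmware says is present."""
    return [Device(entry["name"], entry["base"]) for entry in firmware_description]


if __name__ == "__main__":
    # A board the OS has never seen still works if firmware describes it.
    new_board = [{"name": "uart", "base": 0x0900_0000}]
    print(probe_discovered(new_board))
```

In this toy, `probe_hardcoded("new_soc")` raises an error, while `probe_discovered` happily builds a device list for the same unknown board, which is roughly the "one image for all compatible servers" outcome the spec is chasing.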

"Think of it as a specification at the SoC platform level – designed for firmware and OS vendors," Lakshmi Mandyam, ARM’s director of server systems and ecosystems, told The Reg. "The specification will spell out things like memory locations, how individual elements of the SoC can be describable and discoverable at boot, CPU features that must be present – it really talks about mandatory hardware that must exist."

The specification has three standardization levels (0, 1, and 2) which build on one another. Though El Reg was not able to get its hands on the full specification at the time of writing, we do know that it defines architectural areas such as I/O virtualization, clock and timer subsystem, the overall memory map, the interrupt controller, CPU architecture, power state semantics, and more.

The specification was developed with a broad set of partners, including AMD, HP, Red Hat, AppliedMicro, Cavium, Broadcom, Texas Instruments, Microsoft, and the Linaro Linux-on-ARM group, among others.

Needless to say, wide adoption of the specification may cause worry at server chip giant Intel, as it would remove one of the main drawbacks of developing mission-critical code for ARM systems: variability.

"If you think about the history of the ARM play... try to think about it as an appliance model – a single instance tightly coupled, tightly controlled for a single application," Mandyam explained. "As we've evolved in the 32-bit space at the time, as we've evolved towards 64-bit we see that standardization is going to help the rapid deployment of ARM-based solutions."

Though ARM fans frequently point to the RISC chips' low power requirements as one reason (among many) for the architecture's huge uptake globally, it's likely this advantage will narrow over time as Intel churns out more Atom-based x86 SoCs, using its advanced production processes to reduce power consumption.

So, we turn to one of ARM's other selling points, which is the ability to license a relatively minimalist processor core and build a custom system-on-chip around it, bolting on whatever specialized hardware is required (perhaps a disk controller or an encryption accelerator, and so on). This lets engineers tailor a processor for a specific workload, which may be why huge data center operators like Facebook and Google are sniffing around the technology.

However, barriers so far have been the time it takes to develop such chips and companies' worries that their IT needs could change during the two years or so it takes to develop a custom processor.

And it's worth noting that the rise of ARM in the data center is by no means a sure thing: upstart ARM server chip designer Calxeda shut its doors just before Christmas as it failed to wring enough cash from its 32-bit designs before it could ship 64-bit ARM silicon to customers. (When we mentioned this to Mandyam, she said: "It was something you don't like to read about in the news. I think they brought a new way of thinking [and] spurred change in the industry.")

How large the market for ARM server chips could be is difficult to say. "A lot of stuff that's happening is happening quietly," Mandyam said. "We talked about Facebook as being public – I think you could think of those customers currently evaluating and sandboxing with the ARM architecture."

The SBSA shows that ARM reckons there's enough cash and enthusiasm bottled up to make it worthwhile. We'll get a clearer sense of the architecture's popularity in the data center in 2015, after companies have had a chance to play with 64-bit ARM chips from AppliedMicro and AMD, whose silicon is due this year. ®