Nvidia unveils Pascal, its next-gen GPU with hella-fast interconnects and 3D packaging

It's the 'next generation of [you name it],' says the CEO

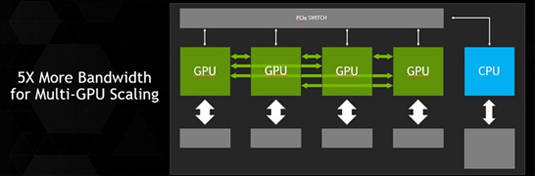

GTC Nvidia has announced its next-generation GPU, and with it a new interconnect it dubs NVLink that it says will increase the bandwidth among GPUs – and also CPUs, should a processor designer implement it – over that of PCIe by five to twelve times.

String a bunch of GPUs together with NVLink, and you effectively create a single hella-big GPU

"NVLink is a chip-to-chip communication," Nvidia CEO and cofounder Jen-Hsun Huang said during his keynote at the GPU Technology Conference (GTC) in San José, California, on Tuesday. "Differential signaling with embedded clock."

NVLink's programming model is essentially that of PCIe, but with enhanced direct memory access (DMA) capabilities, which should make it easy for developers to adapt their software to it, Huang said.

"Because we're able to pile on eight blocks – eight signals in one block – scale up to four in the first generation and up to eight in the second generation," he said, "we're able to take PCI Express and increase its performance, increase its bandwidth, increase its throughput, by five to 12 X."
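For a rough sense of what "five to 12 X" could mean in absolute terms, here is a back-of-the-envelope sketch. Huang did not say which PCIe generation he was comparing against, so the baseline below is an assumption: a PCIe 3.0 x16 link at 8 GT/s per lane with 128b/130b encoding, counted in one direction. The function names are made up for illustration.

```python
# Back-of-the-envelope estimate only. The PCIe 3.0 x16 baseline is an
# assumption; the keynote did not name a PCIe generation or link width.

PCIE3_GT_PER_S = 8.0      # giga-transfers per second, per lane (PCIe 3.0)
PCIE3_ENCODING = 128 / 130  # 128b/130b line-encoding efficiency
PCIE3_LANES = 16          # assumed x16 slot, as used by most GPUs


def pcie3_x16_gbytes_per_s():
    """Peak one-direction bandwidth of the assumed baseline link, in GB/s."""
    gbits_per_s = PCIE3_GT_PER_S * PCIE3_ENCODING * PCIE3_LANES
    return gbits_per_s / 8  # bits to bytes


def scaled_range(baseline, low_factor=5, high_factor=12):
    """Apply the quoted 5x-12x multipliers to a baseline bandwidth."""
    return baseline * low_factor, baseline * high_factor


baseline = pcie3_x16_gbytes_per_s()
low, high = scaled_range(baseline)

if __name__ == "__main__":
    print(f"Assumed PCIe 3.0 x16 baseline: {baseline:.1f} GB/s")
    print(f"5x to 12x of that baseline:    {low:.0f} to {high:.0f} GB/s")
```

Under that assumption the quoted multipliers work out to somewhere between roughly 80 and 190 GB/s; a different baseline would shift the range accordingly.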

This chip-to-chip communication is not limited to GPU-to-GPU links – which effectively turn a raft of GPUs into one massively parallel GPU – but also extends to links between GPUs and CPUs. And should a CPU vendor get on board, unified memory (and in the second generation, cache coherency) will also be supported, not unlike the hUMA heterogeneous uniform memory access architecture being pushed by AMD.

Nvidia already has one major CPU partner for NVLink: IBM. "NVLink enables fast data exchange between CPU and GPU, thereby improving data throughput through the computing system and overcoming a key bottleneck for accelerated computing today," said IBM VP and fellow Bradley McCredie in a statement. "We think this technology represents another significant contribution to our OpenPOWER ecosystem."

Nvidia has already announced that support for unified memory will be included in its upcoming CUDA 6 GPU-programming toolkit, which is in the release-candidate stage.

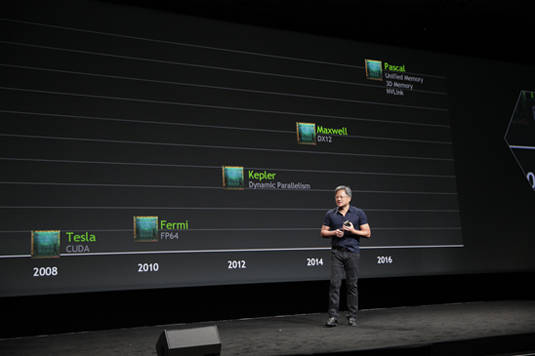

Huang also announced on Tuesday morn that NVLink will be featured in Nvidia's next-generation GPU architecture, code-named "Pascal", which is the follow-on to the recently announced Maxwell and is expected to appear sometime in 2016.

According to Huang, Moore's Law is expected to someday hit the wall – but not just yet

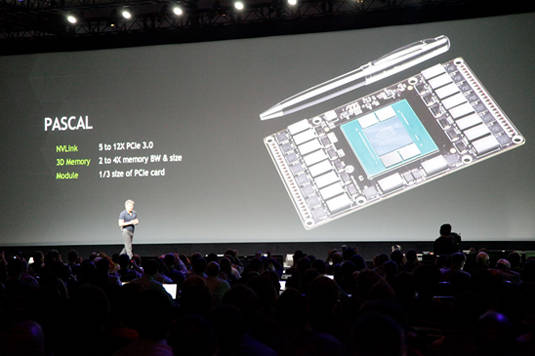

In addition to NVLink, Pascal will also incorporate another advancement in Nvidia's efforts to make more bandwidth available to its GPUs: 3D packaging.

"The challenge, of course, is that the GPU already has a lot of pins," Huang said. "It's already the biggest chip in the world. The interface is already extremely wide."

Sure, Nvidia could either make its memory interface wider or speed up the signaling to improve bandwidth, he said, but the former would make a big chip even bigger and the latter would require more power in a power-constrained environment.
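The trade-off Huang describes follows from simple arithmetic: peak memory bandwidth is roughly the interface width multiplied by the per-pin data rate. The sketch below illustrates that relationship only. Every configuration in it is hypothetical, chosen to show the shape of the trade-off, and none of the figures come from Nvidia or describe Pascal.

```python
# Illustrative arithmetic only: all widths and per-pin rates below are
# hypothetical and are not Nvidia figures.

def memory_bandwidth_gbytes_per_s(bus_width_bits, gbits_per_pin):
    """Peak bandwidth in GB/s = interface width (bits) * per-pin rate (Gbit/s) / 8."""
    return bus_width_bits * gbits_per_pin / 8


# (bus width in bits, per-pin data rate in Gbit/s), all hypothetical
configs = {
    "baseline": (384, 7.0),
    "wider interface, same signaling": (768, 7.0),
    "same width, faster signaling": (384, 14.0),
    "very wide stacked interface, slow pins": (4096, 1.0),
}

results = {
    name: memory_bandwidth_gbytes_per_s(width, rate)
    for name, (width, rate) in configs.items()
}

if __name__ == "__main__":
    for name, gbytes in results.items():
        width, rate = configs[name]
        print(f"{name}: {width} bits x {rate} Gbit/s per pin = {gbytes:.0f} GB/s")
```

Doubling the width or doubling the signaling rate buys the same bandwidth on paper, but the first costs pins and die area and the second costs power. The last row shows why a very wide interface is attractive if the packaging can supply the pins: it can outrun the baseline even with each pin running far slower.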

Nvidia's solution will be to "build chips on top of other chips. We're going to pile heterogeneous chips – meaning different types of chips – on one wafer," he said.

"If you can just imagine: a GPU with thousands and thousands of memory-interface bits," with through-silicon vias (TSVs) interconnecting the layers. "It delivers an unbelievable amount of bandwidth," Huang said. "In just a couple of years, we're going to take bandwidth to a whole new level."

Pascal will also contain some as-yet-unannounced architectural tweaks, and although Huang didn't detail them, he promised to reveal them as its release date nears.

Pascal: 'A supercomputer the size of two credit cards,' Huang says

He did, however, promise big things for Pascal, displaying a small card with a prototype Pascal chip onboard. "This little module here," he said, "represents the processor of next-generation supercomputers, next-generation workstations, next-generation gaming PCs, next-generation cloud supercomputers."

A tall order, to be sure, but if the dual promise of NVLink and 3D packaging comes through, Huang just might have a small, power-efficient, high-bandwidth winner on his hands. ®