Hey, VMware. You've got competition – from a Belgian upstart

Did we mention this open source object store isn't just targeting VMware?

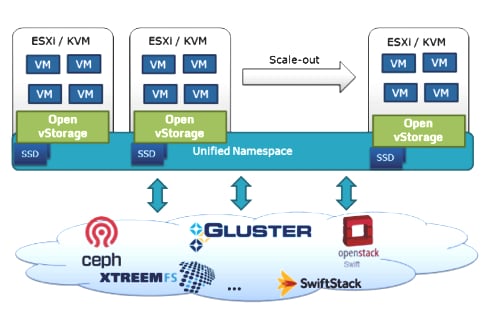

Belgian startup incubator CloudFounders has an open source project to provide a networked object storage backend to VMware or KVM virtual machines using a VSAN-like Open vStorage Router (OSR).

The OSR runs as a VM under VMware, or on bare metal in a KVM server. It scales out by running on additional virtualised servers linked by 10GbitE, providing a unified NFS namespace across them.

It is VM-aware and gives VMs access to vPOOL storage, talking, as it were, VM language to the VMs and object-style language to back-end arrays running Ceph, Gluster, OpenStack Swift or other object storage systems.

Open vStorage Router schematic diagram

The OSR turns a container on Swift into a VM Volume and that volume can only be presented by a single OSR. There is a handover between OSRs if a vMotion operation makes that necessary.
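The single-presenter rule and the vMotion handover can be pictured with a small sketch. This is purely illustrative and not taken from the Open vStorage code: the `VolumeRegistry` class, its method names and the router names are all hypothetical.

```python
class VolumeRegistry:
    """Hypothetical bookkeeping: which router currently presents each volume."""

    def __init__(self):
        self._owner = {}  # volume name -> router name

    def present(self, volume, router):
        # A volume may be presented by one router only.
        current = self._owner.get(volume)
        if current is not None and current != router:
            raise RuntimeError(f"{volume} is already presented by {current}")
        self._owner[volume] = router

    def hand_over(self, volume, old_router, new_router):
        # Ownership moves only if the old router really holds the volume,
        # as would happen when a VM is vMotioned to another host.
        if self._owner.get(volume) != old_router:
            raise RuntimeError(f"{old_router} does not present {volume}")
        self._owner[volume] = new_router

    def owner(self, volume):
        return self._owner.get(volume)
```

In this toy model a second router asking to present the same volume is refused until an explicit handover takes place.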

The OSR is used for provisioning (thin), snapshotting, replication and cloning (thin), and all are actioned at the VM level.

OSR also uses server-attached SSDs or PCIe flash as a cache facility, for both reads and writes. Cache hits accelerate performance for reads. The write-back caching is said to solve the IO-blender problem by turning random writes into sequential ones. Write cache data is written to the backend store, with a zero-copy approach, when a storage container object is full, typically set at 4MB.
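To see how a write-back cache can turn random writes into sequential ones, here is a minimal sketch of a log-structured buffer. It is an assumption-laden toy, not the project's implementation: the `WriteBackLog` class, the list standing in for the object store and the index layout are hypothetical, and it copies data rather than attempting the zero-copy approach described above.

```python
CONTAINER_SIZE = 4 * 1024 * 1024  # 4MB, the typical container size quoted above


class WriteBackLog:
    """Hypothetical write-back log: appends writes in arrival order."""

    def __init__(self, backend, container_size=CONTAINER_SIZE):
        self.backend = backend          # a list standing in for the object store
        self.container_size = container_size
        self.buffer = bytearray()       # stands in for the SSD write cache
        self.flushed = 0                # bytes already shipped to the backend
        self.index = {}                 # logical block address -> (log offset, length)

    def write(self, lba, data):
        # Random logical addresses land at the sequential tail of the log.
        self.index[lba] = (self.flushed + len(self.buffer), len(data))
        self.buffer += data
        # Ship one object to the backend each time a container fills up.
        while len(self.buffer) >= self.container_size:
            self.backend.append(bytes(self.buffer[:self.container_size]))
            del self.buffer[:self.container_size]
            self.flushed += self.container_size
```

However scattered the logical block addresses are, the backend only ever sees whole, sequentially filled containers, which is the effect the IO-blender fix relies on.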

The read cache is deduplicated and is shared across hosts. There is a failover cache facility with writes to a write cache being synchronously replicated to another node.
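One common way to build a deduplicated read cache is to key blocks by a hash of their content, so identical blocks from different VMs are stored once. The sketch below assumes that approach; the article does not say how Open vStorage does it, and the `DedupReadCache` class and its methods are hypothetical.

```python
import hashlib


class DedupReadCache:
    """Hypothetical content-addressed read cache."""

    def __init__(self):
        self.blocks = {}   # content digest -> block data, stored once
        self.lookup = {}   # (volume, block address) -> content digest

    def put(self, volume, lba, data):
        digest = hashlib.sha256(data).hexdigest()
        # Identical content from any volume maps to the same stored block.
        self.blocks.setdefault(digest, data)
        self.lookup[(volume, lba)] = digest

    def get(self, volume, lba):
        digest = self.lookup.get((volume, lba))
        return None if digest is None else self.blocks[digest]
```

Two VMs cloned from the same template would, under this scheme, share a single cached copy of every block they have in common.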

With vMotion, IO is redirected and a vDisk automatically follows the vMotioned VM.

A single OSR can talk to multiple back-ends. It’s anticipated that the Xen hypervisor will be supported in the future, as will VirtualBox and Hyper-V.

The Open vStorage Router competes with VMware’s VSAN and other virtual storage appliances. The project is being run from Lochristi in Belgium, and CloudFounders has an Amplidata connection.

Read an Open vStorage white paper here (pdf). Check it out. It could be just the thing for you if you are into open source and virtual storage appliances. ®