
Sky-scraping boffins mash amateur astronomers into huge virtual telescope

Square Kilometre Array idea brought down to earth with ENHANCE code

Amateur astronomers worried that Big Astronomy would render them obsolete can relax: the kinds of techniques used to create huge virtual telescopes are now being applied to the huge collections of astro-pics published on the Internet.

As keen astronomy-watchers know, the effective aperture of telescopes can be expanded by linking multiple instruments in different parts of the world – in radio-astronomy, this is the principle behind the Square Kilometre Array, and the same techniques can be applied to optical telescopes (for example, in this proposal).

Such linking, however, is tightly managed so that the images can be correlated, corrected, and merged to create a single composite.

What's different about the proposal in this paper at arXiv is that its authors, led by Dustin Lang of Carnegie Mellon University (along with David Hogg of New York U and Bernhard Scholkopf of the Max Planck Institute in Germany), want to correlate and combine the vast store of astronomy images that amateurs publish on the Internet.

The problems facing such a project are, unsurprisingly, formidable. Not only are the images uncalibrated, they're captured by a huge range of devices, and everybody has their own ideas about post-processing and enhancement.

With huge numbers of images available, though, Lang's group believes amateur images represent an untapped resource if these problems can be fixed. As they write, solving this problem would permit “discovery of astronomical objects or features that are not visible in any of the input images taken individually.”

The authors add that the world's vast store of historical astronomical image collections (whose provenance is known and which are high quality) hasn't yet been combined into any kind of “master image” for analysis either.

The algorithm they've developed, which they call “Enhance”, starts by taking an initial patch of sky (referred to as the “consensus image”), and finding areas in the scraped images that match its footprint. A statistically-based voting algorithm then ranks the input images, converging towards an optimal value for each pixel.
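For readers who like to see the idea in code, here is a rough sketch of why ranks are a handy thing to vote with. It is not the authors' implementation: it uses plain rank averaging as a much simpler stand-in for their voting scheme, and it assumes the input images have already been aligned to the same pixel grid. The function names `rank_normalize` and `consensus_from_ranks` are made up for this illustration.

```python
import numpy as np


def rank_normalize(image):
    """Replace each pixel value with its brightness rank, scaled to [0, 1].

    Ranks survive any monotonic tone curve (gamma, log stretch, contrast
    tweaks), which is why they suit images with unknown post-processing.
    Ties are broken by pixel position.
    """
    flat = np.asarray(image, dtype=float).ravel()
    order = np.argsort(flat, kind="stable")
    ranks = np.empty(flat.size, dtype=float)
    ranks[order] = np.arange(flat.size)
    return (ranks / max(flat.size - 1, 1)).reshape(np.shape(image))


def consensus_from_ranks(images):
    """Average the rank images of pre-aligned inputs (illustrative only).

    Each image is visited exactly once, so the cost grows linearly with
    the number of inputs.
    """
    total = None
    count = 0
    for img in images:
        ranks = rank_normalize(img)
        total = ranks if total is None else total + ranks
        count += 1
    if count == 0:
        raise ValueError("need at least one image")
    return total / count


if __name__ == "__main__":
    truth = np.arange(16).reshape(4, 4)
    # Three "amateur" versions of the same patch, each with a different
    # (assumed) tone curve applied after capture.
    shots = [truth ** 2, np.sqrt(truth), np.log1p(truth)]
    print(consensus_from_ranks(shots))
```

Because each of the three toy inputs is a monotonic transformation of the same scene, they all reduce to the same rank image, and the average recovers the scene's brightness ordering regardless of how each shot was stretched.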

They use Astrometry.net, a system developed by Lang in 2009, to match images to celestial coordinates.

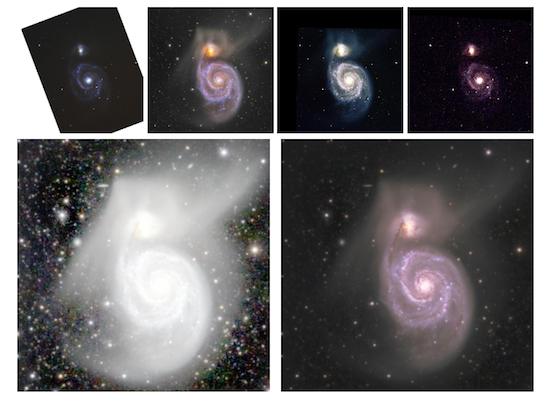

The results seem, at least to Vulture South's untutored eye, pretty impressive. Here, for example, is the output of Enhance obtained from a collection of shots of colliding galaxy pair M51. The algorithm was able to take a final set of 2,006 images crawled from the Internet, and combine them into the output image in 40 minutes on a single processor.

The top row shows some of the input images Lang used to create the final composite, bottom right. Image: Lang, et al

The final tone-mapped consensus image, bottom right, shows debris from the galactic cataclysm that isn't visible in any of the individual source images.

The high performance of the algorithm – along with the claim that it shows linear scalability with an increasing number of input images – should, the authors believe, make it useful for huge numbers of input images.

Lang has set up a page on his Astrometry.net site, here, where amateurs can submit their images to become part of Enhanced composites, with a JSON API so those who are handy with software can automate the process. ®

Bootnote: Vulture South can't help but suspect the name of the algorithm, Enhance, is a wry poke at TV spies who always seem able to turn a bunch of illegible pixels into a clean number plate, just by pressing the “enhance” dialogue on their computers. ®