Win Server 2003 addict? Tick, tock: Your options are running out

A Reg guide to breaking up and moving on

Windows XP is officially gone but its server companions Windows Server 2003 and Server 2003 R2 live – just not for much longer. Mainstream support for the server duo ended on 13 July 2010 but the expiration of extended support is now just three months away: 14 July 2015.

The date is critical as that’s when security updates and paid support incidents are no longer available from Microsoft.

Should you care if your Server 2003 boxes only sit in a corner running some old application?

If they are not connected to the network, perhaps not. In most cases they are, though, and the lack of security updates or official support is a concern. If an unsupported server is picked up in a network scan for PCI DSS (Payment Card Industry Data Security Standard) compliance, you will fail, and this means you should not process card payments or handle related data.

Add to this headache the fact that some small businesses still run Small Business Server (SBS) 2003, which bundles Exchange, SharePoint, the ISA Server firewall, and more, into a single box. SBS fell out of extended support with its individual components: Exchange 2003 has been officially obsolete since April 2014.

What this means is that even if everything is working nicely, it makes sense to migrate from Server 2003 or SBS 2003. Depending on the role each server plays, that can be a considerable effort.

Here is a quick look at key considerations.

You can upgrade to the slightly less ancient Windows Server 2008, from the Windows Vista family. Mainstream support ended in January, but extended support remains until 2020. The only reason to consider Server 2008 is that it is the last version of Windows Server that has a 32-bit edition. If you have some app that only runs on 32-bit Windows, you can get a few more years of support that way.

Most will want either to upgrade to the current version of Windows Server, now on 2012 R2, or in the case of the smallest businesses, consider doing without a server and moving everything to the cloud.

Something else to consider is the virtualisation route. Server 2003 does run nicely on a hypervisor such as Microsoft’s Hyper-V, so what about just virtualising your Server 2003 boxes? There are benefits for sure, but it does not fix the underlying compliance, support and security problems.

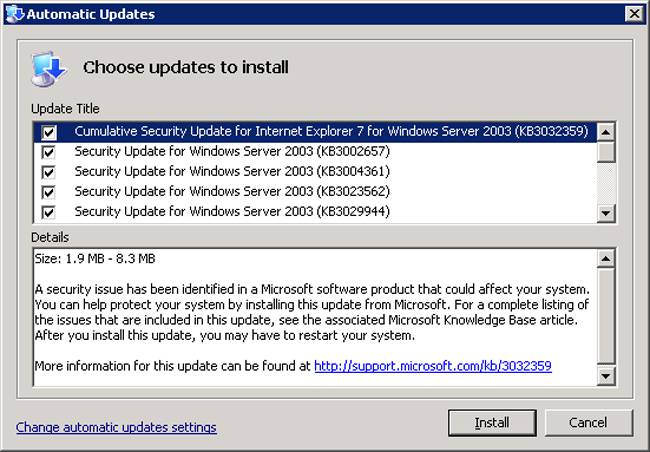

This is what you don’t get from July: security updates for Windows Server 2003

You do not, of course, necessarily have to stay on Windows Server at all. An option for small businesses is to migrate email to the cloud, with Microsoft’s Office 365 perhaps the most natural option for users hooked on Outlook, Word and Excel, and to use a Linux-based NAS (Network Attached Storage) for file and print sharing. NAS vendors like Synology and QNAP are moving into the server appliance space, with hundreds of applications that you can install and manage through a simple browser-based package manager. These include PHP for web applications, content management systems such as Drupal and WordPress, integration with cloud storage for backup, mail servers and more.

But many will have essential Windows applications running on the server and need the flexibility of a full server operating system, making migration to the latest Windows Server the best option for them.

Another issue is Active Directory (AD), which manages user authentication and security on most Windows networks. If you are migrating to the cloud, you can use a tool like Microsoft’s DirSync (Active Directory Sync Tool) or Google Apps Directory Sync to create user accounts that match those on-premises. Although this is useful, you still need to maintain a local Active Directory server for Windows sign-in and security. The forthcoming Windows 10 is likely to let users sign in with Azure AD credentials, which will remove one reason for running on-premises AD.

Server 2003 versus Server 2012 R2: what has changed?

So, that brings us back to Server 2012. What’s changed since your legacy server was new on the block?

Server 2008, which followed Server 2003, was a big release that introduced Hyper-V, Microsoft’s hypervisor, and a great improvement over the earlier Virtual Server technology. Hyper-V has become a core focus for Microsoft and now underlies its Azure cloud-computing platform. The advantages of virtualisation in terms of efficiency, manageability and resilience are such that deploying servers on VMs is now the norm, unless there is a good reason not to do so. There are strong alternatives to Hyper-V for the host, with VMware still the market leader, but Hyper-V comes as part of Windows Server – making it a good choice for smaller setups.

Another key change is the introduction of Server Core from the 2008 edition onwards. Server Core is really part of a bigger story, which is the componentisation of Windows Server. The idea is that the basic server is stripped down to a minimum, and you add roles and features as needed, with the Windows GUI being one of them. The componentisation is not perfect, but has improved with each edition. In Server 2008, Server Core was a choice you had to make on first installation, but with Server 2012 you can move to a full GUI and back by adding and removing the feature.

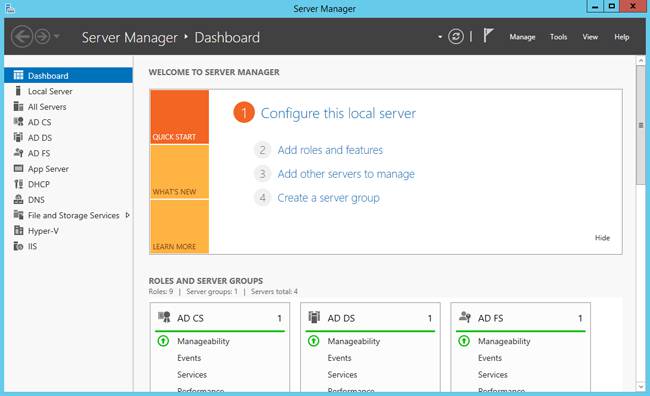

Graphical changes: the Dashboard in Server 2012 R2

The third headline feature is PowerShell, an automation engine and scripting language first released in 2006. PowerShell is not just a better scripting language than VBScript; it is a fundamental piece on which Windows depends for automated deployment and configuration. PowerShell 4.0, released with Windows Server 2012 R2, includes a feature called Desired State Configuration (DSC) that lets you define the configuration of a server in code and realise that configuration automatically. DSC had limited scope in its first release but is evolving fast.
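To make the "desired state" idea concrete, here is a minimal sketch in Python rather than PowerShell. It is not DSC syntax and does not touch a real server; the feature names and the `plan` and `apply` helpers are hypothetical, chosen only to show the pattern of declaring a target state, comparing it with the current one, and applying just the difference.

```python
# Illustration of the declarative idea behind DSC, NOT real DSC syntax.
# Feature names below are illustrative assumptions.
desired = {"Web-Server": True, "Telnet-Client": False, "DHCP": True}


def plan(desired: dict, current: dict) -> list:
    """Return the (action, feature) steps needed to reach the desired state."""
    steps = []
    for feature, want in sorted(desired.items()):
        have = current.get(feature, False)  # features not listed count as absent
        if want and not have:
            steps.append(("install", feature))
        elif have and not want:
            steps.append(("remove", feature))
    return steps


def apply(steps: list, current: dict) -> dict:
    """Return a copy of the current state with the planned steps applied."""
    new_state = dict(current)
    for action, feature in steps:
        new_state[feature] = (action == "install")
    return new_state


if __name__ == "__main__":
    current = {"Telnet-Client": True, "DHCP": True}
    steps = plan(desired, current)
    print(steps)
    # Running the plan a second time should find nothing left to do.
    print(plan(desired, apply(steps, current)))
```

The useful property is that the same declaration can be applied repeatedly: once the server matches it, the plan is empty.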

Another significant area is storage management. Server 2012 brought in Storage Spaces, which lets you create pools of resilient storage on a bunch of hard drives, adding more drives as space requirements increase. Server 2012 R2 lets you combine fast SSD drives with spinning disks, automatically placing the most frequently accessed files on the fastest storage.

All this means little if your essential Server 2003 applications no longer run. Is that likely? Although Server 2012 and higher are 64-bit only, 32-bit applications still run. Server 2008 and higher introduce User Account Control (UAC), which means even administrators run as standard users most of the time, but although this can cause compatibility issues they are usually fixable, such as by setting an application to run as administrator all the time. The most problematic area is applications that are incompatible with the updated libraries in more recent versions of Windows, or which work with hardware add-ons for which drivers are not available. In such cases, updating or replacing the application is the only solution.
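When auditing old applications it can help to know whether a given executable is a 32-bit or 64-bit build. The sketch below reads the standard PE header fields to find out; the function names are my own, and it assumes the header sits within the first 4KB of the file, which is typical but not guaranteed.

```python
import struct

# Machine field values from the PE/COFF header.
MACHINE_TYPES = {0x014C: "32-bit (x86)", 0x8664: "64-bit (x64)"}


def pe_architecture(data: bytes) -> str:
    """Classify the leading bytes of an .exe or .dll by its PE header."""
    if len(data) < 0x40 or data[:2] != b"MZ":
        return "not a Windows executable"
    # Offset 0x3C of the DOS header holds the file offset of the PE signature.
    (pe_offset,) = struct.unpack_from("<I", data, 0x3C)
    if data[pe_offset:pe_offset + 4] != b"PE\0\0" or len(data) < pe_offset + 6:
        return "no PE header"
    # The two-byte Machine field follows the four-byte signature.
    (machine,) = struct.unpack_from("<H", data, pe_offset + 4)
    return MACHINE_TYPES.get(machine, "other (machine type 0x%04X)" % machine)


def pe_architecture_of_file(path: str) -> str:
    """Read the start of a file and classify it (assumes header within 4KB)."""
    with open(path, "rb") as f:
        return pe_architecture(f.read(4096))
```

This only reports how the binary was built. It says nothing about library or driver compatibility, which still has to be tested on the new server.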

Move it!

The Server 2003 problem may be anything from one or two isolated instances running for specific reasons, such as application compatibility, to an SBS box running the entire business. The start of any migration process is discovery: what instances of the server are there, and what are they doing? Next comes research and decisions about what to migrate to. Can the application be fixed? Is there a cloud solution? Or is this simply a matter of pulling out Server 2003 and replacing it with Server 2012 R2, possibly on VMs?
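The discovery step can start as something very simple. The following Python sketch takes an inventory list and reports which machines are still on a 2003-era release and how many days remain before the 14 July 2015 deadline. The host names, roles and the `INVENTORY` structure are invented for illustration; a real list would come from a network scan or asset database.

```python
from datetime import date

# End of extended support for Windows Server 2003 and 2003 R2.
END_OF_SUPPORT = date(2015, 7, 14)

# Hypothetical inventory, for illustration only.
INVENTORY = [
    {"host": "files01", "os": "Windows Server 2003 R2", "role": "file and print"},
    {"host": "sbs01", "os": "Windows Small Business Server 2003", "role": "AD, Exchange"},
    {"host": "web01", "os": "Windows Server 2012 R2", "role": "web"},
]


def needs_migration(os_name: str) -> bool:
    """True for any OS string naming a 2003-era server release."""
    return "Server 2003" in os_name


def days_left(today: date) -> int:
    """Days remaining until extended support ends (negative once it has passed)."""
    return (END_OF_SUPPORT - today).days


def migration_report(inventory: list, today: date) -> list:
    """Return (host, role, days_left) for every server that must be migrated."""
    remaining = days_left(today)
    return [(e["host"], e["role"], remaining)
            for e in inventory if needs_migration(e["os"])]


if __name__ == "__main__":
    for host, role, remaining in migration_report(INVENTORY, date.today()):
        print(f"{host}: {role}, {remaining} days of support left")
```

Recording the role alongside each host matters, because the role decides whether the answer is a replacement server, a fixed application or a cloud service.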

The organisations in most need of help are small businesses running SBS 2003. Microsoft no longer has a Small Business Server product. The current nearest equivalent is Windows Server 2012 R2 Essentials, though this supports a maximum of 25 users and does not include Exchange; you are meant to migrate email to Office 365. You can migrate to full Windows Server 2012 R2 and install Exchange, but this will be a multi-server solution at a much higher price than SBS. Microsoft’s official guide on Essentials migration is here.

Before any migration, back up your existing server and test the backup so you have a way back if the migration fails.

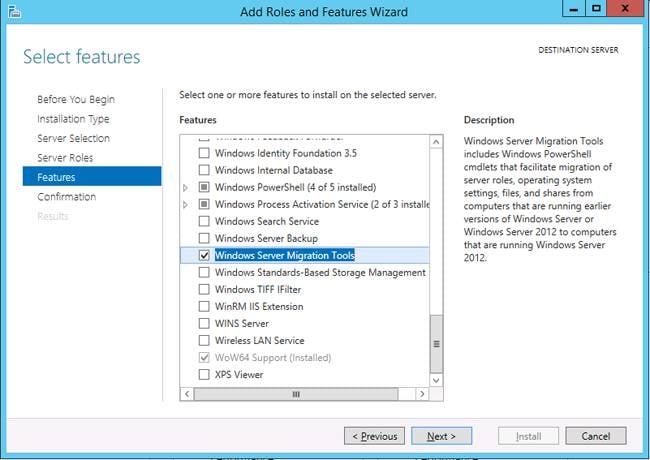

Migration tools are a feature of Windows Server 2012 and 2012 R2, added through Roles and Features.

If you have AD running on Server 2003, either because of SBS or for other reasons, the top priority is to move this to Server 2012 R2. The usual approach is to raise the existing AD to at least the Windows Server 2003 functional level, add a Windows Server 2012 R2 AD server to the domain, allow AD replication to occur, transfer the FSMO (Master) roles to the new server, then demote the Server 2003 machine so it no longer runs AD.
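One check worth making before the final demotion step is that none of the five FSMO roles is still held by the old server. The Python sketch below assumes you have already collected the current role holders into a dictionary by whatever means you prefer; the server names and the `roles_blocking_demotion` helper are hypothetical.

```python
# The five FSMO roles in an Active Directory forest and domain.
FSMO_ROLES = (
    "Schema Master",
    "Domain Naming Master",
    "RID Master",
    "PDC Emulator",
    "Infrastructure Master",
)


def roles_blocking_demotion(role_holders: dict, old_server: str) -> list:
    """Return the roles that are unaccounted for or still on the old server.

    role_holders maps a role name to the server currently holding it.
    A role missing from the mapping is treated as blocking, to be safe.
    """
    blocking = []
    for role in FSMO_ROLES:
        holder = role_holders.get(role)
        if holder is None or holder.lower() == old_server.lower():
            blocking.append(role)
    return blocking


if __name__ == "__main__":
    # Hypothetical state part-way through a migration.
    holders = {
        "Schema Master": "dc2012",
        "Domain Naming Master": "dc2012",
        "RID Master": "DC2003",
        "PDC Emulator": "dc2012",
    }
    print(roles_blocking_demotion(holders, "dc2003"))
```

An empty list means the role transfer looks complete; it does not confirm that replication is healthy, which needs checking separately.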

This procedure is easy to outline but may not run smoothly in practice. Microsoft has PowerShell scripts to automate the process (see here for example), but what do you do if the script fails? Try to fix it is the obvious answer, but it may not be trivial. I have heard some admins recommend migrating to Server 2008 AD as an intermediate step, since it is more likely to complete without problems.

One option for small organisations, or if AD is hopelessly broken, is to throw away the existing AD and Windows Domain, and create a new one. This will mean joining all the client machines to a new domain, but has the advantage that things like obsolete security groups and Group Policy objects (which automate Windows configuration across the domain) will not get migrated. This is a more predictable process, though it works best with single server setups. The downside of migrating to a brand new Windows Domain is that any permissions set on things like file shares have to be redone. Discarding a Windows Domain can have unforeseen consequences so it is not a thing to do lightly.

Once AD and DNS are transferred, other server roles such as DHCP are relatively easy to migrate. Server 2012 R2 comes with a bunch of migration tools documented here and implemented as PowerShell scripts.

Migration can be a hassle, but after July it will be even worse, so now is the time. ®