
Third time lucky? Plucky upstart Infinidat plonks down monolithic array

Honking great flash cache speeds all-media-seeing controller engines

Analysis Infinidat is aiming to replace VMAX/DS8000/VSP-type arrays with its Infinibox product, and it’s making good progress, having shipped more than 200PB of installed capacity in two years.

An Infinidat briefing at VMworld added more information to what we have written here, here and here, about founder Moshe Yanai’s third attempt at disrupting the top-end storage array market after Symmetrix (now VMAX) and XIV.

What we learnt at VMworld is that the Infinibox is an unashamedly integrated hardware-software, high-end development, having no truck with vacuous software-defined storage notions.

CTO Brian Carmody said: “Enterprises want packaged systems. SW-defined storage is like communism. It looks really good on paper but not so good in practice. There’s integration risk for customers. It’s the most un-cloud-like proposition you can make.”

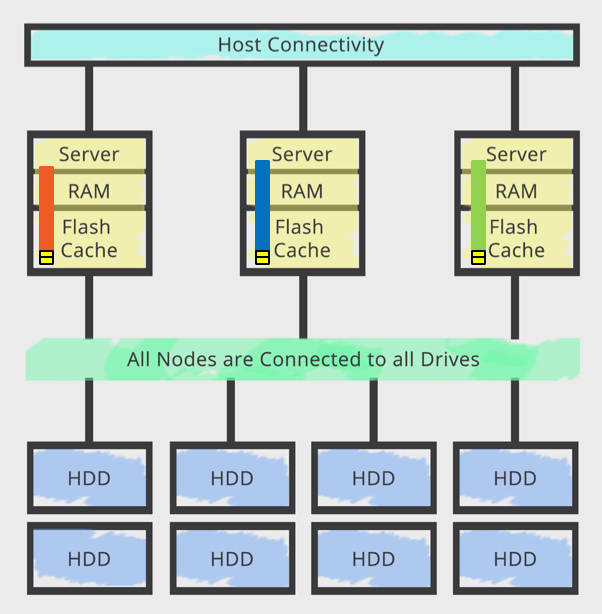

It has a hyper-parallel continuum architecture, a massive and de-centralised cache, and a fully symmetrical active grid. The system has four sets of components: host connectivity, controller engines, an internal InfiniBand network, and storage media.

It features triple-redundant power and data paths, and triple-redundant hardware, which help it achieve its claimed 99.99999 per cent uptime, meaning roughly three seconds of downtime per year.
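As a quick check on that availability figure, the arithmetic can be sketched in a few lines of Python. The function name is our own, and the calculation assumes a 365-day year; it simply converts an availability percentage into implied downtime, and seven nines comes out at a little over three seconds a year.

```python
SECONDS_PER_YEAR = 365 * 24 * 60 * 60  # 31,536,000, ignoring leap years


def downtime_seconds_per_year(availability_percent: float) -> float:
    """Return the yearly downtime implied by an availability percentage."""
    unavailable_fraction = 1.0 - availability_percent / 100.0
    return unavailable_fraction * SECONDS_PER_YEAR


# Seven nines, the uptime figure quoted for the Infinibox
seven_nines = downtime_seconds_per_year(99.99999)
print(f"{seven_nines:.2f} seconds of downtime per year")
```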

Facing hostwards there are 24 x 8Gbit/s Fibre Channel ports and 6 or 12 x 10GbitE ports. The system is ready for 16Gbit/s FC. Chief marketing officer Randy Arseneau said Infinidat is very interested in the NVMe fabric idea. We think it could possibly be used internally in the system, as well as being a potential host-array link.

These lead to three controller engines, set up in an active-active-active configuration. Each is a server with an x86 processor, DRAM and huge slugs of NAND cache. Altogether the system has 1.2 to 3.2TB of RAM and 24-48TB of SSD, making two levels of cache.

A 56Gbit/s Mellanox Infiniband network leads from them to 480 x 6TB 7,200rpm disk drives*, producing 2.88PB of raw capacity and more than 2PB of usable capacity in a rack. Each controller can interact with every disk drive, talking to them across a mesh, and each controller serves access to all LUNs and file systems concurrently.

Data storage scheme

The way data is stored is unique. Incoming data is split into 64KB sections and the system is, below the protocol drivers, simply a collection of 3.125 billion of these sections. Concepts such as hosts and volumes don’t apply to the caches and disk drives. Each section is given a checksum and an activity vector (AV) score.

This AV score relates to the IO activity for a section and a heuristic map of inferred relationship to other sections, with the aim being to keep an application’s hot working set data in cache as its workload develops. The aim is to have all read requests be served from DRAM or NAND cache.

The system’s software groups 14 sections with similar AV values into a stripe, and two parity sections are computed to create a 16-way stripe for persistence to disk.

Next, 16 disk drives are chosen from the disk pool and the 16-way stripe is written. We’re told there is non-deterministic drive selection, log-structured writes and statistically uniform utilisation of drives.
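The grouping and placement steps described above can be illustrated with a toy model. This is a sketch under loud assumptions, not Infinidat's implementation: the `Section` class, the single-number `av_score`, the sort-then-chunk grouping and the uniform random drive pick are all hypothetical stand-ins of ours, and the two parity sections are only accounted for in the stripe width, not actually computed. Only the 14+2 geometry and the 480-drive pool come from the briefing.

```python
import random
from dataclasses import dataclass

DATA_SECTIONS_PER_STRIPE = 14    # from the article
PARITY_SECTIONS_PER_STRIPE = 2   # from the article
DRIVES_IN_POOL = 480             # from the article


@dataclass
class Section:
    section_id: int
    av_score: float  # hypothetical numeric stand-in for the activity vector


def build_stripes(sections):
    """Group sections with neighbouring AV scores into sets of 14.

    Assumption: "similar AV values" is modelled as sorting by score and
    cutting the sorted list into consecutive chunks. Leftovers are dropped.
    """
    ordered = sorted(sections, key=lambda s: s.av_score)
    full = len(ordered) - len(ordered) % DATA_SECTIONS_PER_STRIPE
    return [ordered[i:i + DATA_SECTIONS_PER_STRIPE]
            for i in range(0, full, DATA_SECTIONS_PER_STRIPE)]


def place_stripe(rng):
    """Pick 16 distinct drives at random for one 14+2 stripe."""
    width = DATA_SECTIONS_PER_STRIPE + PARITY_SECTIONS_PER_STRIPE
    return rng.sample(range(DRIVES_IN_POOL), width)


rng = random.Random(0)  # fixed seed so the sketch is repeatable
sections = [Section(i, rng.random()) for i in range(14 * 1000)]
stripes = build_stripes(sections)
placements = [place_stripe(rng) for _ in stripes]

# Count how many stripe members land on each drive
writes_per_drive = [0] * DRIVES_IN_POOL
for drives in placements:
    for drive in drives:
        writes_per_drive[drive] += 1

print("min/max writes per drive:", min(writes_per_drive), max(writes_per_drive))
```

With enough stripes, a random pick of this kind tends to spread writes evenly across the pool, which is one plausible reading of the "statistically uniform utilisation" we were told about.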

Each volume and file system is distributed across all disks in the system, meaning there are no hotspots and fast rebuilds if a drive fails; massively parallel RAID rebuilds in fact. There is an even:odd XOR RAID scheme, with no erasure coding or Reed-Solomon scheme.

What Infinidat has built here is a hybrid flash/disk array with high-end enterprise-class reliability and performance. It claims that it takes less than six minutes to regain data redundancy after a twin drive failure.

The system is power-efficient, drawing less than four watts/usable terabyte.

We are told the system has been designed to take future 20TB disk drives, meaning 9.6PB of raw capacity in a rack. Currently, it does not support file-level access via protocols such as NFS or SMB (CIFS). The system has been designed for multi-protocol support though.
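The capacity figures quoted in this piece are easy to sanity-check. The sketch below is our own back-of-envelope arithmetic, assuming decimal units (1PB = 1,000TB) and counting only the 14+2 parity overhead; spare space, metadata and any other reservations are unknown to us and not modelled, so the post-parity number is an upper bound rather than a usable-capacity claim.

```python
DRIVES_PER_RACK = 480   # from the article
TB_PER_PB = 1000        # decimal units, an assumption


def raw_capacity_pb(drive_tb: float) -> float:
    """Raw capacity of a full rack for a given drive size."""
    return DRIVES_PER_RACK * drive_tb / TB_PER_PB


def post_parity_capacity_pb(drive_tb: float, data: int = 14, parity: int = 2) -> float:
    """Capacity left after parity overhead only (upper bound on usable)."""
    return raw_capacity_pb(drive_tb) * data / (data + parity)


for drive_tb in (6, 20):
    print(f"{drive_tb}TB drives: "
          f"{raw_capacity_pb(drive_tb):.2f}PB raw, "
          f"{post_parity_capacity_pb(drive_tb):.2f}PB after 14+2 parity")
```

The 6TB and 20TB cases reproduce the 2.88PB and 9.6PB raw figures, and the post-parity value for 6TB drives sits comfortably above the "more than 2PB" usable capacity quoted.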

Customer points

Infinidat said it has 100PB of storage in its lab, which is used for QA, with Carmody saying that we “burn in every component for a month before we ship it to the customer”.

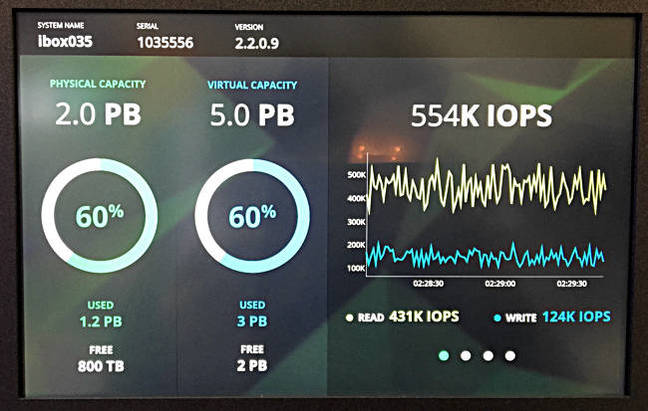

Infinibox rack front display showing capacity, read and write IOPS

Its performance is said to be up to 750,000 IOPS with a bandwidth of 12GB/sec.

For Infinidat, an enterprise customer is one who needs 1PB of storage, and that’s not necessarily a Fortune 1000 company.

We note five Infinidat progress markers:

- 300+ employees with 100 hired since April

- There are more than 100 customers

- 200PB+ shipped since second half 2013 ship start date

- In production at multiple F500 sites world-wide, such as AOL, Fidelity Investments, Barclays, Orange and Leumi Bank

- About 18 per cent of revenue last quarter was from repeat business and the proportion is growing