2015: The year storage was rocked to its foundations

Everything is changing and we don't store the same

Storage year in review, part 1: The storage market in 2015 went through strategic foundation-shaking turmoil as the external shared disk array storage playbook was torn to shreds.

It was a bewildering year, with rampaging and revolutionary activity at all levels of the industry. It’s best looked at from the ground up, starting with the technology vision, moving on to raw media, and then systems (arrays), applications such as Big Data, and finally suppliers.

We look at technology visions and galloping media development here. Part two of this review of storage events in 2015 will cover systems, applications and suppliers.

Technology visions

There were six technology visions that exercised the industry's mental sinews in 2015.

First, the all-flash data centre idea has definitely taken off as a vision that could be achieved. Pioneered by troubled Violin Memory, it has been expanded on by Kaminario and HDS, and is related to the flash-and-trash concept.

Primary data is stored in flash with the rest being held in cheap and deep storage. When that cheap and deep is in the cloud you have an all-flash, on-premises data centre. When some/all of it is held in less-expensive flash, think 3D QLC (4 bits/cell or quad level cell), with the rest in the cloud, then you have an all-flash data centre too.

A second technology theme of the year has been a strengthening conviction that storage should be located as close to servers as possible. This is an assault on network data access latency, paralleling the flash-based assault on disk drive data access latency due to head seek time, etc.

The server-storage closeness concept has given rise to virtual SANs, hyper-converged infrastructure appliances (HCIAs) and NVMe fabrics.

Intel NVMe SSD

A third, silo-busting vision looks at storage silo sprawl and says that either it should all be virtually converged into a single silo (Primary Data), or all secondary storage should be converged (Cohesity, Rubrik), or data copying should be controlled (Actifio, Catalogic and Delphix).

The existing hybrid cloud concept was adopted/supported by everybody with, perhaps, NetApp leading the way, and all saying on-premises storage should be integrated with public cloud storage while asserting that on-prem storage is essential, vital, not to be discarded, etc.

VMware’s VVOL idea was also supported by anybody and everybody, except Microsoft of course, but precious few customers adopted it.

OpenStack carried on with extraordinarily successful conferences and vendor support, albeit, again, with less customer adoption. The feeling in the OpenStack community is that mass adoption is a matter of course.

These were the six over-arching visions. Down in the storage technology basement, the raw media was going through a set of revolutions too.

Media

Flash started out in 2-bits/cell MLC form and this rapidly became standard, either in 2.5-inch disk bay slot or PCIe flash card forms, with PCIe flash cards having much lower access latency than SATA or SAS SSDs.

TLC (3-bits/cell or triple-level cell) had started appearing but its endurance was not good in the standard 2D or planar flash cell form with sub-20nm cell geometries. Samsung has been shipping 3D, multi-layered flash chips with 24 and then 32 layers, using larger cell geometries, in the 30-40nm range. The result was an increase in both flash chip capacity and endurance. Dell, HP, Kaminario and others started using such 3D flash chips to increase capacity and lower per-GB costs.
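A rough way to see why adding bits per cell strains endurance is to count the charge levels a cell has to tell apart: each extra bit doubles them. The snippet below is only an illustrative sketch; the function and table names are made up for this example and the capacity gain ignores real-world overheads such as error correction and over-provisioning.

```python
# Illustrative sketch only: names are hypothetical, not from any vendor tool.
BITS_PER_CELL = {"MLC": 2, "TLC": 3, "QLC": 4}


def levels_per_cell(bits: int) -> int:
    """Distinct charge levels a cell must hold to store `bits` bits."""
    return 2 ** bits


def capacity_gain(from_bits: int, to_bits: int) -> float:
    """Raw capacity multiplier for the same number of cells (no overheads)."""
    return to_bits / from_bits


for name, bits in BITS_PER_CELL.items():
    print(f"{name}: {bits} bits/cell, {levels_per_cell(bits)} charge levels")

print("MLC -> TLC raw capacity gain:", capacity_gain(2, 3))
```

Going from MLC to TLC buys 50 per cent more raw capacity from the same cells but doubles the number of levels to distinguish, which is why the larger cells of 3D NAND help.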

Intel/Micron and SanDisk/Toshiba also have 3D flash chips here or coming, and they could well finish off the use of 15,000 rpm disk drives for primary data storage, and start replacing 10,000 rpm drives as well.

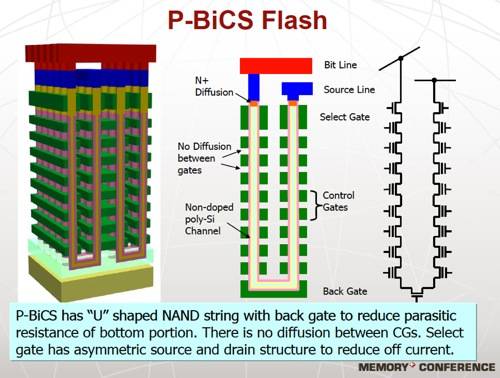

Toshiba p-BICS 3D NAND scheme

Samsung has a 48-layer 3D technology coming, and has demonstrated a so-called 16TB SSD using its 3D flash (actually 15TB), which exceeds the highest disk drive capacity, now 10TB.

Intel and Micron blew non-volatile memory preconceptions out of the water in the second half of the year with their joint 3D XPoint memory announcement. This was non-volatile, but not flash, and not phase-change memory based, although it does employ a material which has a bulk change in some state to signify a binary one or zero via changed resistance levels.

It has two layers and is claimed to be 1,000 times faster than flash, though not as fast as DRAM, with 1,000 times more endurance than flash. XPoint should be less costly than DRAM and will probably be used as a persistent memory tier in servers. Product is promised during 2016.

SanDisk and Samsung both said they had their own ReRAM (Resistive RAM) technologies under development. It should be an exciting 2016.

On the software front, industry-standard NVMe drivers for PCIe flash cards appeared, relieving hardware developers of the need to create their own drivers for their products. A wave of NVMe-driven flash products has already started appearing and NVMe will become as standard and as widely used as SATA or SAS media interfaces.

Disk media

Boring old disk tech did not see the heralded heat-assisted magnetic recording (HAMR) appearing. Instead we got shingled magnetic recording (SMR), industry take-up of Seagate’s Kinetic drive idea, and Seagate’s capitulation to HGST’s helium-filled enclosure technology.

Shingled drives overlap wide write tracks, but not the narrower read tracks, thus increasing capacity at the expense of rewriting whole blocks of tracks when data has to be re-written. Seagate pushed this idea strongly, being at a capacity disadvantage vs HGST with its helium-filled drives. But HGST brought out its own take on the idea with host-managed SMR drives, meaning servers or arrays using these drives have to have their system software modified. We’ve yet to hear of any OEM design wins for such drives.
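The rewrite penalty can be sketched with a toy model. It assumes, purely for illustration, that tracks are grouped into fixed-size bands and that writing one track clobbers the next, so changing a track means rewriting it and every later track in its band. Real drives hide much of this with caches and media-side staging areas, and the function names here are hypothetical.

```python
def tracks_to_rewrite(band_tracks: int, updated_track: int) -> int:
    """Tracks rewritten when one track in a shingled band is updated.

    Toy model: writing track k overwrites part of track k+1, so tracks
    k through the end of the band must all be written again.
    """
    if not 0 <= updated_track < band_tracks:
        raise ValueError("updated_track must lie inside the band")
    return band_tracks - updated_track


def average_rewrite_cost(band_tracks: int) -> float:
    """Mean tracks rewritten per single-track update, uniform over the band."""
    total = sum(tracks_to_rewrite(band_tracks, k) for k in range(band_tracks))
    return total / band_tracks


print(tracks_to_rewrite(10, 0))   # updating the first track rewrites the band
print(tracks_to_rewrite(10, 9))   # updating the last track rewrites only itself
print(average_rewrite_cost(10))
```

Under this model sequential, append-style writes are cheap and random updates are expensive, which is the reason host-managed drives ask system software to write in band-friendly order.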

WDC 10TB helium-filled drive

Helium-filled drive technology swept all before it. Helium has less friction than air, so platters don’t have to withstand friction-induced vibration and can be thinner, meaning an extra platter inside a standard 3.5-inch enclosure. HGST said all its disk drives going forward would be helium-filled, and introduced a 10TB drive towards the end of the year. Desktop PC owners’ mouths are watering at the prospect of so much capacity.

Seagate then revealed it would introduce its own helium drive technology in the first half of 2016. We expect Toshiba to follow, or it will be forced out of the disk drive business.

Key:value store disk drives

An infrastructure for direct-accessed disk drives, ones with an Ethernet NIC on-board and basic GET and PUT object storage facilities, came into being. The idea is to slim down the overall server app-to-disk-drive stack by having server system software manage the drives directly, getting rid of disk array controller file system semantics and block access stack components as well. This was pioneered by Seagate with its Kinetic drives. Scality and other storage software creators added Kinetic drive support.
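To make the GET and PUT idea concrete, here is a minimal in-memory sketch of what a key:value drive looks like from the application's side. It is not the Kinetic API or wire protocol; the class name, method names and capacity check are all assumptions made for illustration, and a real drive would be reached over Ethernet rather than a Python dictionary.

```python
from typing import Dict, Optional


class ToyObjectDrive:
    """Hypothetical stand-in for an Ethernet key:value drive (not Kinetic's API)."""

    def __init__(self, capacity_bytes: int) -> None:
        self._store: Dict[bytes, bytes] = {}
        self._capacity = capacity_bytes
        self._used = 0

    def put(self, key: bytes, value: bytes) -> None:
        """Store or overwrite the object held under `key`."""
        old = self._store.get(key, b"")
        new_used = self._used - len(old) + len(value)
        if new_used > self._capacity:
            raise IOError("drive full")
        self._store[key] = value
        self._used = new_used

    def get(self, key: bytes) -> Optional[bytes]:
        """Return the object for `key`, or None if it is not stored."""
        return self._store.get(key)


drive = ToyObjectDrive(capacity_bytes=1024)
drive.put(b"archive/2015/report", b"storage year in review")
print(drive.get(b"archive/2015/report"))
```

The point of the sketch is what is missing: no file system, no LUNs and no block addresses, just keys and values handed straight to the device.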

HGST and Toshiba added Ethernet-accessed, object-storing disk drives to their product sets as well and this was followed by industry standardisation activities.

Three disk-drive suppliers and eight other IT suppliers formed the Kinetic Open Storage Project (KOSP), which has joined the Linux Foundation as a collaborative project. KOSP intends to produce open-source object storage on these Ethernet-enabled storage devices, which is all to the good.

We can expect Seagate and WDC/HGST to support these drives in their own array products with object storage-style applications being targeted, such as fast-access, archive-style, large data stores.

Tape media

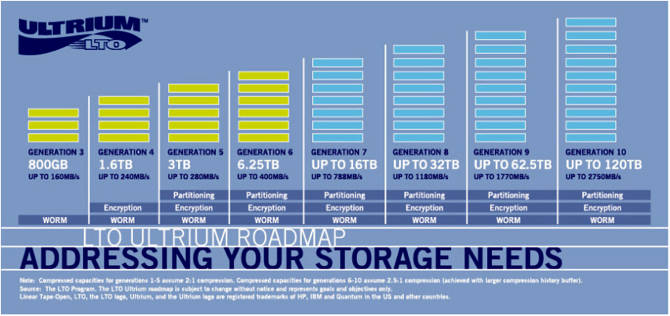

Not to be left out, the tape industry developed a 15TB LTO-7 format to follow the present 6.25TB LTO-6 format, and be backward-compatible with it. These are compressed capacities.
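For readers who want the raw numbers behind those compressed figures, the arithmetic is simple. The sketch below assumes the 2.5:1 compression ratio that the LTO programme quotes for these two generations; that ratio is not stated in this article, and real-world compression depends entirely on the data.

```python
# Assumption: 2.5:1 is the compression ratio quoted for LTO-6 and LTO-7.
COMPRESSION_RATIO = 2.5

# Compressed capacities in TB, as given in the text above.
COMPRESSED_TB = {"LTO-6": 6.25, "LTO-7": 15.0}


def native_tb(compressed_tb: float, ratio: float = COMPRESSION_RATIO) -> float:
    """Raw (uncompressed) capacity implied by a compressed capacity figure."""
    return compressed_tb / ratio


for generation, compressed in COMPRESSED_TB.items():
    print(f"{generation}: {compressed}TB compressed, {native_tb(compressed)}TB raw")
```

On that assumption LTO-6 holds 2.5TB raw and LTO-7 holds 6TB raw, so the generational jump is 2.4 times either way you count it.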

So far there is nothing as cheap and reliable as tape for long-term, archival storage, although Blu-ray optical drives have their niche. Even if or when QLC flash appears it will probably be more expensive on a $/GB basis so tape should carry on streaming for some years yet.

LTO Ultrium roadmap

We might expect high-capacity, shingled and helium-filled drives, spinning at 5,400rpm or thereabouts, to encroach into tape-archive space with faster access, assuming their $/GB is competitive, but LTO-7 will be followed by LTO-9 so tape’s future is pretty stable.

Media reflections

What did not happen in the year was any mass adoption of hybrid disk drives, ones with a slug of flash cache added for faster data access. Nor did we see any addition of multiple read/write heads to disk to shorten data access latency.

Flash drives, not hybrid drives, generally solve the disk drive access latency problem.

Flash production capacity is rising, with China’s Tsinghua group intending to develop its own foundry, and Intel converting a China-based semi-conductor fab it operates so it can produce 3D NAND next year.

The disk drive industry is focused on capacity-optimising its drives, with the Kinetic-style drive idea looking at making disk-based archives cheaper from the system stack point of view. Helium-filled drives will increase drive capacities for normal use, with helium-shingled drives increasing capacities for file-based archive access.

The tape industry is demonstrating its maturity and longevity with LTO-7 and the LTO roadmap. Optical storage remains locked in niche applications.

A whole new tier of server persistent memory is appearing with 3D XPoint and how that is adopted will be of strong interest during 2016.

Lastly, the use of flash chips in memory DIMM sockets, the Flash DIMM concept, is being further developed by Diablo Technologies with its Memory1 technology, and should be enhanced by a Samsung-Netlist partnership.

We started the year with 2D MLC flash, disk and tape as the three storage media life forms, and are ending it with Flash DIMM (and prospective XPoint DIMMs), 3D XPoint persistent memory, 3D MLC and TLC flash, QLC flash in prospect, helium-filled disk drives, Ethernet object drives, more shingled drives, and higher-capacity tape.

There is still HAMR disk drive technology to come as well, plus whatever form Samsung and SanDisk ReRAM technologies take.

Multiplying media options are going to cause development ripples all the way up the storage stack, but that is the subject of another article, as is what happened with storage arrays, applications and suppliers in 2015, where revolutionary changes seemed to happen every month in the year. ®