
Rockport’s Torus prises open hyperscale network lockjaw costs

Hyperscalers rejoice; toroidal doughnut fabrics open the door to 10,000 node and beyond networking

Hyperscale IT is threatened by suicidally high networking costs. As node counts head into the thousands and tens of thousands, network infrastructure costs rocket upwards, because the combination of individual node connections, network complexity, and bandwidth in a traditional (leaf-and-spine) design has a toxic effect on the bill.

The effect is exacerbated when the individual nodes are cheap as chips, relative to servers and storage arrays, as with kinetic disk drives and, eventually, SSDs. Kinetic drives have individual Ethernet connectivity, and an on-board processor responding to object style GET and PUT commands. Seagate commenced building this kind of disk drive with its Kinetic series in 2013. Western Digital/HGST and Toshiba followed suit and a KOSP industry consortium has been formed to drive standards.

If you imagine a hyperscale data centre with an archive array formed from kinetic drives, then a 10,000-node network is a realistic conception. Traditional data centre networking is based on a hierarchical three-layer design of core, distribution and access switches. This has a north-south focus on traffic, with data packets exiting their source device, climbing up the hierarchy and then descending to reach their target.

This is transitioning to a 2-tier spine and leaf design better suited to larger-scale networks and east-west traffic flows across the fabric rather than up and down a hierarchy. Arista, for example, advocates such a design.

Rockport Networks, a networking startup, says this too becomes complex, unwieldy and costly as network node counts move from 1,000 to 10,000 and above. The company is developing its own torus-based networking scheme* to combat the spine and leaf disadvantages.
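For readers unfamiliar with the idea, here is a minimal sketch of a generic torus: nodes sit on a grid that wraps around in every dimension and link directly to their neighbours, with no switch tiers in between. The 10 x 10 x 10 x 10 layout and the function names are our own assumptions for illustration; the article has no details of the topology Rockport actually uses.

```python
# Generic torus sketch. The dimensions below are hypothetical and were
# chosen only because they multiply out to 10,000 nodes; this is not
# Rockport's actual design.

def torus_neighbors(coord, dims):
    """Return the directly linked neighbours of a node in a torus.

    Each axis wraps around (modulo its size), so edge nodes have the
    same number of links as nodes in the middle.
    """
    found = set()
    for axis, size in enumerate(dims):
        for step in (-1, 1):
            nxt = list(coord)
            nxt[axis] = (nxt[axis] + step) % size
            found.add(tuple(nxt))
    found.discard(tuple(coord))  # only matters for axes of size 1
    return sorted(found)


def torus_hops(a, b, dims):
    """Minimum hop count between two nodes, taking the shorter way round each ring."""
    return sum(min((x - y) % s, (y - x) % s) for x, y, s in zip(a, b, dims))


DIMS = (10, 10, 10, 10)   # hypothetical 4-D torus, 10,000 nodes
origin = (0, 0, 0, 0)

print(len(torus_neighbors(origin, DIMS)))      # links per node
print(torus_hops(origin, (5, 5, 5, 5), DIMS))  # farthest node from the origin
```

In this hypothetical layout every node has eight direct links and the worst-case path is 20 hops, which illustrates the usual torus trade-off: no dedicated switch tiers, at the price of more hops between distant nodes.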

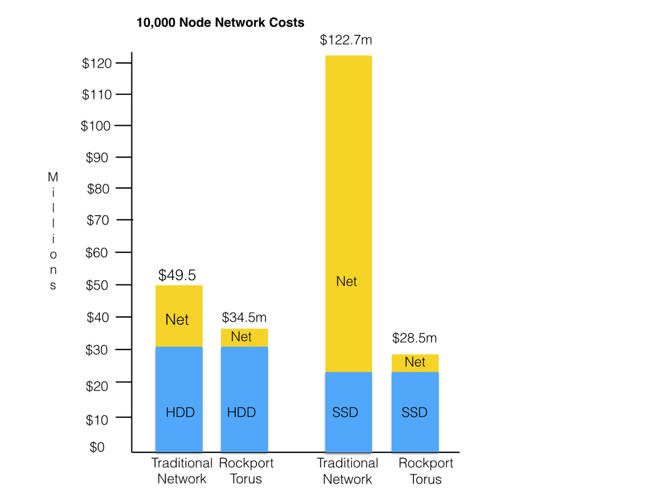

It has produced a spreadsheet-based model to illustrate this, and we have graphed the results for both a 10,000 disk node and 10,000 SSD node network:

There is a $15m advantage for its torus-based network with disk drive nodes and a massive $94.2m advantage with SSD nodes. How is that worked out?

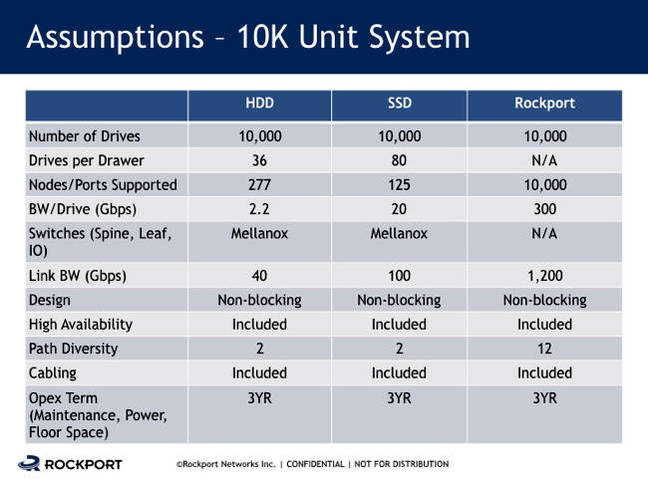

The company showed us two slides, one showing its basic costing ideas:

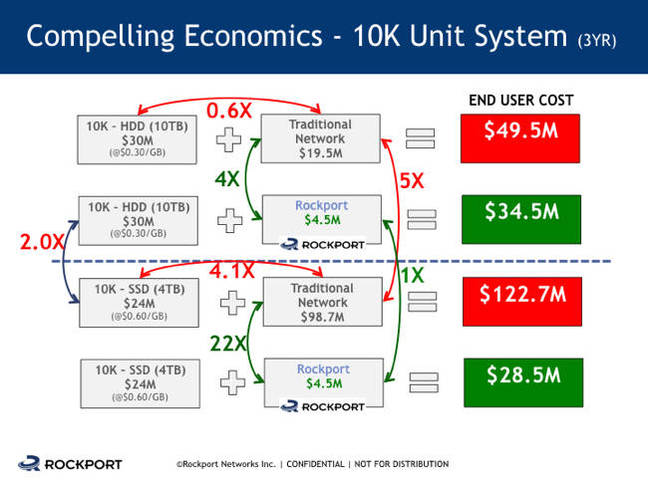

The second slide summarised the application of these costs in a graphic:

Our own chart graphs the right-most column in this second slide. The points we note from these two slides are:

An IT deployment with 10,000 10TB disk drives and traditional networking costs $30m for the drives and $19.5m for the network, totalling $49.5m.

- The traditional network costs 0.65 times the HDD cost.

- Substitute Rockport's network, which costs $4.5m, and the total goes down to $34.5m, a saving of $15m.

- The Rockport network saves 30.3 per cent of the traditional deployment.

- The Rockport network costs 76.9 per cent less than the traditional network.

And for the SSD case we note an IT deployment with 10,000 4TB solid state drives and traditional networking costs $24m for the drives and $98.7m for the network, totalling $122.7m.

- The traditional network costs 4.1 times the SSD cost.

- Substitute Rockport's network, which costs $4.5m, and the total goes down to $28.5m, a saving of $94.2m.

- The Rockport network saves 76.8 per cent of the traditional deployment.

- The Rockport network costs 95.4 per cent less than the traditional network.

- The SSDs cost twice as much per GB as the HDDs.
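The arithmetic behind both sets of bullet points can be checked with a short script. This is our own sketch, not Rockport's spreadsheet: the dollar figures come from the slides as quoted above, while the class and field names are invented for illustration, and decimal terabytes (1TB = 1,000GB) are assumed for the per-GB figure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Deployment:
    """One 10,000-drive scenario; all money values are in $m."""
    name: str
    drive_count: int
    drive_capacity_tb: float
    drive_cost_m: float          # total cost of the drives
    traditional_net_m: float     # spine-and-leaf network cost
    rockport_net_m: float = 4.5  # torus network cost quoted for both cases

    def summary(self) -> dict:
        trad_total = self.drive_cost_m + self.traditional_net_m
        rock_total = self.drive_cost_m + self.rockport_net_m
        saving = trad_total - rock_total
        # Assumes decimal units: 1TB = 1,000GB.
        total_gb = self.drive_count * self.drive_capacity_tb * 1000
        return {
            "traditional_total_m": trad_total,
            "rockport_total_m": rock_total,
            "saving_m": saving,
            "network_to_drive_ratio": self.traditional_net_m / self.drive_cost_m,
            "saving_pct_of_deployment": 100 * saving / trad_total,
            "network_cost_cut_pct": 100 * saving / self.traditional_net_m,
            "drive_cost_per_gb_usd": self.drive_cost_m * 1_000_000 / total_gb,
        }


hdd = Deployment("HDD", 10_000, 10, drive_cost_m=30.0, traditional_net_m=19.5)
ssd = Deployment("SSD", 10_000, 4, drive_cost_m=24.0, traditional_net_m=98.7)

for d in (hdd, ssd):
    print(d.name)
    for key, value in d.summary().items():
        print(f"  {key}: {value:.2f}")
```

Running it gives the totals and savings listed above, and shows where the "twice as much per GB" line comes from: $0.30 per GB for the disk drives against $0.60 per GB for the SSDs.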

In both the traditional HDD and SSD system deployments Mellanox spine and leaf switches are used, with 40Gbit/s bandwidth for the HDD system and 100Gbit/s bandwidth for the SSD system, hence the SSD system's roughly 5X higher networking cost.

Rockport points out that the networking switches take up rack space and thus, for a cloud service provider (CSP), deny it rack space for direct revenue-generating devices. If, say, 4U of space per rack is taken up by network switches, then that space cannot be used by servers on which the CSP's customers can be charged to run applications in virtual machines.

Thus, according to the model presented by Rockport, its torus network is radically less expensive than spine-and-leaf networking, and can also increase a CSP's revenue-generating capability by opening up blocked rack space.

Our own take on this is that there is a tremendous latent network cost and complexity inhibitor to widescale deployment of kinetic-style drives and that Rockport's torus network architecture shrinks the enormous network elephant in the room down to a much friendlier mammal in size (and cost). ®

* With hierarchical 3-tier networking moving to a 2-tier spine and leaf architecture, there is an attraction in thinking of Rockport's torus-based architecture as effectively being a single tier.