
Mellanox plans to SoC it to storage speed with Multi-ARM BlueField

NVMe Fabric adaptor chatter overheard in earnings call

InfiniBand and Ethernet adaptor biz Mellanox has storage acceleration SoCs coming to provide faster external array access across NVMe fabrics.

Mellanox CEO Eyal Waldman talked about NVMe over Fabrics (NVMeF) in his company's second quarter 2016 earnings call. His company makes InfiniBand and Ethernet networking devices, and is doing very well, with a fifth consecutive quarter of record revenue.

NVMeF is a way of connecting an array of external NVMe flash drives to accessing servers over a network link that is so fast that array data accesses take about as long as accesses to local, directly connected NVMe flash drives. Vendors like EMC with DSSD and Mangstor have products available now. E8 will launch at next month's Flash Memory Summit. Kaminario and Tegile have plans to adopt the technology, and both NetApp and Pure Storage are keeping a close eye on it.

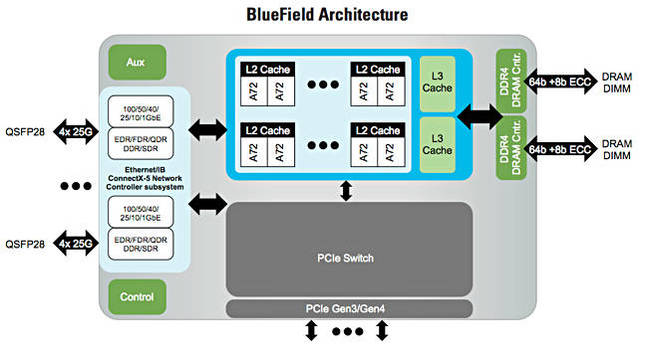

During the call Waldman said: "Integration efforts between EZchip and Mellanox have been ongoing since the acquisition closed in late February. During the second quarter, we announced the first joint product, our multi-core system-on-a-chip solution named BlueField. BlueField combines multiple ARM cores interconnected by a coherent mesh network with the leading ConnectX-5 network adapter. Initial target markets include storage and network applications."

In storage, the multi-ARM core BlueField system-on-a-chip (SoC) could act as a controller for a group of NVMe flash drives, with access over 25/50/100 Gbit/sec Ethernet.

Waldman then said: "Utilising offload accelerators within the embedded ConnectX-5 adapter, BlueField delivers a programmable intelligent network solution. Within storage markets, BlueField can be utilized in scale-out NVMe over fabric systems as storage controller."

He said BlueField is designed to support increasing demand from high-performance storage networks such as NVMe with IOs heading to 20 Gbit/sec and beyond per drive.
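To put that per-drive figure in context, here is a rough back-of-the-envelope sketch of how many 20 Gbit/sec drives would fill each of the Ethernet link speeds mentioned above. It assumes raw line rate with no protocol overhead, and the function name `drives_to_fill` is ours for illustration, not anything from Mellanox.

```python
# Figures taken from the article: 20 Gbit/sec per drive, 25/50/100 Gbit/sec Ethernet.
# Assumption: raw line rate only, ignoring protocol and encoding overhead.
DRIVE_GBITS = 20
LINK_SPEEDS_GBITS = (25, 50, 100)


def drives_to_fill(link_gbits, drive_gbits=DRIVE_GBITS):
    """Return how many whole drives fit within a link's raw line rate."""
    return link_gbits // drive_gbits


for link in LINK_SPEEDS_GBITS:
    count = drives_to_fill(link)
    print(f"{link} Gbit/sec link: {count} drive(s) at {DRIVE_GBITS} Gbit/sec each")
```

On these assumptions a handful of fast drives is enough to saturate even a 100 Gbit/sec port, which is why the network end of an NVMeF array matters so much.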

Waldman sees opportunity with NVMeF: "NVMe over fabrics is a new micro trend emerging in storage markets offering customers a replacement for legacy Fibre Channel installations. We believe this trend will result in multiple opportunities for revenue growth from BlueField in 2017."

The BlueField product will begin shipping in 2017.

Mellanox says: "The BlueField family of SoC devices integrates an array of 64-bit ARMv8 A72 cores interconnected by a coherent mesh network, DDR4 memory controllers, multiple Ethernet/InfiniBand ports supporting 10/25/40/50/100Gb/s, an integrated PCIe switch with multi-port PCIe Gen 3.0/4.0 supporting EP SR-IOV and RC functionality. The SoC targets I/O-intensive and compute intensive applications that combine data-plane and control-plane services for storage and networking."

Check out a BlueField datasheet here. The datasheet mentions storage acceleration: "Storage applications will see improved performance and lower latency with advanced NVMe over fabric and higher bandwidth. The embedded PCIe switch enables customers to build stand-alone storage appliances. Standard block and file access protocols can leverage RoCE for high-performance storage access. A consolidated compute and storage network achieves significant cost-performance advantages over multifabric networks. On top of hardware storage accelerations, the SoC ARM cores bring sufficient compute power for storage applications: NVMf target, Ceph, iSER, Lustre, iSCSI/TCP offload, Flash Translation Layer, RAID rebuilds, data compression/decompression, and deduplication."

So we can let imagination fly and envisage your average external storage array using BlueField SoCs to transform itself into a network access latency killer. Seagate's Dot Hill and ClusterStor arrays could use this. Ditto Nexsan, Dell Compellent arrays, HDS VSP, etc, etc. The 2017-2018 period could see a pretty sustained attack on Fibre Channel and iSCSI storage array access from NVMeF. ®