
Text input from thin air: boffins give Wi-Fi the finger with AI

There's a point to it, though

Chinese boffins reckon they're the first to capture text input to mobile devices using gestures sensed by commodity Wi-Fi devices.

The researchers, from the University of Science and Technology of China, have dubbed the scheme WiFinger and describe it in a paper.

So far, the researchers have sensed movement finely enough to distinguish American Sign Language gestures down to individual digits with better than 90 per cent accuracy, and to manage better than 82 per cent for “single individual number text input”.

Readers will be familiar with using Wi-Fi multipath distortion to recognise body movements – even through walls, in some cases.

WiFinger also relies on multipath distortion, but focuses on how finger movements distort Channel State Information (CSI).

The researchers say the “micro motions” involved in finger gestures cause “a unique pattern in the time series of CSI values” (dubbed “CSI waveforms” in the paper), so each gesture leaves its own distinctive waveform.
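To illustrate the idea that each gesture leaves a recognisable CSI waveform, here is a minimal Python sketch. It is not WiFinger's pipeline: it assumes a gesture has already been boiled down to a single 1-D series of CSI amplitudes, and it labels an unknown series by finding the closest stored template under dynamic time warping (DTW), a standard technique that tolerates the same gesture being made faster or slower. The function names and toy waveforms are hypothetical.

```python
import math
from typing import Dict, Sequence


def dtw_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Dynamic time warping distance between two 1-D waveforms.

    DTW lets one series be stretched or compressed in time to line up with
    the other, so the same gesture made at a different speed still matches.
    """
    n, m = len(a), len(b)
    # cost[i][j] holds the cheapest cumulative cost of aligning a[:i] with b[:j]
    cost = [[math.inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            step = abs(a[i - 1] - b[j - 1])
            cost[i][j] = step + min(
                cost[i - 1][j],      # b[j-1] reused for another sample of a
                cost[i][j - 1],      # a[i-1] reused for another sample of b
                cost[i - 1][j - 1],  # advance both series together
            )
    return cost[n][m]


def classify(sample: Sequence[float], templates: Dict[str, Sequence[float]]) -> str:
    """Return the label of the stored template closest to `sample` under DTW."""
    return min(templates, key=lambda label: dtw_distance(sample, templates[label]))


# Hypothetical amplitude traces: one stored template per gesture
templates = {
    "one": [0, 0, 1, 2, 1, 0, 0],
    "two": [0, 1, 2, 1, 0, 1, 2, 1, 0],
}
slow_one = [0, 0, 0, 1, 1, 2, 2, 1, 0, 0]  # same shape as "one", made more slowly
print(classify(slow_one, templates))  # one
```

Real CSI is noisy and multi-dimensional (a separate stream per subcarrier and antenna pair), so a working system would likely need filtering and feature extraction before a matching step like this one.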

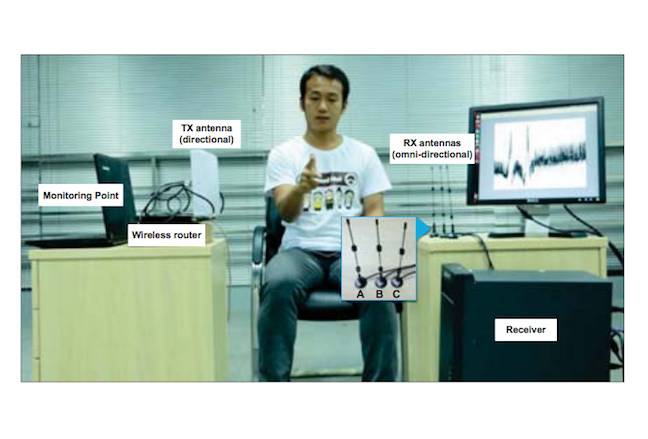

The researchers used an Intel 5300 Wi-Fi card with a modified driver to collect the CSI needed to detect gestures.

Right now, WiFinger imposes constraints on the user – rather like the gesture recognition on the Heart of Gold (The Hitchhiker's Guide to the Galaxy), it seems you have to “sit infuriatingly still” for the system to work.

Sit very still ... the WiFinger experimental setup

Even so, sensing Wi-Fi signals is a promising direction for accessibility research, and the paper notes the scheme can be extended to other forms of gesture recognition.

The research, by Hong Li, Wei Yang, Jianxin Wang, Yang Xu and Liusheng Huang of the University of Science and Technology of China, was presented at the 2016 ACM International Joint Conference on Pervasive and Ubiquitous Computing (Ubicomp '16). ®