Google: We look forward to running non-Intel processors in our cloud

OK, so someone's angling for a discount

Google has gently increased pressure on Intel – its main source for data-center processors – by saying it is "looking forward" to using chips from IBM and other semiconductor rivals.

The web advertising giant said it hopes to use a mix of "architectures within our cloud" in the future.

Back in April, Google revealed it had ported its online services to Big Blue's Power processors, and that its toolchain could output code for Intel x86, IBM Power and 64-bit ARM cores at the flip of a command-line switch. As well as Power systems, Google is experimenting with ARM server-class chips and strives to be as platform agnostic as possible.

The keyword here is experimenting: the vast majority of Google's production compute workloads right now run on Intel x86 processors. In fact, according to IDC, more than 95 per cent of the world's data center compute workloads run on Intel chips. Thus, to keep its supply options open, to keep Intel from price-gouging it, and to evaluate competing hardware, Google test-drives servers with non-x86 CPUs. It makes sense to widen your horizons.

On Friday this week, Google stepped up from simply toying with gear from Intel's competitors to signaling it seriously hopes to deploy public cloud services powered by IBM Power chips.

"We look forward to a future of heterogeneous architectures within our cloud," said John Zipfel, Google Cloud's technical program manager.

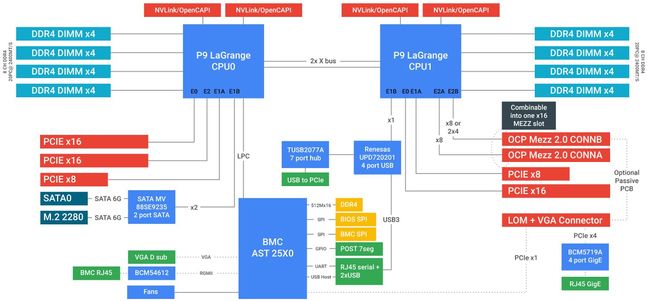

This comes as Google shared draft blueprints for the Zaius P9 server: an OpenCAPI and Open-Compute-friendly box with an IBM Power9 scale-out microprocessor at its heart. That's the same Power9 the US government is using in its upcoming monster supercomputers. Google has worked with Rackspace, IBM and Ingrasys on the Zaius designs – you may recall that the Zaius concept was unveiled in April.

OpenCAPI is the anyone-but-Intel interconnect fabric that emerged this week; the Zaius P9's OpenCAPI compatibility, and support for Nvidia's high-speed NVLink, further highlight Google's reluctance to be shackled to Intel. As well as supporting OpenCAPI, the Zaius P9 has 16 channels of DDR4 memory, SATA and USB interfaces, gigabit Ethernet, BMC and serial access, and a bunch of PCIe gen-4 interfaces.

It takes a 48V supply – a voltage level that apparently hits a sweet-spot for data center machines in terms of power and energy efficiency – and meets the Open Rack v2 standard. The blueprints will be submitted to the Open Compute Project (OCP) to evaluate and approve.

Hyper-scale companies like Google and Facebook publish their server specifications so Asian hardware manufacturers can mass produce them on the cheap, relatively speaking. That way, cloud giants get to buy exactly what they want without having to negotiate deals with traditional server vendors like Hewlett Packard Enterprise.

"We've shared these designs with the OCP community for feedback, and will submit them to the OCP Foundation later this year for review," explained Zipfel.

"This is a draft specification of a preliminary, untested design, but we’re hoping that an early release will drive collaboration and discussion within the community."

Aside from compute workloads, we understand Google uses customized Nvidia GPUs for its AI-powered Google Translate service, and no doubt in other platforms too, alongside its machine-learning ASIC. It also uses a customized network controller with built-in intelligence to manage and access vast pools of cached information with low latency. If you work at Google, and you need some silicon for your application, the answer is not always "Intel." ®