
From drugs to galaxy hunting, AI is elbowing its way into boffins' labs

Machine learning is cropping up more and more in research papers – does it work?

From chemical labs to galaxies

Data is fuel for AI: the more information you can throw at a machine-learning package, the better it will generally perform. Unlike the tightly regulated health world, astrophysics is more open with its data. The biggest space agencies on Earth, such as NASA and ESA, happily share details of discoveries made on their missions to propel space exploration and understanding of the cosmos.

This is all good news for engineers and scientists using AI software to tackle astrophysics challenges.

Space instruments take in massive amounts of data, too. The Large Synoptic Survey Telescope under construction in Chile is expected to haul in 3TB of data per night – a small amount compared to the Square Kilometre Array project at 15TB per second, Kevin Schawinski, professor at the department of physics at ETH Zurich, Switzerland, said.
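To put those two figures on the same scale, here is a back-of-the-envelope sketch. It assumes, purely for illustration, that the Square Kilometre Array sustains the quoted 15TB per second around the clock and that decimal units are used; neither assumption comes from Schawinski.

```python
# Figures quoted in the article.
LSST_TB_PER_NIGHT = 3
SKA_TB_PER_SECOND = 15

# Assumption for illustration only: the SKA rate is sustained for a full day.
SECONDS_PER_DAY = 24 * 60 * 60

ska_tb_per_day = SKA_TB_PER_SECOND * SECONDS_PER_DAY
nights_of_lsst_per_ska_day = ska_tb_per_day // LSST_TB_PER_NIGHT

print(f"SKA: {ska_tb_per_day:,} TB per day")
print(f"Equivalent LSST nights: {nights_of_lsst_per_ska_day:,}")
```

Under those assumptions, one day of SKA output would match roughly 432,000 nights of LSST observing.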

“Astrophysics is a very fertile place as the large datasets are well suited for machine learning,” he told The Register.

Schawinski has long been fascinated with galaxies. With the help of Chris Lintott, presenter of the BBC’s The Sky at Night TV show, he founded Galaxy Zoo, a crowdsourced project that invites people to help classify the shapes of galaxies.

The sight of millions of bright stars littered among swirls of interstellar dust, all set against a backdrop of obsidian nothingness, is beautiful to look at. We're used to seeing stunning photographs from the heavens – these are often digitally remastered from grainy, noisy originals snapped by telescopes. It is difficult to capture clear images, and not every snap from a telescope is a Kodak moment.

“It’s like having two worlds. One where the universe is perfect and you can see galaxies with infinite resolution, and the other where it’s imperfect to look at due to the noise and distortion,” Schawinski told The Register.

If only there were some software to intelligently clean up images from space, and pick out the features – such as star systems and galaxies – for boffins to study.

Schawinski stumbled across a paper about generative adversarial networks (GANs) written by Ian Goodfellow, a researcher at OpenAI, who is credited with inventing the model along with his colleagues at the University of Montreal.

GANs work by using two neural networks – a generator and a discriminator – that compete against each other. The system is fed a set of real images; the generator tries to produce convincing imitations, while the discriminator tries to tell whether each image it sees is real or generated.

The discriminator's verdicts are used as feedback to tune the generator until its images get past the discriminator. Eventually, the real and fake images are very similar.
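The tug-of-war between the two networks can be sketched with the loss each one tries to minimize. This is a minimal illustration, not GalaxyGan's code: the function names are hypothetical, the discriminator scores are made-up numbers standing in for a real network's output (its estimated probability that an image is real), and the generator loss shown is the commonly used non-saturating variant.

```python
import math

def discriminator_loss(scores_real, scores_fake):
    """Low when real images score near 1 and generated images score near 0."""
    real_term = -sum(math.log(s) for s in scores_real) / len(scores_real)
    fake_term = -sum(math.log(1.0 - s) for s in scores_fake) / len(scores_fake)
    return real_term + fake_term

def generator_loss(scores_fake):
    """Low when the discriminator scores generated images as real (near 1)."""
    return -sum(math.log(s) for s in scores_fake) / len(scores_fake)

# Hypothetical scores early in training: the discriminator spots fakes easily.
early_real, early_fake = [0.9, 0.95], [0.1, 0.05]
# Hypothetical scores late in training: it can no longer tell them apart.
late_real, late_fake = [0.5, 0.5], [0.5, 0.5]

print("generator loss, early:", generator_loss(early_fake))
print("generator loss, late: ", generator_loss(late_fake))
print("discriminator loss, late:", discriminator_loss(late_real, late_fake))
```

When the discriminator is reduced to guessing, every score is 0.5, the generator's loss settles at log 2 and the discriminator's at 2 log 2: the point at which real and generated images look alike to it.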

Schawinski realized GANs could get him closer to the idea of the perfect universe. “It clicked almost right away, that it could be used to bridge the gap between both worlds.” Working together with colleagues from his university's computer science department, Schawinski and his team began training his GAN-based GalaxyGan software on images of galaxies from the Sloan Digital Sky Survey. A paper on GalaxyGan [PDF] has also been submitted to ICLR.

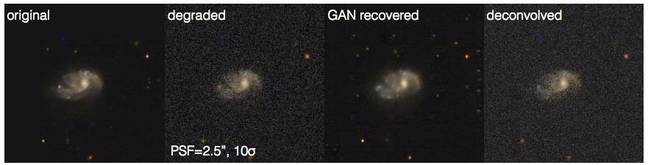

Pairs of galaxy images are used during training. One image is high quality, and the other is degraded by blurring the image and adding noise to it. The GAN then tries to learn to recover the botched image by minimizing the difference between the recovered and non-degraded image. The idea is to train the software to fix up photographs distorted by physical effects and other interference.
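A rough sketch of how such a training pair might be built, and how the gap between two images can be scored, is shown here. Everything in it is an assumption made for illustration: the 3x3 box blur, the Gaussian noise level, the toy 5x5 "galaxy" and the helper names are stand-ins, not the degradation or loss used by the GalaxyGan team.

```python
import random

def box_blur(image):
    """Blur a 2D list of floats with a 3x3 mean filter, clamping at the edges."""
    height, width = len(image), len(image[0])
    blurred = []
    for y in range(height):
        row = []
        for x in range(width):
            total = 0.0
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    yy = min(max(y + dy, 0), height - 1)
                    xx = min(max(x + dx, 0), width - 1)
                    total += image[yy][xx]
            row.append(total / 9.0)
        blurred.append(row)
    return blurred

def add_noise(image, sigma, rng):
    """Add independent Gaussian noise with standard deviation sigma to each pixel."""
    return [[pixel + rng.gauss(0.0, sigma) for pixel in row] for row in image]

def make_training_pair(clean, sigma=0.05, seed=0):
    """Return (degraded, clean): the network's input and the target it should recover."""
    rng = random.Random(seed)
    degraded = add_noise(box_blur(clean), sigma, rng)
    return degraded, clean

def mean_abs_difference(a, b):
    """Average per-pixel absolute difference between two same-sized images."""
    total = sum(abs(pa - pb) for ra, rb in zip(a, b) for pa, pb in zip(ra, rb))
    return total / (len(a) * len(a[0]))

# Toy "galaxy": one bright pixel in the middle of a dark 5x5 field.
clean = [[0.0] * 5 for _ in range(5)]
clean[2][2] = 1.0

degraded, target = make_training_pair(clean)
print("difference before recovery:", mean_abs_difference(degraded, target))
```

During training, a recovered image would be scored against the clean target in the same way, and the network adjusted to push that difference down.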

A demonstration of GalaxyGan recovering a blurry image and a comparison with a deconvolution technique to restore the blurry picture ... Photo credit: Schawinski et al

“The GAN-recovered picture will never be better than or equal to the original unspoiled image,” Schawinski warned. “On one hand it’s great that you can make out some of the small features that were lost, but on the other it’s just a statistical reconstruction.”

It fails on rare features it hasn’t encountered much before, as it cannot reconstruct objects it hasn’t learned to recognize. Although using a GAN provides better results than using traditional deconvolution methods to correct distortion and noise, the network's performance is limited by its training set.

If it’s trained on spiral galaxies, then it won’t do so well on elliptical or irregular galaxies. “It’s a new method, and we need to calibrate it and see how it behaves under different circumstances. It has the potential to be transformational, but we need to do more research,” Schawinski said.

The trend of using AI in science is growing, Schawinski told us. He has noticed interest growing among physics students who are keen to learn how to use the powerful algorithms. He plans to continue using machine learning, and has even set up a website that includes the code for the GalaxyGan and will list future projects for other researchers who are interested.

“AI provides another set of tools that we need to handle to make new discoveries. I think it’ll stick around, but it’ll disappear below the surface. Right now it’s still shiny and new, but after a while it’ll just be another tool people use,” he said. ®