
Dear rioters: Hiding your face with scarves, hats can't fool this AI system

Accuracy is not great – but it's a start for computer-aided crackdowns by cops

Software can take a decent stab at identifying looters, rioters and anyone else who hides their faces with scarves, hats, and glasses, a study has shown.

A paper by a team of researchers at the University of Cambridge, UK, the National Institute of Technology in Warangal, India, and the Indian Institute of Science, describes how a convolutional neural network can be trained for so-called disguised face identification (DFI).

Amarjot Singh, based at Cambridge's department of engineering, told The Register on Tuesday that DFI is of “great interest to law enforcement as they can use this technology to identify criminals. This is the primary reason we attempted to solve this problem.”

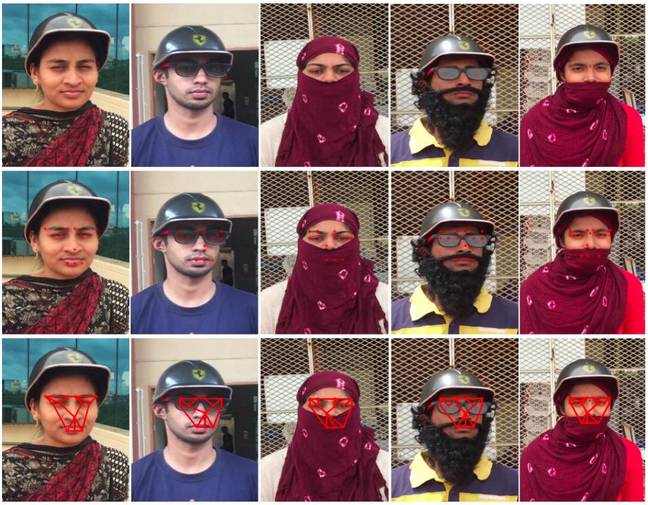

For the study, a convolutional neural network was trained by showing it hundreds of images of people who had concealed their mugs using bits of clothing from sunglasses to beards and helmets. For each snap, 14 points were identified: ten markers to memorize parts of the eyebrow and eye regions, one for the nose, and three for the lips.

All the points connect to create what the researchers call a “star-net structure.” The distances and angles between the points on the network are analyzed to learn the structure of people's faces, even when obscured by shades, balaclavas, and so on.
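To make the distances-and-angles idea concrete, here is a minimal Python sketch. It is not the researchers' code. It assumes, purely for illustration, that one key point (say, the nose) sits at the center of the star and every other point is described by its distance and angle from it. The function name `star_net_features` and the toy coordinates are hypothetical.

```python
import math

def star_net_features(points, center_index=0):
    """Return a (distance, angle_in_degrees) pair for every non-center point.

    Assumption for illustration only: the point at center_index acts as
    the hub of the star, and each other point is measured from it.
    """
    cx, cy = points[center_index]
    features = []
    for i, (x, y) in enumerate(points):
        if i == center_index:
            continue  # the hub has no distance or angle to itself
        dx, dy = x - cx, y - cy
        distance = math.hypot(dx, dy)
        angle = math.degrees(math.atan2(dy, dx))
        features.append((distance, angle))
    return features

# Toy input: four made-up (x, y) points rather than the full 14.
points = [(0.0, 0.0), (3.0, 4.0), (-1.0, 0.0), (0.0, 2.0)]
features = star_net_features(points)
for distance, angle in features:
    print(f"distance={distance:.2f} angle={angle:.1f}")
```

With the full set of 14 points, a sketch like this would yield 13 distance-angle pairs per face, which is the kind of compact description the next step relies on.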

The crucial thing to understand here is that the system is trained to identify these markers from the pixels of any obscured face. It is taught how to take a masked head and create from it a fingerprint, if you will, of the structure of a person's face out of these 14 points.

These points, this facial fingerprint, can then be used to scan a larger database of pictures the DFI machine-learning system hasn't seen before, such as a library of driving license photos or mugshots of known troublemakers, and identify potential matches.

In other words, shown a cropped still from CCTV footage of a riot, the program would automatically place the 14 markers. That pattern of markers would then be used to match a face in a database. And bingo, your miscreant is unmasked.

That's how it would work in theory. This thing is still a proof of concept.
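The matching step can be pictured as a nearest-neighbor lookup. The sketch below is an assumption about how such a lookup might work, not the method described in the paper: it treats each face as a plain list of numbers and picks the database entry with the smallest Euclidean distance to the disguised face. The names `match_face`, `gallery` and `probe`, and all the values, are made up for the example.

```python
import math

def match_face(probe, gallery):
    """Return (name, distance) for the gallery entry closest to the probe.

    Assumption for illustration only: faces are compared by Euclidean
    distance between equal-length feature vectors.
    """
    best_name, best_distance = None, math.inf
    for name, vector in gallery.items():
        distance = math.dist(probe, vector)  # Euclidean distance (Python 3.8+)
        if distance < best_distance:
            best_name, best_distance = name, distance
    return best_name, best_distance

# Made-up feature vectors standing in for a database of known faces.
gallery = {
    "person_a": [1.0, 0.5, 2.0],
    "person_b": [0.2, 1.5, 0.9],
}
# Made-up vector standing in for a disguised face from a CCTV still.
probe = [0.3, 1.4, 1.0]

name, distance = match_face(probe, gallery)
print(name, round(distance, 3))
```

A real system would also need a distance threshold, so that a face belonging to nobody in the database is reported as "no match" rather than pinned on the least-bad candidate.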

Images of five different participants. The top row is a clean input image. The second row has the fourteen points mapped out. The third row shows the star-net structure ... (Photo credit: Singh et al)

A thousand images of people in various disguises were used for training, and 500 were held back for validation and another 500 for testing.
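For the curious: the training set is what the network learns from, the validation set is used to tune it along the way, and the test set is kept aside for a final accuracy check on images it has never seen. A minimal sketch of a 1,000/500/500 split follows. It assumes a simple random shuffle, which may not be how the researchers divided their images, and the function name `split_dataset` and the image IDs are hypothetical.

```python
import random

def split_dataset(items, n_train=1000, n_val=500, n_test=500, seed=0):
    """Shuffle items and cut them into train, validation and test lists.

    Assumption for illustration only: a seeded random shuffle decides
    which image lands in which set.
    """
    if n_train + n_val + n_test > len(items):
        raise ValueError("not enough items for the requested split sizes")
    shuffled = list(items)  # copy so the caller's list is left untouched
    random.Random(seed).shuffle(shuffled)
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:n_train + n_val + n_test]
    return train, val, test

# Placeholder IDs standing in for 2,000 photos of disguised faces.
image_ids = [f"img_{i:04d}" for i in range(2000)]
train, val, test = split_dataset(image_ids)
print(len(train), len(val), len(test))
```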

Although Singh hopes it will be helpful for police trying to finger crooks and looters, it has sparked some fears of authoritarian regimes using it to digitally unmask innocent folks and incriminate legitimate protesters and activists. Singh said that while he agreed that there is a concern regarding the violation of privacy and right to assemble, the technology could be used to “take many criminals off the streets as well.”

“As to make sure that this doesn’t fall into the wrong hands, we will just have to be aware that this technology is available only to the organizations that intend to use it for the good,” he said.

AI is still easily fooled

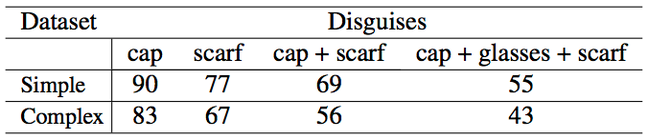

It’s important to realize that while the accuracy of this computer vision system is good for early research, on a practical level it isn’t that awesome. Cluttering up the background with buildings and objects is enough to lower its precision from 85 per cent to 56 per cent.

The more the face is obscured, the more difficult it is to recognize. A combination of a hat, scarf and glasses can lower the accuracy to 43 per cent. All the training images also feature clear shots of people facing the camera, something that is not often the case in fuzzy stills from CCTV footage. A lot of that material will feature people standing at various angles to the cameras. Then there's the fact that people wearing full-cover masks – think V for Vendetta – will fool it all the time.

Table of the system's accuracy rates

The training dataset is also pretty limited since it’s expensive to pay participants to dress up in various items and poses and have their photos taken so that their faces can be mapped out. Each person was paid about ten dollars for a 30-minute session, Singh said.

“The current dataset has 10 disguises and is composed of primarily Indian and some Caucasian people. This needs to be expanded to more disguises with people from other ethnicities as well to increase the effectiveness of the DFI system,” he added. In other words, you're going to be out of luck if your protesters aren't white or Indian.

So far this is a lab system. It needs thousands upon thousands more pictures to train it so it can accurately identify facial markers from a range of different types of human, and not be misled by objects bearing only a distant similarity to a person's face.

This will require more efficient algorithms and code to achieve at scale. It isn't there yet.

The researchers will present their work – unearthed this week by Jack Clark's Import AI newsletter – next month at the IEEE International Conference on Computer Vision Workshop in Venice, Italy. ®