AI in Medicine? It's back to the future, Dr Watson

Why IBM's cancer projects sound like Expert Systems Mk.2

Analysis "OK, the error rate is terrible, but it's Artificial Intelligence – so it can only improve!"

Of course. AI is always "improving" – as much is implied by the cleverly anthropomorphic phrase, "machine learning". Learning systems don't get dumber. But what if they don't actually improve?

The caveat accompanies almost any mainstream story on machine learning or AI today. But it was actually being expressed with great confidence forty years ago, the last time AI was going to "revolutionise medicine".

IBM's ambitious Watson Health initiative will unlock "$2 trillion of value," according to Deborah DiSanzo, general manager of Watson Health at IBM.

But this year it has attracted headlines of the wrong kind. In February, the cancer centre at the University of Texas put its Watson project on hold, after spending over $60m with IBM and consultants PricewaterhouseCoopers. Earlier this month, StatNews published a fascinating investigative piece into the shortcomings of its successor, IBM's Watson for Oncology. IBM marketing claims this is "helping doctors out-think cancer, one patient at a time".

The StatNews piece is a must-read if you're thinking of deploying AI, because it's only tangentially about Artificial Intelligence, and actually tells us much more about the pitfalls of systems deployment, and cultural practice. In recent weeks Gizmodo and MIT Technology Review have also run critical looks at Watson for Oncology. In the latter, the system's designers despaired at the claims being made on its behalf.

"How disappointing," wrote Andy Oram, an editor at tech book publisher O'Reilly, to net protocol pioneer Dave Farber. "This much-hyped medical AI is more like 1980s expert systems, not good at diagnosing cancer."

What does he mean?

Given how uncanny it is that so much of today's machine learning mania echoes earlier hypes, let's take a step back and examine the fate of one showpiece Artificial Intelligence medical system, and see if there's anything we can learn from history.

MYCIN

The history of AI is one of long "winters" of disinterest punctuated by brief periods of hype and investment. Developed by Edward Shortliffe, MYCIN was a backward-chaining system designed to help clinicians. It emerged early on in the first "AI winter".

MYCIN used AI to identify the bacteria causing infections, and based on information provided by a clinician, recommended the correct dosage for the patient.

MYCIN also bore the hallmarks of experience. The first two decades of AI had been an ambitious project to encode all human knowledge in symbols and rules, so they could be algorithmically processed by a digital computer. Despite great claims made on its behalf, this had yielded very little of use. Then in 1973, the UK withdrew funding for AI from all but three UK universities. The climate had gone cold again.

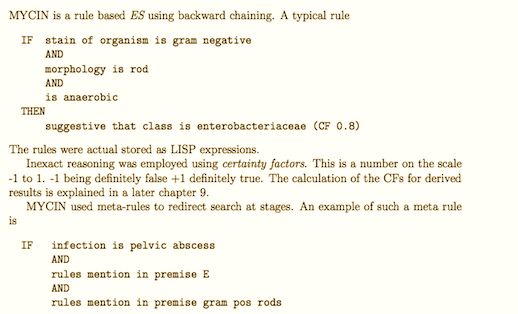

AI researchers were obliged to explore new approaches. The most promising seemed to be to give the systems constraints – simplifying the problem space. Micro-worlds, artificially simple situations, were one approach; Terry Winograd's block-stacker SHRDLU was one example. From micro-worlds came rules-based "expert systems". MYCIN was such a rules-based system. Comprising 150 IF-THEN statements, MYCIN made inferences from a limited knowledge base.

There's a detailed description of MYCIN here (PDF).

At the time, MYCIN's defenders claimed that no "expert" could outperform it, and it prompted a wave of enthusiasm. MYCIN had much to commend it. It was honest, giving the user a probability figure and a full trace of all the evidence.
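For readers who have never met one, here is a minimal sketch of how a backward-chaining rule system of this general kind can work, in Python. It is not MYCIN's code. The two rules, the fact names and the `prove` function are invented for illustration, and the way confidence figures are combined (weakest premise times rule strength, with support from several rules merged as `a + b * (1 - a)`) is a simplified, positive-evidence-only version of the certainty-factor arithmetic usually attributed to MYCIN.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    premises: tuple   # every premise must hold (IF a AND b AND ...)
    conclusion: str   # THEN this conclusion is supported
    strength: float   # how strongly the rule supports it, 0.0 to 1.0


# Hypothetical rules, for illustration only.
RULES = [
    Rule(("gram_negative", "rod_shaped", "anaerobic"), "bacteroides", 0.6),
    Rule(("stain_pink",), "gram_negative", 0.9),
]


def prove(goal, facts, rules, trace, depth=0):
    """Work backwards from `goal`, returning a confidence between 0 and 1.

    Every step is appended to `trace` so the user can see the evidence.
    """
    pad = "  " * depth
    if goal in facts:
        # The clinician supplied this directly, along with a confidence.
        trace.append(f"{pad}{goal}: entered by clinician, confidence {facts[goal]:.2f}")
        return facts[goal]

    confidence = 0.0
    for rule in rules:
        if rule.conclusion != goal:
            continue
        trace.append(
            f"{pad}{goal}: trying IF {' AND '.join(rule.premises)} "
            f"(strength {rule.strength:.2f})"
        )
        # A conjunction is only as certain as its weakest premise.
        weakest = min(prove(p, facts, rules, trace, depth + 1) for p in rule.premises)
        support = weakest * rule.strength
        # Merge support from several rules without ever exceeding 1.0.
        confidence = confidence + support * (1.0 - confidence)

    trace.append(f"{pad}{goal}: concluded with confidence {confidence:.2f}")
    return confidence


# Hypothetical observations entered by a clinician.
facts = {"stain_pink": 1.0, "rod_shaped": 1.0, "anaerobic": 0.8}
trace = []
result = prove("bacteroides", facts, RULES, trace)
print("\n".join(trace))
print(f"bacteroides: {result:.2f}")
```

With these made-up inputs the system reports a confidence of about 0.48 for the conclusion, and the printed trace shows which rules fired and which observations they rested on. The point is the shape of the thing: a goal, a chain of IF-THEN rules worked backwards, a number and an audit trail.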

Today's AI experiments are similar. One purports to be able to tell if you're gay (and in future, its Stanford creator claims, your political views), but the headline conceals a probability, derived after much training (or "learning").

Unlike almost all mainstream publications, which rarely if ever report the failure rate, we do. For example, the failure rate for the AI recognition that claimed to identify masked faces was surprising: with cap and scarf the AI is between 43 and 55 per cent accurate. "On a practical level it isn't that awesome," we noted.