
Evaluate this: A VM benchmark that uses 'wrong' price and config data

What the fudge, Evaluator Group and IOmark?

Analysis: Diane Greene's server-powered storage startup Datrium says it has achieved the highest-ever IOmark benchmark result: 8,000 VMs on a DVX cluster. That is five times the previous record, held by IBM's FlashSystem V9000 (1,600 VMs), and ten times the score of an Intel server/VMware/vSAN Optane system (800 VMs) (PDF).

But it appears the official price and configuration information in the benchmark report is askew.

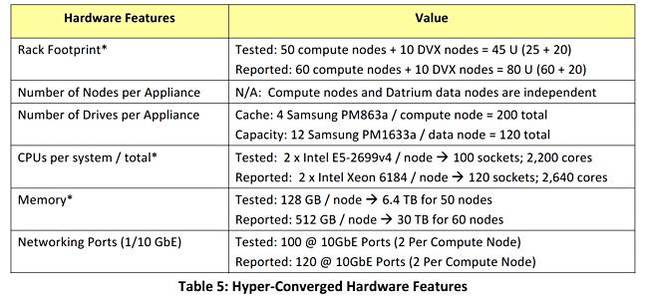

First, the Datrium system that was tested [PDF] was not the configuration that was reported. The Evaluator Group actually tested a 60-node setup: 50 Dell C6320 compute nodes and 10 Datrium F12X2 data nodes.

Tested versus reported configurations on page 6 of IOmark report

The F12X2 is Datrium's latest dual-controller, all-flash storage node, with 12 x 1.92TB Samsung PM1633a SSDs (23TB raw), and a Datrium cluster can hold up to ten of them. In the benchmark it should logically have been paired with Datrium's just-announced CN2100 compute node, which uses Skylake CPUs: either two 20-core Xeon Gold 6148s or two 8-core Xeon Gold 6134s.

It wasn't. Dell C6320 servers were used instead, and they have Xeon E5-2600 v4 CPUs: Broadwell, not Skylake.

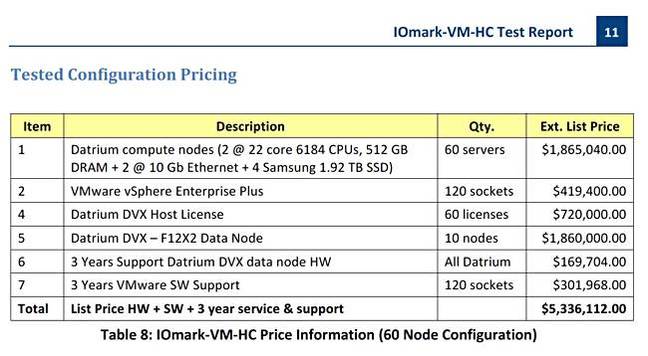

Datrium IOmark tested configuration pricing on page 11 of the IOmark report

In the IOmark benchmark test report (PDF) the relevant text reads:

The tested configuration is shown for transparency. However, for price and official configuration purposes, the "Reported" configuration required the CPU and memory resources as noted. The determination is made based on the referenced system running VMmark 2.5.

VMmark 2.5 is a compute benchmark. It measures server virtualisation performance across multiple workloads, using a tile-based, multi-application design.

Why this server benchmark had any relevance to IOmark was not clear to the Reg storage desk.

In effect, the Evaluator Group document is saying three things:

First, the tested configuration was 50 Broadwell compute nodes and 10 storage nodes, while the reported configuration was 60 Skylake compute nodes and 10 storage nodes.

Second, the VMmark 2.5 workload, whatever that workload was, required compute nodes with Xeon SP (Skylake) CPUs, and the Dell Broadwell compute nodes were insufficient for it.

Third, and ultimately, the configuration used for pricing purposes was not the configuration that was actually tested.

What the evaluators did, then, was test the lower-powered Dell system, which apparently could not support the required VMmark number, and extrapolate the results upwards by 10 per cent to reach the 8,000-VM level for 60 Skylake compute nodes.

They said:

It is the determination of Evaluator Group that this Datrium configuration is capable of achieving 1,000 VMmark tiles based upon the published results of a E5-2699v4 Intel 2 node system achieving 32 VMmark tiles, which translates into 900 tiles for a 60-node configuration. Intel's published data indicates improvements of between 5-100 per cent for the Xeon Scalable system, thus supporting the projection of a 10 per cent improvement required to support 1,000 VMmark tiles, or 8,000 IOmark-VM-HC VM's.
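The arithmetic in that projection can be checked in a few lines. The Python sketch below assumes, from the quote itself, that one VMmark tile corresponds to eight IOmark-VM-HC VMs (1,000 tiles pairing with 8,000 VMs). The variable and function names are illustrative, and every input is a quoted figure, not a new measurement.

```python
# Sketch: the tile/VM arithmetic behind the Evaluator Group projection.
# Assumption: 8 IOmark-VM-HC VMs per VMmark tile, inferred from the quote
# pairing 1,000 tiles with 8,000 VMs. All inputs are the quoted figures.

VMS_PER_TILE = 8_000 / 1_000  # 8.0


def vms_from_tiles(tiles: float) -> float:
    """Convert a VMmark tile count into IOmark-VM-HC VMs."""
    return tiles * VMS_PER_TILE


projected_tiles = 900   # quoted projection for the 60-node configuration
target_tiles = 1_000    # quoted tile count needed for 8,000 VMs

baseline_vms = vms_from_tiles(projected_tiles)        # VMs implied by 900 tiles
required_uplift = target_tiles / projected_tiles - 1  # fractional uplift needed

# For comparison: straight per-node scaling of the quoted 2-node, 32-tile result.
naive_linear_tiles = 32 / 2 * 60

print(f"{projected_tiles} tiles -> {baseline_vms:,.0f} VMs")
print(f"uplift needed for {target_tiles:,} tiles: {required_uplift:.1%}")
print(f"naive linear scaling: {naive_linear_tiles:.0f} tiles")
```

On these numbers, 900 tiles corresponds to 7,200 VMs, and getting from 900 to 1,000 tiles takes roughly an 11 per cent uplift (900 x 1.10 is 990 tiles), so the quoted 10 per cent is a rounded figure. A straight per-node scale-up of the 2-node, 32-tile result would give 960 tiles; the quote does not say how 900 was derived.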

So the result Evaluator Group reports is not the result achieved by the configuration it actually tested. It seems to imply that the tested Dell compute node configuration achieved 7,200 VMs, which is of course an excellent result, but not the reported 8,000.

Datrium PoV

Datrium's Craig Nunes said: "I assure you the results are no sham... We indeed tested on 50 x E5-2697 Broadwell servers and achieved an audited 8000 IOmark-VMs.

"The confusion in the report comes with the pricing rules. It requires that we price servers with an equivalent VMmark score, so a customer can balance storage performance (IOmark) with compute performance (VMmark). The servers we tested only achieve 5000-6000 VMmark – something we were actually proud of.

"But the rules required us to price more powerful servers, and more of them (Skylake 6148 @ 133 VM/host x 60 hosts), so the server configuration was capable of 8000 VMmark... We were pleased with the fact we could get such high performance with last-gen servers and non-NVMe drives."

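Nunes's sizing can be sanity-checked with simple arithmetic. The Python sketch below uses only the numbers in his quote and assumes his "VMmark" and "VM/host" figures are directly comparable VM counts; the constant names are illustrative.

```python
# Per-host arithmetic behind Craig Nunes's quoted figures. All inputs come
# from his quote; "VMs" means VMmark-equivalent VMs as he uses the term.

TESTED_HOSTS = 50                     # Broadwell E5-2697 servers actually tested
TESTED_VMMARK_RANGE = (5_000, 6_000)  # quoted VMmark capability of those servers

PRICED_HOSTS = 60                     # Skylake 6148 servers used for pricing
PRICED_VMS_PER_HOST = 133             # quoted per-host figure

# Per-host capability of the tested servers, at each end of the quoted range.
tested_per_host = tuple(v / TESTED_HOSTS for v in TESTED_VMMARK_RANGE)

# Total capability of the priced configuration.
priced_total = PRICED_VMS_PER_HOST * PRICED_HOSTS

print(f"tested: {tested_per_host[0]:.0f}-{tested_per_host[1]:.0f} VMs/host")
print(f"priced: {PRICED_VMS_PER_HOST} VMs/host x {PRICED_HOSTS} = {priced_total:,}")
```

This gives 100 to 120 VMs per tested Broadwell host against 133 per priced Skylake host, and 133 x 60 works out to 7,980, the roughly 8,000 that Nunes refers to.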
Evaluator Group PoV

Russ Fellows of the Evaluator Group said: "The tested Datrium DVX system did in fact achieve 8,000 IOmark-VM virtual server instances. There is no projection or uplift here, those are the actual results and can be directly compared to any other system that has reported IOmark-VM results."

Why are VMmark scores needed in IOmark at all?

Fellows said: "For the IOmark-VM-HC certification... we additionally require that the servers utilised are able to support the workload tested. Since IOmark-VM is IDENTICAL to VMmark, we utilise published VMmark 2.5 benchmark results to verify the server configuration."

He added: "VMmark tests the CPU and memory, but in effect tells you nothing about the storage, because all of those systems are tested with non-production storage in non-standard configurations which would never be used in production."

So IOmark tests the storage but, apparently, tells you nothing about the CPU and memory. To get a rounded, system-level (CPU + memory + storage) view, you need to combine the two. As Fellows puts it: "IOmark-VM tests and reports the storage, along with the number of VMmark certified systems required, along with a price, providing a hyperconverged price/performance result."

Except that, in Datrium's case, the system actually tested under IOmark is not the system whose VMmark-certified configuration was reported.

+RegComment

This IOmark pricing requirement has driven Datrium and the Evaluator Group into reporting a benchmark result that appears to use Xeon Skylake processors but was actually achieved with Xeon Broadwell CPUs. It makes no sense.

In our view, a benchmark report should be clear and unambiguous regarding the tested system configuration and use only that tested configuration in its pricing calculations: no ifs, no buts, no excuses, no reasons why not.

If a benchmark has to do this ludicrous manipulation, then the benchmark's design is inadequate.

If we got a mileage benchmark report for a Ford car that actually contained pricing and official configuration information for a Chevrolet, we'd be angry, and rightly so.

The IOmark benchmark people appear to be presenting an officially audited result that really falls short. ®

Bootnote

We have asked the IOmark organisation why projected rather than actual results were presented in this way. When we get a response we'll add it.

Get the Datrium data node specs here (PDF) and the compute node specs here.