
HPE burns offering to Apollo 6500, unleashes cranked deep learning server on world+dog

Faster GPUs, more FLOPS

HPE has updated its Apollo 6500 deep learning server with a threefold performance boost over its precursor by stuffing it with eight Tesla V100 GPUs, which speak to each other via Nvidia's NVLink 2.0 interconnect protocol.

HPE claimed the Nvidia gear makes it, on average, 3.12 times faster than the previous gen9 Apollo 6500 at running the Inception-v3, ResNet-50 and VGG-16 deep learning models with the TensorFlow and Caffe2 frameworks.

We're told it can produce up to 125 TFLOPS single-precision compute. The Apollo 6500 gen9 output up to 56 TFLOPS per server with eight Nvidia Tesla M40s.
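The peak figures above can be set against HPE's claimed benchmark speedup with a little arithmetic. This is only a sketch using the numbers quoted in this article; the constant and function names are ours, and nothing here is a measurement.

```python
# Figures quoted in the article; nothing here is measured.
GEN10_PEAK_TFLOPS = 125.0   # eight Tesla V100s, per HPE
GEN9_PEAK_TFLOPS = 56.0     # eight Tesla M40s
GPUS_PER_SERVER = 8
CLAIMED_AVG_SPEEDUP = 3.12  # HPE's average across the three models


def ratio(new: float, old: float) -> float:
    """Return how many times larger `new` is than `old`."""
    return new / old


peak_speedup = ratio(GEN10_PEAK_TFLOPS, GEN9_PEAK_TFLOPS)
per_gpu_gen10 = GEN10_PEAK_TFLOPS / GPUS_PER_SERVER
per_gpu_gen9 = GEN9_PEAK_TFLOPS / GPUS_PER_SERVER

print(f"Peak ratio, gen10 vs gen9: {peak_speedup:.2f}x")
print(f"Per-GPU peak: {per_gpu_gen10:.3f} vs {per_gpu_gen9:.3f} TFLOPS")
print(f"Claimed benchmark speedup: {CLAIMED_AVG_SPEEDUP:.2f}x")
```

The quoted peaks work out to roughly a 2.23x ratio, lower than the 3.12x average HPE claims for model training. The two need not agree: training throughput depends on more than peak FLOPS.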

The gen9 Apollo 6500 was a 4U chassis with 2 x 2U XL270d server sleds. These featured a central pair of Xeon processors flanked by four Nvidia GPUs either side – the Tesla K40, K80, and M40 were supported. The chips were linked by PCIe gen3. Each sled supported 1TB of memory and had eight 2.5-inch drive bays.

HPE Apollo 6500 gen9 server sled (top) and chassis (bottom) with two stacked XL270d sleds

Straightforward enough. The gen10 Apollo 6500 chassis isn't, as an HPE-supplied image shows:

It doesn't have two 2U server sleds. There are 16 2.5-inch drive bays in what appears to be a 2U component at the top. Below that is a 1U element fed by four power cords from a 1U base unit, which holds four power supplies, each with a fan and labelled PS1, PS2, PS3 and PS4.

HPE Apollo 6500 gen10 power cabling detail

Why isn't there an internal power feed instead of this clumsy affair with cables obscuring fan vents? HPE doesn't say. We might suppose it's because there isn't room for interior power cabling.

The mid-mounted 1U component is for the eight Tesla GPUs, with the top 2U unit for two Xeon servers, mounted side by side, underneath a removable top half-cover. That's why the 16 drive bays are there.

These bays can hold SAS/SATA SSDs with up to four NVMe drives.

The label flange at the top right reads "ProLiant XL270d Gen10". This looks nothing like a gen9 XL270d and there is no obvious way to slide out any front-mounting server component box.

The one on the left reads "Drive Box ID" and shows two drive boxes.

The ProLiant XL270d appears to have been substantially redesigned.

We're told the "innovative systems design of the HPE Apollo 6500 Gen10 allows for a high degree of flexibility with a range of configuration and topology options to match each workload".

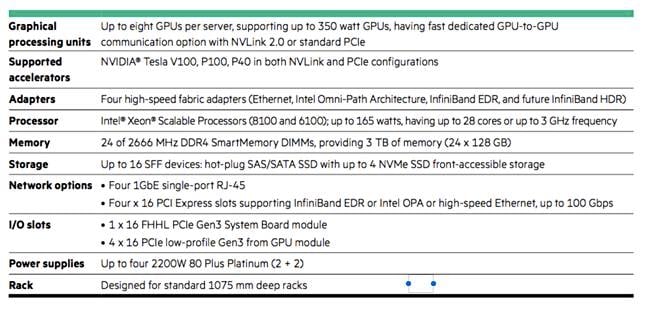

Here are the details:

That's 3TB of memory – the gen9 system had 1TB per server, 2TB in all.

The accelerator topologies include hybrid cube mesh for NVLink and 4:1 or 8:1 GPU:CPU flexibility in PCIe.
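One way to picture the 4:1 and 8:1 PCIe options is as a mapping of GPUs to CPUs. The sketch below assumes the ratio means "GPUs attached per CPU", so 4:1 splits the eight GPUs across both Xeons and 8:1 hangs all eight off one. That reading is our assumption, not something HPE has spelled out, and `assign_gpus` is a hypothetical helper rather than any HPE or Nvidia tool.

```python
from typing import Dict, List


def assign_gpus(num_gpus: int, gpus_per_cpu: int) -> Dict[int, List[int]]:
    """Group GPU indices by the CPU they would hang off.

    Assumption: a ratio of N:1 means N GPUs share one CPU's PCIe root.
    """
    if gpus_per_cpu <= 0 or num_gpus % gpus_per_cpu != 0:
        raise ValueError("GPU count must divide evenly by the ratio")
    cpus_used = num_gpus // gpus_per_cpu
    return {
        cpu: list(range(cpu * gpus_per_cpu, (cpu + 1) * gpus_per_cpu))
        for cpu in range(cpus_used)
    }


# 4:1 spreads eight GPUs over two CPUs; 8:1 puts them all behind one.
four_to_one = assign_gpus(8, 4)
eight_to_one = assign_gpus(8, 8)
print(four_to_one)
print(eight_to_one)
```

Under that assumption, 4:1 gives each CPU its own group of four GPUs, while 8:1 keeps all GPU traffic under a single PCIe root.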

Management is via iLO (Integrated Lights-Out) v5.

HPE is supplying a set of service offerings around the new box, and it has struck a deal to resell WekaIO's parallel file system, which topped the SPEC SFS 2014 benchmark:

- Digital Prescriptive Maintenance Services, one of a series of Pointnext AI-enabled offerings which HPE claimed can predict, suggest, and automate action to fix a problem before it causes harm

- Artificial Intelligence Transformation Workshop, providing Pointnext consulting expertise to help customers get started with AI, develop data and analytics initiatives, and look at AI use cases

- HPE Deep Learning Cookbook, which includes a performance guide that uses a knowledge base of benchmarking results and measurements in the customer's environment to guide technology selection and configuration

- The firm is reselling the WekaIO flash-optimised Matrix file system, which is certified for the Apollo 2000 Gen10 and HPE ProLiant DL360 Gen10 systems.

HPE will showcase these new products along with its HPC and AI portfolio at Nvidia's GPU Technology Conference on March 26-29 in San Jose, California. There are more details here.

Availability

HPE Digital Prescriptive Maintenance Services are now available in Europe and will be globally available in June via HPE Pointnext. The Artificial Intelligence Transformation Workshop is globally available now via Pointnext. The Apollo 6500 Gen10 will be available from HPE and its channel partners in May, as will WekaIO Matrix. ®