
Take the dashboard too literally and your brains might end up all over it

Ooo, pretty colours. But what if the data or interpretation sucks?

UIs with buttons and sliders existed for years as a means of putting a slick gloss on the data jungle that is Excel. But Salesforce provided the breakthrough in presenting complex business data with the metaphor of the dashboard.

This put complex data wrangling in the hands of those otherwise lacking the intermediary skills of hacking code in Visual Studio or in herding macros.

Nearly 20 years on we have cloud and DevOps, and these breed more data than CRM, but the need to wrangle and polish that data has carried over. Now there's the abiding belief that if you can read and understand data, you can control your system.

Many view this data via, yes, dashboards.

At their best, dashboards are seductive, elegant and informative. At their worst they are seductive, elegant and horribly misleading. As we move ever closer to a world where massive amounts of data are machine-generated, we need ever better instrumentation and dashboards, but we don't always get them.

Let's take a look at some examples of the problems inherent in dashboards and virtual instrumentation.

Can you trust your instrumentation and your dashboards?

To go back to their origins for a moment, we can obviously trust real instruments in real dashboards. Obviously? Well, consider these two oil pressure gauges. One old, the other new.

Which should you trust the most? The old one is calibrated in real numbers and the modern one just has an arbitrary scale between L and H (Low and High).

But it is a trick question because the modern one shown (from a Mazda MX-5) doesn't actually measure the pressure of the oil at all. It takes data from the onboard computer about temperature, revs and so on and moves the needle to show you the expected oil pressure for those conditions – fabricated data, in other words.

Why Mazda elected to do this (which must surely have been harder than fitting an old-style one) is not clear. But it does highlight, very elegantly, that even readings on real instruments in real dashboards are not always what they seem. And by the time you are looking at a computer-generated image of an instrument on a virtual dashboard you can expect reality to be seriously on the blink. Why?

There is often wobble in the system

Consider the Internet of Things. Those "things" are machines, typically sensors of one kind or another. If a given sensor tells you that the temperature is 15°C, that figure will have a plus/minus range attached to it – a tolerance. Sadly, by the time the data has passed from the sensor to the data collection unit and then to the analytical system, the error figure has usually disappeared. Yet we still seem happy to calculate and display the average to two decimal places without any idea of the accuracy of the original data. And, as any hardened engineer will tell you, tolerances should cancel but they usually sum.
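
As a rough illustration of keeping the error figure attached to the data, here is a minimal Python sketch. The function name `mean_with_tolerance` and the sample readings are invented for this example, and it assumes each reading arrives as a (value, tolerance) pair. It reports two bounds on the average: a worst case, where every sensor errs in the same direction, and a root-sum-square figure that only holds if the errors are independent and random.

```python
import math

def mean_with_tolerance(readings):
    """readings: list of (value, tolerance) pairs, e.g. (15.0, 0.5) for 15 +/- 0.5 C.

    Returns (mean, worst_case, independent):
      worst_case  - tolerance of the mean if every sensor errs the same way
      independent - tolerance if errors are independent and random
                    (root-sum-square of the tolerances, divided by n)
    """
    if not readings:
        raise ValueError("need at least one reading")
    n = len(readings)
    mean = sum(value for value, _ in readings) / n
    worst_case = sum(tol for _, tol in readings) / n
    independent = math.sqrt(sum(tol * tol for _, tol in readings)) / n
    return mean, worst_case, independent

# Made-up sample: four sensors, each rated at +/- 0.5 C.
readings = [(15.2, 0.5), (14.8, 0.5), (15.1, 0.5), (14.9, 0.5)]
mean, worst, indep = mean_with_tolerance(readings)
print(f"{mean:.1f} C, +/-{worst:.2f} worst case, +/-{indep:.2f} if independent")
```

Either way, a dashboard that shows the average to two decimal places is claiming more precision than sensors rated at half a degree can support.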

And, of course, the plus/minus range itself is not guaranteed for every sensor; some will fail to provide that level of accuracy. And if you deploy sensors in large numbers you have the unenviable task of spotting the rogue ones. Some are easy to spot, others are much, much harder so their inaccurate data is often simply added to the mix.
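
One common way to catch the easy cases is to compare each sensor with its peers. The sketch below is an assumption-laden example, not a recommendation: it supposes that all the sensors are measuring roughly the same quantity, the name `flag_rogue_sensors` and the readings are hypothetical, and the 3.5 cut-off is a conventional rule of thumb rather than anything derived from this data. It scores each reading by its distance from the group median in units of the median absolute deviation (MAD).

```python
from statistics import median

def flag_rogue_sensors(latest, threshold=3.5):
    """latest: dict of sensor id -> most recent reading.

    Returns the ids whose reading sits far from the group median,
    measured in units of the median absolute deviation (MAD).
    """
    values = list(latest.values())
    centre = median(values)
    mad = median(abs(v - centre) for v in values)
    if mad == 0:
        # More than half the sensors agree exactly; flag anything that differs.
        return sorted(s for s, v in latest.items() if v != centre)
    # 0.6745 rescales the MAD so the score is comparable to a z-score
    # when the readings are roughly normally distributed.
    return sorted(
        s for s, v in latest.items()
        if 0.6745 * abs(v - centre) / mad > threshold
    )

# Made-up readings: s5 is wildly out of line with its neighbours.
latest = {"s1": 15.1, "s2": 14.9, "s3": 15.0, "s4": 15.2, "s5": 21.7}
print(flag_rogue_sensors(latest))
```

A check like this only finds the gross offenders. A sensor that drifts slowly, or that is wrong by less than the spread of its neighbours, passes straight through, which is exactly the harder case described above.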

Irrelevant/bad data

Then there is the data that is either irrelevant or bad, depending on your point of view. Take web servers as an example – they generate data that is stored in log files. On the face of it, that data ought to be clean because it is being generated by a computer program rather than a sensor, but in practice we do still see web logs that are full of junk. And even if the log contains perfectly accurate data there can still be problems.

For example, the log file may be recording events in which you have no interest (such as hits by bots, hits on image files and so on), so they should be removed before the data is fed to the attractive dashboard on your screen that shows you how website hits are increasing day by day. But are they? In other words, as soon as you are looking at any kind of aggregation of data (and that is what dashboards usually show) there is a significant danger that the values you see will include irrelevant data, which by then has become bad data.
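
To make the point concrete, here is a minimal Python sketch that counts hits twice: once raw, and once after dropping bots and static files. Everything specific in it is an assumption for illustration: the log lines are made up, they are assumed to follow the widely used "combined" access log layout, and the bot pattern and file suffix list are deliberately crude placeholders that a real site would need to tune.

```python
import re

# Assumed, illustrative filters; tune these to your own traffic.
BOT_PATTERN = re.compile(r"bot|crawler|spider", re.IGNORECASE)
STATIC_SUFFIXES = (".png", ".jpg", ".jpeg", ".gif", ".css", ".js", ".ico")

# Picks out the request, status and user agent from a combined-format line.
LOG_LINE = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)

def count_hits(lines):
    """Return (raw, relevant) hit counts for an iterable of log lines."""
    raw = relevant = 0
    for line in lines:
        match = LOG_LINE.search(line)
        if match is None:
            continue  # junk line: not counted at all
        raw += 1
        path = match.group("path").split("?", 1)[0].lower()
        if BOT_PATTERN.search(match.group("agent")) or path.endswith(STATIC_SUFFIXES):
            continue  # parsed fine, but not a hit we care about
        relevant += 1
    return raw, relevant

# Made-up sample lines using documentation-only IP addresses.
sample = [
    '203.0.113.5 - - [10/Mar/2018:10:00:00 +0000] "GET /index.html HTTP/1.1" 200 5120 "-" "Mozilla/5.0"',
    '203.0.113.5 - - [10/Mar/2018:10:00:01 +0000] "GET /logo.png HTTP/1.1" 200 2048 "-" "Mozilla/5.0"',
    '198.51.100.7 - - [10/Mar/2018:10:00:02 +0000] "GET /index.html HTTP/1.1" 200 5120 "-" "Googlebot/2.1"',
    "garbage that is not a log line",
]
print(count_hits(sample))
```

Showing both numbers side by side on the dashboard, rather than only the filtered one, gives the reader a chance to see how much of the headline figure was noise.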

Data 'enrichment'

Even if the machine-generated data really is perfect, people will still dirty it. Data often flows through a company, passing from person to person and system to system. The data could become aggregated, sliced and diced by any number of parameters before it reaches the dashboard.

As for people, those performing the above tasks will bring their own particular agendas to the repackaging. As Goodhart's law, named after Charles Goodhart, Emeritus Professor at the London School of Economics, is usually paraphrased: "When a measure becomes a target, it ceases to be a good measure."

So the people may quietly move data around or indulge in "imaginative accounting" to ensure that their targets are met and that they personally look as good as possible. By the time those aggregated figures are rocking the needles in the dashboard of the board members, they may be as accurate as Donald Trump's tweets.

As a general rule, then, build your dashboards from the original, untouched data. And, if you are a consumer of dashboards, ask questions about the data that underpins them.

Misinterpretation

Another problem comes not from the supply of the data but from our tendency to misinterpret it when we don't have enough context to understand it properly.

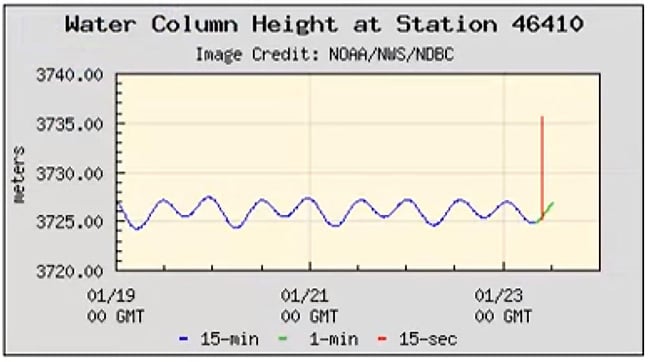

As a recent example, on 23 January 2018, a 7.9-magnitude earthquake struck off the coast of Alaska. Tsunami alerts were issued for Alaska and the entire western seaboard of the US, but initially no one really knew if the quake had unleashed a tsunami or not. Then suddenly, splashed all over the internet, was data from "station 46410", a Deep-ocean Assessment and Reporting of Tsunamis (DART) buoy in the Gulf of Alaska. Buoy 46410 showed a sudden 10m (33 foot) spike.

The DART data is published in real time, complete with graphics. The problem is that this virtual instrument was interpreted, not by professionals, but by the runaway rumour mill that is the internet, and that rumour mill screamed tsunami! What it lacked was the background knowledge required to interpret this representation of data from the buoy. The professionals were aware that the water displacement shown could also be interpreted as seafloor undulations and not as a wave. Ultimately that turned out to be the correct interpretation.

So are all dashboards useless?

Of course not. Dashboards are brilliant devices for displaying complex information, derived from huge masses of data, in an easily digestible way. But (and we'll make that a big BUT) the process of reducing that raw data down into information for display must be done very carefully.

If it isn't, the seductive power of a dashboard stops being its greatest strength and becomes its greatest weakness. They are powerful tools; we must use them carefully. ®
