
Google's socially awkward geeks craft socially awkward AI bot that calls people for you

Plus: Linux apps on Chrome OS start to emerge

Google today opened its annual I/O developer bash with details of how it’s going to lob machine-learning software at everything you do online and offline, and it truly means everything.

CEO Sundar Pichai took to the stage in Silicon Valley to explain how artificial intelligence will make life easier, safer, and more fun for everyone, provided they stay addicted to his company’s products. Not only will these AI systems simplify our lives, but they’ll also train us to use technology better.

There wasn't a lot of detail in the keynote – rather, it was a headlong rush through stuff Google is about to, or plans to, unleash on the world.

“Technology can be a positive force but we can’t be wide eyed about its impact,” he told the crowd. “There are serious questions being raised and the path ahead needs to be calculated carefully. Our core mission is to make information more available and beneficial to society.”

To showcase this, he showed off some of the augmentations coming to Google Assistant in the next few months, not least to the voice it uses. By the end of the year, when you chat with Google’s digital personal assistant, you’ll be able to select one of six voices for it, including that of the singer John Legend.

Pichai said the use of AI meant the voice actors spent a lot less time in the studio: the ad giant's code was able to learn enough from their utterances to impersonate the speakers with a wide vocabulary. Perhaps one day, it will impersonate you.

Duplex – what’s real and what isn’t

Google will soon add a feature to the assistant called Duplex. This extends the assistant’s natural language processing so that it can hold phone conversations with humans on your behalf, without revealing itself to be a bot. The software pretends to be you, or acts for you, over the phone to automatically order food, arrange appointments, and so on.

Rather than pick up the phone, dial someone, and go through that bothersome tediousness of interacting with them, no, instead ask Google to do it for you. And its digital assistant will. Badly.

The examples Pichai gave were of a netizen asking the assistant to book a haircut or a table at a restaurant. The software would then call the venue itself to make the booking, and it even adds in a few verbal tics, such as saying “er” or “mmhm”, to make itself sound more realistic. You can hear it in action below:

Google Assistant making a call. I wonder how many calls they made to get one this smooth #IO18 pic.twitter.com/aNCTJzeGKr

— Iain Thomson (@iainthomson) May 8, 2018

While Google understandably thinks this is great, several folks at the event were a little creeped out or worried by the Duplex system. One can only imagine what robo-callers, scammers, and the like are going to do with it.

The tech isn't available to the general public, yet. There are some more technical details over here. Bear in mind, if the bot struggles in its conversation, it may fall back to a human handler who takes over the call. It also doesn't seem to take rejection well: if you tell the software there isn't a time slot available at 12pm and the next available appointment is 1pm, the assistant will ask if 10am to 12pm is OK instead.

Again, this technology is still in development, and it isn't being widely used at the moment.

Watch Google Assistant make a real call to make a hair appointment, talking back-and-forth with a human. No, it's not yet available but being tested. #io18 pic.twitter.com/kPhDSCCYdP

— Danny Sullivan (@dannysullivan) May 8, 2018

The software will also, eventually, respond when you talk to it without you having to say “Hey Google” each time to activate it: the assistant will be able to recognize when it is being spoken to, and react accordingly.

Some Googlers were worried that kids talking to the assistant could be trained into rude, insistent little devils, so Google will introduce an opt-in system called Pretty Please in the next few months. With it enabled, if you ask the assistant something and say please, it lavishes praise on you for being so polite.

Google as the gateway to news

Pichai also said Google’s news aggregator will be pumped with artificial intelligence. The predictive systems that are going to be built in will automatically gauge the kind of news you, individually, want to see and suggest suitable “trusted” titles and stories. Fingers tightly crossed the robot overlords appreciate El Reg's puns, sarcasm, and irreverence toward corporate power.

A new feature called Full Coverage will, allegedly, display all news articles on a specific topic, and everyone will see the same information. But if you want a more general overview, the News app will suggest articles for you based on your past viewing habits, from 1,000 trusted titles. How these titles get to be trusted, and who gets left out, wasn’t explained.

For those titles behind a paywall, the Chocolate Factory has inked a deal with publishers to allow people to subscribe and pay to consume their stories using their Google account.

Android P getting smarter

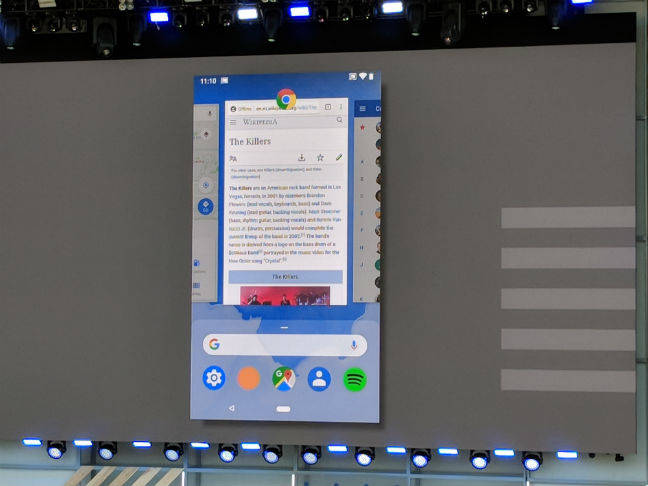

Android P, the next build of Google’s mobile operating system, will be out in the autumn, we're told.

Android P ... coming later this year

The P build will have better battery life than previous 'droid editions, Dave Burke, Android's veep of engineering, promised, because it will know how you use your phone and adjust power consumption accordingly. By logging which apps are used and when, the OS will reduce resources for lesser-used apps. Burke said this cuts battery-chewing CPU wake-ups by 30 per cent.

“We believe smartphones should merge with you and adapt to you,” he said.

The new OS will also have a dashboard that shows all your activity during the day. This will not only help the phone understand how you work and on what, but will also let it try to predict and anticipate a user’s actions.

Android already has a line of the most commonly used apps on its main applications screen, but with the P build, it will also suggest actions based on your past history. Burke showed off his phone telling him he usually called his wife or went for a run right about then, and suggested these as possibilities. It’ll also suggest music if you have the headphones plugged in.

For developers, these kinds of features can be supported in application software simply by using an Actions.xml file. Google is also releasing a machine-learning developer kit, which opens up some basic AI functions, such as image and text recognition, to everyone.

Now put the technology down

Android P will also include an App Timer. This can be set up to warn users if they spend too long using a particular application, and drop them a reminder to put the smartphone down and go and do something else instead.

Burke said a common complaint was that people are too attached to their mobe, and the minute a notification comes in they have to look. So the new OS will have a Do Not Disturb function that not only kills the phone’s sound, but also any visual notifications. The mode is automatically enabled if you put a phone face down on a surface.

Of course, there will be exceptions. Users can set up a list of Starred Contacts – people who can always break through the Do Not Disturb settings. Spouses and children’s schools were suggested as just the ticket.

Another issue is people taking their phone to bed and staying up half the night using it. So Google has added a Wind Down mode to counter this.

If you tell the phone when you plan to go to bed, it will set an internal counter going. Once bedtime truly comes, the phone will drain all color from the screen and switch to Do Not Disturb mode, along with a reminder to put it down and go to sleep.

Where do you want to go today?

Google Maps is also getting some important additions, thanks in part to the Google Lens image-processing system. Maps will now suggest shops and services nearby based on your past habits. If you’ve rated somewhere highly in the past, this will factor into its recommendations, and the app will tell you why it has suggested a particular place.

There’s also a group function, the idea being that if you are getting together with friends everyone can put in suggested restaurants and Google will help pick the most acceptable choice.

Augmented reality is also being added to Google Maps' directions. Rather than leaving you to work out which way to walk, the phone will check your orientation, compare what its camera sees with Street View data, and then show you on screen whether to go left or right. Google also gave a sneak peek of a red fox that appears on the display to show you where to go.

Release the hounds

AR will also play a part in things like sign and menu translation. Using the camera to scan a menu will throw up little buttons that tell you more about a particular dish, for example.

And before we forget: Gmail is getting so-called smart composing. The backend will suggest words and phrases for you to include in your messages as you type in your replies.

Big data and big iron

All of this AI needs two things – lots of data and lots of processing power.

Collecting the data isn’t tough: Google owns the bulk of the web search and online advertising markets, as well as the world’s most used mobile operating system, so it knows what you're interested in. If users choose to allow their phones, applications, and browsers to blab about every facet of their lives, they can access all these AI goodies and get potentially sensible suggestions.

Some people may value their privacy, and so won't be getting much use out of these new AI features. It’s going to be interesting to see what happens in these cases – either the software won’t play ball at all, or it could make a lot of bogus recommendations.

But, if experience is anything to go by, most netizens will just hand over the data recommended in the default settings. And that’s going to create a tsunami of information that needs handling.

To do this, Pichai revealed the latest iteration of Google’s advanced math-processing chips – the Tensor Processing Unit 3.0. A pod of TPU v3 chips is up to eight times more powerful than a pod of its predecessor, Pichai claimed, and has so much grunt that Google has had to install liquid cooling systems to avoid frying its data centers.

Today we're announcing our third generation of TPUs. Our latest liquid-cooled TPU Pod is more than 8X more powerful than last year's, delivering more than 100 petaflops of ML hardware acceleration. #io18 pic.twitter.com/m8OH5vFw4g

— Google (@Google) May 8, 2018

The end result is that Google now has TPU pods with 100-petaflop capacities ready to process all that lovely data and find new ways to exploit it. That is, if people choose to hand it over. ®