
Within Arm's reach: Chip brains that'll make your 'smart' TV a bit smarter

Get ready for a future where everything from phones to CCTV recognizes faces, things

Processor design house Arm has emitted a few more details about the AI brain it's trying to persuade chip makers to pack into their silicon.

Pretty much everyone – from Intel and Qualcomm to IBM, Mediatek and Imagination – has some kind of dedicated neural-network accelerator among their blueprints, ready to slot into chips and speed up decisions made on incoming data.

Well, Arm is also on the case – as it teased three months ago – with its very own machine-learning processor (MLP), which can be licensed and dropped into system-on-chips alongside its Cortex CPU cores, which are used in billions of devices. The designs, which were built from the ground up and are not based on a previous DSP or GPU, will be available to customers from mid-2018, we're told.

At first, the MLP will be touted for apps running on smartphones and other mobile devices, but eventually it may well work its way into digital tellies, surveillance cameras, servers, and so on, especially when paired with Arm's Object Detection Processor (ODP), which does what it says on the tin. The first-generation ODP is used in Hive and Hikvision CCTV to automatically detect stuff, such as a person or animal wandering into frame. A second-generation ODP is also being touted by Arm from today.

How will it be used?

What you've got to look forward to is this: security cameras waking up and recording as soon as someone enters view, saving power and storage space until recording is actually necessary. Digital or so-called smart TVs that automatically pause when you stand up to pop to the kitchen, and play again when you return and sit down – or blank the screen if a kid wanders into the room when you're watching something primarily for adults.

Just as every cafe has to offer pulled-pork sandwiches these days, practically every device in future will include a camera. There is no escaping this. These digital eyes will constantly generate video that needs processing into decisions – to start recording, recognize a gesture, enhance the colors, send a message, say hello, whatever you need – and math accelerators like Arm's MLP and ODP, as well as competing cores, will be plugged in to make it happen immediately without beaming back footage to the cloud for processing.

The ODP and MLP combined can pick out people, cars, road signs, and so on, from full HD video at 60 frames per second, as long as the objects are 50-by-60 pixels or larger, with a "virtually unlimited" number of objects identified per frame, Arm engineers said in a chat with El Reg earlier this month. The ODP can also work out which way people are facing, their pose, and direction of movement.

ODP v MLP

The ODP is mainly designed to identify regions of interest – such as faces of people in a crowd – and then pass those sections on to the MLP, or CPU or GPU, to work out, for instance, exactly who those faces belong to. It uses standard built-in AI algorithms, such as histograms of oriented gradients (HOG) and support vector machines (SVM), to perform object detection.
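For the curious, here is a minimal sketch of how a HOG-plus-linear-SVM detector scores one window of an image. It is not Arm's implementation, which is fixed-function hardware whose internals are not described here. The function names, the 8-pixel cell size, the nine orientation bins, and the toy weights are all assumptions made for illustration; a real SVM's weights and bias would come from training.

```python
import numpy as np

def hog_cell_histogram(patch, bins=9):
    """Gradient-orientation histogram for one grayscale cell (2-D array)."""
    patch = patch.astype(np.float64)
    gy, gx = np.gradient(patch)              # gradients along rows, then columns
    magnitude = np.hypot(gx, gy)
    # Unsigned orientation, folded into [0, 180) degrees
    angle = np.degrees(np.arctan2(gy, gx)) % 180.0
    hist, _ = np.histogram(angle, bins=bins, range=(0.0, 180.0), weights=magnitude)
    norm = np.linalg.norm(hist)
    return hist / norm if norm > 0 else hist  # flat cells stay all-zero

def hog_descriptor(window, cell=8, bins=9):
    """Concatenate the histograms of every non-overlapping cell in the window."""
    h, w = window.shape
    cells = [hog_cell_histogram(window[r:r + cell, c:c + cell], bins)
             for r in range(0, h - cell + 1, cell)
             for c in range(0, w - cell + 1, cell)]
    return np.concatenate(cells)

def svm_score(descriptor, weights, bias):
    """Linear SVM decision value: positive means 'object present'."""
    return float(descriptor @ weights + bias)
```

A window that scores above zero would be flagged as a region of interest and handed on for closer inspection. This sketch skips the block normalization and sliding-window search that a full detector would also need.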

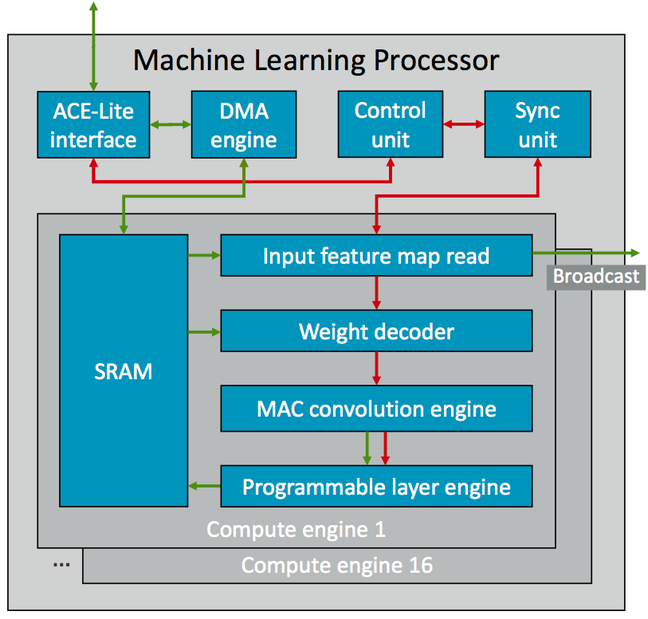

Think of the ODP as a processing block dedicated to a particular task – identifying types of stuff in a frame – while the MLP is a flexible and programmable unit that can work on the things flagged up and outlined by the ODP. Below is a block diagram of the MLP.

Block diagram of Arm's Machine-Learning Processor

Green indicates the data path; red indicates control lines

The processor is made up of 16 compute engines, all sharing typically 1MB of SRAM, and each featuring a pipeline of stages. A trained neural network's weights and activation data – the values that lead it to make decisions on input data – are pruned and compressed, and transferred into the MLP's SRAM. The aim of the game is to minimize the movement of data, particularly the neural network, in order to keep power consumption down. Less copying requires less energy. Pruning involves removing pathways through a neural network that have a zero or very small weight – ie: they are unimportant.
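Pruning by magnitude, as described above, can be sketched in a few lines. This is an illustration rather than Arm's actual scheme: the threshold value and the index-plus-value storage format are assumptions, and the function names are hypothetical.

```python
import numpy as np

def prune_by_magnitude(weights, threshold=0.01):
    """Zero out every weight whose absolute value is below the threshold."""
    mask = np.abs(weights) >= threshold
    return np.where(mask, weights, 0.0), mask

def to_sparse(pruned):
    """Keep only the surviving weights, plus their flat positions."""
    idx = np.flatnonzero(pruned)
    return idx, pruned.ravel()[idx]

weights = np.array([[0.5, -0.004, 0.0],
                    [0.02, -0.3, 0.009]])
pruned, mask = prune_by_magnitude(weights)
idx, values = to_sparse(pruned)   # three of the six weights survive
```

Fewer stored weights means less data to shift into SRAM, which is the point of the exercise.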

Then data, such as part of a video frame or a moment of audio, is streamed into the MLP, and run through the engines' stages, including each engine's programmable unit, to perform 8-bit convolutions and come up with an outcome. Eight bits are good enough for neural networks – if you need more, throw a CPU or GPU at the problem, but you'll have to pay with battery life. The precision can be varied within those eight bits.
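To make the eight-bit arithmetic concrete, here is a rough sketch of a quantized convolution: floating-point values are mapped to signed 8-bit integers with a scale factor, multiplied and accumulated in 32-bit integers, and scaled back at the end. The symmetric scaling scheme and the function names are assumptions for illustration; how Arm's hardware quantizes is not detailed here.

```python
import numpy as np

def quantize_int8(x):
    """Symmetric quantization: real value is roughly scale * int8 value."""
    max_abs = float(np.max(np.abs(x)))
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = np.clip(np.round(x / scale), -127, 127).astype(np.int8)
    return q, scale

def conv2d_int8(q_img, q_kernel):
    """'Valid' 2-D convolution (no kernel flip, as in CNNs), int32 accumulation."""
    kh, kw = q_kernel.shape
    oh = q_img.shape[0] - kh + 1
    ow = q_img.shape[1] - kw + 1
    out = np.zeros((oh, ow), dtype=np.int32)
    k32 = q_kernel.astype(np.int32)          # widen first to avoid int8 overflow
    for r in range(oh):
        for c in range(ow):
            window = q_img[r:r + kh, c:c + kw].astype(np.int32)
            out[r, c] = np.sum(window * k32)
    return out

def dequantize(acc, scale_a, scale_b):
    """Convert integer accumulators back to approximate real values."""
    return acc.astype(np.float64) * scale_a * scale_b
```

Choosing the scale factor is one way to trade range against resolution within the same eight bits: a smaller scale gives finer steps but clips large values sooner.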

Data can be written back to the SRAM by the programmable units, and an internal broadcast network keeps the memory organized and coherent. Feature maps are broadcast to each compute engine from the SRAM to minimize energy use – if you want to know what feature maps and convolutional neural networks are, read this.

The MLP is tuned for chips using 16nm and 7nm process nodes, and is expected to exceed 3 tera-operations per second per watt at 7nm, and hit 4.6TOP/s in throughput overall.
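As a back-of-the-envelope check, the two figures above can be combined to estimate power draw and per-engine throughput. This assumes, and it is only an assumption, that the 4.6TOP/s and the 3 TOP/s-per-watt figures refer to the same 7nm configuration, and that work is spread evenly across the 16 compute engines. The helper names are hypothetical.

```python
def implied_power_watts(throughput_tops, efficiency_tops_per_watt):
    """Power implied by a throughput figure and an efficiency figure."""
    return throughput_tops / efficiency_tops_per_watt

def per_engine_tops(throughput_tops, engines):
    """Throughput per compute engine, assuming an even split."""
    return throughput_tops / engines

power = implied_power_watts(4.6, 3.0)    # roughly 1.5 watts
per_engine = per_engine_tops(4.6, 16)    # roughly 0.29 TOP/s per engine
```

Since the efficiency is quoted as exceeding 3 TOP/s per watt, the power figure is an upper bound under these assumptions, not a spec.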

Finally, all of this needs software to control it. Applications should talk to neural-network frameworks, such as TensorFlow, Caffe, or Android's NNAPI, which will pass data to and from the hardware via Arm's software libraries and compilers. You can't program the internals directly; instead, you have to use a high-level interface to control the operation of the compute engines.

There, that's perhaps more than you ever wanted to know about a licensable AI accelerator. ®