Jeez, not now, Iran... Facebook catches Mid East nation running trolly US, UK politics ads

Whack-a-Troll: Ad biz smashes latest manipulation plot to show it's doing... something

Facebook, the antisocial advertising platform on which anyone can promote just about anything, on Friday said it found people promoting political discord in the US and UK, yet again.

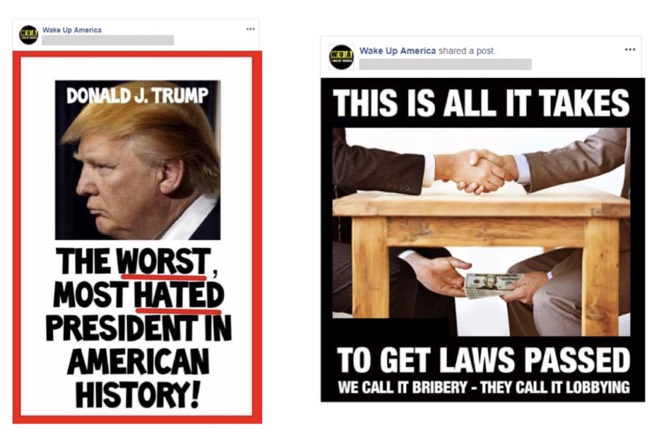

Russia doesn't appear to be involved this time. In the latest round of Whac-A-Troll, the data harvesting site said it removed 82 Facebook Pages, groups, and accounts for "coordinated inauthentic behavior" coming out of Iran.

During a conference call with journalists on Friday morning, Nathaniel Gleicher, head of cybersecurity policy, said "coordinated inauthentic behavior" is when people or organizations create networks of accounts to mislead others about who they are or what they're doing.

The term is not intended to apply to the sort of coordinated authentic, scrupulously honest ads or posts normally found on the site.

Facebook said it removed 30 Pages, 33 Facebook accounts, 3 groups, and 16 Instagram accounts in its purge. About one million accounts followed at least one of these Pages, the ad biz said, while about 25,000 accounts joined at least one of the groups and about 28,000 accounts followed at least one of the Instagram accounts.

It was a budget operation: those behind it spent about $100 on two ads in the US and Canada promoting their misinformation.

"Coordinated inauthentic content" from Iran, or so says Facebook.

Gleicher said Facebook wasn't in a position to speculate about the motives of those behind the influence campaign. "We can't say for sure who is responsible," he said, though he acknowledged that there's some overlap with Iranian accounts and Pages removed in August.

The social ad biz took action, said Gleicher, because America's midterm elections are only two weeks away. He said details of the company's investigation have been shared with US and UK authorities and lawmakers, other tech companies, and the Atlantic Council’s Digital Forensic Research Lab.

"We continue to get better at taking down these actors using machine learning and manual review," said Gleicher.

Some of that has to do with Facebook's ballooning safety and security group, now at about 20,000 people after a hiring frenzy. The site expanded its policing following blowback from its obliviousness to efforts to influence the 2016 US presidential election and years of allowing its data to be spirited away. But the biz also credits statistically sophisticated software with its ostensibly improved vigilance.

"Thanks to improvements in artificial intelligence we detect many fake accounts, the root cause of so many issues, before they are even created," the Silicon Valley giant said.

Facebook has been eager to be seen to be doing something to mitigate misinformation campaigns powered by its tools, having in April and May confronted lawmakers in the US and abroad who teased the possibility of regulating the company.

To judge by the series of security-related announcements from Facebook in October alone – Removing Spam and Inauthentic Activity from Facebook in Brazil (Oct. 22), Increasing Transparency for Ads Related to Politics in the UK (Oct. 16), Expanding Our Policies on Voter Suppression (Oct. 15), An Update on the Security Issue (Oct. 12), Removing Additional Inauthentic Activity from Facebook (Oct. 11), Facebook Login Update (Oct. 2), and Protecting People from Bullying and Harassment (Oct. 2) – Facebook isn't close to making itself safe for consumption. ®

PS: It turns out it's easy to run political ads on Facebook and set the "paid for" line to be anything – Mike Pence, ISIS, etc. So much for tight controls on political advertising.