
LG: Fsck everything, we're doing 16 lenses in smartphones (probably)

How do we make mobes take better snaps? Throw a buttload of sensors at 'em, judging from this patent

In a move that wouldn't seem out of place in The Onion, LG has invented a 16-lens camera for use in phones.

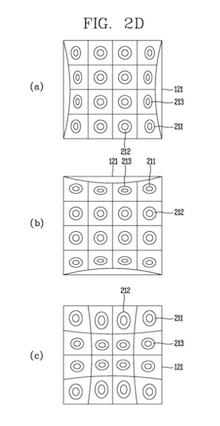

A US patent granted last week describes a method of handling images from a 4x4 array of lenses and sensors. The filing is coy, as most applications are, but suggests stereoscopic output as one use case.

Are more sensors better? Does mobile imaging follow the logic of The Onion's shaving product machismo, "F*ck Everything, We're Doing Five Blades"?

Not necessarily.

LG's 16-lens patent application

Google's imaging has evolved over the years by doing more in software: a large main sensor captures a number of shots in rapid succession, milliseconds apart, and internal computation merges them. Google also has custom silicon to speed this up (and to lower the power cost of taking a photograph), yet the approach is arguably still cheaper than using an array of sensors. Pixel phones also achieve a bokeh effect using one main sensor. This year Apple adopted similar techniques.

Other manufacturers, however, have been happy to throw more hardware at the problem, though usually by complementing the main sensor with specialist wide-angle or telephoto sensors. Huawei currently uses three main lenses, and Samsung's next flagship, the Galaxy S10+, is rumoured to use five in its main unit.

Zeiss, which has renewed its partnership with Nokia phone maker HMD, has filed a patent for imaging units with many sensors: its supporting images show 12.

Cut the strings and soar, LG. ®