
Object-recognition AI – the dumb program's idea of a smart program: How neural nets are really just looking at textures

Is it a bird? Is it a plane? Don't ask these models

Analysis: Neural networks trained for object recognition tend to identify things by their texture rather than their shape, according to this latest research.

That means take away or distort the texture of something, and the wheels fall off the software.

Artificial intelligence may suck at, for instance, reading and writing, but it can be pretty good at recognizing things in images.

The latest explosion of excitement around neural-network-based computer vision was sparked in 2012 when the ImageNet Large Scale Visual Recognition Challenge, a competition pitting various image recognition systems against each other, was won by a convolutional neural network (CNN) dubbed AlexNet.

After this, tons of new image-scrutinizing CNN architectures came flooding in, and by 2017 most entrants had a top-5 accuracy of over 95 per cent in the competition. Show them a photo, and they could confidently work out what object or creature is in the snap. Now, it’s easy for developers and companies to just use off-the-shelf models trained on the ImageNet dataset to solve whatever image recognition problem they have, whether it's figuring out which species of animals are in a picture or identifying items of clothing in a shot.
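For context, ILSVRC classification entries are typically scored on top-5 error: a prediction counts as correct if the true label appears among the model's five highest-scoring classes. Below is a minimal plain-Python sketch of that metric; the function name and the toy scores are hypothetical, for illustration only.

```python
from typing import List, Sequence


def top_k_accuracy(scores: Sequence[Sequence[float]], labels: Sequence[int], k: int = 5) -> float:
    """Fraction of images whose true label is among the k highest-scoring classes."""
    if len(scores) != len(labels) or not labels:
        raise ValueError("need one score row per label, and at least one label")
    hits = 0
    for row, label in zip(scores, labels):
        # Class indices sorted by score, highest first; keep the top k
        top_k: List[int] = sorted(range(len(row)), key=lambda i: row[i], reverse=True)[:k]
        if label in top_k:
            hits += 1
    return hits / len(labels)


# Two toy images, seven hypothetical classes each
scores = [
    [0.10, 0.50, 0.20, 0.05, 0.05, 0.03, 0.07],  # true class 1 scores highest
    [0.30, 0.10, 0.20, 0.15, 0.12, 0.08, 0.05],  # true class 4 is only fourth
]
labels = [1, 4]
print(top_k_accuracy(scores, labels, k=1))  # 0.5
print(top_k_accuracy(scores, labels, k=5))  # 1.0
```

Top-5 is a more forgiving yardstick than top-1, which is worth bearing in mind when reading headline accuracy figures.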

However, CNNs are also easily fooled by adversarial inputs. Change a small block of pixels in a photograph, and the software can fail to recognize an object correctly. Tweak some colors, and what was a banana looks like a toaster to the AI. Heck, even a turtle can be mistaken for a gun.
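The banana and turtle tricks relied on more elaborate attacks, but the basic idea behind adversarial inputs can be shown with the simpler fast gradient sign method (FGSM): nudge every input value a small, fixed step in whichever direction most increases the classifier's error. The sketch below applies FGSM to a hypothetical four-feature logistic-regression model standing in for a CNN; the weights, inputs, and step size are made up for illustration.

```python
import math
from typing import List


def predict(weights: List[float], bias: float, x: List[float]) -> float:
    """Probability of class 1 under a toy logistic-regression classifier."""
    z = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1.0 / (1.0 + math.exp(-z))


def sign(v: float) -> float:
    return float((v > 0) - (v < 0))


def fgsm(weights: List[float], bias: float, x: List[float], label: int, eps: float) -> List[float]:
    """Shift each input by eps in the sign direction of the loss gradient."""
    p = predict(weights, bias, x)
    # For logistic regression with cross-entropy loss, d(loss)/d(x_i) = (p - label) * w_i
    grad = [(p - label) * w for w in weights]
    return [xi + eps * sign(g) for xi, g in zip(x, grad)]


weights = [1.0, -2.0, 0.5, 1.5]  # hypothetical trained weights
bias = 0.0
x = [0.6, 0.1, 0.4, 0.3]         # hypothetical input, correctly classed as 1
x_adv = fgsm(weights, bias, x, label=1, eps=0.3)
print(round(predict(weights, bias, x), 3))      # 0.741
print(round(predict(weights, bias, x_adv), 3))  # 0.389
```

No input moved by more than 0.3, yet the prediction flipped from class 1 to class 0. Real attacks on image classifiers work the same way over millions of pixel values, which is why the changes can be nearly invisible.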

And why is that? Could it be that machine-learning software focuses too much on texture, allowing changes in patterns in the image to hoodwink the classifier software?

Never mind the image, feel the texture

A paper submitted to this year’s International Conference on Learning Representations (ICLR) may explain why. Researchers from the University of Tübingen in Germany found that CNNs trained on ImageNet identify objects by their texture rather than shape.

They devised a series of simple tests to compare how humans and machines interpret images. In the computer corner, four CNN models: AlexNet, VGG-16, GoogLeNet, and ResNet-50. In the fleshbag corner, 97 people. Everyone, living and electronic, was asked to identify the objects and animals shown in a series of images.

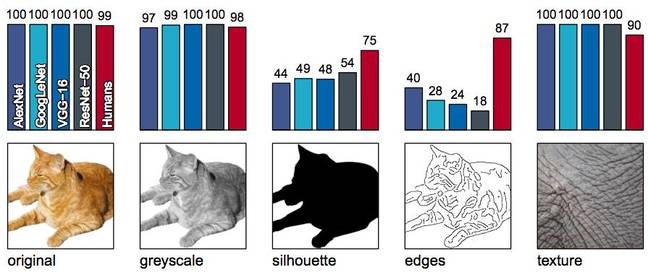

Crucially, the images were distorted in different ways to test each viewer's ability to truly comprehend what they were seeing: the pictures were presented as grayscale; with the object as a black silhouette against a white background; just the outline of the object; just a close-up of the texture of an object; with a distorted texture laid over the object; and just as normal.

An example of an image being distorted in different ways and the accuracy of the neural networks and humans in analyzing it. Source: Geirhos et al
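Some of these manipulations are simple pixel operations. Here is a rough plain-Python sketch of two of them, grayscale conversion and a black-on-white silhouette, on a tiny image stored as nested lists of RGB tuples. The BT.601 luma weights and the precomputed object mask are assumptions for illustration; the study's own preprocessing may well have differed.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]  # (red, green, blue), each 0-255


def to_grayscale(img: List[List[Pixel]]) -> List[List[float]]:
    """Collapse colour to brightness, keeping shape and texture but dropping hue."""
    # ITU-R BT.601 luma weights (an assumption, not necessarily the study's choice)
    return [[0.299 * r + 0.587 * g + 0.114 * b for (r, g, b) in row] for row in img]


def to_silhouette(mask: List[List[bool]]) -> List[List[int]]:
    """Black (0) inside the object, white (255) elsewhere: shape survives, texture does not."""
    return [[0 if inside else 255 for inside in row] for row in mask]


# A hypothetical 2x2 image and a hand-made mask marking which pixels belong to the object
img = [[(255, 0, 0), (255, 255, 255)], [(0, 0, 0), (0, 128, 0)]]
mask = [[True, False], [False, True]]
print(to_grayscale(img))
print(to_silhouette(mask))  # [[0, 255], [255, 0]]
```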

The results showed that humans and the neural networks recognized almost all of the images that retained the objects' shape and texture. But when the texture was changed or removed, the machines fared much worse: the software struggled to identify objects by their shape alone.

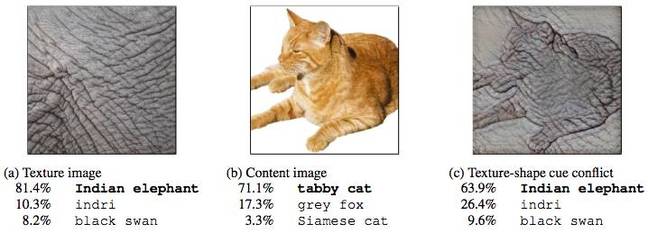

AI systems fail to correctly identify a picture of a cat if it is given the texture of an elephant. Source: Geirhos et al

“These experiments provide behavioral evidence in favor of the texture hypothesis: a cat with an elephant texture is an elephant to CNNs, and still a cat to humans,” the paper stated.

Neural networks are lazy learners

It appears humans recognize objects by their overall shape, while machines rely on smaller details, particularly textures. When shown objects with a mismatched texture, such as the cat with elephant skin, the 97 human participants based their answer on the object's shape 95.9 per cent of the time on average, while the neural networks did so only 17.2 to 42.9 per cent of the time.
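These cue-conflict figures are easiest to read as a shape-bias score. As we understand the paper's definition, it counts only answers that match either the shape class or the texture class, and reports the fraction of those that match the shape. A minimal sketch under that assumption; the function name and sample trials are hypothetical.

```python
from typing import Iterable, Tuple


def shape_bias(trials: Iterable[Tuple[str, str, str]]) -> float:
    """trials: (prediction, shape_class, texture_class) for each cue-conflict image.

    Answers matching neither cue are ignored; the result is shape hits / (shape + texture hits).
    """
    shape_hits = texture_hits = 0
    for prediction, shape_cls, texture_cls in trials:
        if prediction == shape_cls:
            shape_hits += 1
        elif prediction == texture_cls:
            texture_hits += 1
    decided = shape_hits + texture_hits
    if decided == 0:
        raise ValueError("no answer matched either the shape or the texture class")
    return shape_hits / decided


# Hypothetical answers for a cat-shaped image with elephant texture
trials = [
    ("cat", "cat", "elephant"),       # went by shape
    ("elephant", "cat", "elephant"),  # went by texture
    ("elephant", "cat", "elephant"),  # went by texture
    ("dog", "cat", "elephant"),       # matched neither cue, so not counted
]
print(shape_bias(trials))
```

On this scale, a score near 1.0 means the viewer goes by shape, and a score near 0 means it goes by texture.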

“On a very fundamental level, our work highlights how far current CNNs are from learning the 'true' structure of the world,” Robert Geirhos, coauthor of the paper and a PhD student at the university, explained to The Register.

“They learn the easiest associations possible, and in many cases this means associating small texture-like bits and pieces of an image with a class label, rather than learning how objects [are typically shaped]. And I think adversarial examples are clearly pointing to the same problem – current CNNs don't learn the 'true' structure of the world.”

The problem may lie in the dataset. ImageNet contains over 14 million images of objects split across many categories, yet that apparently isn't enough: it seems to lack sufficient viewing angles and other cues. Software trained on this data can't understand how things are actually formed, shaped, and proportioned.

The algorithms can tell butterfly species from the patterns on the creatures' wings, but take away that detail, and the code seemingly has no idea what it's actually looking at. It's fake smart.

“These datasets may just be too simple: if they can be solved by detecting textures, why bother checking whether the shape matches, too?” said Geirhos.

“For humans, it is hard to imagine recognizing a car by detecting a specific tire pattern that only images from the 'car' category have, but for CNNs this might just be the easiest solution since the shape of an object is much bigger, and changes a lot depending on viewpoint, etc. Ultimately, we may need better datasets that don't allow for this kind of ‘cheating’.”

Time for a tech fix

Back to the adversarial question: does this over-reliance on texture explain why slightly altered colors and patterns in pictures fool neural networks? Does corrupting a section of banana peel make the code think it's looking at the texture of a shiny metal toaster?

To investigate this, the researchers built Stylized-ImageNet, a new dataset based on ImageNet. Using style transfer, they replaced each image's original texture with the style of a randomly chosen painting, then trained a ResNet-50 model on the result. Interestingly, although this CNN was more robust to image distortions, it still fell victim to adversarial examples. So the answer to our question is no.
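Stylized-ImageNet was generated with AdaIN (adaptive instance normalization) style transfer. Its core step is small: rescale each channel of the content image's feature maps so its mean and standard deviation match those of the style image's features, which carry much of the texture information. The plain-Python sketch below shows only that step and assumes the features have already been extracted and flattened; the full method uses a pretrained VGG encoder and a learned decoder, neither of which is shown, and the names here are hypothetical.

```python
import statistics
from typing import List

FeatureMap = List[float]  # one channel of a feature map, flattened


def adain(content: List[FeatureMap], style: List[FeatureMap], eps: float = 1e-5) -> List[FeatureMap]:
    """Give each content channel the mean and standard deviation of the matching style channel."""
    if len(content) != len(style):
        raise ValueError("content and style need the same number of channels")
    out = []
    for c, s in zip(content, style):
        c_mean, c_std = statistics.fmean(c), statistics.pstdev(c)
        s_mean, s_std = statistics.fmean(s), statistics.pstdev(s)
        # Normalise away the content's own statistics, then apply the style's
        out.append([s_std * (x - c_mean) / (c_std + eps) + s_mean for x in c])
    return out


content = [[1.0, 2.0, 3.0]]         # hypothetical content-image channel
style = [[10.0, 20.0, 30.0, 40.0]]  # hypothetical painting channel
stylized = adain(content, style)
print(round(statistics.fmean(stylized[0]), 3))  # 25.0
print(statistics.pstdev(stylized[0]))           # close to the style channel's spread
```

The relative layout of the content values (their ordering and spacing) survives, which is roughly how the image's shapes are kept while its texture statistics are swapped out.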

“Even a model trained on Stylized-ImageNet is still susceptible to adversarial examples, so unfortunately a shape bias is not a solution to adversarial examples,” Geirhos explained.

“However, current state-of-the-art CNNs are very susceptible to random noise such as rain or snow in the real world, [which is] a problem for autonomous driving. The fact that the shape-based CNN that I trained turned out to be much more robust on nearly all tested sorts of noise seems like a promising result on the way to more robust models.”

The texture versus shape problem may not sound like such a big deal, but it could have far-reaching consequences. Some systems pretrained on ImageNet might not perform so well in other domains, like facial recognition or medical imaging.

In fact, other research has shown it’s pretty easy to evade identification with a pair of glasses or even fake paper ones. ®