
Looking for super speed from Optane? It's doable but quite difficult

Understanding why is still unOptanium

Intel’s Optane DC Persistent Memory DIMM can make key storage applications 17 times faster, but systems builders must navigate ‘complex performance characteristics’ to get the best out of the technology.

Researchers at UC San Diego put the Intel Optane DC Persistent Memory Module through its paces and found that application performance varies widely. But the overall picture is that of a boost in performance from using Optane DIMMs.

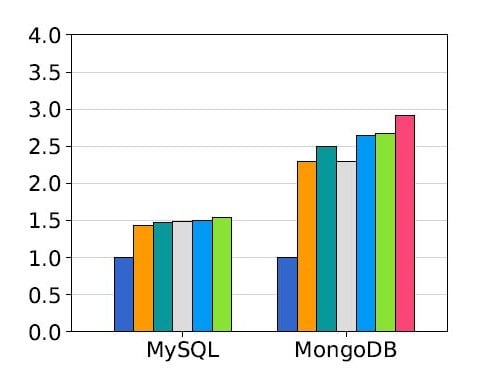

The same is true for the byte-addressable memory-mapped mode, where RocksDB performance increases 3.5 times while Redis 3.2 gains just 20 per cent. Understanding the root causes of these differences is likely to be fertile ground for developers and researchers, the UC San Diego team notes.

The researchers state that Optane DC memory used in the caching Memory mode provides comparable performance to DRAM for many real-world applications and can greatly increase the total amount of memory available on the system.

Like nothing I've ever seen

When used in App Direct mode, Optane DC memory with a file system that supports non-volatile memory will drastically accelerate performance for many real-world storage applications.

However, the researchers also warn that Optane’s performance properties are significantly different from any medium that is currently deployed, and that more research is required to understand how it can be used to best advantage.

Optane is Intel’s 3D XPoint non-volatile memory technology that is pitched as a new tier in the memory hierarchy between DRAM and flash storage.

The Optane DC Persistent Memory version slots into spare DIMM sockets in servers with Intel’s latest Cascade Lake AP Xeon processors.

Optane DIMMs can operate in two ways: Memory mode and App Direct mode. Memory mode combines Optane with a conventional DRAM DIMM that serves as a cache for the larger but slower Optane, delivering a larger memory pool overall. In App Direct mode, there is no cache and Optane simply acts as a pool of persistent memory.

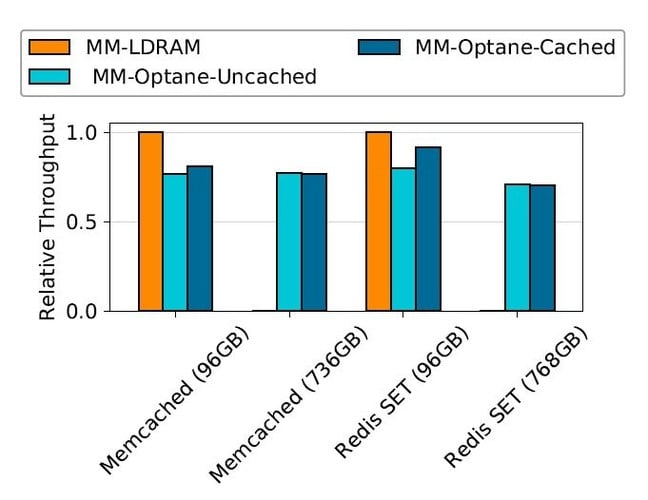

The UC San Diego testers found that in Memory mode, the caching mechanism works well for larger memory footprints. Memcached and Redis, each configured as a non-persistent key-value store with a 96 GB data set, produced the results below.

Not surprisingly, replacing DRAM with uncached Optane DC reduces performance by 20.1 per cent for Memcached and 23 per cent for Redis, whereas with the DRAM cache enabled the drop is between 8.6 and 19.2 per cent.

Lower performance may sound undesirable, but the trade-off lets applications work with a much larger in-memory dataset: the test server could accommodate 1.5 TB of Optane DC memory per socket, compared with 192 GB of DRAM.
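To put that trade-off in numbers, here is a rough back-of-the-envelope sketch using only the figures quoted above. The function names are made up for illustration, and it assumes binary units (1 TB = 1,024 GB); with decimal units the capacity ratio comes out slightly lower.

```python
# Figures quoted in the article; the unit convention is an assumption.
OPTANE_PER_SOCKET_GB = 1.5 * 1024   # 1.5 TB, assuming 1 TB = 1024 GB
DRAM_PER_SOCKET_GB = 192

# Reported slowdown when DRAM is replaced with uncached Optane DC.
UNCACHED_DROP_PERCENT = {"Memcached": 20.1, "Redis": 23.0}


def capacity_ratio(optane_gb: float, dram_gb: float) -> float:
    """How many times more memory per socket Optane DC offers than DRAM."""
    return optane_gb / dram_gb


def relative_throughput(drop_percent: float) -> float:
    """Fraction of DRAM-only performance left after a given percentage drop."""
    return 1.0 - drop_percent / 100.0


ratio = capacity_ratio(OPTANE_PER_SOCKET_GB, DRAM_PER_SOCKET_GB)
print(f"Capacity per socket: {ratio:.1f}x DRAM")
for app, drop in UNCACHED_DROP_PERCENT.items():
    print(f"{app}: {relative_throughput(drop):.1%} of DRAM-only performance")
```

On these assumptions, the uncached configuration gives up roughly a fifth to a quarter of throughput in exchange for several times the memory capacity per socket.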

Optane DC can be treated as if it were storage when used as persistent memory. It can also be accessed as byte-addressable memory. The university team tested both.

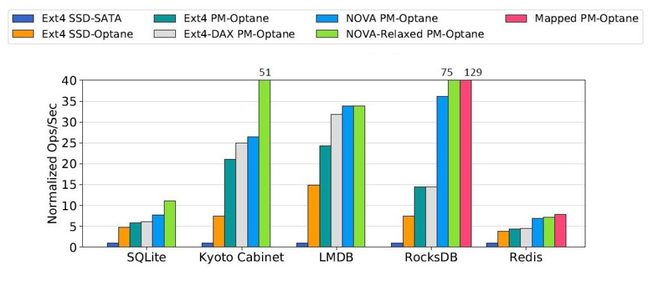

In the charts below, a number of database tools are tested using Ext4, with and without a direct access (DAX) mode to support persistent memory, and the NOVA file system, which was designed for persistent memory.

The blue and orange columns show a flash SSD and an Optane-based SSD for comparison. The red column shows Optane DC used as byte-addressable memory, which requires applications that can map it into their address space and then access it directly with loads and stores.
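For readers unfamiliar with the map-then-load-and-store pattern, here is a minimal sketch in Python. It is not the researchers' code: an ordinary temporary file stands in for a file on a DAX-mounted file system, the helper name `store_and_load` is made up for illustration, and `mmap.flush()` is only a rough stand-in for the cache-flush steps that real persistent-memory software would need.

```python
import mmap
import os
import tempfile


def store_and_load(path: str, offset: int, payload: bytes, size: int = 4096) -> bytes:
    """Map a file into the address space, write bytes in place, read them back."""
    # Create a zero-filled backing file of the requested size.
    with open(path, "wb") as f:
        f.truncate(size)

    with open(path, "r+b") as f:
        with mmap.mmap(f.fileno(), size) as region:
            # "Store": write straight into the mapping, no write() call involved.
            region[offset:offset + len(payload)] = payload
            # Ask the OS to push the change to the backing file.
            region.flush()
            # "Load": read the bytes back through the same mapping.
            return bytes(region[offset:offset + len(payload)])


# Usage: a temporary file plays the role of the persistent-memory file.
with tempfile.TemporaryDirectory() as tmp:
    demo_path = os.path.join(tmp, "pmem_standin.bin")
    result = store_and_load(demo_path, 128, b"hello")
    print(result)
```

The point of the pattern is that, once the mapping exists, reads and writes are plain memory accesses rather than file system calls, which is why applications have to be written (or adapted) to use it.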

You can download the full report from arXiv here. ®