
As Alexa's secret human army is revealed, we ask: Who else has been listening in on you?

The Lives of Others: Siri, Google and Cortana edition

Sneezes and homophones – words that sound like other words – are tripping smart speakers into allowing strangers to hear recordings of your private conversations.

These strangers live an eerie existence, a little like the Stasi agent in the film The Lives of Others. They are machine learning data analysts contracted to work for the device manufacturer, and the snippets they hear were never intended for third-party consumption.

Bloomberg has unearthed the secrets of Amazon's analysts in Romania, reporting on their work for the first time. "A global team reviews audio clips in an effort to help the voice-activated assistant respond to commands," the newswire wrote. Amazon had not previously acknowledged the existence of this process, or the extent of human involvement in it.

The Register asked Apple, Microsoft and Google, which all have voice assistants of their own, for a statement on the extent of human involvement in reviewing these recordings, and on their retention policies.

None had provided the information by the time of publication.

What's it for?

“Amazon, in its marketing and privacy policy materials, doesn’t explicitly say humans are listening to recordings of some conversations picked up by Alexa” #fauxtomation #potemkinAI https://t.co/IoATtepH56

— One Ring (doorbell) to surveil them all... (@hypervisible) April 11, 2019

As the Financial Times explained this week (paywalled): "Supervised learning requires what is known as 'human intelligence' to train algorithms, which very often means cheap labour in the developing world."

Amazon sends fragments of recordings to the training team to improve Alexa's speech recognition. Thousands of people are employed to listen to Alexa recordings, in locations including Boston, India and Romania.

Alexa only starts recording when it hears a wake word, according to its maker. However, because Alexa can mistake other sounds and homophones for its wake word, the team was able to hear audio never intended for transmission to Amazon. The team received recordings of embarrassing and disturbing material, including at least one sexual assault, Bloomberg reported.

Amazon encourages staff disturbed by what they hear to console each other, but did not say whether counselling was available.

Retaining the audio files is supposedly voluntary, but the information Amazon gives users makes this far from clear. Amazon and Google both let you delete voice recordings from your account, for example, but deletion may not be permanent: the recording could continue to be used for training purposes. Google has published its own explanation of how this works.

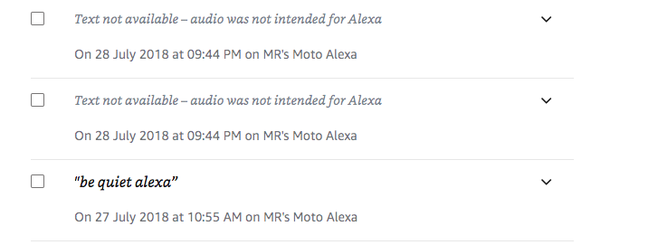

A user's voice history shows Amazon continues to hold audio recordings marked "not intended for Alexa".

We emailed Microsoft, Google and Apple with the following questions.

Amazon has acknowledged that humans received anonymised samples of recordings from its Alexa products, to improve the service. Does [Google/Microsoft/Apple] also use humans to improve the service?

Voice and audio collection can be turned off. Does this apply to [your] products?

When a recording is "deleted" – disassociated from the user's account – how long is it retained on [your] servers for training purposes?

As mentioned, we have yet to receive a formal statement from any of the three.

Privacy campaigners point to two possible remedies. Voice platforms could offer an "auto purge" function that deletes recordings older than a day, or 30 days. And they could ensure that, once deleted, a file is gone for good. Both merit formal legal clarity. ®
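To make the first proposal concrete, here is a minimal Python sketch of an age-based "auto purge" rule. The `Recording` type, the `auto_purge` function and the sample data are all hypothetical: none of the voice platforms publishes such an interface, and the sketch only models choosing which recordings to delete. It says nothing about the second concern, whether a "deleted" file lives on in training datasets.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class Recording:
    # Hypothetical record type; real voice platforms do not expose this structure.
    recording_id: str
    captured_at: datetime


def auto_purge(recordings, max_age, now=None):
    """Split recordings into (kept, purged) lists by age.

    Hypothetical retention rule: anything captured more than `max_age`
    before `now` is marked for deletion; everything newer is kept.
    """
    if now is None:
        now = datetime.now(timezone.utc)
    cutoff = now - max_age
    kept = [r for r in recordings if r.captured_at >= cutoff]
    purged = [r for r in recordings if r.captured_at < cutoff]
    return kept, purged


if __name__ == "__main__":
    now = datetime(2019, 4, 12, tzinfo=timezone.utc)
    history = [
        Recording("a", now - timedelta(hours=2)),
        Recording("b", now - timedelta(days=3)),
        Recording("c", now - timedelta(days=45)),
    ]
    # Compare the two policies campaigners mention: one day versus 30 days.
    for days in (1, 30):
        kept, purged = auto_purge(history, timedelta(days=days), now=now)
        print(days, [r.recording_id for r in kept], [r.recording_id for r in purged])
```

Under the one-day policy only the two-hour-old recording survives; under the 30-day policy the three-day-old one survives too.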

Bootnote

The FT noted that "one ad on Amazon's Mechanical Turk marketplace showed a human intelligence task that would pay someone 25 cents to spend 12 minutes teaching an algorithm to make a green triangle navigate a maze to reach a green square. That's an hourly rate of $1.25." The rate returned to you, the Alexa owner, for training Amazon's system is, of course, zero.