Google claims web search will be 10% better for English speakers – with the help of AI

New language model can think in both directions, fingers crossed

Google has updated its search algorithms to tap into an AI language model that is better at understanding netizens' queries than previous systems.

Pandu Nayak, a Google fellow and vice president of search, announced this month that the Chocolate Factory has rolled out BERT, short for Bidirectional Encoder Representations from Transformers, for its most fundamental product: Google Search.

To pull all of this off, researchers at Google AI built a neural network known as a transformer. The architecture is suited to handling sequences in data, which makes it ideal for dealing with language. To understand a sentence, you must look at all the words in it in a specific order. Unlike previous transformer models that consider words in only one direction, left to right, BERT looks at the words on both sides of each word to take in the overall context of a sentence.

“BERT models can therefore consider the full context of a word by looking at the words that come before and after it—particularly useful for understanding the intent behind search queries,” Nayak said.
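As a rough illustration of the difference Nayak describes, the toy sketch below lists which words are available as context for "to" in the example query under a left-to-right reading versus a bidirectional one. It is not how BERT is implemented: real models apply attention over subword tokens, and the helper name `visible_context` is made up for this example.

```python
def visible_context(tokens, position, bidirectional):
    """Return the words a reader may use as context for tokens[position].

    Hypothetical helper for illustration only: a left-to-right reader sees
    just the preceding words, a bidirectional one also sees what follows.
    """
    left = tokens[:position]
    right = tokens[position + 1:] if bidirectional else []
    return left + right


query = "2019 brazil traveler to usa need a visa".split()
idx = query.index("to")

# Left-to-right: the destination ("usa") is not yet visible at "to".
left_only = visible_context(query, idx, bidirectional=False)

# Bidirectional: both the traveler's origin and the destination are visible.
both_sides = visible_context(query, idx, bidirectional=True)

print("left-to-right:", left_only)
print("bidirectional:", both_sides)
```

In the one-directional case, "usa" never appears in the context for "to", which hints at why the direction of travel is easy to get wrong.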

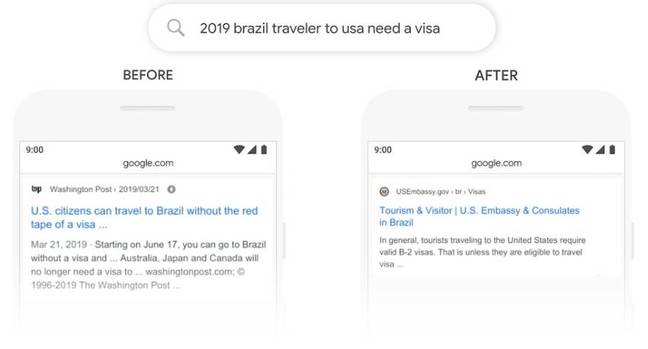

For example, here's what the previous Google Search and the new BERT-powered search look like when you query: “2019 brazil traveler to usa need a visa.”

Left: The result returned by the old Google Search, which incorrectly understands the query as a US traveler heading to Brazil. Right: The result returned by the new Google Search using BERT, which correctly identifies that the search is for a Brazilian traveler going to the US. Image credit: Google.

BERT has a better grasp of the significance behind the word "to" in the new search. The old model returns results that show information for US citizens travelling to Brazil, instead of the other way around. It looks like BERT is a bit patchy, however, as a Google Search today still appears to give results as if it's American travelers looking to go to Brazil:

Current search result for the query: 2019 brazil traveler to usa need a visa. It still thinks the sentence means a US traveler going to Brazil.

The Register asked Google about this, and a spokesperson told us... the screenshots were just a demo. Your mileage may vary.

"In terms of not seeing those exact examples, the side-by-sides we showed were from our evaluation process, and might not 100 per cent mirror what you see live in Search," the PR team told us. "These were side-by-side examples from our evaluation process where we identified particular types of language understanding challenges where BERT was able to figure out the query better - they were largely illustrative.

"Search is dynamic, content on the web changes. So it's not necessarily going to have a predictable set of results for any query at any point in time. The web is constantly changing and we make a lot of updates to our algorithms throughout the year as well."

Nayak claimed BERT would improve 10 per cent of all Google searches. The biggest changes will be for longer queries, apparently, where sentences are peppered with prepositions like “for” or “to.”

“BERT will help Search better understand one in 10 searches in the US in English, and we’ll bring this to more languages and locales over time,” he said.

Google will run BERT on its custom Cloud TPU chips; it declined to disclose how many would be needed to power the model. The most powerful Cloud TPU option currently is the Cloud TPU v3 Pod, which contains 64 ASICs, each offering 420 teraflops of performance and 128GB of high-bandwidth memory.

At the moment, BERT will work best for queries made in English. Google said it also works in two dozen countries for “featured snippets” of text in other languages, such as Korean, Hindi, and Portuguese. ®