Explain yourself, mister: Fresh efforts at Google to understand why an AI system says yes or no

Chief scientist reveals why company hasn't released an API for facial recognition

Google has announced a new Explainable AI feature for its cloud platform, which provides more information about the features that lead an AI model to its predictions.

Artificial neural networks, which are used by many of today's machine learning and AI systems, are modelled to some extent on biological brains. One of the challenges with these systems is that as they have become larger and more complex, it has also become harder to see the exact reasons for specific predictions. Google's white paper on the subject refers to "loss of debuggability and transparency".

The uncertainty this introduces has serious consequences. It can disguise spurious correlations, where the system picks up on an irrelevant or unintended feature in the training data. It also makes it hard to fix AI bias, where predictions are made based on features that are ethically unacceptable.

Google did not invent AI explainability; it is a widely researched field. The challenge is how to present the workings of an AI system in a form that is easily intelligible.

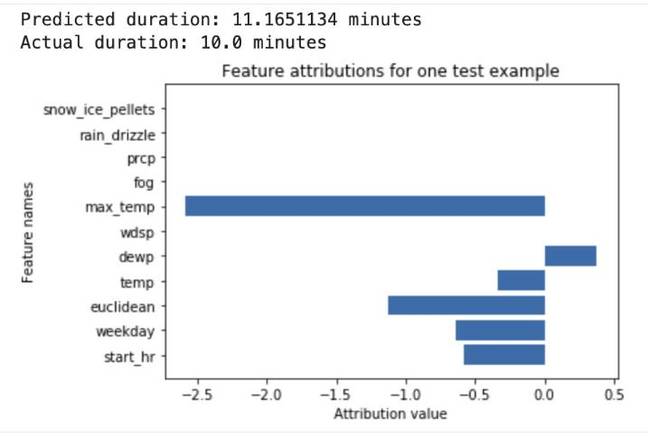

Google has come up with a set of three tools under this heading of "AI Explainability" that may help. The first and perhaps most important is AI Explanations, which lists features detected by the AI along with an attribution score showing how much each feature affected the prediction. In an example from the docs, a neural network predicts the duration of a bike ride based on weather data and previous ride information. The tool shows factors like temperature, day of week and start time, scored to show their influence on the prediction.
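To make the idea of an attribution score concrete, here is a minimal sketch using exact Shapley values on a toy model. Everything in it is an assumption for illustration: the `predict_duration` function, its coefficients, the feature names and the baseline ride are all made up, and this is not Google's implementation or API. It only shows one common way to split a prediction among input features.

```python
from itertools import permutations

# Hypothetical toy model (not from Google's docs): predicts bike ride
# duration in minutes from three made-up features.
def predict_duration(features):
    duration = 10.0
    duration += 0.4 * features["temperature_c"]
    duration += 6.0 * features["is_weekend"]
    # Interaction term: weekend rides starting at noon or later run longer.
    if features["is_weekend"] and features["start_hour"] >= 12:
        duration += 5.0
    return duration

def shapley_attributions(model, instance, baseline):
    """Average each feature's marginal contribution over every ordering in
    which features are switched from the baseline value to the actual one."""
    names = list(instance)
    totals = {name: 0.0 for name in names}
    orderings = list(permutations(names))
    for order in orderings:
        current = dict(baseline)
        previous = model(current)
        for name in order:
            current[name] = instance[name]
            value = model(current)
            totals[name] += value - previous
            previous = value
    return {name: total / len(orderings) for name, total in totals.items()}

# Assumed reference ride (baseline) and the ride being explained.
baseline = {"temperature_c": 10.0, "is_weekend": 0, "start_hour": 8}
ride = {"temperature_c": 25.0, "is_weekend": 1, "start_hour": 14}

attributions = shapley_attributions(predict_duration, ride, baseline)
for name, score in attributions.items():
    print(f"{name}: {score:+.2f} minutes")
```

The scores add up to the difference between the prediction for the ride and the prediction for the baseline, which is what makes them readable as "how much each feature affected the prediction". Enumerating every ordering only works for a handful of features; real tools rely on approximations.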

In the case of images, an overlay shows which parts of the picture were the main factors in the classification of the image content.

There is also a What-If tool that lets you see how model performance changes when you manipulate individual attributes, and a continuous evaluation tool that feeds sample results to human reviewers on a schedule to assist monitoring of results.
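The what-if idea can be sketched in a few lines: hold an input fixed, vary one attribute, and watch the prediction move. The code below is a hypothetical illustration only; the `what_if` helper, the toy `predict_duration` model and the feature names are assumptions, and it does not use the actual What-If tool's interface.

```python
# Hypothetical toy model standing in for a trained predictor.
def predict_duration(features):
    return 10.0 + 0.4 * features["temperature_c"] + 6.0 * features["is_weekend"]

def what_if(model, instance, feature, values):
    """Return (value, prediction) pairs with one feature overridden at a
    time, leaving the original instance unchanged."""
    results = []
    for value in values:
        variant = {**instance, feature: value}
        results.append((value, model(variant)))
    return results

ride = {"temperature_c": 25.0, "is_weekend": 1}
sweep = what_if(predict_duration, ride, "temperature_c", [0.0, 10.0, 20.0])
for value, prediction in sweep:
    print(f"temperature_c={value}: predicted {prediction:.1f} minutes")
```

A sweep like this shows how sensitive a prediction is to a single attribute, which is a cheap first check before trusting a model's output.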

AI Explainability is useful for evaluating almost any model and near-essential for detecting bias, which Google considers part of its approach to responsible AI.

Google's chief scientist for AI and machine learning, Dr Andrew Moore, spoke on the subject at the company's Next event in London. It was only "about five or six years ago that the academic community started getting alarmed about unintended consequences [of AI]," he said.

He advocated a nuanced approach. "We wanted to use computer vision to check to make sure that folks on construction sites were safe, to do an alert if someone wasn't wearing a helmet. It seems at first sight obviously good because it helps with safety, but then there will be a line of argument that this is turning into a world where we are monitoring workers in an inhuman way. We have to work through the complexities of these things."

Moore said that the company is cautious about facial recognition. "We made the considered decision, as we have strong facial recognition technology, to not launch general facial recognition as an API but instead to package it inside products where we can be assured that it will be used for the right purpose."

Moore underlined the importance of explainability to successful AI. "If you've got a safety critical system or a societally important thing which may have unintended consequences, if you think your model's made a mistake, you have to be able to diagnose it. We want to explain carefully what explainability can and can't do. It's not a panacea.

"In Google it saved our own butts against making serious mistakes. One example was with some work on classifying chest x-rays where we were surprised that it seemed to be performing much better than we thought possible.

"So we were able to use these tools to ask the model, this positive diagnosis of lung cancer, why did the machine learning algorithm think this was right? The ML algorithm through the explainability interface revealed to us that it had hooked onto some extra information. It happened that in the training set, in the positive examples, on many of them the physician had lightly marked on the slide a little highlight of where they thought the tumour was, and the ML algorithm had used that as a major feature of predicting. That enabled us to pull back that launch."

How much of this work is exclusive to Google? "This is something where we're engaging with the rest of the world," said Moore, acknowledging that "there is a new tweak of how we're using neural networks which is not public at the moment."

Will there still be areas of uncertainty about why a prediction was made? "This is a diagnostic tool to help a knowledgeable data scientist but there are all kinds of ways in which there could still be problems," Moore told us. "For all users of this toolset we've asked them to make sure that they do digest the white paper that goes with it which explains many of the dangers, for example correlation versus causation. But it's taking us out of a situation where there's very little insight into something where the human has got much more information to interpret."

It is worth noting that tools to help enable responsible AI are not the same as enforcing responsible AI, which is an even harder problem. ®