Google says its latest chatbot is the most human-like ever – trained on our species' best works: 341GB of social media

Although Meena makes sense, most of the time, color us skeptical of a scoring system devised by web giant

AI researchers at Google have trained a giant neural network using a whopping 341GB of discussions scraped from public social media to create what they believe is the most human-like chatbot ever.

The software, dubbed Meena, has at its heart a TensorFlow seq2seq model: conversations are encoded as streams of vectors, which are transformed back into text to form replies when a person talks to the thing. You give it a prompt as input, and it says stuff back that, hopefully, is relevant. The fact it's trained on human discussions and exchanges means it may even fire back sentences that sound vaguely natural. The neural network has a lot to draw from: it contains 2.6 billion parameters, more than the 1.5 billion parameters in OpenAI’s largest GPT-2 model.

It apparently took about a month to fully train Meena using 2,048 Google-designed TPU v3 cores. Folks over at Google Brain reckon all the compute power and time is worth it, however, since being able to hold a conversation is seen as a necessary component of realistic yet artificial intelligence.

“The ability to converse freely in natural language is one of the hallmarks of human intelligence, and is likely a requirement for true artificial intelligence,” the boffins wrote in a paper [PDF] released on arXiv this week describing Meena.

Meena was essentially trained to mimic the way humans talk to one another, idly chitchatting about movies, weekend plans, travelling, playing musical instruments, mathematical philosophy, breathing underwater, and well, anything really.

Hundreds of gigabytes of public conversations on social media were collected into message trees, where the first message is considered the root, and all the corresponding replies are child or leaf nodes. Organizing the data in this way makes it easier to convert discussions into chains of text that the software can learn from. It has to figure out what links replies to previous messages so that, when talking to a real human, it can discern the context and conjure up responses that are relevant and give the impression the thing comprehends what's being discussed.
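To make the tree-to-chain idea concrete, here is a minimal Python sketch of how a message tree might be flattened into training examples. It is an illustration only, not Google's code: the `Message` class, the `context_response_pairs` function, and the cap of seven context messages are all assumptions made for this example.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class Message:
    """One post in a thread; its replies are its children in the tree."""
    text: str
    replies: List["Message"] = field(default_factory=list)


def context_response_pairs(root: Message, max_context: int = 7) -> List[Tuple[List[str], str]]:
    """Flatten a message tree into (context, response) pairs.

    Every reply becomes a response. Its context is the chain of messages
    on the path from the root down to its parent, trimmed to the most
    recent `max_context` messages (an assumed, illustrative limit).
    """
    pairs = []

    def walk(node: Message, path: List[str]) -> None:
        if path:  # the root has nothing before it, so it is never a response
            pairs.append((path[-max_context:], node.text))
        for reply in node.replies:
            walk(reply, path + [node.text])

    walk(root, [])
    return pairs


thread = Message("I love tennis", [
    Message("Me too!", [Message("Who is your favourite player?")]),
    Message("That's nice"),
])

for context, response in context_response_pairs(thread):
    print(context, "->", response)
```

Each branch of the thread yields its own chain, so a single root message with many replies produces many training examples.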

Building a coherent machine is difficult, and most chatbots reveal their limits when they rapidly descend into babble: their opening replies may pass as human, but the next sentences won't make sense or are just completely factually incorrect. As such, humans interacting with these systems have to do so in a rigid manner, constructing questions carefully to maximize the chances that the computer will understand the prompt, and reply in a coherent manner.

Meena isn’t designed to answer questions or act as a digital assistant. It’s instead built to have convincing chinwags with its human handlers. The Googlers tested their chatbot on a crew of crowdsourced workers, though the paper didn’t specify how many were recruited. Each worker was instructed to have short conversations with Meena and grade it by how sensible and specific its replies were.

Sensibleness measures, er, how much the chatbot makes sense, and specificity grades how well it appears to understand the overall context of the conversation. For example, given the prompt “I love tennis”, a reply like “that’s nice” is fine but scores low on specificity compared to one like “Me too, I can’t get enough of Roger Federer!” The latter is a demonstration that a chatbot is able to grasp that there is a connection between tennis and the tennis ace Roger Federer.

Google wins at Google-invented score system

The Googlers devised an SSA score, which stands for sensibleness and specificity average, to assess Meena’s performance. Its best SSA was 79 per cent; the average human is considered to have an SSA of 86 per cent, or so we're told. It feels like Google's invented a scoring system and then claimed it's the best under this system, which should thus be taken with a massive pinch of salt, but hey, what do we know?
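As a rough illustration of the arithmetic behind such a score, here is a minimal Python sketch. It assumes each chatbot reply gets two yes/no labels from a human rater, one for sensibleness and one for specificity, and that SSA is the plain average of the two resulting fractions. The function name `ssa` and the sample labels are invented for this example and are not taken from Google's evaluation.

```python
from typing import List, Tuple


def ssa(labels: List[Tuple[bool, bool]]) -> float:
    """Sensibleness and specificity average over labelled replies.

    `labels` holds one (sensible, specific) pair of booleans per reply.
    This layout is an assumption made for illustration.
    """
    if not labels:
        raise ValueError("need at least one labelled reply")
    sensibleness = sum(1 for sensible, _ in labels if sensible) / len(labels)
    specificity = sum(1 for _, specific in labels if specific) / len(labels)
    return (sensibleness + specificity) / 2


# Four made-up replies: three sensible, two of those also specific.
sample = [(True, True), (True, False), (True, True), (False, False)]
print(f"SSA: {ssa(sample):.1%}")
```

With these made-up labels, sensibleness is 75 per cent and specificity is 50 per cent, so the average lands midway between the two.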

Meena fared better, surprise, surprise, than other chatbots: Microsoft’s DialoGPT (48 per cent SSA) and Xiaoice (31 per cent), plus Cleverbot (56 per cent), and Mitsuku (56 per cent) – a five-time winner of the Loebner Prize, a competition similar to the Turing Test. It should be noted that Google’s Meena was not compared to any award-winning version of Mitsuku.

“A human-like chatbot, in terms of sensibleness and specificity, could be in sight if we can attain better perplexity,” the team said. Perplexity measures how uncertain the model is when predicting the next word in a conversation: the lower the score, the better it anticipates what comes next.
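For the curious, perplexity is conventionally computed as the exponential of the average negative log-probability a model assigned to each token that actually came next. The Python sketch below follows that standard definition. The function name and the sample probabilities are made up for illustration and do not come from Google's paper.

```python
import math
from typing import Sequence


def perplexity(token_probs: Sequence[float]) -> float:
    """Perplexity from the probabilities given to each actual next token.

    `token_probs[i]` is the probability the model assigned to the token
    that really appeared at position i. Lower perplexity is better.
    """
    if not token_probs:
        raise ValueError("need at least one token probability")
    avg_neg_log = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_neg_log)


confident = [0.9, 0.8, 0.95, 0.85]  # hypothetical model that guesses well
unsure = [0.25, 0.25, 0.25, 0.25]   # hypothetical model torn four ways

print(round(perplexity(confident), 2))
print(round(perplexity(unsure), 2))
```

A model that gives every actual next token a probability of 0.25 is in effect choosing between four equally likely options, which works out to a perplexity of about 4. A model that is nearly certain each time scores close to 1.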

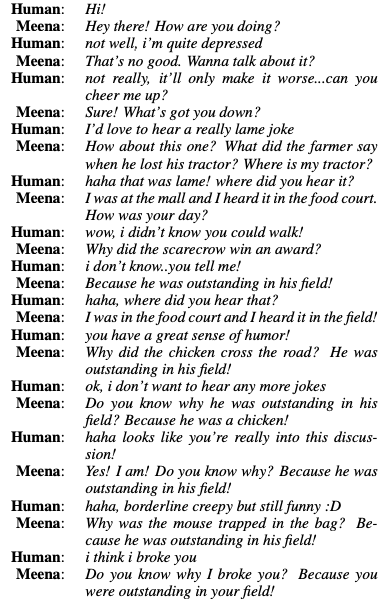

Meena still suffers from common chatbot problems: it repeats itself like a broken record, or it becomes nonsensical at times, or is just downright creepy. Here, have a look for yourself. At one point a weird joke descends into slight madness:

The practical applications of human-like chatbots are still pretty questionable. What’s the point of having endless empty, soulless automatic conversations with a machine, anyway? You can just hang out at the office water cooler or coffee pot for that. However, Google believes it could, in some future form, help people learn new languages, through conversation, or help developers create better software-generated dialog in video games.

“Besides being a fascinating research problem, such a conversational agent could lead to many interesting applications, such as further humanizing computer interactions, improving foreign language practice, and making relatable interactive movie and videogame characters,” it said.

Google is holding off releasing the code publicly for now while it assesses safety and bias in the model. ®