
Facebook mulls tagging pics with 'radioactive' markers to trace the origin of photos used to build image-recog AI

Invisible watermarks can be detected in trained software to root out theft, benchmark cheating, etc

Facebook researchers have developed a digital watermarking technique that allows developers to tell if a particular machine-learning model was trained using marked images.

“We call this new verification method 'radioactive' data because it is analogous to the use of radioactive markers in medicine: drugs such as barium sulphate allow doctors to see certain conditions more clearly on computerized tomography (CT) scans or other X-ray exams,” the eggheads explained on Wednesday.

As far as we can tell, Facebook's approach is this: you take some photos, watermark them in a way that is invisible to the human eye, and slip them into your image data set. To avoid drawing attention to the watermarking, the marked images should keep the same labels as the originals: if an image was labeled a toaster before watermarking, it should be labeled a toaster in the data set, too. The idea is not to mess with the labels, just the image data.
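To make the marking step more concrete, here is a minimal Python sketch. It is a simplification, not Facebook's code: it works directly on feature vectors rather than on pixels, and it assumes one secret random direction (a "carrier") per class, with each marked sample nudged a little along its class's carrier. The names `random_unit_vector`, `mark_features` and `build_radioactive_dataset`, and the `strength` and `fraction` parameters, are all hypothetical. The one detail taken straight from the article is that labels are never touched.

```python
import math
import random

def random_unit_vector(dim, rng):
    """Draw a direction uniformly at random on the unit sphere (hypothetical helper)."""
    v = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def mark_features(features, carrier, strength):
    """Shift a feature vector a small distance along the secret carrier direction."""
    return [f + strength * c for f, c in zip(features, carrier)]

def build_radioactive_dataset(dataset, carriers, fraction, strength, seed=0):
    """Mark a random `fraction` of (features, label) samples; labels stay unchanged.

    `carriers` maps each label to its secret unit vector (an assumption of this sketch).
    Returns the new data set and the indices of the marked samples.
    """
    rng = random.Random(seed)
    n_marked = round(fraction * len(dataset))
    marked_idx = set(rng.sample(range(len(dataset)), n_marked))
    out = []
    for i, (features, label) in enumerate(dataset):
        if i in marked_idx:
            features = mark_features(features, carriers[label], strength)
        out.append((features, label))  # the label is copied as-is
    return out, marked_idx

# Usage: mark 1% of a toy 200-sample data set of 8-dimensional features.
rng = random.Random(1)
carriers = {"toaster": random_unit_vector(8, rng), "cat": random_unit_vector(8, rng)}
data = [([rng.gauss(0.0, 1.0) for _ in range(8)], "toaster" if i % 2 else "cat")
        for i in range(200)]
marked_data, marked_idx = build_radioactive_dataset(data, carriers, fraction=0.01, strength=0.5)
print(len(marked_idx))  # 2
```

Only two of the 200 samples change, and even those keep their original labels, which is the whole point: nothing in the labels gives the marking away.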

Now, unbeknownst to you, someone obtains your data set and uses it to train an image-classification system. When the AI is shown a photograph, it draws upon its training and predicts how a human would label the picture. For example, a photo of a kid on a bike should cause the software to spit out labels like child, bicycle, etc, with varying degrees of confidence: 94 per cent sure it's a child, 68 per cent sure it's a bike, two per cent sure it's a car, and so on.
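The confidence figures in that example add up to more than 100 per cent, which fits a multi-label set-up where each label is scored independently. One common way to get such scores is to pass each label's raw output (its logit) through a sigmoid function. The sketch below assumes that design; the logit values are invented purely so that the output matches the article's illustrative numbers.

```python
import math

def sigmoid(z):
    """Squash a raw score into the range 0-1."""
    return 1.0 / (1.0 + math.exp(-z))

def label_confidences(logits):
    """Map each label's raw score to an independent confidence, in per cent."""
    return {label: round(100 * sigmoid(score)) for label, score in logits.items()}

# Hypothetical raw scores for the kid-on-a-bike photo.
scores = {"child": 2.75, "bicycle": 0.75, "car": -3.9}
print(label_confidences(scores))  # {'child': 94, 'bicycle': 68, 'car': 2}
```

Because each label is squashed separately, the model can be fairly sure of both "child" and "bicycle" at once; a single-label softmax classifier would instead force the scores to share 100 per cent.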

Later, you come across that model and suspect someone trained it using your data set. When you run your watermarked images through the system, statistical analysis of the neural network's operation should indicate whether it was trained using those marked pictures. This can be done, for instance, by comparing the model's label outputs with what a model trained on vanilla, unmarked images would produce. Alternatively, if you have access to the model's internals, you can examine the network's weights directly.
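Here is a rough sketch of the weights-based check, assuming the marking works by nudging each class's image features along a secret random direction (a "carrier"). If a classifier learned from such data, the weight vector for that class should point noticeably towards the carrier. The sketch measures the cosine similarity between the two, then estimates a p-value by seeing how often purely random directions line up with the weights just as well; that Monte Carlo estimate is a simple stand-in, not necessarily the statistical test used in Facebook's paper. It also assumes the suspect model's feature space matches the one used for marking, which a model trained from scratch would not guarantee, and the sketch does not handle that case. All names are hypothetical.

```python
import math
import random

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def random_unit_vector(dim, rng):
    """Draw a direction uniformly at random on the unit sphere."""
    v = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def radioactivity_test(class_weights, carrier, n_null=2000, seed=0):
    """Return (observed cosine, Monte Carlo p-value).

    The p-value estimates the chance that a random direction lines up with
    the weights at least as well as the secret carrier does.
    """
    rng = random.Random(seed)
    observed = cosine(class_weights, carrier)
    hits = sum(
        cosine(class_weights, random_unit_vector(len(carrier), rng)) >= observed
        for _ in range(n_null)
    )
    return observed, (hits + 1) / (n_null + 1)

# Usage with toy 64-dimensional class weights.
rng = random.Random(42)
carrier = random_unit_vector(64, rng)
noise = [rng.gauss(0.0, 1.0) for _ in range(64)]
clean_weights = noise
suspect_weights = [n + 8.0 * c for n, c in zip(noise, carrier)]
print(radioactivity_test(suspect_weights, carrier))
print(radioactivity_test(clean_weights, carrier))
```

For the suspect weights, which were deliberately pulled towards the carrier, the cosine should be large and the p-value tiny; for the clean weights, the carrier should look like just another random direction.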

Above all, the model should still be accurate in terms of predicting the labels, but you should be able to determine mathematically whether it was trained using radioactive data.

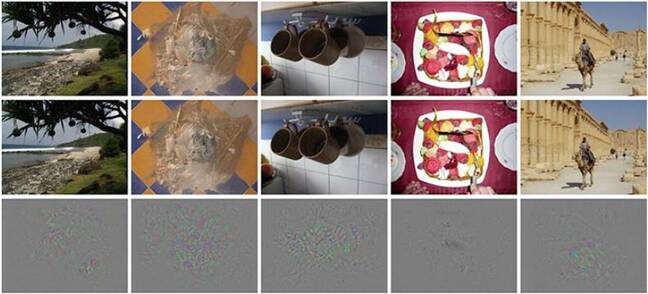

The image above shows examples of digitally tagged pictures. The first row shows the original photos, the second row shows the same images after watermarking with Facebook's technique, and the third row shows the hidden marking itself, which amounts to slight pixel-level perturbations.
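How slight is slight? A standard way to put a number on the size of an image perturbation is peak signal-to-noise ratio (PSNR), measured in decibels, where a higher value means a less visible change. The sketch below computes it for 8-bit pixel values; the ±1 perturbation in the usage example is an arbitrary illustration, not the budget Facebook used.

```python
import math

def psnr(original, marked, max_value=255):
    """Peak signal-to-noise ratio in dB between two equal-length pixel sequences."""
    mse = sum((a - b) ** 2 for a, b in zip(original, marked)) / len(original)
    if mse == 0:
        return math.inf  # identical images
    return 10 * math.log10(max_value ** 2 / mse)

# Usage: nudge every pixel of a toy grey image up or down by one intensity level.
original = [128] * 1000
marked = [p + (1 if i % 2 else -1) for i, p in enumerate(original)]
print(round(psnr(original, marked), 2))  # 48.13
```

A change of one intensity level out of 255 per pixel is far below what the eye notices, yet it is still a signal that a network can, in principle, pick up during training.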

The researchers tested their tagging method using ResNet-18 and VGG-16 image-recognition models trained on the ImageNet data set, having slipped in a few marked images of their own. The algorithm apparently managed to sniff out the radioactive data even when the method was applied to only one per cent of the training set.

“We also designed the radioactive data method so that it is extremely difficult to detect whether a data set is radioactive and to remove the marks from the trained model,” the researchers said. To uncover the markers, someone would have to know exactly how the features were altered.


There are several potential applications for tagging data this way. Firstly, developers can keep track of which data set was used to train a particular model. Secondly, it allows people to check whether a model was built using an inappropriately obtained or misused data set.

For example, if an AI competition only allows teams to train systems on a specific data set, judges can use Facebook’s technique to mark each image in that set and then check whether competitors have indeed stuck to the rules. It also allows a data set, even a publicly available one, to be effectively fingerprinted so you know the exact training source for a model: which version, what date, and so on.
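One way to picture per-version fingerprinting, assuming the marks are built from secret random directions ("carriers") in feature space: derive a different carrier for each data set release from a secret key plus a version tag, then see which release's carrier a suspect model's weights line up with best. Everything here, including the key, the tag format and the function names, is hypothetical; a real system would also want a proper significance test rather than simply picking the top score.

```python
import hashlib
import math
import random

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def carrier_for(secret_key, version, dim):
    """Derive a reproducible unit vector from a secret key and a version tag."""
    digest = hashlib.sha256(f"{secret_key}:{version}".encode("utf-8")).digest()
    rng = random.Random(int.from_bytes(digest, "big"))  # seed the PRNG from the hash
    v = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def identify_version(weights, secret_key, versions):
    """Score every known release and return the best-matching one plus all scores."""
    scores = {v: cosine(weights, carrier_for(secret_key, v, len(weights))) for v in versions}
    return max(scores, key=scores.get), scores

# Usage: a toy model whose 64-dimensional weights lean towards the v2 carrier.
versions = ["v1-2020-01", "v2-2020-02", "v3-2020-03"]
key = "hypothetical-secret"
rng = random.Random(7)
weights = [rng.gauss(0.0, 1.0) + 8.0 * c for c in carrier_for(key, "v2-2020-02", 64)]
best, scores = identify_version(weights, key, versions)
print(best, scores)
```

Deriving carriers from a hash means the data set owner only has to keep the secret key, not a table of vectors: any release's carrier can be regenerated on demand.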

“Another example is around marking a benchmark's test set and being able to detect, thanks to this technique, whether a model learned on test data and is therefore not scientifically rigorous,” a Facebook spokesperson told The Register.
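For the test-set scenario, a suspect model may only be reachable as a black box. A simple check, assuming you can obtain the model's per-image loss, is to compare each marked test image against its unmarked original: the intuition is that if the model learned from the marked copies, it should tend to fit them slightly better. The one-sided sign test below is a generic statistical tool swapped in for illustration, not the test from Facebook's paper, and the loss values are made up.

```python
import math

def sign_test_p_value(losses_marked, losses_clean):
    """One-sided sign test: is the loss on marked images lower than on their originals?

    Ties are discarded. Under the null hypothesis (the model never saw the marks),
    each remaining pair is equally likely to go either way.
    """
    pairs = [(m, c) for m, c in zip(losses_marked, losses_clean) if m != c]
    n = len(pairs)
    wins = sum(m < c for m, c in pairs)
    # P(X >= wins) for X ~ Binomial(n, 1/2)
    return sum(math.comb(n, k) for k in range(wins, n + 1)) / 2 ** n

# Usage: 20 hypothetical test images, each marked copy scored lower than its original.
marked_losses = [0.30 - 0.01 * i for i in range(20)]
clean_losses = [0.35 - 0.01 * i for i in range(20)]
print(sign_test_p_value(marked_losses, clean_losses))  # 1/2**20, about 9.5e-07
```

A sign test only looks at which way each pair goes, not by how much, so it makes no assumptions about how the losses are distributed; the price is that it needs a fair number of images to reach a convincing p-value.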

Facebook said it may share the code for its radioactive data technique in the future: a decision has not been made. It is not being used in production at this time, we're told. In the meantime, a paper describing the method has been released on arXiv if you can get your head around the mathematics involved.

Of course, totally apropos of nothing, Clearview is in hot water at the moment for scraping public photos from web giants for its facial recognition system. Sounds like Silicon Valley doesn't like having its pictures lifted for other people's models. ®