Amazon, Apple, Google, IBM, Microsoft speech-to-text AI systems can't understand black people as well as whites

Lack of varied training data to blame, say researchers

Speech-recognition software developed by top tech firms struggles to understand black people compared to white people, according to research published this week.

The study, led by academics at Stanford University and Georgetown University in America, probed five “state-of-the-art” cloud-hosted automated speech recognition (ASR) systems from Amazon, Apple, Google, IBM, and Microsoft, which transcribe speech to text.

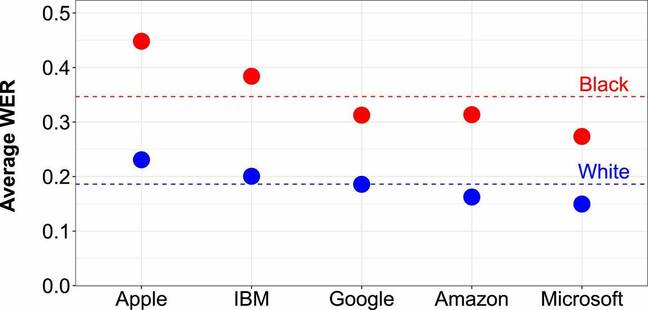

“We found that all five ASR systems exhibited substantial racial disparities, with an average word error rate (WER) of 0.35 for black speakers compared with 0.19 for white speakers,” the paper stated.

That means for every one hundred words blabbered, the machine-learning models failed to understand 35 words for black speakers compared to 19 words for white speakers, on average.
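For the curious, here is a minimal sketch of how a word error rate is typically calculated, assuming the standard definition: the number of word substitutions, deletions, and insertions needed to turn the machine's transcript into the human reference, divided by the number of words in the reference. The function name and the sample sentences are made up for illustration, and the researchers' own text normalization is not reproduced here.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the reference length.

    Assumes the conventional WER definition; whitespace tokenization
    is a simplification chosen for this sketch.
    """
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen
    # so far and the first j words of the hypothesis.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]  # deleting all i reference words so far
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1       # reference word missing from transcript
            insertion = curr[j - 1] + 1  # extra word in transcript
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)


# Hypothetical example: one wrong word and one dropped word out of six.
print(word_error_rate("the cat sat on the mat", "the cat sit on mat"))
```

Because insertions count as errors too, a WER is not strictly "words misheard out of 100" and can in principle exceed 1.0, though the averages quoted above sit well below that.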

Apple was the worst: researchers reported a WER of 0.45 for black speakers compared to 0.23 for white speakers. Microsoft, however, was the best with WERs of 0.27 and 0.15 for black and white speakers, respectively.

The word error rate (WER) for ASR systems developed by Apple, Amazon, Google, IBM, and Microsoft. The red dashed line is the average WER for black speakers and the blue dashed line is the average WER for white speakers. Image credit: Koenecke et al.

“We use the [speech-to-text] APIs provided by each of the service providers which are paid services generally for commercial use,” Allison Koenecke, first author of the paper and a PhD student at Stanford University’s Institute for Computational and Mathematical Engineering, told The Register.

“We do not use the firms' consumer-facing voice assistants because they do not provide a straightforward way to obtain batch transcriptions of about 40 hours of audio files, and could require playing audio out loud – adding an additional source of error – as opposed to directly obtaining audio from a .WAV file.”

Speech-to-text systems are broken into two parts: a language model trained on text and an acoustic model trained on sounds. Black speakers are more likely to use African American Vernacular English (AAVE), a style of English that has its own grammatical rules and vocabulary. The researchers believe the acoustic models in the ASRs from all five companies were simply not trained on enough audio data from AAVE speakers to recognize what they were saying.

When the researchers fed all five ASR models audio snippets of black and white people uttering identical sentences, the services still performed worse for black speakers. Here, the average WER was 0.13 for black speakers compared to 0.07 for white speakers. Thus, the gap in WER between black and white speakers isn’t necessarily due to, say, slang words in AAVE: the acoustic detection in the models is fundamentally not up to scratch.
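As a back-of-the-envelope check on the figures quoted in this article, the short sketch below compares the absolute gap and the ratio of the average error rates for the two tests. The calculation and the names `average_wer` and `disparity` are illustrative only, not taken from the paper.

```python
# Average word error rates as quoted in this article.
average_wer = {
    "all speech": {"black": 0.35, "white": 0.19},
    "identical sentences": {"black": 0.13, "white": 0.07},
}


def disparity(black: float, white: float) -> tuple[float, float]:
    """Return the absolute gap and the ratio between two error rates."""
    return black - white, black / white


for label, wer in average_wer.items():
    gap, ratio = disparity(wer["black"], wer["white"])
    print(f"{label}: gap {gap:.2f}, ratio {ratio:.2f}x")
# all speech: gap 0.16, ratio 1.84x
# identical sentences: gap 0.06, ratio 1.86x
```

On these rounded averages, the absolute gap shrinks when everyone reads the same words, but the error rate for black speakers stays at a little under twice that for white speakers, which fits the researchers' view that the acoustic side is the weak spot.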

In other words, the models aren't fully trained on a range of accents and ways people speak, which lets down black people. They otherwise work great for white people, or people who sound like them.

“These results suggest that racial disparities in ASR performance are related to differences in pronunciation and prosody – including rhythm, pitch, syllable accenting, vowel duration, and lenition – between white and black speakers,” as the researchers put it.

Two datasets were used to test the ASR systems: Corpus of Regional African American Language (CORAAL) and Voices of California (VOC). Some 115 volunteers – 42 white people and 73 black people – from five different US cities were interviewed to collectively generate 19.8 hours of audio for both datasets.

CORAAL contains black speakers who use AAVE to various degrees and who hail from Princeville, North Carolina, a community known for its historic African American population, as well as Rochester, New York, and Washington DC.

VOC is made up of white speakers from Sacramento and Humboldt County, California. Although the researchers are confident that ASRs exhibit biases, they hope to test their conclusions by calculating WERs for white and black speakers from the same city.

“Our findings highlight the need for the speech recognition community—including makers of speech recognition systems, academic speech recognition researchers, and government sponsors of speech research—to invest resources into ensuring that systems are broadly inclusive,” they concluded.

“Such an effort, we believe, should entail not only better collection of data on AAVE speech but also better collection of data on other nonstandard varieties of English, whose speakers may similarly be burdened by poor ASR performance—including those with regional and nonnative-English accents.”

The team also called for developers in industry and researchers in academia to work together to test widely adopted ASR models regularly to assess progress over time. ®