You're V1 for me, says Arm: Chip biz's 'highest-performance core' takes aim at supercomputers, AI, anything relying on vector math

Plus the N2, its first Armv9 blueprint

Arm today publicly added two more CPU cores to its Neoverse family of data-center and server-grade processors: the V1 aimed at demanding workloads and vector math, and the N2 for lighter, scale-out systems.

Here's a summary of the features of these cores, which are available for licensing and placing in a suitable system-on-chip. You should catch the in-depth analysis and commentary on the V1 and N2 on our sister site The Next Platform this week.

The V1

This 64-bit CPU core, dubbed Zeus, is said to have 50 per cent more single-threaded performance than the Neoverse N1 that emerged in 2019; that's when comparing the V1 and N1 on the same process node and at the same clock frequency. Arm reckons the V1 is its highest-performance CPU core available.

We're told the V1 is suitable for 7nm to 5nm processors in systems used for machine-learning training, supercomputers, cloud applications where single-threaded performance matters, and similar heavyweight tasks. For example, SiPearl's TSMC-made 6nm Rhea chip, due to power Europe's exascale supercomputer, will sport 72 V1 cores and HBM2E and DDR5 memory interfaces, and is due to arrive in 2022.

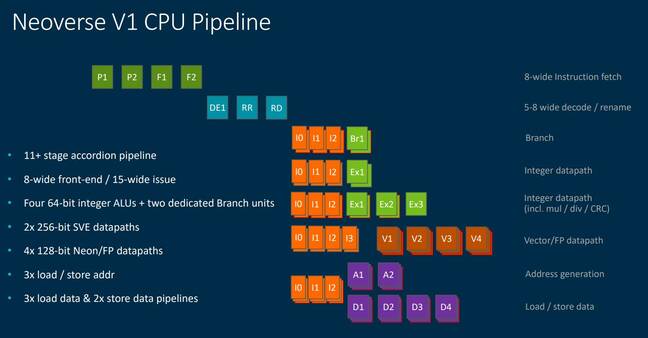

Under the hood, the V1 has an eight-wide fetch unit, a five to eight-wide decode and rename stage, and a 15-wide instruction issue stage feeding into two 256-bit SVE units, four NEON engines, and a collection of the usual blocks: ALUs, load and store units, and branch units. According to Arm, compared to the N1, the V1 has been optimized to handle software with large instruction footprints, and has improved branch prediction with faster run-ahead instruction cache prefetching and a larger branch target buffer, among other updates. Ultimately, the core should mispredict less, and its front-end should stall less, too.

The core also has a macro-operation cache that can be thought of as a level-zero decoded instruction cache able to dispatch eight instructions per cycle, with additional options to fuse instructions. The out-of-order execution re-order buffer size has been doubled since the N1, and superscalar integer execution bandwidth has increased. This all means the V1 can keep itself primed with code to run, increasing integer processing performance for one thing.

Similarly, the V1's back-end has gained more bandwidth, can handle twice as many outstanding external memory transactions as the N1, and has a larger MMU.

SVE is Arm's Scalable Vector Extension. It basically allows a single instruction to operate on many elements of an array of data at once, which is useful for things like artificial intelligence and supercomputer applications. The V1 supports SVE as well as bfloat16; the core is said to be Arm's first own implementation of SVE.

The nice thing about SVE is that code compatible with the extension should run on any SVE-capable processor regardless of the maximum supported vector length available. The software only needs to be built once, rather than targeted at specific SVE implementations and their supported vector lengths.
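To make the build-once idea concrete, here's a minimal Python sketch that simulates a vector-length-agnostic loop. It is not SVE code: the function names `whilelt` and `vla_add` are ours, chosen for illustration, with `whilelt` loosely modeling the SVE predicate instruction of the same name. The point is that the loop never hard-codes a vector width, so the same routine gives the same answer whether the simulated hardware has 128-bit or 2,048-bit vectors.

```python
def whilelt(start, n, lanes):
    """Build a predicate: lane i is active while start + i < n."""
    return [start + i < n for i in range(lanes)]


def vla_add(a, b, vector_bits):
    """Add two equal-length lists of 32-bit values, one simulated vector at a time.

    vector_bits stands in for the hardware vector length, which SVE allows
    to be any multiple of 128 bits from 128 up to 2048.
    """
    lanes = vector_bits // 32          # number of 32-bit lanes per vector
    n = len(a)
    out = [0] * n
    i = 0
    while i < n:
        pred = whilelt(i, n, lanes)    # masks off lanes past the end of the data
        for lane, active in enumerate(pred):
            if active:
                out[i + lane] = a[i + lane] + b[i + lane]
        i += lanes                     # step by however wide the hardware is
    return out


a = list(range(10))
b = [10 * x for x in a]

# Same code, different simulated vector lengths, identical results.
results = {bits: vla_add(a, b, bits) for bits in (128, 256, 512, 2048)}
assert all(r == results[128] for r in results.values())
```

The predicate takes care of the ragged tail of the array, which is why no separate scalar clean-up loop is needed when the data length isn't a multiple of the vector width.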

The V1 is also designed to be arranged in a mesh on a processor die, in which accelerators and interfaces to RAM and PCIe peripherals can also be placed, connecting CPU cores and their caches to these interfaces. Specifically, the V1 and N2 can use Arm's CMN-700 interconnect mesh, which supports up to 256 cores per die and 512 per system, up to 512MB system cache per die, room for up to 40 memory device ports – think DRAM and HBM interfaces – per die, and up to 32 CCIX ports per die. The mesh can also cater for multi-die chips, in which some dies have heterogeneous compute cores, some have a mix of compute and custom acceleration cores, and some are IO hub dies.

The V1's also gained power management features to keep chips within their operators' desired power envelopes by throttling features as necessary.

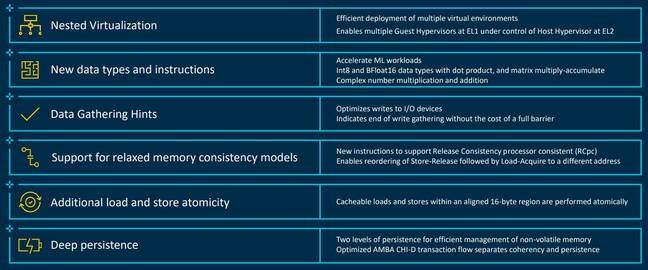

Going beyond the N1, the V1 implements Armv8.3, which includes things like pointer authentication checks, nested virtualization, and complex numbers; Armv8.4, which brings in SVE among other features; Armv8.5, which offers support for persistent memory and speculation barriers to thwart Spectre-style data-theft attacks; and Armv8.6, which brings bfloat16 and other bits and bytes.
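For those unfamiliar with bfloat16, it is a 16-bit floating-point format that keeps the sign bit and eight exponent bits of a 32-bit float and just seven mantissa bits, so it is effectively the top half of a float32. The Python sketch below shows that relationship. The helper names are ours, and it converts by plain truncation for simplicity; real hardware typically rounds instead, so treat this as an illustration of the bit layout rather than of how the V1 does the conversion.

```python
import struct


def float32_to_bfloat16_bits(x):
    """Return the 16-bit bfloat16 pattern for x, by truncating a float32."""
    bits32 = struct.unpack("<I", struct.pack("<f", x))[0]
    return bits32 >> 16               # keep sign, 8 exponent bits, 7 mantissa bits


def bfloat16_bits_to_float(b):
    """Expand a 16-bit bfloat16 pattern back to a Python float."""
    return struct.unpack("<f", struct.pack("<I", (b & 0xFFFF) << 16))[0]


one = float32_to_bfloat16_bits(1.0)
pi = float32_to_bfloat16_bits(3.141592653589793)

print(hex(one), bfloat16_bits_to_float(one))   # 0x3f80 1.0
print(hex(pi), bfloat16_bits_to_float(pi))     # 0x4049 3.140625
```

Same range as a 32-bit float, far less precision: that trade-off tends to suit machine-learning math, and it halves the memory traffic.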

The V1 also sports something called Memory Partitioning and Monitoring, aka MPAM, which provides controls over cache and RAM usage in shared systems: each virtual machine can be assigned a partition, which defines the amount of cache it can occupy and the amount of memory bandwidth available to it. For example, low-priority applications can be limited in terms of the cache and RAM bandwidth available to them, and priority software can get a minimum RAM bandwidth allocation.
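As a rough mental model of that kind of policy, and emphatically not the MPAM programming interface, here's a toy Python sketch. Everything in it is hypothetical: the `Partition` fields, the `grant_bandwidth` function, and the proportional sharing rule are assumptions made for illustration. Each partition gets a guaranteed minimum and a hard ceiling on memory bandwidth, expressed as fractions of the total, and any contention is settled by scaling back whatever was requested above the guaranteed minimums.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Partition:
    name: str
    max_cache_share: float  # ceiling on fraction of shared cache (not enforced here)
    min_bw_share: float     # guaranteed fraction of memory bandwidth
    max_bw_share: float     # ceiling on fraction of memory bandwidth


def grant_bandwidth(partitions, demand):
    """Split bandwidth among partitions; assumes the minimums sum to at most 1.0.

    demand maps partition name to the fraction of total bandwidth requested.
    """
    guaranteed = {p.name: min(demand[p.name], p.min_bw_share) for p in partitions}
    extra = {p.name: min(demand[p.name], p.max_bw_share) - guaranteed[p.name]
             for p in partitions}
    spare = 1.0 - sum(guaranteed.values())
    total_extra = sum(extra.values())
    # If requests above the minimums exceed what is left, scale them down evenly.
    scale = 1.0 if total_extra <= spare else spare / total_extra
    return {name: guaranteed[name] + extra[name] * scale for name in guaranteed}


partitions = [
    Partition("priority", max_cache_share=0.75, min_bw_share=0.5, max_bw_share=1.0),
    Partition("batch", max_cache_share=0.25, min_bw_share=0.0, max_bw_share=0.3),
]
grants = grant_bandwidth(partitions, {"priority": 0.9, "batch": 0.8})

assert grants["priority"] >= 0.5   # the guaranteed minimum is honored
assert grants["batch"] <= 0.3      # the low-priority ceiling is honored
```

With both partitions asking for more than their fair share, the priority partition still clears its 50 per cent floor and the batch partition stays under its 30 per cent cap.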

One thing that caught our eye in Arm's materials on the V1 – see above – is some additional instructions for relaxed memory consistency models. You can get more info from Arm on the V1 right here. Bear in mind that things like clock speeds and cache sizes are configurable by core licensees.

The N2

This is also a followup to Arm's N1, as you might expect. Like the V1, it can use the aforementioned mesh, and is a 64-bit CPU core aimed at the cloud, network edge, and scale-out systems. The N2 is said to have an extra 40 per cent of performance over the N1 in terms of instructions processed per clock cycle due to microarchitecture improvements. The N2 is also Arm's first Armv9 processor core, and as such supports SVE2; indeed, it is said to be the first core to implement SVE2.

According to Arm, the N2 is very similar to the V1 in terms of its branch prediction, data prefetching, and other front-end components. It also has a macro-op cache, and extra power management controls.

You can catch more info about the N2 from Arm over here. ®