GitHub Copilot is AI pair programming where you, the human, still have to do most of the work

Maybe call it backseat programming for now?

GitHub on Tuesday unveiled a code-completion tool called Copilot that shows promise, though it still has some way to go to meet its AI pair-programming goal.

If you're wondering how well it performs, GitHub admitted the following in an FAQ about the service, which is available as a limited "technical preview":

> The code it suggests may not always work, or even make sense. While we are working hard to make GitHub Copilot better, code suggested by GitHub Copilot should be carefully tested, reviewed, and vetted, like any other code. As the developer, you are always in charge.

And:

> GitHub Copilot doesn’t actually test the code it suggests, so the code may not even compile or run ... GitHub Copilot may suggest old or deprecated uses of libraries and languages. You can use the code anywhere, but you do so at your own risk.

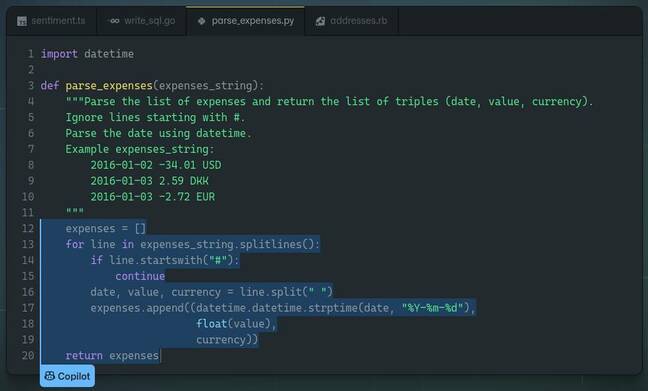

Developer Nick Shearer spotted that one of the examples of Copilot's output on its own homepage, the auto-completion of an algorithm that processes expenses, uses a floating-point variable for a monetary value. Generally speaking, floating-point variables are ill-suited to currency, unless you want values like $9.99000001 going through your system.
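To see why, here is a minimal Python sketch (the expense amounts are made up for illustration) that adds ten 10-cent items three ways: as binary floats, as `decimal.Decimal` values, and as integer cents. Only the float version fails to land exactly on one dollar.

```python
from decimal import Decimal

# Ten hypothetical expense items of 10 cents each, stored three different ways.
expenses_float = [0.10] * 10                # binary floating point
expenses_decimal = [Decimal("0.10")] * 10   # exact decimal arithmetic
expenses_cents = [10] * 10                  # whole cents as integers

float_total = sum(expenses_float)
decimal_total = sum(expenses_decimal, Decimal("0"))
cents_total = sum(expenses_cents)

print(float_total)          # 0.9999999999999999
print(float_total == 1.0)   # False
print(decimal_total)        # 1.00
print(cents_total)          # 100
```

Storing integer cents or using a decimal type are the usual fixes; which one fits depends on the language and on how rounding rules must be applied.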

Copilot may find other ways to ruin your day. We'll let GitHub explain:

> The technical preview includes filters to block offensive words and avoid synthesizing suggestions in sensitive contexts. Due to the pre-release nature of the underlying technology, GitHub Copilot may sometimes produce undesired outputs, including biased, discriminatory, abusive, or offensive outputs.

The most enthusiastic Copilot fan we could find said the tool worked exactly as expected one time in ten. "When it guesses right, it feels like it's reading my mind," they said.

GitHub calls it AI pair programming. But as it stands, Copilot is more like AI armchair programming, or backseat programming, than pair programming. This is not a team of equals. You, the human, are still ultimately and solely responsible, and that makes Copilot more autocomplete than robot programmer.

A spokesperson for GitHub told us the Copilot team benchmarked the tool by having it complete functions in Python. The model generated correct code 43 per cent of the time on the first try, the PR rep said, and 57 per cent of the time when allowed 10 attempts. We're assured it's getting better. The spokesperson added that developers should use Copilot alongside their usual tests and security tools, and apply their best judgment.
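GitHub didn't tell us exactly how it scored those attempts. As a rough illustration, assuming a problem counts as solved if any of its first k generated attempts passes its tests, the two kinds of figure could be computed like this; the `solved_within` helper and the results data are hypothetical and are not GitHub's benchmark.

```python
from typing import List


def solved_within(attempts: List[List[bool]], k: int) -> float:
    """Fraction of problems where at least one of the first k attempts passed."""
    if not attempts:
        return 0.0
    solved = sum(1 for tries in attempts if any(tries[:k]))
    return solved / len(attempts)


# Made-up results: each inner list records pass/fail for attempt 1, 2, 3, ...
results = [
    [True],                  # correct on the first try
    [False, False, True],    # correct on the third try
    [False] * 10,            # never correct in ten tries
    [False, True],           # correct on the second try
]

print(solved_within(results, 1))   # 0.25
print(solved_within(results, 10))  # 0.75
```

Allowing more attempts can only raise the figure, which is why the ten-attempt number is always at least as high as the first-try one.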

Copilot is straightforward to use once you've been accepted into the preview: it's available as an extension for Visual Studio Code or in GitHub Codespaces. Type away and Copilot will suggest the next line of code, or fill in the blanks for a particular function you’re trying to write.

You can also write comments to coax the software into generating blocks of code. A GitHub spokesperson told us Copilot understands both programming languages and human languages, so developers can describe a task in English and the tool will try to produce the corresponding code.
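As an illustration of that comment-first workflow, the snippet below pairs a plain-English comment of the kind a developer might type with the sort of function body a tool like Copilot aims to fill in. It was written by hand for this article and is not real Copilot output; the function name `total_expenses_cents` is hypothetical.

```python
from typing import List

# Hand-written illustration, NOT actual Copilot output.
# The comment below plays the role of the English-language prompt.

# Return the total of a list of expense amounts given in whole cents,
# ignoring any negative (refund) entries.
def total_expenses_cents(amounts: List[int]) -> int:
    return sum(a for a in amounts if a >= 0)


print(total_expenses_cents([1250, 399, -100]))  # 1649
```

Whatever the tool proposes for a prompt like this still needs the same review and tests as any other code.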

At the heart of Copilot is OpenAI Codex, which a GitHub spokesperson described as a machine-learning system created by OpenAI. We were told that an API for using Codex programmatically will be made available this summer, along with more details, and that Codex is significantly more capable than OpenAI's text-generating GPT-3.

Teaching neural networks to write code has been tried again and again: startups such as TabNine and Kite offer products similar to Copilot, as do big tech companies such as Amazon.

GitHub Copilot shouldn’t come as too much of a surprise because the code management platform is owned by Microsoft, which has a close relationship with, and billion-dollar investment in, OpenAI.

Garbage in, garbage out

Copilot was trained on massive amounts of natural-language text as well as public code repositories. GitHub said the user owns the tool's output, much as you own the output a compiler produces from your source code. However, it estimated that 0.1 per cent of suggestions may contain code that appeared in the training set, which you may or may not be allowed to use. Again, you'll have to check Copilot's output thoroughly.
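What that checking looks like is up to you. One crude approach, sketched below under our own assumptions, is to flag any suggestion that shares a long run of consecutive tokens with code you already know about, such as a local copy of an open-source project. The tokenizer, the helper names, and the 20-token default threshold are all hypothetical choices, not anything GitHub has described, and a match says nothing by itself about whether the licence lets you use the code.

```python
import re
from typing import Iterable, List, Set, Tuple


def tokens(code: str) -> List[str]:
    # Crude tokenizer: runs of word characters, or single punctuation marks.
    return re.findall(r"\w+|[^\w\s]", code)


def ngrams(toks: List[str], n: int) -> Set[Tuple[str, ...]]:
    # Every run of n consecutive tokens; empty if there are fewer than n tokens.
    return {tuple(toks[i:i + n]) for i in range(len(toks) - n + 1)}


def shares_long_run(suggestion: str, corpus: Iterable[str], n: int = 20) -> bool:
    """True if the suggestion shares any run of n consecutive tokens with a corpus file."""
    sugg = ngrams(tokens(suggestion), n)
    if not sugg:
        return False
    return any(sugg & ngrams(tokens(doc), n) for doc in corpus)


known = ["def add(a, b):\n    return a + b\n"]
print(shares_long_run("def add(a, b):\n    return a + b", known, n=5))  # True
print(shares_long_run("x = 1", known, n=5))                             # False
```

Because whitespace is ignored, reformatted copies still match; renamed variables would not, which is one reason a check like this is only a first pass.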

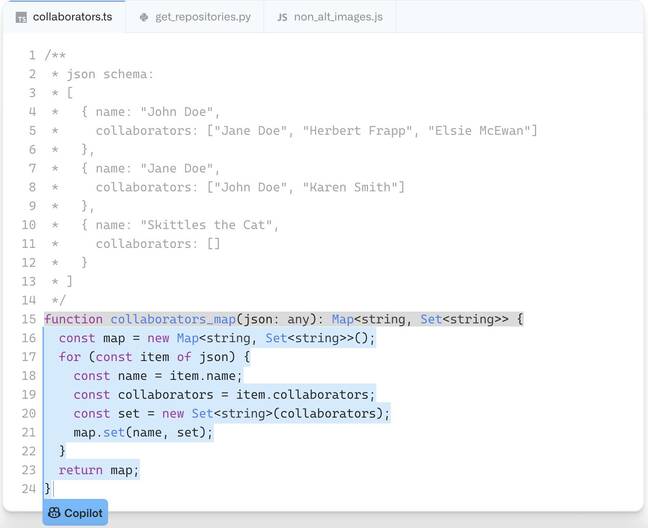

In one example, GitHub Copilot produced multiple lines of TypeScript that parses JSON data into a data structure that maps names to collaborators, all based on a comment containing example input JSON.

The comments are in grey; the code highlighted in blue was generated by GitHub Copilot. Image credit: GitHub
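We can't reproduce the exact code from GitHub's screenshot here, so the following is a hand-written Python analogue of the same task, assuming a hypothetical input shape: a JSON array of objects, each with a `name` and a list of `collaborators`. The `SAMPLE` data and the `collaborators_by_name` function are illustrative only.

```python
import json
from typing import Dict, List

# Hypothetical example input, playing the role of the JSON in the prompt comment.
SAMPLE = """
[
  {"name": "repo-a", "collaborators": ["alice", "bob"]},
  {"name": "repo-b", "collaborators": []}
]
"""


def collaborators_by_name(raw: str) -> Dict[str, List[str]]:
    """Parse a JSON array of objects into a dict mapping each name to its collaborators."""
    records = json.loads(raw)
    # A missing "collaborators" key is treated as an empty list.
    return {rec["name"]: list(rec.get("collaborators", [])) for rec in records}


print(collaborators_by_name(SAMPLE))
# {'repo-a': ['alice', 'bob'], 'repo-b': []}
```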

We're told Copilot can handle all sorts of programming languages, though it performs best with Python, JavaScript, TypeScript, Ruby, and Go. It learned to generate code by picking up common patterns in how people use programming languages, just as GPT-3 picked up grammar from the written word. That said, Copilot is not flawless: it's likely to get confused if your codebase runs to thousands of lines of code or more.

We could see it being used to automate easy, repetitive tasks – such as generating boilerplate code – and to suggest library or framework functions you're not familiar with, saving you the trouble of looking through documentation.

The tool tells GitHub, via telemetry, whenever its suggestions are accepted or rejected; this information will be used to improve the software, we're told. It's even claimed that Copilot can adapt to the changes you make to your source and learn to match your coding style.

"We use telemetry data, including information about which suggestions users accept or reject, to improve the model," the Microsoft-owned biz said. "We do not reference your private code when generating code for other users."

A GitHub spokesperson told us Copilot uses high-performance GPUs for inference. They added that hundreds of engineers, many of them GitHub's own, have already been using GitHub Copilot every day; alpha testers were also given a run at it, we note.

You can join the wait list to try out Copilot. It is free for now, though it will be commercialized if it makes it to launch, a spokesperson told us. ®